Key Takeaway

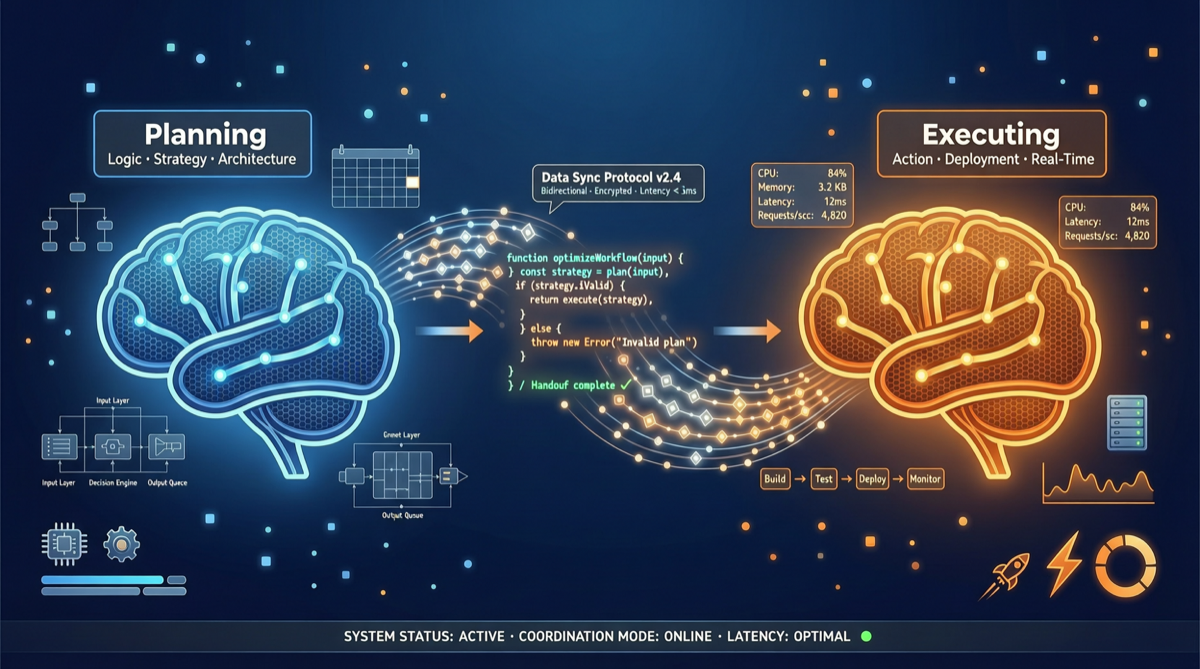

Community testing points to a counterintuitive finding: the best AI coding workflow does not rely on the single strongest model. Instead, two models “adversarially collaborate”: Claude Opus 4.7 handles architecture planning and code review, while GPT-5.5 handles code generation and execution. The reported verdict on coding quality against single-model approaches: “isn’t close, it crushes.”

Why Dual Models Work

The fundamental problem with single-model approaches is “capability coupling” — the same model must understand requirements, plan architecture, write code, and self-review. This leads to:

- Context pollution: Planning and execution mixed together, key decisions drowned in details

- Self-review failure: Models struggle to find their own systematic errors

- Style inconsistency: Optimal prompting strategies for different tasks conflict

The dual-model approach solves these through “role separation”:

| Role | Model | Advantage |
|---|---|---|
| Planner | Claude Opus 4.7 | Deep reasoning, architectural thinking, safety review |
| Executor | GPT-5.5 | Code generation speed, API proficiency, Terminal-Bench performance |
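One way to picture role separation in code is to give each role its own model label, system prompt, and message history, so that planning context never mixes with execution details. The sketch below is illustrative only: the `Role` class, the `fresh_context` helper, and the reviewer prompt are hypothetical names invented for this example, and the model strings simply copy the table's labels rather than verified API identifiers.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Role:
    """One responsibility in the workflow (hypothetical helper type)."""
    name: str
    model: str          # label from the table; NOT a verified API model ID
    system_prompt: str


# The planner and reviewer share a model but keep separate prompts and histories.
PLANNER = Role("planner", "claude-opus-4.7", "You are a senior software architect.")
EXECUTOR = Role("executor", "gpt-5.5", "You are a senior developer.")
# The reviewer system prompt is an assumption; the article gives no system line for it.
REVIEWER = Role("reviewer", "claude-opus-4.7", "You review code against an architecture plan.")


def fresh_context(role: Role, task: str) -> List[Dict[str, str]]:
    """Build a new message list for one role so no other role's history leaks in."""
    return [
        {"role": "system", "content": role.system_prompt},
        {"role": "user", "content": task},
    ]
```

Because every call starts from `fresh_context`, the executor only ever sees the plan it is handed, which is the opposite of the "context pollution" described for single-model setups.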

Workflow Design

Requirements Input

↓

[Opus 4.7] Architecture Planning

├── Module decomposition

├── Interface design

├── Technology selection

└── Risk assessment

↓

[GPT-5.5] Code Execution

├── Generate code per module

├── Write test cases

└── Fix compilation errors

↓

[Opus 4.7] Code Review

├── Architecture consistency check

├── Security vulnerability scan

└── Optimization suggestions

↓

[GPT-5.5] Iterative Fixes

↓

Final Output

Prompt Templates (Simplified Version)

Planner (Opus 4.7):

You are a senior software architect. Based on the following requirements, output:

1. Module decomposition (no more than 5 modules)

2. Interface definition for each module

3. Technology selection recommendations with rationale

4. Potential risk points

Requirements: [user input]

Executor (GPT-5.5):

You are a senior developer. Please implement code strictly according to the following architecture specification:

Architecture Document: [Opus output plan]

Requirements:

- Only generate code for the specified module

- Include complete type definitions

- Write docstrings for every function

Reviewer (Opus 4.7):

Please review whether the following code implementation aligns with the original architecture plan:

1. Any architecture deviations

2. Security concerns

3. Code quality score (1-10)

Architecture Plan: [original plan]

Code Implementation: [GPT output code]

Cost Analysis

| Approach | Estimated Cost per Task (USD) | Quality |
|---|---|---|
| Opus 4.7 only | $0.80 | High |
| GPT-5.5 only | $0.30 | Medium |
| Dual-model workflow | $0.60 | Highest |

The dual-model approach costs more than GPT-5.5 alone but less than Opus 4.7 alone, and it delivers the highest quality. The key is that the planner and reviewer consume far fewer tokens than the executor: Opus’s output is a structured planning document, not full code.
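The per-task figures above are estimates, and the article does not give the token counts or prices behind them. As a rough sketch of how such an estimate could be assembled, the code below sums the cost of each call from its token counts and per-million-token prices. The `Call` class and `task_cost` function are hypothetical, and every number in the example is a placeholder chosen only to exercise the function, not a real price or measurement.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Call:
    """Token usage and pricing for one model call (hypothetical helper type)."""
    input_tokens: int
    output_tokens: int
    input_price: float   # USD per million input tokens
    output_price: float  # USD per million output tokens

    def cost(self) -> float:
        """Return the USD cost of this single call."""
        total = (self.input_tokens * self.input_price
                 + self.output_tokens * self.output_price)
        return total / 1_000_000


def task_cost(calls: Iterable[Call]) -> float:
    """Sum the cost of every call made while completing one task."""
    return sum(call.cost() for call in calls)


# Placeholder workflow: plan, generate, review. All values are illustrative only.
example_calls = [
    Call(input_tokens=2_000, output_tokens=1_500, input_price=10.0, output_price=40.0),  # planner
    Call(input_tokens=3_000, output_tokens=8_000, input_price=2.0, output_price=8.0),    # executor
    Call(input_tokens=10_000, output_tokens=800, input_price=10.0, output_price=40.0),   # reviewer
]
print(f"estimated task cost: ${task_cost(example_calls):.4f}")
```

One thing the sketch makes visible: the reviewer has to read the generated code as input, so its input tokens are not negligible even when its output is short. Plugging in measured token counts and current prices is the only way to check an estimate like the $0.60 in the table.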

Comparison with Existing Approaches

| Approach | Advantage | Disadvantage |
|---|---|---|
| Single model (Opus/GPT) | Simple, low cost | Quality ceiling is low |
| Multi-model parallel routing | Automatically selects best model | Still single-round calls |
| Dual-model adversarial collaboration | Highest quality | Requires orchestration infrastructure |
| Agent Harness (jcode etc.) | High automation level | Complex configuration |

When to Use Dual-Model Workflows

Recommended:

- Complex project architecture design

- Production code requiring high reliability

- Security-sensitive modules (authentication, payments, etc.)

- Code review and refactoring

Not Recommended:

- Simple script writing

- Prototype development (speed priority)

- Extremely budget-constrained scenarios

Automation Path

Manual orchestration of dual-model workflows is feasible but cumbersome. Automation directions include:

- jcode / Agent Harness: Existing projects that support multi-model orchestration and can be set up through configuration

- n8n Workflows: Connect Claude and OpenAI APIs through MCP, building automated pipelines

- Custom Scripts: Chain calls to the two APIs with a Python script; this is the lowest-cost option
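For the custom-script route, a minimal sketch of the plan, execute, review, and fix loop might look like the following. It is written under several assumptions: each model is passed in as a plain `prompt -> text` callable (so any SDK can be wrapped behind it), the prompts are shortened paraphrases of the templates in this article, and the `SCORE: n` line, `FIX_PROMPT`, `min_score`, and `max_rounds` are conventions invented here to make the reviewer's 1-10 score machine-readable. None of these names come from an existing tool.

```python
import re
from typing import Callable, Optional, Tuple

LLM = Callable[[str], str]  # prompt in, text out; wrap whichever SDK you use

PLAN_PROMPT = (
    "You are a senior software architect. Based on the following requirements, "
    "output module decomposition (no more than 5 modules), interface definitions, "
    "technology selection with rationale, and potential risk points.\n"
    "Requirements: {requirements}"
)
EXEC_PROMPT = (
    "You are a senior developer. Implement code strictly according to this "
    "architecture specification, with complete type definitions and docstrings.\n"
    "Architecture Document: {plan}"
)
REVIEW_PROMPT = (
    "Review whether the code aligns with the architecture plan. List architecture "
    "deviations and security concerns, then end with a line 'SCORE: n' (1-10).\n"
    "Architecture Plan: {plan}\nCode Implementation: {code}"
)
# Hypothetical fix prompt; the article does not give a template for this step.
FIX_PROMPT = (
    "Revise the code to address the review while following the plan.\n"
    "Architecture Plan: {plan}\nCode: {code}\nReview: {review}"
)


def parse_score(review: str) -> Optional[int]:
    """Extract n from a 'SCORE: n' line, or None if the reviewer omitted it."""
    match = re.search(r"^SCORE:\s*(\d{1,2})\s*$", review, re.MULTILINE)
    return int(match.group(1)) if match else None


def run_pipeline(
    requirements: str,
    planner: LLM,
    executor: LLM,
    reviewer: LLM,
    min_score: int = 8,
    max_rounds: int = 3,
) -> Tuple[str, str, str]:
    """Plan once, generate code, then alternate review and fix until accepted."""
    plan = planner(PLAN_PROMPT.format(requirements=requirements))
    code = executor(EXEC_PROMPT.format(plan=plan))
    review = ""
    for round_no in range(max_rounds):
        review = reviewer(REVIEW_PROMPT.format(plan=plan, code=code))
        score = parse_score(review)
        accepted = score is not None and score >= min_score
        # Stop on acceptance, or on the last round so the returned review
        # always describes the returned code.
        if accepted or round_no == max_rounds - 1:
            break
        code = executor(FIX_PROMPT.format(plan=plan, code=code, review=review))
    return plan, code, review
```

In practice `planner` and `reviewer` would each wrap a call to the Claude API and `executor` a call to the OpenAI API. Keeping them as injected callables also means the loop can be exercised with stub functions before any paid API call is made.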

Industry Signal

The popularity of this workflow reflects a larger trend: the 2026 AI coding competition has shifted from “which model is strongest” to “how to orchestrate multiple models.”

As community voices have stated: “Model Quality is becoming a commodity talking point. The real moat lies in agentic workflows, trust and evaluation around tool use, and the speed at which you can swap models.”

Dual-model adversarial programming is an early example of this trend: rather than pursuing a single perfect model, it maximizes the value of existing models through system design.