Key Takeaway

Meta released Llama 4 Scout in late April — a 17B active / 109B total parameter MoE (Mixture of Experts) model with 16 expert routes.

- 10M token context window: Process a 300-page document without chunking

- $0.08/M token input price: Use OpenAI-compatible API via aggregators

- Open weights: The last open Meta model tier before Muse Spark goes closed-source

Architecture

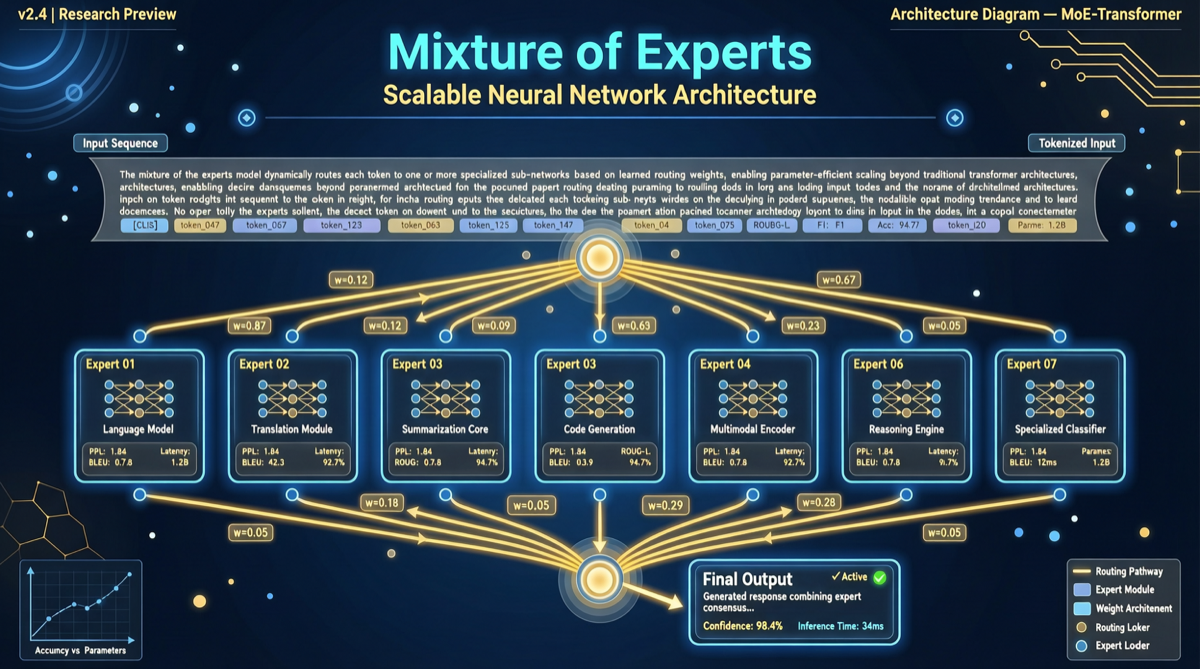

MoE Design

| Parameter | Value | Meaning |

|---|---|---|

| Total params | 109B | Total knowledge capacity |

| Active params | 17B | Actually used per inference |

| Experts | 16 | Routable sub-networks |

The MoE advantage: 109B parameters of knowledge at the inference cost of a 17B model.

Price Comparison

| Model | Input Price ($/M tokens) | Context | Open |

|---|---|---|---|

| Llama 4 Scout | $0.08 | 10M | Yes |

| GPT-5.5 | $2.50 | 1M | No |

| Claude Opus 4.7 | $15.00 | 200K | No |

| DeepSeek-V4-Flash | $0.14 | 1M | Yes |

Best Use Cases

- Long document QA — legal, financial, medical documents without chunking

- Codebase understanding — feed entire codebase at once

- Batch document processing — summaries, classification, extraction

- Cost-sensitive inference — high volume, acceptable absolute accuracy

Summary

Llama 4 Scout isn’t the strongest model, but it’s the most practical — solving a real pain point (document chunking) at extremely low cost. With Meta’s open-source strategy potentially tightening, now is the time to use it.

Meta’s open-source strategy is shifting from “open everything” to “tiered openness.” Llama 4 Scout may be the last train.