The Event

NVIDIA officially released the next-generation open omni-model Nemotron 3 Nano Omni on April 29. The model emphasizes efficiency and precision, with deep optimization for FP8 inference on Hopper and Blackwell architectures, while remaining compatible with consumer-grade GPUs like the RTX 5090 and the Jetson Thor robotics platform.

More importantly, NVIDIA reports up to a 9x efficiency improvement in Agent scenarios, a sign that the focus of large model competition is shifting from “capability ceiling” to “application efficiency.”

Why It Matters

A Paradigm Shift in Competition

Over the past year, large model competition was essentially a race for the capability ceiling: whose benchmark scores were higher, whose context window was longer, whose code generation was stronger.

Entering 2026, the competitive logic has fundamentally changed. The new winning criterion is who can complete real tasks at the lowest cost, with the highest efficiency and the fewest resources.

The release of Nemotron 3 Nano Omni is a landmark in this shift. NVIDIA is no longer pursuing model scale alone; it is focusing on output per unit of compute.

Revolutionary Hardware Compatibility

Nemotron 3 Nano Omni’s hardware compatibility strategy spans three deployment tiers:

- Consumer-grade GPUs (RTX 5090): Individual developers and small teams can run high-quality omni-models without purchasing enterprise-grade GPUs

- Jetson Thor robotics platform: Bridges the complete pipeline from cloud inference to edge deployment, paving the way for AI robotics and IoT scenarios

- Deep optimization for Hopper/Blackwell architectures: Fully leverages NVIDIA hardware compute power in enterprise scenarios

This “full-stack coverage” strategy means there is a suitable deployment option whether the workload is an individual developer’s local Agent, a quality inspection system on a factory floor, or multi-Agent orchestration in a data center.

Technical Highlights

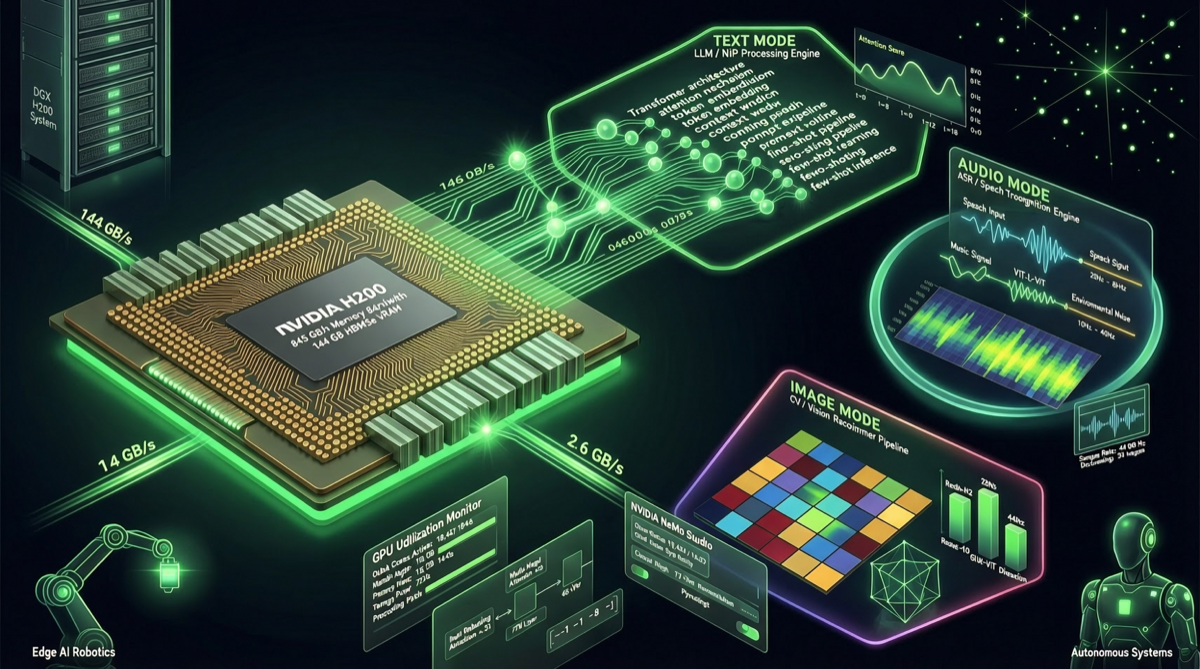

Omni-Modal Capability

The core breakthrough of Nano Omni lies in its omni-modal nature: a single model can handle text, image, audio, and other input types. This addresses a pain point in Agent development, where multi-modal tasks typically require chaining multiple specialized models, leading to high latency, high costs, and complex debugging.

With Nano Omni, an Agent can complete the following with a single model:

- User input analysis (text/voice/image)

- Multi-modal understanding and reasoning

- Multi-modal output generation
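
To make the chaining cost concrete, here is a minimal sketch of how end-to-end latency adds up when stages run one after another. The stage names, the latency values, and the handoff cost are all hypothetical placeholders chosen for illustration; they are not measurements of Nano Omni or of any other model.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    latency_ms: float  # hypothetical per-call latency, not a measurement


def pipeline_latency_ms(stages, handoff_ms=0.0):
    """Latency of stages run sequentially, plus a fixed handoff cost
    between each pair of adjacent stages."""
    if not stages:
        return 0.0
    return sum(s.latency_ms for s in stages) + handoff_ms * (len(stages) - 1)


# Hypothetical chained setup: one specialized model per modality.
chained = [
    Stage("speech-to-text", 120.0),
    Stage("vision encoder", 90.0),
    Stage("text LLM", 300.0),
]

# Hypothetical single omni-modal model handling the same request.
single = [Stage("omni model", 350.0)]

chained_ms = pipeline_latency_ms(chained, handoff_ms=10.0)
single_ms = pipeline_latency_ms(single, handoff_ms=10.0)

print(f"chained pipeline: {chained_ms:.0f} ms")
print(f"single model:     {single_ms:.0f} ms")
```

Whether a single model actually wins depends on real per-stage numbers, so replace the placeholders with latencies measured on your own workload.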

FP8 Inference Optimization

Deeply optimized FP8 inference is the core technical enabler behind the 9x efficiency improvement. Compared to traditional FP16 inference:

- VRAM usage reduced by approximately 50%: FP8 stores each weight in 1 byte instead of 2, so the same GPU can run larger models or handle longer contexts

- Inference speed improved 2-3x: FP8 compute throughput is significantly higher than FP16

- Controlled precision loss: NVIDIA’s specific quantization strategy keeps precision loss within acceptable bounds
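
The roughly 50% VRAM figure follows from storage width alone: FP16 uses 2 bytes per parameter and FP8 uses 1. The sketch below works through that arithmetic for model weights only. The parameter count is a hypothetical value picked for illustration (the article does not state the model’s size), and the KV cache and activations are deliberately left out.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    return num_params * bytes_per_param / (1024 ** 3)


# Hypothetical parameter count, chosen only for illustration.
NUM_PARAMS = 30e9

fp16_gib = weight_memory_gib(NUM_PARAMS, 2)  # FP16: 2 bytes per parameter
fp8_gib = weight_memory_gib(NUM_PARAMS, 1)   # FP8: 1 byte per parameter
saving = 1 - fp8_gib / fp16_gib

print(f"FP16 weights: {fp16_gib:.1f} GiB")
print(f"FP8 weights:  {fp8_gib:.1f} GiB")
print(f"Reduction:    {saving:.0%}")
```

Total VRAM savings in practice can be smaller than this, because the KV cache, activations, and any layers kept in higher precision are not halved automatically.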

Agent-Native Design

Nano Omni’s design goals directly target AI Agent application development. The model features targeted optimizations in:

- Tool-calling capability: Enhanced native support for the Model Context Protocol (MCP) and function calling

- Multi-step reasoning: Optimized Chain-of-Thought reasoning paths, reducing Agent “wrong turns” in complex tasks

- State persistence: Improved context management mechanisms, enabling Agents to better maintain task state across multi-turn interactions
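
As a rough illustration of what function calling involves on the Agent side, the sketch below parses a tool call emitted by a model and dispatches it to a registered Python function. The JSON shape (`name` plus `arguments`), the `get_weather` tool, and the `dispatch_tool_call` helper are assumptions made for this example; they are not Nano Omni’s actual output format or an MCP implementation.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would call a weather service."""
    return f"(stub) weather for {city}"


# Registry mapping tool names the model may emit to local callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(raw: str) -> str:
    """Parse a JSON tool call and run the matching registered tool."""
    call = json.loads(raw)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**args)


# Assumed model output; the real format depends on the model and runtime.
model_output = '{"name": "get_weather", "arguments": {"city": "Berlin"}}'
result = dispatch_tool_call(model_output)
print(result)
```

In a full Agent loop, the tool result would be appended to the conversation and sent back to the model, which is where the state persistence point above comes into play.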

Industry Impact

The “Pickaxe Seller” Strategy for Open Source Models

NVIDIA’s release of the Nemotron 3 series continues its “pickaxe seller” strategic positioning. Whichever model ultimately wins the market, NVIDIA’s bet is that it will run on NVIDIA hardware. By open-sourcing high-performance reference models, NVIDIA is effectively:

- Demonstrating hardware capability ceilings: Showing developers how fast and well models can run on NVIDIA chips

- Setting technical benchmarks: Establishing reference points for efficiency and precision across the industry

- Driving ecosystem prosperity: Open-source models lower the development barrier, attracting more developers into the Agent ecosystem

Accelerating Edge AI

Nano Omni’s support for consumer-grade GPUs and Jetson platforms should significantly accelerate the adoption of Edge AI. Previously, deploying AI Agents typically required cloud GPU servers; now, a workstation equipped with an RTX 5090 or even a Jetson embedded device can handle the job.

This means:

- Privacy-sensitive scenarios (healthcare, finance) can deploy locally without sending data to the cloud

- Offline scenarios (factories, mines, field operations) can run complete AI Agents

- Latency-sensitive scenarios (real-time control, autonomous driving) can achieve millisecond-level response

Signal & Validation

- The release comes directly from NVIDIA, so the announcement itself is highly credible

- The 9x efficiency improvement figure is based on NVIDIA’s own benchmarks and needs independent verification

- The open-source strategy lowers the technical barrier, but FP8 optimization is highly dependent on the NVIDIA hardware ecosystem

- Omni-modal capability needs to be evaluated in actual Agent scenarios

Action Items

- Assess Edge AI needs: If your business has local deployment or low-latency requirements, Nano Omni deserves serious evaluation

- Test FP8 inference: Run benchmarks on your target hardware to verify the efficiency improvement figures

- Follow the open-source community: Because Nano Omni is open source, the community is likely to produce adaptations and best practices quickly

- Plan multi-Agent architecture: Lower inference costs mean you can deploy more specialized Agents rather than relying on a single general-purpose model
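
For the “Test FP8 inference” item, a small timing harness is enough to start. The sketch below measures median latency and throughput for any callable that takes a prompt and returns a sequence of tokens. `fake_generate` is a placeholder standing in for your real inference call; it is not an NVIDIA API, and the harness makes no assumption about which runtime you use.

```python
import statistics
import time


def benchmark(generate, prompt, runs=5, warmup=1):
    """Time repeated calls to generate(prompt), which must return a
    sequence of tokens, and report median latency and throughput."""
    for _ in range(warmup):
        generate(prompt)  # warm-up calls are not timed

    latencies = []
    tokens = 0
    for _ in range(runs):
        start = time.perf_counter()
        out = generate(prompt)
        latencies.append(time.perf_counter() - start)
        tokens += len(out)

    total = sum(latencies)
    return {
        "runs": runs,
        "median_latency_s": statistics.median(latencies),
        "tokens_per_s": tokens / total if total > 0 else float("inf"),
    }


def fake_generate(prompt):
    # Placeholder for a real inference call; returns a list of "tokens".
    return prompt.split()


stats = benchmark(fake_generate, "verify the efficiency claim on your own hardware")
print(stats)
```

Run the same harness once against an FP16 configuration and once against FP8 on identical prompts, then compare the two `tokens_per_s` values to get a speedup figure for your own hardware.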