What Happened

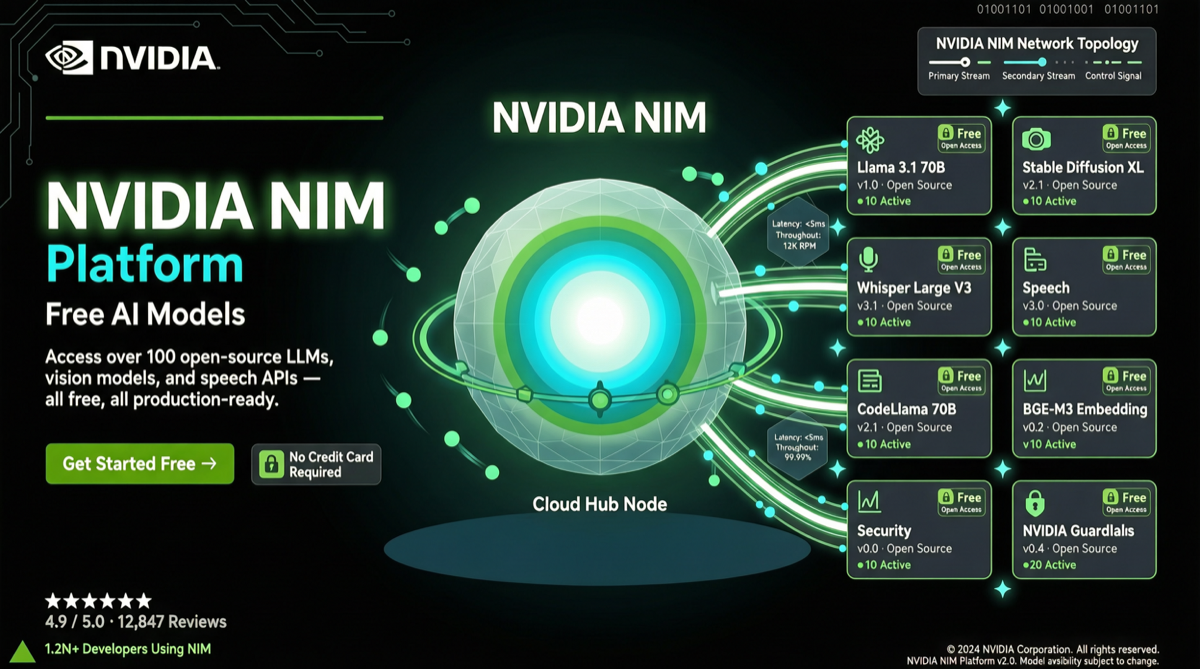

Through its NIM (NVIDIA Inference Microservices) platform, NVIDIA now offers something that puts pressure on other model providers: free API access to 100+ frontier AI models. The offer comes with:

- No credit card required

- No trial period limits

- No expiry date

- Real API key upon registration

- Pick a model, start building immediately
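As a rough illustration of the "pick a model, start building" flow, the sketch below builds and sends a chat request using only the Python standard library. It assumes NIM exposes an OpenAI-compatible chat completions endpoint at the URL shown and accepts the API key as a bearer token. The function names are illustrative, and any model identifier you pass in should be taken from the NIM catalog rather than guessed.

```python
import json
import urllib.request

# Assumption: NIM serves an OpenAI-compatible chat completions endpoint here.
NIM_URL = "https://integrate.api.nvidia.com/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str,
                       max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat completion request."""
    body = {
        "model": model,  # model identifier as listed in the NIM catalog
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        NIM_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(model: str, prompt: str, api_key: str) -> str:
    """Send the request and return the first reply's text.

    Assumes the response follows the OpenAI chat completions shape.
    """
    req = build_chat_request(model, prompt, api_key)
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

If those assumptions hold, calling `ask(model_id, "Say hello in one sentence.", api_key)` with a catalog model identifier and the key issued at registration should return the reply text. Keeping the request builder separate from the network call makes the payload easy to inspect without spending quota.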

Core Free Model Catalog

| Model | Parameters | Context Window (tokens) | Tier | Pricing on Other Platforms |
|---|---|---|---|---|
| MiniMax M2.7 | 230B (MoE) | 204,800 | GPT-4 level | $ per million tokens |
| DeepSeek V3.2 | 671B (MoE) | 128K | GPT-4 level | $ per million tokens |
| Llama 4 Scout | Multi-tier | 10M | Open-source flagship | $ per million tokens |
| Gemma series | Various | Various | Local inference | $ per million tokens |
| 100+ other models | Multi-domain | n/a | n/a | n/a |

These models, billed per token on other platforms, are completely free on NIM.

Why NVIDIA Is Giving This Away for Free

This looks like charity, but there’s clear commercial logic:

1. Chips as Entry Point

NIM is essentially a “front-end experience” for NVIDIA GPUs. The intended funnel runs as follows: developers use models for free on NIM, come to depend on them, eventually need higher throughput or lower latency than the free tier provides, and buy NVIDIA GPUs to self-host. That closes the GPU sales loop.

2. Countering Cloud Vendors’ Model Markets

AWS Bedrock, Google Vertex AI, and Azure AI Studio all compete for share of the “model-as-a-service” market. As a hardware vendor, NVIDIA uses free NIM access to build a direct-to-developer channel that bypasses the cloud vendors’ middle layer.

3. Global Showcase Window for Chinese Models

MiniMax M2.7 and DeepSeek V3.2, as representative Chinese models, gain low-friction exposure to developers worldwide through NIM. This serves NVIDIA’s ecosystem strategy and also gives Chinese models a new channel for going global.

Comparison with Other Free Options

| Dimension | NVIDIA NIM | OpenRouter Free | Groq Free | Official Free Quotas |
|---|---|---|---|---|
| Model count | 100+ | 200+ | Few | Varies by provider |
| Credit card needed | No | No | No | Some require one |
| Quota limits | Generous | Yes | Yes | Yes |
| API quality | Enterprise-grade | Community | Community | Official |
| Chinese model coverage | Yes, multiple | Yes, multiple | No | Varies by provider |

Action Recommendations

What to Do Now

- Register a NIM account: visit the NVIDIA NIM platform and get an API key at no cost

- Compare models: test MiniMax M2.7, DeepSeek V3.2, and Llama 4 Scout on the same platform against your specific tasks

- Prototype: validate product ideas quickly with the free NIM API to reduce upfront investment

- Integrate with agent frameworks: use NIM as the model backend for OpenClaw, Hermes Agent, and similar tools
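The model comparison step above can be sketched as a small harness that sends one prompt to several models and records the reply and wall-clock latency for each. This is a minimal sketch, not a benchmark: `send` is a hypothetical callable you supply (for example, a thin wrapper around your NIM client), and the model names in the usage example are placeholders rather than confirmed catalog identifiers.

```python
import time
from typing import Callable, Dict, List, Optional


def compare_models(models: List[str], prompt: str,
                   send: Callable[[str, str], str]) -> List[Dict[str, object]]:
    """Run the same prompt against each model and collect reply and latency.

    `send(model, prompt)` is supplied by the caller and must return the
    reply text. Failures are recorded instead of aborting the whole run.
    """
    results: List[Dict[str, object]] = []
    for model in models:
        error: Optional[str] = None
        reply = ""
        start = time.perf_counter()
        try:
            reply = send(model, prompt)
        except Exception as exc:  # e.g. quota exhausted, unknown model
            error = str(exc)
        elapsed = time.perf_counter() - start
        results.append(
            {"model": model, "seconds": elapsed, "reply": reply, "error": error}
        )
    return results


# Usage with a stand-in sender; replace `fake_send` with a real API call.
def fake_send(model: str, prompt: str) -> str:
    return f"[{model}] echo: {prompt}"


for row in compare_models(["model-a", "model-b"], "Summarize NIM.", fake_send):
    print(row["model"], round(row["seconds"], 4), row["error"] or row["reply"])
```

Passing the sender in as a function keeps the harness independent of any one provider, so the same loop can compare NIM against another endpoint. A single timed call per model is noisy; repeat the run several times before drawing conclusions about latency.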

What to Watch

- Sustainability of the free tier: there is no expiry date today, but the free offering could change at any time

- Performance ceiling: free-tier throughput and latency may differ from the paid tier

- Data privacy: requests sent to the free API may be used for model improvement, so handle sensitive data with caution

- Long-term architecture: once the product scales, evaluate whether self-hosted inference infrastructure is more cost-effective
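For the long-term architecture question, a back-of-the-envelope break-even calculation can help frame the decision. The sketch below compares a fixed monthly self-hosting cost against per-token pricing on a paid hosted API. Both inputs are hypothetical values you would supply yourself, and the model ignores engineering time, utilization, and hardware depreciation.

```python
def breakeven_tokens_per_month(self_host_monthly_cost: float,
                               api_price_per_million: float) -> float:
    """Monthly token volume above which self-hosting is cheaper.

    self_host_monthly_cost: fixed monthly cost of your own inference
        stack (hardware amortization, power, hosting), in dollars.
    api_price_per_million: hosted API price in dollars per million tokens.
    """
    if api_price_per_million <= 0:
        raise ValueError("API price must be positive; a free tier has no break-even point")
    return self_host_monthly_cost / api_price_per_million * 1_000_000


# Illustrative numbers only: $2,000/month self-hosted vs $2 per million tokens.
tokens = breakeven_tokens_per_month(2000.0, 2.0)
print(f"Break-even at {tokens:,.0f} tokens per month")
```

With these illustrative inputs the break-even point is one billion tokens per month; below that volume the hosted API is the cheaper option under this simplified model.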

Landscape Assessment

NVIDIA NIM’s free strategy is reshaping the model service market:

- Model access cost goes to zero: for prototypes and small-scale applications, the cost of calling models is no longer a barrier

- The focus of competition shifts: when the models themselves are free, differentiation moves to latency, throughput, tool ecosystem, and ease of integration

- Chinese model globalization accelerates: NIM gives Chinese models a low-friction global distribution channel

A free API is not a free lunch: NVIDIA’s real goal is selling GPUs. For developers, though, it means the era of exploring 100+ frontier models at zero cost has arrived.