Open Source Is No Longer Just “Cheap” — It’s Starting to Win

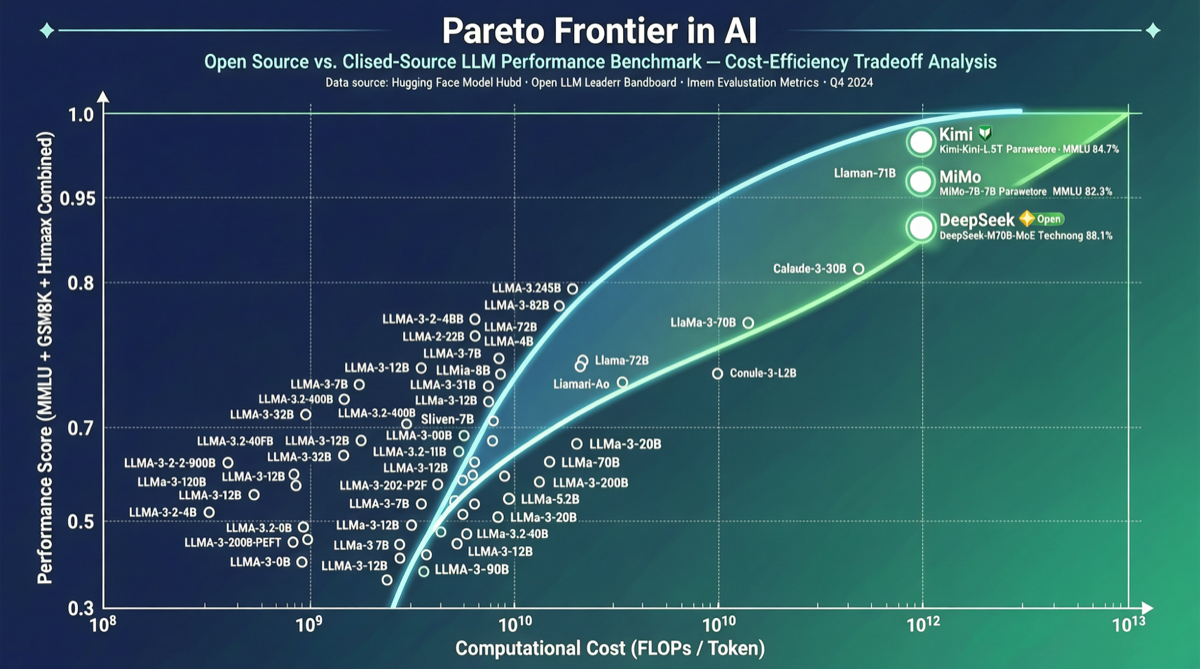

For a long time, the label “open source models” has been tied to “cost-effective” and “alternative.” In the first week of May 2026, that narrative is being completely overturned.

The latest data from Artificial Analysis shows that open-weight models occupy 9 of the 13 positions on the Intelligence vs. Price Pareto frontier. More notably, no single company dominates that frontier: it is held collectively by a group of Chinese open-weight labs.
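A model sits on an Intelligence vs. Price Pareto frontier when no other model is both cheaper and at least as intelligent. The minimal Python sketch below shows that construction, assuming each model is reduced to one intelligence score and one price. The model names and numbers are hypothetical placeholders, not Artificial Analysis data, and Artificial Analysis's own methodology (for example, how it blends input and output token prices) may differ.

```python
from typing import NamedTuple


class Model(NamedTuple):
    name: str
    intelligence: float  # higher is better
    price: float  # cost per unit of usage; lower is better


def pareto_frontier(models: list[Model]) -> list[Model]:
    """Return the models not dominated on (intelligence, price).

    A model is dominated if another model is at least as intelligent and at
    least as cheap, and strictly better on one axis. Exact duplicates are
    collapsed to the first one encountered.
    """
    # Cheapest first; among equal prices, the most intelligent comes first
    ordered = sorted(models, key=lambda m: (m.price, -m.intelligence))
    frontier: list[Model] = []
    best_intelligence = float("-inf")
    for m in ordered:
        # Keep a model only if it beats every cheaper (or equally cheap) model
        if m.intelligence > best_intelligence:
            frontier.append(m)
            best_intelligence = m.intelligence
    return frontier


# Hypothetical candidates for illustration only
candidates = [
    Model("model-a", 60, 10.0),
    Model("model-b", 54, 1.0),
    Model("model-c", 52, 0.5),
    Model("model-d", 50, 2.0),  # dominated: model-b is cheaper and smarter
]
print([m.name for m in pareto_frontier(candidates)])
# prints ['model-c', 'model-b', 'model-a']
```

Sorting by price first means a single pass suffices: each more expensive model earns a frontier slot only by raising the best score seen so far.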

The Current Pareto Frontier at a Glance

| Model | Organization | Intelligence Index | Type | GDPval-AA |
|---|---|---|---|---|
| GPT-5.5 | OpenAI | 60 | Closed | - |
| Gemini / Claude | Google/Anthropic | 57 | Closed | - |
| Kimi K2.6 | Moonshot | 54 | Open-weight | 1484 |
| MiMo V2.5 Pro | Xiaomi | 54 | Open-weight | 1578 |
| DeepSeek V4 Pro | DeepSeek | 52 | Open-weight | 1554 |
| GLM-5.1 | Zhipu | ~50 | Open-weight | 1535 |
| MiniMax M2.7 | MiniMax | ~49 | Open-weight | 1514 |

Key observations:

- Kimi K2.6 and MiMo V2.5 Pro tie at 54 points, the highest score among open-weight models

- Both outscore some closed-source models on GDPval-AA (real-world agentic tasks)

- DeepSeek V4 Pro follows closely at 52 points, with API pricing at a fraction of GPT-5.5’s

Explosive Leap Within One Week

One tweet summarized how the landscape shifted over the past week:

Open Weights Capabilities have Exploded in the Last Week!

- Kimi K2.6 & MiMo V2.5 Pro: 54 (1T MoE, up to 1M ctx)

- DeepSeek V4 Pro: 52 (1.6T/49B)

- GPT-5.5: 60

- Gemini/Claude: 57
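The “1.6T/49B” shorthand usually means total parameters vs. parameters active per token in a mixture-of-experts (MoE) model. Assuming that reading (the tweet does not spell it out), a quick calculation shows how little of the model each token actually uses. The constant and function names are illustrative.

```python
# Assumed reading: "1.6T/49B" = total parameters / parameters active per token
TOTAL_PARAMS = 1_600_000_000_000  # 1.6T
ACTIVE_PARAMS = 49_000_000_000  # 49B


def active_fraction(total: int, active: int) -> float:
    """Share of the model's weights used for each token in an MoE forward pass."""
    return active / total


print(f"Active per token: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.1%}")
# prints Active per token: 3.1%
```

Under that reading, each token touches about 3% of the weights, so per-token compute is closer to that of a dense model of roughly 49B parameters, even though all 1.6T parameters still have to be held in memory for serving.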

In just one week, three Chinese open-source models broke into the top 10 of the Intelligence Index, something that would have been unthinkable a year ago.

What This Means

1. Open-Weight Models Have Crossed the “Good Enough” Threshold

When the best open models score 90% of the top closed model on the Intelligence Index (54 vs. 60) while costing a tenth as much or less, the “closed-source premium” becomes increasingly hard to defend.
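The ratio is easy to check against the Intelligence Index scores in the frontier table. The minimal sketch below computes each open-weight model's score as a share of GPT-5.5's 60 and the gap in points. The “~50” and “~49” entries are taken at face value, and the variable and function names are illustrative.

```python
# Intelligence Index scores from the frontier table ("~" values taken at face value)
CLOSED_BEST = 60  # GPT-5.5
open_models = {
    "Kimi K2.6": 54,
    "MiMo V2.5 Pro": 54,
    "DeepSeek V4 Pro": 52,
    "GLM-5.1": 50,
    "MiniMax M2.7": 49,
}


def relative_score(score: float, reference: float = CLOSED_BEST) -> float:
    """Open model's score as a fraction of the reference closed model's score."""
    return score / reference


for name, score in open_models.items():
    gap = CLOSED_BEST - score
    print(f"{name}: {relative_score(score):.0%} of GPT-5.5 ({gap} points behind)")
# Kimi K2.6: 90% of GPT-5.5 (6 points behind)
# MiMo V2.5 Pro: 90% of GPT-5.5 (6 points behind)
# DeepSeek V4 Pro: 87% of GPT-5.5 (8 points behind)
# GLM-5.1: 83% of GPT-5.5 (10 points behind)
# MiniMax M2.7: 82% of GPT-5.5 (11 points behind)
```

Against Gemini/Claude at 57, the two leaders reach about 95% (54/57).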

2. Chinese Models Have Formed an Open-Source Matrix

This is not a single-point breakthrough but a matrix, with different models leading on different dimensions:

| Dimension | Leader | Advantage |
|---|---|---|
| General Intelligence | Kimi K2.6 / MiMo V2.5 Pro | Tied at 54 |
| Agent Capability | MiMo V2.5 Pro | GDPval-AA 1578 |
| Context Length | DeepSeek V4 Pro | 1M+ context |
| Coding Ability | GLM-5.1 | SWE-Bench: 94-95% of Opus level |
| Price | DeepSeek V4 Pro | 75% discount until May 31 |

3. Where Is the Moat for Closed-Source Models?

As open models close the intelligence gap, closed-source vendors must differentiate on other dimensions:

- Security & Compliance: Enterprise SLAs, data privacy

- Ecosystem: Toolchain integration (Claude Code, OpenAI Codex, etc.)

- Multimodal: Native visual/audio understanding (though MiMo V2.5 Pro already offers this)

Action Items

For technical decision-makers choosing a model:

- If you are budget-sensitive: DeepSeek V4 Pro (75% discount until May 31) is the most cost-effective choice right now

- If you need agent capability: MiMo V2.5 Pro leads on GDPval-AA and is MIT-licensed, which permits commercial use

- If you need long context: Kimi K2.6 and MiMo V2.5 Pro both support up to 1M context

- If you want cutting-edge capability: Closed models (GPT-5.5, Claude 5) still lead by 3 to 6 points on the Intelligence Index (60 and 57 vs. 54)
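These recommendations are simple enough to encode as a lookup table. The minimal sketch below treats each bullet as a shortlist and returns the models that cover every requirement at once. The `Need` enum and `shortlist` function are hypothetical names, and the table is a snapshot of this article's early-May 2026 recommendations that will date quickly.

```python
from enum import Enum


class Need(Enum):
    LOW_COST = "low_cost"
    AGENT = "agent"
    LONG_CONTEXT = "long_context"
    FRONTIER = "frontier"


# Snapshot of the recommendations above (early May 2026)
RECOMMENDATIONS: dict[Need, list[str]] = {
    Need.LOW_COST: ["DeepSeek V4 Pro"],
    Need.AGENT: ["MiMo V2.5 Pro"],
    Need.LONG_CONTEXT: ["Kimi K2.6", "MiMo V2.5 Pro"],
    Need.FRONTIER: ["GPT-5.5", "Claude 5"],
}


def shortlist(needs: list[Need]) -> list[str]:
    """Models recommended for every requested need (empty if none cover all)."""
    if not needs:
        return []
    first, rest = needs[0], needs[1:]
    # Keep the first need's ordering; drop models missing from any other shortlist
    return [m for m in RECOMMENDATIONS[first] if all(m in RECOMMENDATIONS[n] for n in rest)]


print(shortlist([Need.AGENT, Need.LONG_CONTEXT]))
# prints ['MiMo V2.5 Pro']
```

An empty result, as with `[Need.LOW_COST, Need.FRONTIER]`, signals that the requirements pull in different directions and a trade-off has to be made by hand.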

Open-weight models are no longer the option you “settle” for. On the Pareto frontier, they are becoming the “first choice.”