Core Takeaway

The Qwen team confirmed on social media: they have crossed the 27B parameter threshold, and the next target is an 8B edge model.

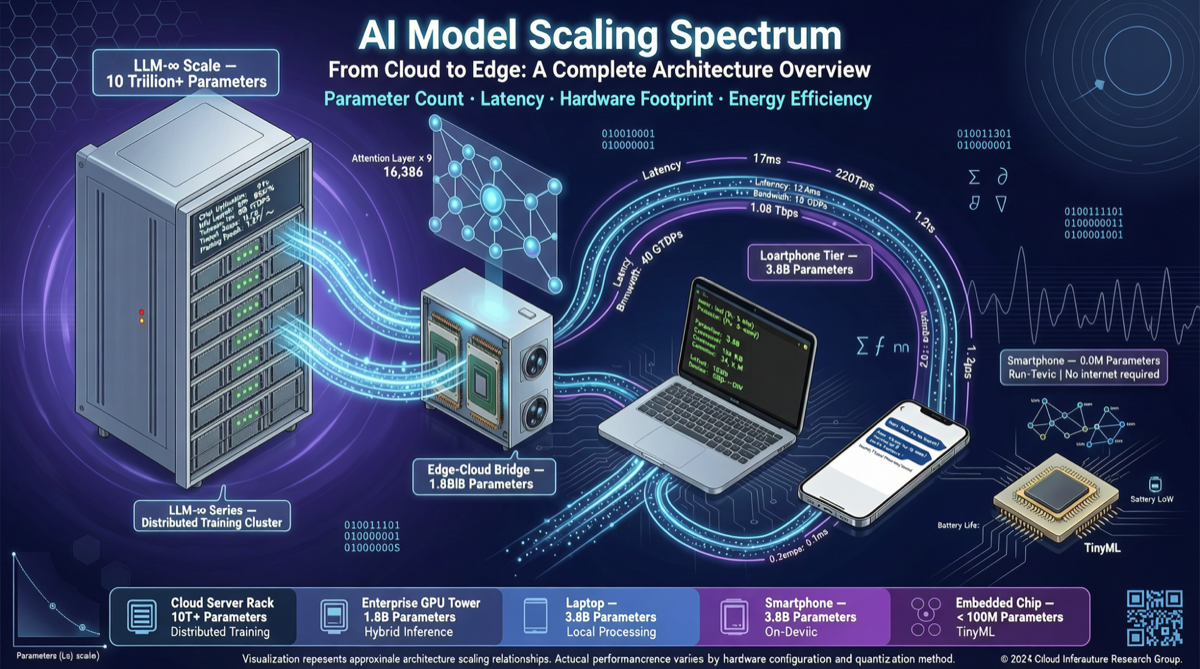

This is not just a number change. Combined with the already-public Qwen 3.6 lineup — 35B MoE, 3.6B small model, and Max Preview ultra-large model — Alibaba is building a full-scale open-source model matrix from cloud mega-models to consumer-grade edge models.

Scale Roadmap: Qwen 3.6 Four-Layer Architecture

| Model Spec | Parameters | Positioning | Target Scenario |

|---|---|---|---|

| Qwen 3.6 Max Preview | Ultra-large (undisclosed) | Flagship API model | Complex reasoning, enterprise tasks |

| Qwen 3.6 35B MoE | 35B total / 3.6B active | Efficient MoE architecture | Mid-compute deployment, cost-sensitive scenarios |

| Qwen 3.6 27B | 27B dense | Performance/efficiency balance | Single 4090/5090 GPU deployment |

| Qwen 3.6 8B (target) | 8B dense | Edge lightweight model | Laptop/mobile on-device inference |

| Qwen 3.6 3.6B | 3.6B | Ultra-lightweight | Edge devices, IoT |

The logic is clear: use 27B to establish a performance benchmark, then use 8B for scale-down deployment.

Why 8B Is the Next Key Node?

The 8B parameter size has special significance in 2026:

- Full consumer GPU coverage: RTX 4060/4070 (8-12GB VRAM) can fully load INT4-quantized 8B models

- Apple Silicon native: M4 MacBook (16GB unified memory) can smoothly run 8B model inference

- Mobile deployment feasible: 8B INT4 quantized is ~4-5GB, fitting into high-end phone memory

- Optimal knowledge distillation target: 27B → 8B distillation pipeline is mature, with performance loss controllable within 10%

Competitive Analysis: Qwen vs Llama Open Source Strategy

| Dimension | Qwen 3.6 | Llama 4 (Meta) |

|---|---|---|

| Largest open model | 35B MoE | 405B dense |

| Edge target | 8B | 3B / 8B |

| MoE support | 35B/3.6B | Yes |

| Chinese optimization | Native | Requires fine-tuning |

| Commercial license | Permissive | Permissive |

| Ecosystem toolchain | ModelScope + vLLM | Ollama + LM Studio |

Qwen’s strategy is more pragmatic than Llama — not chasing the largest parameters, but pursuing broadest deployment coverage. This better fits Chinese developers’ actual needs: not everyone has an H100, but many have a 4090 or even lower-end GPUs.

Action Guide

- If you’re evaluating Qwen 3.6: Watch the 8B version release timeline — this will be the key node for consumer-grade deployment

- If you’re building edge AI products: The INT4-quantized Qwen 3.6 8B will be one of the most cost-effective options

- If you’re using Llama for edge: Qwen 3.6 8B’s performance advantage in Chinese scenarios is worth including in A/B testing

Qwen’s “from 27B to 8B” is not a step down — it’s a dimensional strike: using smaller parameter scale and lower deployment barriers to cover a wider range of use cases.