Core Conclusion

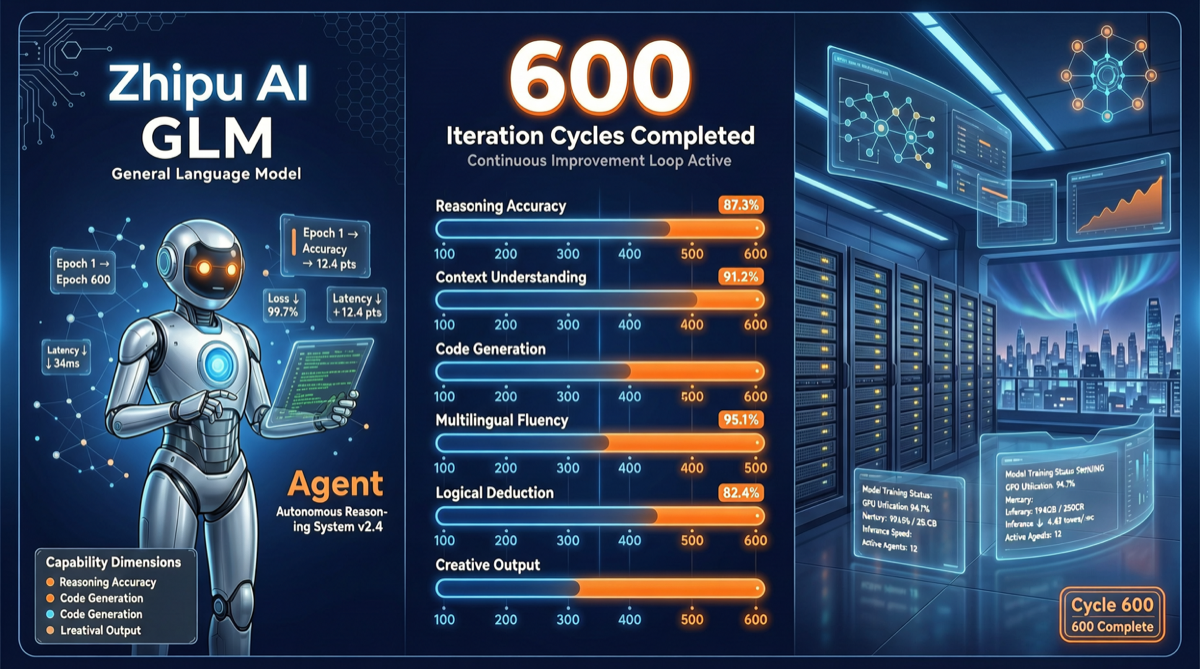

Zhipu released GLM-5.1 in early April, positioning it as its next-generation flagship model for AI Agents. Its core selling point is not absolute scores on static benchmarks but sustained optimization over long-horizon tasks: the model shows continuous improvement across 600 iterations of long-range reasoning. This contrasts sharply with the adjustment to GLM-5’s “unlimited weekly quota” plan: GLM-5 is being consolidated commercially, while GLM-5.1 explores new Agent scenarios.

GLM-5.1 Technical Highlights

Long-Horizon Task Capability

GLM-5.1’s core innovation is its ability to keep improving across many iterations. Traditional models tend to suffer “capability degradation” in multi-round Agent loops: output quality declines as the number of rounds grows. Through architectural optimization, GLM-5.1 maintains a trend of continuous improvement across 600 iterations.
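
One way to make "degradation" versus "continuous improvement" measurable is to score each iteration of an Agent loop and fit a straight line to the scores. The sketch below is a minimal illustration under assumptions: the function names `trend_slope` and `classify_trend`, the `tolerance` threshold, and the sample scores are all hypothetical, and the per-iteration scores are assumed to come from your own evaluation harness. It is not Zhipu's evaluation method.

```python
from statistics import mean


def trend_slope(scores):
    """Least-squares slope of score versus iteration index (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores to fit a trend")
    x_mean = (n - 1) / 2  # mean of the indices 0..n-1
    y_mean = mean(scores)
    numerator = sum((i - x_mean) * (s - y_mean) for i, s in enumerate(scores))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


def classify_trend(scores, tolerance=1e-3):
    """Label a run by the sign of its slope; `tolerance` is an assumed cutoff."""
    slope = trend_slope(scores)
    if slope > tolerance:
        return "improving"
    if slope < -tolerance:
        return "degrading"
    return "flat"


# Illustrative scores only, not measured results.
print(classify_trend([0.52, 0.55, 0.61, 0.64]))
```

A single slope hides late-stage collapse, so for a 600-round run it may be worth fitting the trend over sliding windows as well as over the whole run.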

| Capability Dimension | GLM-5 | GLM-5.1 | Improvement Direction |
|---|---|---|---|
| Long-range reasoning | Baseline | Significantly enhanced | Multi-step task decomposition and backtracking |
| Iterative optimization | Limited | 600 iterations of continuous improvement | Agent self-correction loops |
| SWE-Bench Pro | Industry-leading | Further ahead | Code repair tasks |
| Agent tool calling | Supported | Enhanced | Tool selection accuracy |

Leading in SWE-Bench Pro

On SWE-Bench Pro (the professional version of the software engineering benchmark), GLM-5.1 ranks in the industry’s top tier. The benchmark simulates real code repair: given a GitHub issue and a codebase, the model must understand the problem, locate the relevant code, and propose a fix.

For Agent scenarios, SWE-Bench Pro is a more meaningful metric than traditional Q&A benchmarks because it measures:

- Understanding complex codebases

- Multi-step reasoning (locate → analyze → fix → verify)

- Tool usage (search, read, edit, test)

Why It Matters

Differentiation of Domestic Models in the Agent Race

In China’s domestic large-model competition, each vendor is carving out a differentiated position:

| Vendor | Core Positioning | Advantage Scenarios |
|---|---|---|
| DeepSeek | Extreme cost efficiency | Large-scale API calls, long text |
| Kimi/Moonshot | Long context + search enhancement | Information retrieval, knowledge organization |
| MiniMax | Multimodal + safety | Content creation, safety-sensitive scenarios |
| Zhipu GLM | Agent + code | Programming assistance, automated workflows |

GLM-5.1’s release further strengthens Zhipu’s positioning in the Agent + code track. Sustained optimization over long-horizon tasks is a core requirement for Agent scenarios: a model that can work for hundreds of rounds without degrading is more valuable in practice than one that merely excels in single-turn conversations.

GLM-5 Commercialization vs GLM-5.1 Innovation

Notably, Zhipu is simultaneously doing two things:

- GLM-5 commercial consolidation: Retiring the old “unlimited weekly quota” plan and moving to more fine-grained pricing

- GLM-5.1 technical breakthrough: Building technical barriers in Agent long-horizon capabilities

This “tightening old products while launching new ones” strategy is increasingly common among domestic model vendors — maintaining profit margins through product iteration during price wars.

Comparison with Competitors

Long-Horizon Agent Capability

| Model | Iteration Stability | Degradation at 600+ Rounds | Agent Scenario Fit |
|---|---|---|---|
| GLM-5.1 | Continuous improvement | Minimal | High |
| Claude Sonnet 4.6 | Stable | Low | High |
| GPT-5.5 | Medium | Medium | Medium |
| Qwen 3.5 | Good | Low | Medium-High |
| Kimi K2.5 | Good | Low | Medium-High |

Pricing Reference

Zhipu’s pricing strategy has shifted from “unlimited weekly quota” to more structured plans:

| Plan | Billing Model | Use Case |
|---|---|---|
| New plan (former unlimited users) | Pay-per-use | High-frequency Agent usage |
| Standard plan | Monthly subscription | Daily development assistance |
| Free trial | Limited quota | Evaluation and testing |

Note: Zhipu stopped automatic renewal of the old unlimited weekly quota plan under the GLM Coding Plan on April 30; affected users received 2 months of new plan benefits.

Action Recommendations

Scenarios Suitable for GLM-5.1

- Agent-driven code repair: Scenarios requiring continuous work in large codebases with multi-step reasoning

- Long-horizon automated workflows: Tasks requiring the model to maintain consistency and improvement trends across many rounds of interaction

- SWE-Bench-type evaluation tasks: Scenarios requiring high-accuracy code understanding and repair capabilities

Testing Strategy

- Run a 600-round stress test first: GLM-5.1’s core selling point is long-horizon stability; this capability should be verified with extensive iterations

- Compare SWE-Bench Pro performance: If your team cares about code quality, use actual code repair tasks to compare GLM-5.1 against other models

- Evaluate tool call accuracy: In Agent scenarios, tool call accuracy directly impacts task completion rate
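
The tool call check above can be sketched as a simple step-by-step comparison against a reference trace. This is a minimal sketch under assumptions: the function name `tool_call_accuracy`, the `name`/`args` dictionary layout, and the sample traces are hypothetical and not tied to any vendor's API. A step counts as correct only when both the tool name and its arguments match; calls missing from the actual trace count as misses, and extra trailing calls are ignored.

```python
def tool_call_accuracy(expected, actual):
    """Fraction of expected steps whose tool name and arguments both match."""
    if not expected:
        raise ValueError("expected trace must not be empty")
    matches = sum(
        1
        for exp, act in zip(expected, actual)  # zip stops at the shorter trace
        if exp["name"] == act["name"] and exp["args"] == act["args"]
    )
    # Dividing by len(expected) makes missing calls count as misses.
    return matches / len(expected)


# Hypothetical reference trace and model trace for one code repair task.
expected_trace = [
    {"name": "search", "args": {"query": "parse_config"}},
    {"name": "read", "args": {"path": "config.py"}},
    {"name": "edit", "args": {"path": "config.py"}},
    {"name": "test", "args": {"target": "tests/test_config.py"}},
]
actual_trace = [
    {"name": "search", "args": {"query": "parse_config"}},
    {"name": "read", "args": {"path": "config.py"}},
    {"name": "edit", "args": {"path": "settings.py"}},  # wrong file
    {"name": "test", "args": {"target": "tests/test_config.py"}},
]

print(tool_call_accuracy(expected_trace, actual_trace))  # 0.75
```

Exact matching is strict. If several tool sequences can solve the same task, scoring task completion alongside this metric gives a fairer picture.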

Migration Recommendations

- GLM-5 users: If you were on the unlimited weekly quota plan, note that automatic renewal stopped on April 30 and affected users received 2 months of new plan benefits. Use this window to test GLM-5.1

- New developers: GLM-5.1 represents Zhipu’s current technical frontier in the Agent track and is worth considering as one of the domestic Agent model options

- Budget-conscious users: Watch Zhipu’s pricing adjustments — the new plan may be more expensive than the old unlimited plan; ROI needs to be evaluated
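
For the ROI question, a break-even calculation between a flat monthly fee and pay-per-use billing is a reasonable first pass. The sketch below assumes a hypothetical flat fee and a hypothetical per-million-token price; the function names and all numbers are placeholders, not Zhipu's actual pricing, so substitute the published rates before drawing conclusions.

```python
def break_even_tokens(monthly_fee, price_per_million_tokens):
    """Monthly token volume at which pay-per-use cost equals the flat fee."""
    if price_per_million_tokens <= 0:
        raise ValueError("price_per_million_tokens must be positive")
    return monthly_fee / price_per_million_tokens * 1_000_000


def cheaper_plan(monthly_tokens, monthly_fee, price_per_million_tokens):
    """Return which billing model costs less at the given monthly volume."""
    pay_per_use_cost = monthly_tokens / 1_000_000 * price_per_million_tokens
    return "pay-per-use" if pay_per_use_cost < monthly_fee else "subscription"


# Placeholder prices in arbitrary currency units, NOT real Zhipu pricing.
HYPOTHETICAL_MONTHLY_FEE = 100.0
HYPOTHETICAL_PRICE_PER_MILLION = 2.0

print(break_even_tokens(HYPOTHETICAL_MONTHLY_FEE, HYPOTHETICAL_PRICE_PER_MILLION))
print(cheaper_plan(10_000_000, HYPOTHETICAL_MONTHLY_FEE, HYPOTHETICAL_PRICE_PER_MILLION))
```

Long-horizon Agent runs consume far more tokens per task than single-turn use, so estimate monthly volume from real Agent traces rather than from chat usage.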