Bottom Line First

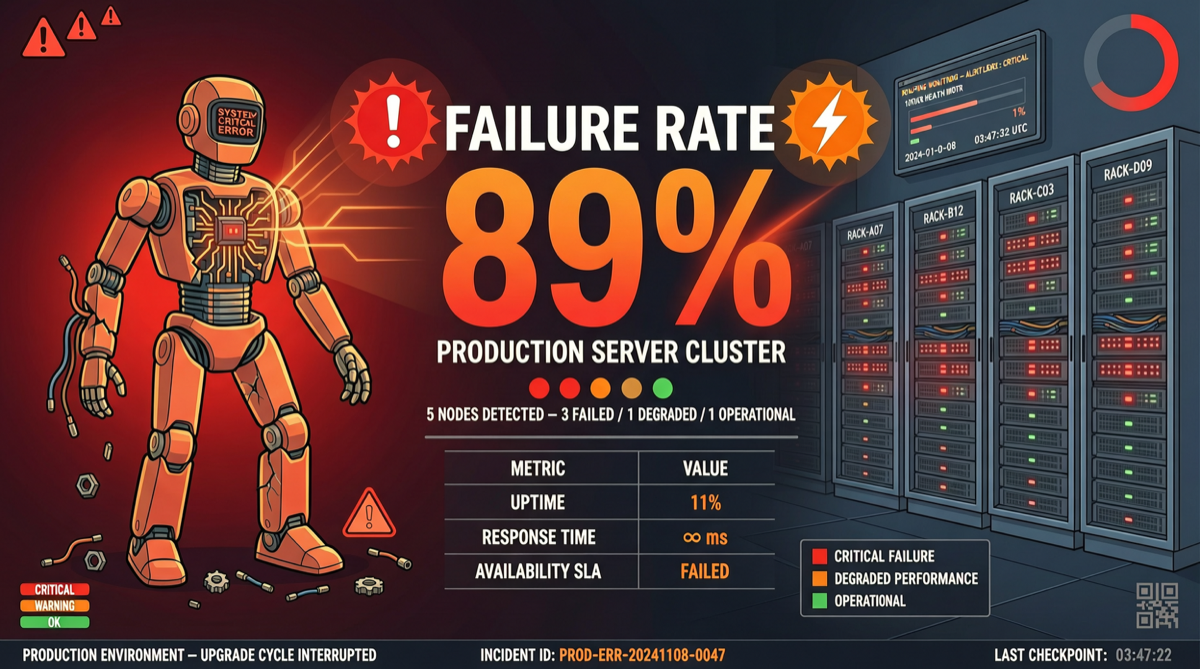

AI agents grew explosively in Q1 2026, but behind the boom lies a harsh reality:

| Metric | Data | Meaning |
|---|---|---|
| Q1 Agent Shipments | 3M+ | The barrier to building is extremely low |
| Production Survival Rate | 11% | 89% die after the demo |
| Enterprises Requiring Human Validation | 63% (22% a year ago) | Trust is decreasing, not increasing |
| AI Coding Tool Monthly Cost | $500-$2,000 per engineer | Usage-driven, far exceeding SaaS pricing |
| Full-Year AI Budget Exhausted by April | Widespread | Costs are out of control |
| Agents Running Reliably Past 90 Days | Only 11% | 29-point gap vs. Gartner’s 40% adoption forecast |

What Happened

89% Failure Rate: From “Demo Day” to “Tuesday 2 AM”

So where does it go wrong? As one developer put it:

“Teams are building for ‘Demo Day’, not for ‘Tuesday at 2 AM when the API times out.’”

Production AI agents need:

- Redundancy: What happens when the model goes down?

- Observability: What did the agent do wrong? Why?

- Graceful degradation: Can it continue when some tools are unavailable?

Most agents lack all three. They run perfectly in demos but collapse in real environments.
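To make the three requirements concrete, here is a minimal Python sketch of redundancy (a prioritized model fallback chain with retries), observability (a trace of every attempt), and graceful degradation (a safe placeholder instead of a crash). It assumes each model is a plain callable that raises `TimeoutError` or `ConnectionError` on transient failure; `run_with_fallback`, `AgentResult`, and the placeholder text are hypothetical names, not any particular framework’s API.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical model interface: takes a prompt, returns text, raises on failure.
ModelFn = Callable[[str], str]


@dataclass
class AgentResult:
    answer: str
    model_used: Optional[str]  # None means every model failed
    degraded: bool             # True if the primary model did not produce the answer
    trace: list[str] = field(default_factory=list)  # what happened, in order


def run_with_fallback(
    prompt: str,
    models: list[tuple[str, ModelFn]],
    retries_per_model: int = 2,
    backoff_s: float = 0.5,
) -> AgentResult:
    """Try models in priority order, retrying transient errors with exponential backoff."""
    trace: list[str] = []
    for index, (name, call) in enumerate(models):
        for attempt in range(1, retries_per_model + 1):
            try:
                answer = call(prompt)
            except (TimeoutError, ConnectionError) as exc:
                # Observability: record each failure so a 2 AM incident can be reconstructed.
                trace.append(f"{name} attempt {attempt}: {type(exc).__name__}")
                time.sleep(backoff_s * 2 ** (attempt - 1))
                continue
            trace.append(f"{name} attempt {attempt}: ok")
            # Redundancy: if a backup model answered, flag the result as degraded.
            return AgentResult(answer, name, degraded=index > 0, trace=trace)
    # Graceful degradation: return a safe placeholder instead of raising.
    trace.append("all models failed: returning degraded response")
    return AgentResult(
        "Temporarily unavailable; please retry later.",
        None,
        degraded=True,
        trace=trace,
    )
```

The `degraded` flag and `trace` list are the point: downstream code and on-call engineers can see that the agent survived the timeout, and how.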

63% Require Human Validation: A Trust Crisis

KPMG’s Q1 2026 AI Pulse data shows that 63% of enterprises now require human validation of agent outputs, up from 22% a year ago: nearly a threefold increase.

This isn’t because agents got worse. Quite the opposite: agents can do more now, and the more they can do, the bigger the impact of their mistakes.

Gartner predicts that 40% of enterprise apps will embed AI agents by the end of 2026 (up from under 5% in 2025), yet only 11% of companies currently achieve reliable autonomous operation past 90 days. The resulting 29-point gap between ambition (40%) and execution (11%) is the biggest structural problem in AI in 2026.

AI Coding Tool Cost Explosion

Another overlooked problem: the costs of AI coding tools (Cursor, Claude Code, Copilot, etc.) are spiraling:

- ~70% of code is now AI-assisted

- AI tool spend runs $500-$2,000 per engineer per month

- Many companies’ full-year AI budgets are exhausted by April

Companies assume AI tools behave like SaaS, where a fixed number of seats means a predictable cost. In reality, spend follows usage intensity, which is unpredictable: costs can swing by 10-100x.
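A quick Python sketch shows why the seat-based mental model breaks. The prices below (`SEAT_PRICE_USD`, `PRICE_PER_MTOK_USD`) and the per-engineer token volumes are hypothetical, chosen only for illustration; real vendor pricing differs.

```python
# Hypothetical prices for illustration only; real vendor pricing differs.
SEAT_PRICE_USD = 40.0        # flat monthly price per seat (SaaS mental model)
PRICE_PER_MTOK_USD = 10.0    # blended price per million tokens (usage model)
WORKDAYS_PER_MONTH = 21


def seat_cost(engineers: int) -> float:
    """SaaS model: cost depends only on head count."""
    return engineers * SEAT_PRICE_USD


def usage_cost(tokens_per_day: int, workdays: int = WORKDAYS_PER_MONTH) -> float:
    """Usage model: cost scales with how hard each engineer drives the tool."""
    return tokens_per_day * workdays * PRICE_PER_MTOK_USD / 1_000_000


# Three engineers, one seat each, very different usage intensity.
team = {"light": 200_000, "typical": 2_500_000, "heavy": 10_000_000}
monthly = {name: usage_cost(tokens) for name, tokens in team.items()}

print(f"Seat-based forecast: ${seat_cost(len(team)):,.0f}")
for name, cost in monthly.items():
    print(f"{name:>8}: ${cost:,.0f}")
spread = max(monthly.values()) / min(monthly.values())
print(f"Spread between heaviest and lightest user: {spread:.0f}x")
```

With these assumed numbers the seat-based forecast is $120 for the team, while usage-based spend comes to $42, $525, and $2,100 per engineer: a 50x spread between users who hold identical seats.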

Why It Matters

1. Agent Infrastructure Is Becoming an Independent Category

When 89% of agents fail in production, agent infrastructure (observability, evaluation, governance) is no longer optional; it’s essential.

This is why projects like AgentField (“Kubernetes for AI agents”) and FutureAGI (an open-source agent observability platform) are gaining attention: they target exactly this pain point.

2. “Human-in-the-Loop” Is Not a Regression, It’s Maturity

At first glance, 63% of enterprises requiring human validation looks like “not trusting AI.” Reframed, though, it means:

- Enterprises are taking agent outputs seriously

- Agents are entering critical business processes

- Human validation itself will become an optimizable step

Good agent systems aren’t fully autonomous: they find the optimal balance between “autonomous” and “controlled.”
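One way to encode that balance is a routing policy that decides, per proposed action, whether the agent may act alone, must escalate to a human, or is blocked outright. The sketch below is a minimal Python illustration; the `AgentAction` fields and both dollar thresholds are hypothetical and would be set by each organization.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"  # agent acts on its own
    HUMAN_REVIEW = "human_review"  # escalate to a person before acting
    BLOCK = "block"                # outside the agent's mandate entirely


@dataclass(frozen=True)
class AgentAction:
    name: str
    reversible: bool
    amount_usd: float = 0.0
    touches_production: bool = False


# Hypothetical policy thresholds; each organization would set its own.
REVIEW_AMOUNT_USD = 1_000.0
BLOCK_AMOUNT_USD = 50_000.0


def route(action: AgentAction) -> Decision:
    """Decide how much autonomy the agent gets for a single proposed action."""
    if action.amount_usd >= BLOCK_AMOUNT_USD:
        return Decision.BLOCK
    needs_review = (
        not action.reversible
        or action.touches_production
        or action.amount_usd >= REVIEW_AMOUNT_USD
    )
    return Decision.HUMAN_REVIEW if needs_review else Decision.AUTO_APPROVE
```

Because the boundaries are explicit, the human step becomes measurable: if reviewers approve nearly every escalated action of a given kind, that kind of action is a candidate to move into auto-approve.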

3. Cost Models Need Restructuring

The AI tool cost problem exposes how poorly the SaaS pricing model fits the AI era:

- SaaS: per user/month, usage predictable

- AI: per token/call, usage correlates with task complexity

Enterprises need new cost governance frameworks, or AI spending will continue spiraling.
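A cost governance framework could start with something as simple as projecting when the annual budget runs out at the current burn rate, so the warning arrives in February rather than in April. This Python sketch is a hypothetical illustration: `exhaustion_month` and all budget figures are assumptions, and it naively extrapolates the latest month’s spend forward.

```python
import calendar
from typing import Optional


def exhaustion_month(annual_budget: float, monthly_spend: list[float]) -> Optional[int]:
    """Return the 1-based month in which cumulative spend reaches the annual budget.

    Months without data are extrapolated from the most recent month's spend,
    a deliberately simple assumption (real usage may keep growing).
    Returns None if the budget lasts the whole year.
    """
    if not monthly_spend:
        raise ValueError("need at least one month of actual spend")
    cumulative = 0.0
    for month in range(1, 13):
        spend = monthly_spend[month - 1] if month <= len(monthly_spend) else monthly_spend[-1]
        cumulative += spend
        if cumulative >= annual_budget:
            return month
    return None


# Budget planned as if AI were SaaS: $100k/month, $1.2M/year (illustrative figures).
actual = [250_000, 300_000, 400_000]  # Jan-Mar spend, driven by usage intensity
month = exhaustion_month(1_200_000, actual)
print("Budget exhausted in:", calendar.month_name[month] if month else "never")
```

With these made-up numbers, cumulative spend is $950k after March, so the projection flags April, three quarters early.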

Landscape Assessment

Short-term (2026):

- Agent observability and evaluation tools will grow rapidly

- Enterprises will establish AI cost governance teams and processes

- “Human-in-the-loop” becomes the standard configuration for enterprise agent deployments

Medium-term (2027-2028):

- Agent infrastructure will evolve into an independent service category

- Pricing will shift from token-based to outcome-based

- Frameworks that solve “2 AM API timeout” problems will win

Actionable Advice

| Your Role | Recommended Action |
|---|---|
| Agent Developers | Build observability from day one: integrate three layers of protection (trace, eval, guard) |
| Enterprise CTO | Establish an AI cost governance framework; budget by actual usage intensity, not seat count |
| Security/Compliance | Design “human-in-the-loop” processes; define boundaries and escalation paths for agents’ autonomous decisions |
| Investors | Focus on the agent infrastructure sector (observability, evaluation, governance) rather than agent-building tools |
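The three-layer protection recommended for agent developers (trace, eval, guard) can be sketched in a few dozen lines of Python. Everything here is a hypothetical illustration: the keyword-based eval and pattern-based guard are toy stand-ins for real evaluators and policy engines, and `run_step`, `Tracer`, and `BLOCKED_PATTERNS` are invented names.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical guardrail list; real guardrails are usually policy- or model-based.
BLOCKED_PATTERNS = ("drop table", "rm -rf")


@dataclass
class Span:
    step: str
    duration_ms: float
    ok: bool
    note: str = ""


@dataclass
class Tracer:
    spans: list[Span] = field(default_factory=list)


def guard(output: str) -> Optional[str]:
    """Guard layer: return a reason if the output must not be acted on."""
    lowered = output.lower()
    for pattern in BLOCKED_PATTERNS:
        if pattern in lowered:
            return f"blocked pattern: {pattern}"
    return None


def evaluate(output: str, expected_keywords: list[str]) -> float:
    """Eval layer (toy version): fraction of expected keywords present in the output."""
    if not expected_keywords:
        return 1.0
    lowered = output.lower()
    return sum(k.lower() in lowered for k in expected_keywords) / len(expected_keywords)


def run_step(
    tracer: Tracer,
    step: str,
    agent_fn: Callable[[str], str],
    prompt: str,
    expected_keywords: list[str],
    min_score: float = 0.5,
) -> Optional[str]:
    """Trace layer: every step leaves a span, whether it passes or not."""
    start = time.perf_counter()
    output = agent_fn(prompt)
    elapsed_ms = (time.perf_counter() - start) * 1000
    reason = guard(output)
    if reason is not None:
        tracer.spans.append(Span(step, elapsed_ms, ok=False, note=reason))
        return None
    score = evaluate(output, expected_keywords)
    passed = score >= min_score
    tracer.spans.append(Span(step, elapsed_ms, ok=passed, note=f"eval={score:.2f}"))
    return output if passed else None
```

The ordering is a design choice: the guard runs before the eval so that a dangerous output is never scored as “good enough,” and a span is recorded on every path so failures are as visible as successes.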

Bottom line: the problem with AI agents isn’t that they’re “not smart enough”; it’s that they’re “not reliable enough.” Only when you can confidently let agents handle API timeouts, model degradation, and tool failures at 2 AM are they truly ready for production.