Conclusion

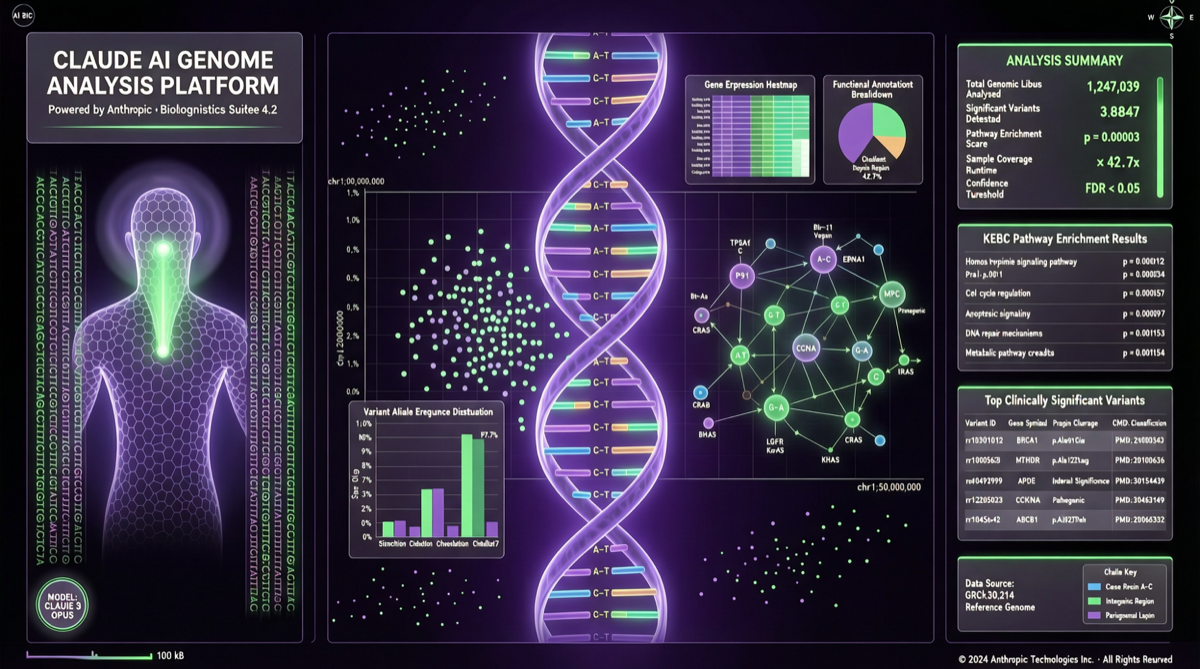

On April 29, 2026, Anthropic released BioMysteryBench — a new benchmark specifically designed to evaluate AI models’ ability to analyze real biological data. The benchmark contains 99 problems, adapted from real bioinformatics research tasks.

Key finding: 23 of the 99 problems stumped a panel of human experts. Claude’s latest models solved roughly 30% of these hard problems, along with most of the remaining 76 that the experts did solve. This marks a new stage in AI’s role as a scientific research assistant.

Test Dimensions

BioMysteryBench Design Logic

BioMysteryBench differs from traditional academic benchmarks in that its problems are adapted from real bioinformatics research tasks, including some that human experts could not solve. The test format is not “multiple choice” or “Q&A with known answers”; instead, it requires models to propose creative solutions.

The 99 problems fall into two categories:

- Expert-solvable problems (76): Problems the human expert panel eventually solved

- Expert-hard problems (23): Open-ended problems the human expert panel could not solve

This design mirrors real research scenarios: most problems have answers, but a few key questions are the true challenges.

Claude’s Performance

| Problem Category | Count | Claude Solve Rate |
|---|---|---|
| Expert-solvable | 76 | Most solved |
| Expert-hard | 23 | ~30% |

Among the 23 expert-hard problems, Claude’s latest models solved roughly 30%, meaning AI found viable solutions to approximately 7 problems that human experts could not crack.
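The counts behind these figures can be checked with a few lines of arithmetic. The sketch below is only illustrative: the variable names are made up for this example, and the 30% rate is the approximate figure quoted above rather than an exact published score, so the derived counts are estimates.

```python
# Counts taken from the article; the solve rate is approximate ("roughly 30%").
TOTAL_PROBLEMS = 99
EXPERT_HARD = 23          # problems the human expert panel could not solve
APPROX_SOLVE_RATE = 0.30  # assumption: rounded figure, not an exact score

# Problems the expert panel did solve.
expert_solvable = TOTAL_PROBLEMS - EXPERT_HARD

# Estimated number of expert-hard problems solved by the model.
solved_by_model = round(EXPERT_HARD * APPROX_SOLVE_RATE)

# Estimated number of expert-hard problems that remain unsolved.
still_open = EXPERT_HARD - solved_by_model

print(expert_solvable, solved_by_model, still_open)  # prints: 76 7 16
```

Under these assumptions, about 7 of the 23 expert-hard problems were solved and about 16 remain open.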

Impact on Research Workflows

Claude’s performance in bioinformatics analysis suggests AI is transitioning from an “assistant tool” to a “collaborator”:

- Hypothesis generation: Claude can propose hypotheses based on data patterns that humans might overlook

- Cross-domain association: Integrating knowledge across different biology fields to discover new connections

- Code generation: Automatically generating analysis scripts to accelerate data processing

Important caveat: AI-proposed solutions still require human expert validation. A 30% solve rate also means that roughly 70% of the expert-hard problems (about 16 of 23) remain unsolved and still call for human insight.

Selection Guidance

- Bioinformatics research: Claude demonstrates strong capability in real biological data analysis, making it suitable as a research assistant tool

- Hypothesis exploration phase: Use Claude to generate preliminary hypotheses and analysis directions, then have experts validate

- Data processing automation: Claude can auto-generate analysis scripts, reducing repetitive work

- Human oversight required: AI proposals must undergo peer review and experimental validation — they cannot replace human judgment