It has been nearly a week since the DeepSeek V4 series launched. What has surprised users most is not the parameter scale but the tool calling reliability and multi-step workflow orchestration that the V4 Flash version has shown in real-world scenarios.

This is not a benchmark figure from a paper; it is a conclusion community users reached through hands-on use.

Testing Conclusion: V4 Flash Tool Calling Has Reached the Usability Threshold

According to community feedback, V4 Flash’s core improvements over the previous generation fall into four areas (all figures are approximate, community-reported values):

| Capability | V3 Performance | V4 Flash Performance | Improvement |
|---|---|---|---|
| Tool call accuracy | ~60% | ~85%+ | +25pp |
| Multi-step task completion | Frequent interruptions | Auto-correct and continue | Qualitative leap |
| Response speed | Medium | Very fast | Significant |
| Cost per 1M tokens | ¥2-4 | ¥0.5-1 | 75%+ reduction |
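One way to see why a gain in per-call accuracy matters so much for multi-step tasks: if each tool call succeeded independently (a simplifying assumption for illustration, not something the community reports measured), the chance of finishing an n-step workflow with no failed call is the per-call accuracy raised to the power n. The sketch below applies that to the approximate accuracy figures in the table, with no retries.

```python
def chain_success(per_call_accuracy: float, steps: int) -> float:
    """Probability that every one of `steps` tool calls succeeds.

    Assumes calls fail independently and that nothing is retried,
    which is a simplification made only for this illustration.
    """
    if not 0.0 <= per_call_accuracy <= 1.0:
        raise ValueError("per_call_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_call_accuracy ** steps


if __name__ == "__main__":
    # Approximate per-call accuracies taken from the table above.
    for label, accuracy in (("V3 (~60%)", 0.60), ("V4 Flash (~85%)", 0.85)):
        print(f"{label}: 4-step success ~ {chain_success(accuracy, 4):.1%}")
```

Under these assumptions a four-step workflow goes from roughly 13% to roughly 52% end-to-end success, which is consistent with the reported shift from frequent interruptions to workflows that run through.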

A Typical Workflow Demo

A user shared a video on X demonstrating a complete workflow completed with V4 Flash:

- Download: Download an EPUB ebook from a single prompt

- Convert: Automatically convert epub to txt format

- Upload: Automatically upload the text to NotebookLM for Q&A

- Analyze: Generate an interpretation article with a specified prompt

The entire process requires zero human intervention, and the model auto-corrects errors and continues execution. In the user’s own words: “V4’s launch wasn’t as sensational as R1’s, but it has genuinely become usable.”
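The four steps above, together with the "correct and continue" behavior, can be sketched as a small pipeline runner. This is only an illustration: the step functions are hypothetical stubs (no real download, conversion, or NotebookLM upload happens), and a plain retry loop stands in for the model's own error correction.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]  # receives the previous step's output


@dataclass
class RunLog:
    attempts: dict = field(default_factory=dict)  # step name -> attempts used


def run_pipeline(steps, initial, max_attempts=3):
    """Run steps in order, retrying a failed step up to max_attempts times."""
    log = RunLog()
    value = initial
    for step in steps:
        last_error = None
        for attempt in range(1, max_attempts + 1):
            log.attempts[step.name] = attempt
            try:
                value = step.run(value)
                break  # step succeeded, move on to the next one
            except Exception as exc:  # a real agent would inspect the error
                last_error = exc
        else:
            raise RuntimeError(
                f"step {step.name!r} failed after {max_attempts} attempts"
            ) from last_error
    return value, log


def make_flaky(fn, failures):
    """Wrap fn so its first `failures` calls raise, simulating a tool error."""
    remaining = {"count": failures}

    def wrapper(arg):
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise IOError("simulated tool failure")
        return fn(arg)

    return wrapper


def demo():
    # Hypothetical stand-ins for the download / convert / upload / analyze tools.
    steps = [
        Step("download", lambda title: f"{title}.epub"),
        Step("convert", make_flaky(lambda p: p.replace(".epub", ".txt"), 1)),
        Step("upload", lambda path: {"source": path}),
        Step("analyze", lambda doc: f"analysis of {doc['source']}"),
    ]
    return run_pipeline(steps, "book")


if __name__ == "__main__":
    result, log = demo()
    print(result, log.attempts)
```

In the demo the convert step fails once and succeeds on its second attempt, so the run still completes without anyone stepping in.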

Why the Flash Version Deserves More Attention

The DeepSeek V4 series offers both Flash and Pro versions:

| Spec | V4 Flash | V4 Pro |
|---|---|---|
| Context length | 1M | 1M |
| Max output | 384K | 384K |
| Reasoning mode | ✅ | ✅ |
| JSON Output | ✅ | ✅ |
| Tool Calls | ✅ | ✅ |
| FIM code completion | ✅ | ✅ |
| Cost per 1M tokens | ~¥0.5-1 | ~¥2-4 |

On the specs listed above, the Flash version matches Pro feature for feature at roughly a quarter of the per-token cost. For Agent scenarios requiring high-frequency API calls, Flash’s cost-effectiveness is extremely compelling.

Native Capabilities

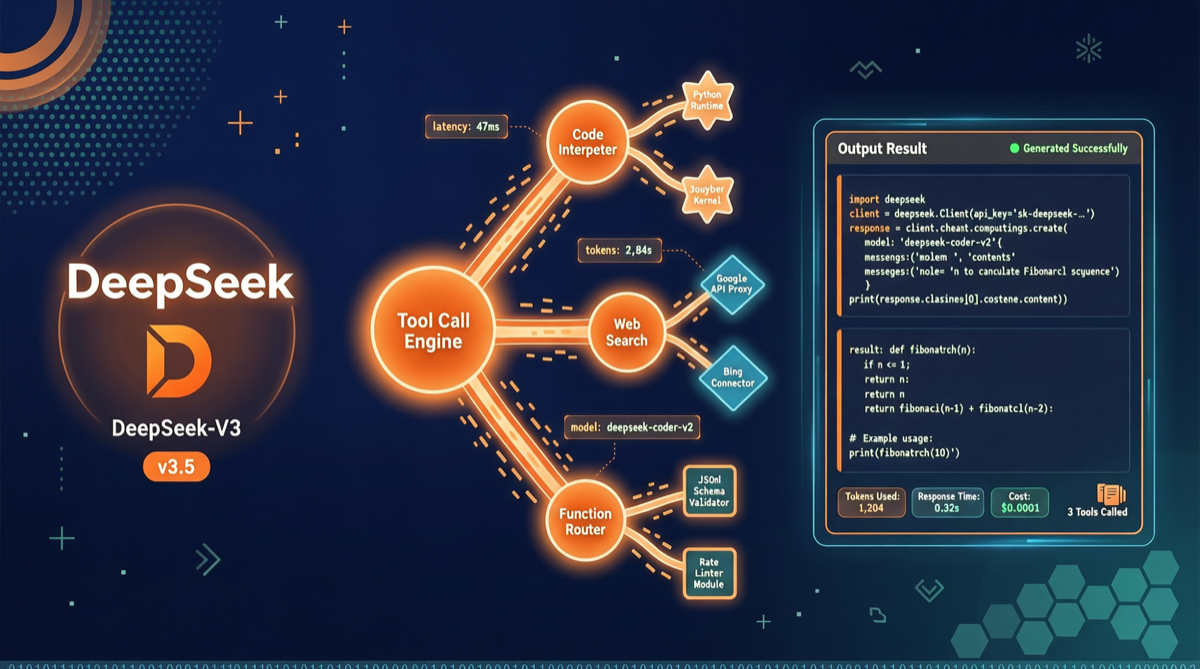

Key capabilities natively supported by V4 Flash:

- Reasoning mode: Extended (deep) reasoning before answering

- 1M context: Million-token context window

- 384K output: Ultra-long output support

- JSON Output: Structured data output

- Tool Calls: Native tool calling support

- Chat prefix completion: The model continues from a supplied prefix of the assistant message

- FIM (fill-in-the-middle) completion: Suited to code completion
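To make the Tool Calls and JSON Output items concrete, here is a minimal sketch of declaring a tool and executing a tool call the model sends back. It assumes an OpenAI-compatible chat-completions tool format. The model id `deepseek-v4-flash` is a placeholder, the `convert_epub_to_txt` tool is hypothetical, and no network request is made.

```python
import json

MODEL_ID = "deepseek-v4-flash"  # placeholder; check the provider's model list

# Tool declaration in the OpenAI-compatible "tools" format (assumed here).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "convert_epub_to_txt",  # hypothetical tool
        "description": "Convert an EPUB file to plain text.",
        "parameters": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
}]


def build_request(user_prompt: str) -> dict:
    """Build the request body; sending it is left to the HTTP client or SDK."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": user_prompt}],
        "tools": TOOLS,
    }


def convert_epub_to_txt(path: str) -> str:
    # Stub: only derives the output filename, no real conversion.
    return path.rsplit(".", 1)[0] + ".txt"


REGISTRY = {"convert_epub_to_txt": convert_epub_to_txt}


def dispatch(tool_call: dict) -> dict:
    """Execute one tool call and wrap the outcome as a `tool` message.

    Errors are returned as content rather than raised, so the model can
    see what went wrong and issue a corrected call.
    """
    function = tool_call["function"]
    name = function["name"]
    try:
        args = json.loads(function["arguments"])  # arguments arrive as a JSON string
        if name not in REGISTRY:
            raise ValueError(f"unknown tool: {name}")
        content = {"ok": True, "result": REGISTRY[name](**args)}
    except (ValueError, TypeError) as exc:  # JSONDecodeError is a ValueError
        content = {"ok": False, "error": str(exc)}
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(content),
    }
```

Returning failures as a tool message rather than raising is a design choice: it gives the model the error text it needs to correct itself and continue.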

Cost Comparison with Competitors

Among current Chinese models, V4 Flash’s pricing is among the lowest:

| Model | Input price (per 1M tokens) | Output price (per 1M tokens) | Tool Calling |
|---|---|---|---|
| DeepSeek V4 Flash | ¥0.5-1 | ¥1-2 | ✅ Native |
| Qwen3.6-Plus | ¥1-2 | ¥3-5 | ✅ |
| GLM-5 | ¥2-3 | ¥4-6 | ✅ |
| Kimi K2 | ¥1-2 | ¥3-4 | ✅ |

V4 Flash’s input price is roughly 1/2 to 1/3 of comparable products. For Agent scenarios requiring massive context processing, this cost difference amplifies dramatically at scale.
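To put the "at scale" point in numbers, the sketch below turns the price ranges from the table into a low/high cost estimate for a given token volume. The monthly workload (500M input tokens, 50M output tokens) is a hypothetical example, and the prices are the approximate ranges quoted above, not official rate cards.

```python
# (input_low, input_high), (output_low, output_high) in ¥ per 1M tokens,
# copied from the comparison table above.
PRICES = {
    "DeepSeek V4 Flash": ((0.5, 1.0), (1.0, 2.0)),
    "Qwen3.6-Plus": ((1.0, 2.0), (3.0, 5.0)),
    "GLM-5": ((2.0, 3.0), (4.0, 6.0)),
    "Kimi K2": ((1.0, 2.0), (3.0, 4.0)),
}


def cost_range(model: str, input_tokens: int, output_tokens: int) -> tuple:
    """Return (low, high) cost in ¥ for the given token volumes."""
    (in_low, in_high), (out_low, out_high) = PRICES[model]
    millions_in = input_tokens / 1_000_000
    millions_out = output_tokens / 1_000_000
    low = millions_in * in_low + millions_out * out_low
    high = millions_in * in_high + millions_out * out_high
    return low, high


if __name__ == "__main__":
    # Hypothetical monthly agent workload: context-heavy, modest output.
    monthly_input, monthly_output = 500_000_000, 50_000_000
    for model in PRICES:
        low, high = cost_range(model, monthly_input, monthly_output)
        print(f"{model}: ¥{low:,.0f} to ¥{high:,.0f} per month")
```

For this example workload, V4 Flash comes to ¥300 to ¥600 per month against ¥1,200 to ¥1,800 for GLM-5; the gap grows linearly with token volume.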

Community Ecosystem: Skill Systems Emerging

Since V4’s launch, V4-based Skill applications have started to appear in the community. One user built a full metaphysics analysis workflow using V4 with Liuyao prompts, drawing 75,000+ views and 200+ likes. This suggests V4’s tool calling is capable enough for complex vertical-domain applications.

Action Recommendations

Scenarios suited for V4 Flash:

- Agent systems requiring high-frequency API calls

- Multi-step tool calling workflows (file processing, data scraping, content analysis)

- Cost-sensitive production environments

- Long document analysis requiring million-token context

Scenarios where V4 Pro is still recommended:

- Financial/medical decisions requiring extremely high accuracy

- Complex code generation and debugging

- Research scenarios requiring the strongest reasoning capabilities

Bottom line: DeepSeek V4 Flash is not a victory in the parameter race — it is a victory of engineering pragmatism. It turned tool calling from “usable” to “good,” while pushing costs down to a level that makes competitors anxious.