Bottom Line

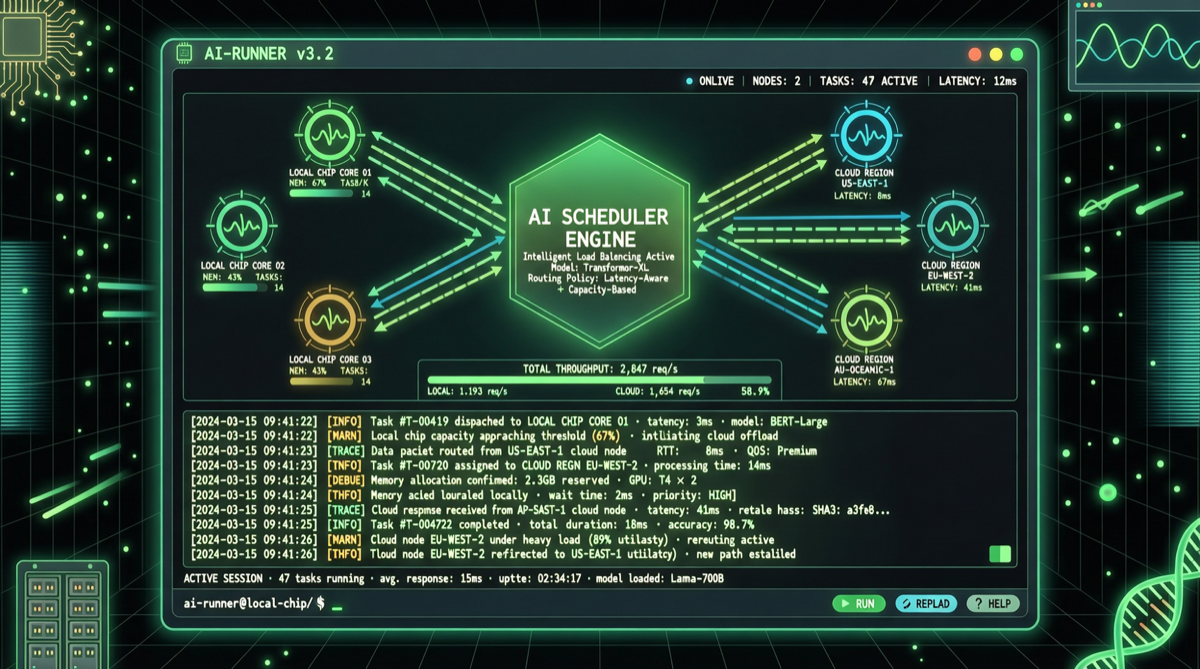

Google released Gemini CLI v0.40.0 in late April, adding intelligent routing support for local Gemma models. More than a feature update, this amounts to a hybrid local and cloud architecture: simple tasks are handled by a local Gemma model at no cost, while complex tasks are routed automatically to the paid cloud Gemini API.

Architecture

    User Input → Task Complexity Assessment
                              ↓
                     ┌────────┴────────┐
                Simple Task       Complex Task
                     ↓                 ↓
                Local Gemma       Cloud Gemini
           (Free, low latency)  (More capable)

Experience Impact:

| Scenario | Path | Cost | Latency |
|---|---|---|---|
| File read/search | Local Gemma | Free | < 1s |
| Simple code completion | Local Gemma | Free | < 1s |
| Complex code refactoring | Cloud Gemini | API cost | 2-5s |
| Multi-step reasoning | Cloud Gemini | API cost | 5-10s |
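The routing decision in the table above can be sketched in a few lines of Python. This is an illustrative sketch only, not Gemini CLI's actual implementation: the task labels, the `Route` type, and the `route_task` function are hypothetical names, and the rule of sending unknown tasks to the cloud is an assumption.

```python
from dataclasses import dataclass

# Hypothetical task labels, mirroring the "Local Gemma" rows of the table.
LOCAL_TASKS = {"file_read", "file_search", "simple_completion"}


@dataclass
class Route:
    backend: str  # "local-gemma" or "cloud-gemini"
    billed: bool  # True when the request incurs API cost


def route_task(task_type: str, local_available: bool = True) -> Route:
    """Send simple tasks to the local model and everything else to the cloud."""
    if task_type in LOCAL_TASKS and local_available:
        return Route(backend="local-gemma", billed=False)
    # Assumption: unknown or complex task types default to the cloud model,
    # since misrouting a hard task locally costs more than one API call.
    return Route(backend="cloud-gemini", billed=True)


if __name__ == "__main__":
    for task in ("file_read", "refactoring", "multi_step_reasoning"):
        print(task, "->", route_task(task))
```

A real router would need a way to assess complexity from the prompt itself. The fixed label set here only stands in for that step.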

Why This Matters

1. Cost Structure: For daily CLI users, most interactions are simple tasks. Handling them on local Gemma could reduce cloud API calls by 60-80%.

2. Privacy: For tasks handled locally, code and file contents never leave the developer’s machine. Only requests routed to cloud Gemini are sent over the network.

3. Offline Capability: Basic AI assistance works without an internet connection.
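To make the cost claim concrete, here is a rough back-of-the-envelope calculation. The request volume and per-request price are hypothetical placeholders, not published pricing, and `cloud_cost` is an illustrative helper. It also assumes every cloud request costs the same.

```python
def cloud_cost(total_requests: int, local_share: float,
               cost_per_cloud_request: float) -> float:
    """Estimate cloud spend when a share of requests is served locally."""
    if not 0.0 <= local_share <= 1.0:
        raise ValueError("local_share must be between 0 and 1")
    cloud_requests = total_requests * (1.0 - local_share)
    return cloud_requests * cost_per_cloud_request


if __name__ == "__main__":
    requests_per_month = 1000   # hypothetical usage
    price = 0.01                # hypothetical cost per cloud request
    for share in (0.0, 0.6, 0.8):
        cost = cloud_cost(requests_per_month, share, price)
        print(f"local share {share:.0%}: cloud cost {cost:.2f}")
```

Under these assumptions the bill falls from 10.00 to between 2.00 and 4.00. In practice the requests that remain on the cloud are the complex ones, which tend to consume more tokens, so the saving in spend may be smaller than the reduction in call count.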

Comparison

| Tool | Local Model Support | Smart Routing | Free Tier | Open Source |
|---|---|---|---|---|
| Gemini CLI v0.40 | ✅ Gemma | ✅ Auto routing | Local free | ✅ |
| Claude Code | ❌ | ❌ | Limited | ❌ |
| Cursor | Partial | ❌ | Limited | ❌ |
| OpenClaw | ✅ Fully local | ✅ Configurable | Fully free | ✅ |

Getting Started

    npm install -g @google/gemini-cli
    gemini setup-local-model
    gemini config set routing-strategy auto
    gemini status

Hardware Requirements

| Model | RAM/VRAM | Recommended |
|---|---|---|
| Gemma 3 1B | 2GB RAM | Any modern laptop |
| Gemma 3 4B | 4GB RAM | 8GB+ RAM device |
| Gemma 3 12B | 12GB VRAM | Desktop with GPU |
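The table can be turned into a simple selection rule: pick the largest model whose memory requirement fits the machine. The sketch below uses only the minimums listed above. `pick_model` is a hypothetical helper, not part of Gemini CLI, and it ignores the headroom the "Recommended" column suggests.

```python
from typing import Optional

# (model name, memory needed in GB, memory kind), largest first,
# taken from the hardware table above.
GEMMA_MODELS = [
    ("Gemma 3 12B", 12, "vram"),
    ("Gemma 3 4B", 4, "ram"),
    ("Gemma 3 1B", 2, "ram"),
]


def pick_model(ram_gb: float, vram_gb: float = 0.0) -> Optional[str]:
    """Return the largest listed model that fits, or None if none fits."""
    for name, needed_gb, kind in GEMMA_MODELS:
        available = vram_gb if kind == "vram" else ram_gb
        if available >= needed_gb:
            return name
    return None


if __name__ == "__main__":
    print(pick_model(ram_gb=8))               # laptop without a discrete GPU
    print(pick_model(ram_gb=32, vram_gb=12))  # desktop with a 12GB GPU
```

A stricter version could require the recommended headroom, for example 8GB of RAM before choosing the 4B model.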

Action Items

- Heavy CLI users: Upgrade to v0.40 immediately for significant API cost reduction.

- Privacy-sensitive teams: Use local Gemma for all daily tasks, cloud only for deep analysis.

- Tool evaluators: Include smart routing as an evaluation criterion. Going forward, tools will increasingly compete on whose routing strategy saves the most money.