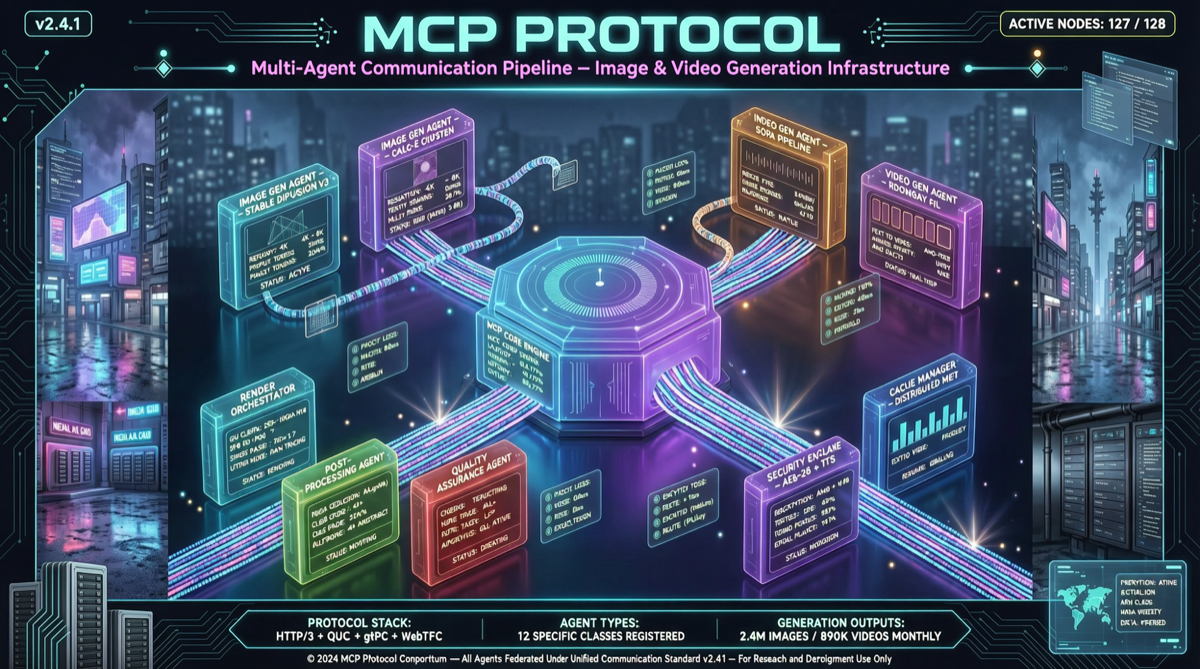

On April 28, Higgsfield officially released its MCP (Model Context Protocol) server. With it, AI agents can call leading image and video generation models directly, without a human having to switch tools manually.

The Problem

The current AI agent ecosystem has a structural gap: coding agents such as Claude Code and Codex excel at text and code, but generating visual content forces them out of their workflow and into a separate tool. Higgsfield MCP closes this gap by exposing generation models as tools the agent can call itself.

Core Capabilities

Higgsfield MCP provides agent-side access to:

- Seedance 2.0: Video generation

- GPT Image 2.0: Image generation

- Other top image and video models
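Under the hood, an agent reaches these models through MCP's standard tool mechanism: it discovers the available tools with a `tools/list` request, then invokes one with `tools/call`, both sent as JSON-RPC 2.0 messages over the chosen transport. The sketch below only builds those request payloads. The tool name `generate_video` and its `model`/`prompt` arguments are hypothetical placeholders, since Higgsfield's actual tool schema is not described here; a real agent would read the names and argument schemas from the `tools/list` response.

```python
import json
from itertools import count

# Monotonic JSON-RPC request ids; MCP requires a requester not to reuse an id within a session.
_ids = count(1)


def tools_list_request() -> dict:
    """Build an MCP `tools/list` request, used to discover which tools a server offers."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": "tools/list"}


def tools_call_request(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request that invokes one tool with the given arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    print(json.dumps(tools_list_request()))
    # Hypothetical tool name and arguments, for illustration only.
    request = tools_call_request(
        "generate_video",
        {"model": "seedance-2.0", "prompt": "A paper boat drifting down a rainy street"},
    )
    print(json.dumps(request, indent=2))
```

Discovering tools at runtime instead of hard-coding them is what lets one agent work against any MCP server, including new image and video backends.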

Compatibility

Supported agent frameworks: OpenClaw, Hermes Agent, NemoClaw, and any MCP-compatible client.
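At a minimum, an MCP-compatible client performs the protocol's lifecycle handshake: it sends an `initialize` request announcing its protocol revision, capabilities, and identity, and once the server responds, it sends a `notifications/initialized` notification. The sketch below builds those two messages. The pinned revision string `2025-03-26` and the client name `example-agent` are assumptions for illustration; a real client negotiates the revision with the server.

```python
import json

# Assumed protocol revision; use whichever revision both client and server support.
PROTOCOL_VERSION = "2025-03-26"


def initialize_request(client_name: str, client_version: str, request_id: int = 1) -> dict:
    """First message an MCP client sends: its protocol revision, capabilities, and identity."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},  # this sketch advertises no optional client features
            "clientInfo": {"name": client_name, "version": client_version},
        },
    }


def initialized_notification() -> dict:
    """Sent after the server's initialize response; notifications never carry an id."""
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


if __name__ == "__main__":
    # "example-agent" is a hypothetical client name.
    print(json.dumps(initialize_request("example-agent", "0.1.0"), indent=2))
    print(json.dumps(initialized_notification()))
```

Because every framework speaks this same handshake, the server does not need framework-specific integrations.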

Quick Start

# Install Higgsfield MCP Server

# Refer to Higgsfield official installation docs

# Configure MCP in Claude Desktop

# Add Higgsfield MCP endpoint

Community feedback indicates that going from installation to the first generated image or video takes only minutes. Fast generation with no queue is cited as a key advantage.
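The Quick Start steps above are placeholders, so here is a minimal sketch of what adding an endpoint typically looks like for Claude Desktop, which reads MCP servers from the `mcpServers` key of its `claude_desktop_config.json`. It assumes Higgsfield exposes a remote HTTP endpoint and bridges it through the community `mcp-remote` npm package; the URL is a placeholder, not Higgsfield's real address. Check Higgsfield's official installation docs for the actual endpoint and any authentication step.

```python
import json


def add_mcp_server(config: dict, name: str, url: str) -> dict:
    """Return a copy of a Claude Desktop config with one remote MCP server added.

    Assumes the server is reached via the `mcp-remote` npm bridge launched with npx.
    """
    updated = dict(config)
    servers = dict(updated.get("mcpServers", {}))  # keep servers that are already configured
    servers[name] = {"command": "npx", "args": ["-y", "mcp-remote", url]}
    updated["mcpServers"] = servers
    return updated


if __name__ == "__main__":
    existing = {
        "mcpServers": {
            "filesystem": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-filesystem", "/tmp"],
            }
        }
    }
    # Placeholder URL (hypothetical): substitute the endpoint from Higgsfield's docs.
    higgsfield_url = "https://example.invalid/higgsfield/mcp"
    print(json.dumps(add_mcp_server(existing, "higgsfield", higgsfield_url), indent=2))
```

Returning a new dict rather than mutating the loaded config makes it easy to diff the result before writing it back to disk; Claude Desktop must be restarted to pick up the change.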

Industry Significance

MCP is becoming the de facto standard for connecting AI systems to external tools. Anthropic reports 97 million monthly MCP SDK downloads, a 970x increase over 18 months, and more than 17,000 active servers. Higgsfield's entry extends MCP's reach from text and code tools to multimodal generation.
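To put the reported figures in perspective, a 970x increase over 18 months implies a particular starting point and monthly growth rate. The short calculation below takes the article's numbers at face value and assumes smooth compound growth, which real download curves rarely follow.

```python
# Figures as reported in the article (taken at face value, not independently verified).
current_monthly_downloads = 97_000_000
growth_multiple = 970
months = 18

# Implied starting volume: current volume divided by the growth multiple.
baseline = current_monthly_downloads / growth_multiple

# Compound monthly growth rate r such that (1 + r) ** months == growth_multiple.
monthly_rate = growth_multiple ** (1 / months) - 1

print(f"Implied baseline: {baseline:,.0f} downloads/month")
print(f"Implied compound growth: {monthly_rate:.1%} per month")
```

Under these assumptions, the numbers correspond to a starting point of about 100,000 monthly downloads and roughly 46.5% compound growth per month.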