Conclusion

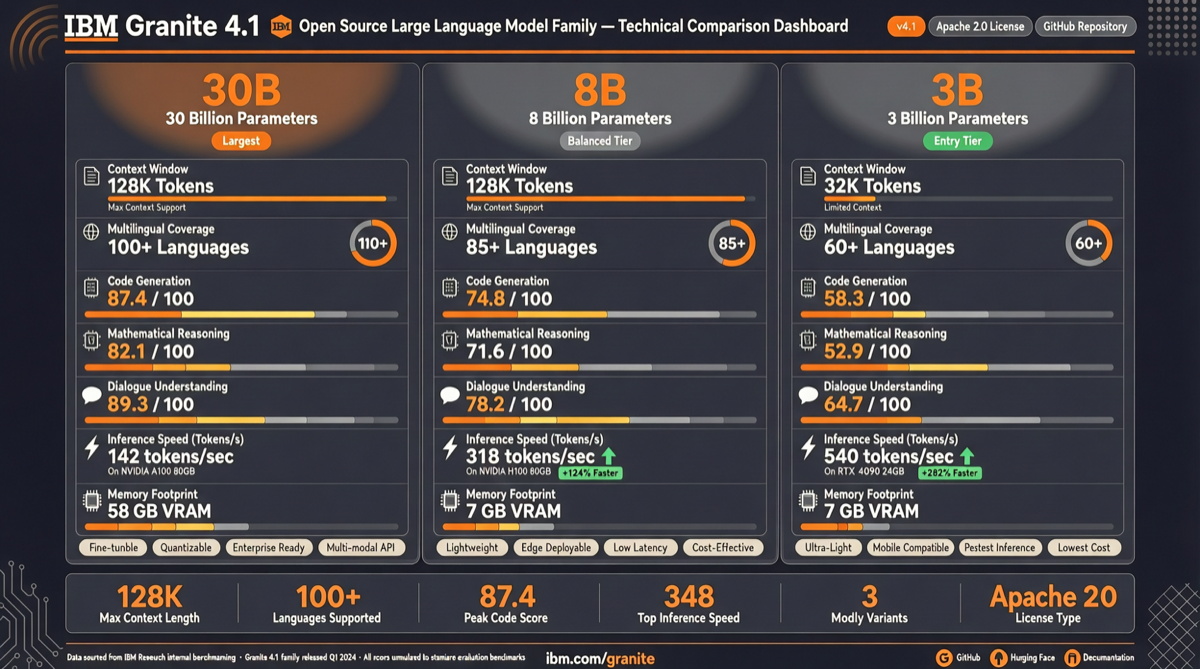

IBM released the Granite 4.1 series on April 29, 2026. It comprises three non-reasoning models at 30B, 8B, and 3B parameters, all under the Apache 2.0 license. On the Artificial Analysis Intelligence Index, the 30B model scores 15, the 8B scores 12, and the 3B scores 9, which places them at the level of Qwen3 and Gemma3 among open-source models.

The standout feature is token efficiency: compared to peer non-reasoning models, Granite 4.1 completes the same tasks with fewer tokens. The 8B version achieves the best balance between token efficiency and intelligence.

Test Dimensions

Intelligence Index Benchmarking

| Model | Parameters | Artificial Analysis Intelligence Index score |
|---|---|---|
| Granite 4.1-30B | 30B | 15 |
| Granite 4.1-8B | 8B | 12 |
| Granite 4.1-3B | 3B | 9 |

The 30B version’s score of 15 reaches the level of mainstream mid-size open-source models. The 8B version’s score of 12 places it in the first tier among small models.

Token Efficiency

The Granite 4.1 series excels in token efficiency. Compared to same-class non-reasoning models, it uses fewer tokens to complete the same tasks. This translates to lower inference costs and faster responses in real-world deployment.
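The link between token count and cost can be made concrete with a little arithmetic. The sketch below is only an illustration: the price and the per-task token counts are made-up numbers, not measurements of Granite 4.1 or any other model, and `cost_per_task` is a hypothetical helper.

```python
def cost_per_task(output_tokens: int, usd_per_million_tokens: float) -> float:
    """Output-token cost of a single task, in USD."""
    return output_tokens * usd_per_million_tokens / 1_000_000


# Hypothetical figures for illustration only (assumptions, not benchmark data).
PRICE_USD_PER_MILLION = 0.50   # assumed price per million output tokens
VERBOSE_TOKENS = 1_200         # assumed tokens used by a more verbose model
CONCISE_TOKENS = 800           # assumed tokens used by a more concise model

verbose_cost = cost_per_task(VERBOSE_TOKENS, PRICE_USD_PER_MILLION)
concise_cost = cost_per_task(CONCISE_TOKENS, PRICE_USD_PER_MILLION)

# Relative saving depends only on the token ratio when the price is equal.
savings = 1 - concise_cost / verbose_cost
print(f"verbose: ${verbose_cost:.6f}  concise: ${concise_cost:.6f}  saving: {savings:.0%}")
```

At an equal price per token, the saving equals the reduction in tokens, so a model that needs one third fewer tokens for the same task costs one third less to run on it. Latency scales in a similar way, since generation time grows with the number of output tokens.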

The 8B version achieves the best balance in the “token efficiency vs. intelligence” trade-off, making it ideal for scenarios that need to balance performance and cost.

Coding Capability and FIM Support

Granite 4.1 supports FIM (Fill-In-the-Middle), a core capability for code completion. Given the code before and after the cursor, the model generates the missing span in between, which makes it suitable for IDE integration and coding assistants.
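A FIM request is usually built by wrapping the code before and after the cursor in special sentinel tokens. The sketch below shows the common prefix-suffix-middle layout. The sentinel strings are placeholders chosen for illustration, not confirmed Granite 4.1 tokens, and `FimTokens` and `build_fim_prompt` are hypothetical names; check the model's tokenizer or model card for the real special tokens before using this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FimTokens:
    # Placeholder sentinels (assumption): replace with the model's actual special tokens.
    prefix: str = "<fim_prefix>"
    suffix: str = "<fim_suffix>"
    middle: str = "<fim_middle>"


def build_fim_prompt(before: str, after: str, tokens: FimTokens = FimTokens()) -> str:
    """Arrange the code around the cursor in prefix-suffix-middle order.

    The model is expected to continue after the final sentinel with the
    text that belongs between `before` and `after`.
    """
    return f"{tokens.prefix}{before}{tokens.suffix}{after}{tokens.middle}"


# Code on either side of the cursor; the gap is the expression to return.
before_cursor = "def add(a, b):\n    return "
after_cursor = "\n\nprint(add(1, 2))\n"

prompt = build_fim_prompt(before_cursor, after_cursor)
print(prompt)
```

The resulting string would be sent to the model as a plain completion prompt, and the generated text inserted at the cursor position. Keeping the sentinels in a small configuration object makes it easy to swap in the correct tokens for a given model.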

The Apache 2.0 license permits commercial use, modification, and redistribution without royalties, provided the license text and attribution notices are retained. This is especially important for enterprise scenarios that require local deployment and strict data privacy.

Deployment Friendliness

The 3B version suits edge devices and low-power scenarios, the 8B version works for single-GPU deployment, and the 30B version fits production environments needing higher intelligence. The three versions cover the full deployment spectrum from edge to data center.
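A rough way to sanity-check this mapping is to estimate the memory needed for the weights alone at different precisions. The sketch below assumes the nominal parameter counts from the model names (3B, 8B, 30B); real checkpoints may differ slightly, and the figures exclude the KV cache, activations, and runtime overhead. `weight_memory_gb` is a hypothetical helper.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, dtype: str = "fp16") -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * BYTES_PER_PARAM[dtype]


# Nominal sizes taken from the model names (assumption).
for name, size in [("3B", 3), ("8B", 8), ("30B", 30)]:
    estimates = {dtype: weight_memory_gb(size, dtype) for dtype in BYTES_PER_PARAM}
    print(name, estimates)
```

Under these assumptions the 8B weights need about 16 GB at 16-bit precision and about 4 GB at 4-bit, while the 30B weights need about 60 GB at 16-bit, which is why quantization or multiple GPUs are often considered for the largest model.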

Weights & Biases Inference provides Day-0 support, enabling direct inference testing and observation on the W&B platform.

Selection Guidance

- Enterprise commercial/private deployment: the full Granite 4.1 series is under Apache 2.0 with no commercial restrictions, making it the first choice for enterprises in the IBM ecosystem

- Code completion/IDE integration: the 8B version with FIM support offers the best balance of efficiency and intelligence

- Edge/low-resource scenarios: the 3B version fits resource-constrained environments, and its Intelligence Index score of 9 is enough for basic tasks

- Cost-effectiveness seekers: the 8B version’s token efficiency means more completed work at the same cost