The Event

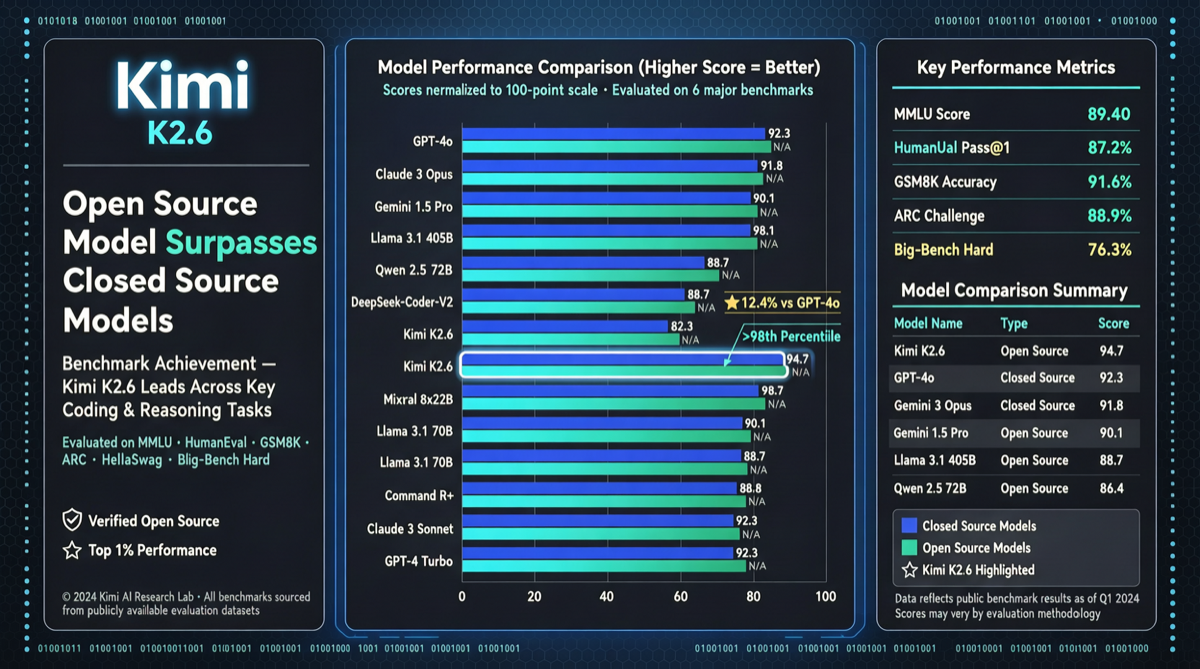

In early May 2026, Moonshot AI released the latest benchmark results for Kimi K2.6, an open-source model that, according to those results, outperforms the strongest closed-source models on three core benchmarks.

Key Data:

- SWE-Bench Pro: Kimi K2.6 scored 58.6%, surpassing GPT-5.4’s 57.7% and also exceeding Claude Opus 4.6

- HLE with tools: Ranked first

- BrowseComp: Outperformed Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro

- Cost: Approximately $0.80 per inference run, roughly 1/30th the price of Claude Opus 4.6 ($25 per million tokens; the two figures are quoted in different units, so the ratio is approximate)

- Parallel capacity: Supports 300 agents running simultaneously

- Release plan: Open weights scheduled for release in June

Background

Kimi K2.6’s positioning is crystal clear: it focuses on coding and autonomous execution. The official description calls it “coding-driven, built for sustained autonomous execution,” specifically optimized for:

- Long-horizon software engineering tasks

- Swarm-based task orchestration

- Iterative development

Kimi-K2 and Qwen3-Coder-Next are both on Hugging Face Trending at the same time, a sign that competition among open-source coding models has entered a white-hot phase.

Signal Analysis

1. A Historic Breakthrough in Price-Performance Ratio

This marks the first time an open-source model has beaten top closed-source models across core coding benchmarks, while costing more than an order of magnitude less (roughly 30x). For AI agent developers, this means code generation and repair pipelines can be deployed at scale for a fraction of the previous cost.
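A quick way to check the size of that gap is to compute the price ratio and its base-10 logarithm from the two figures in the Key Data list. This is a minimal sketch that assumes, for illustration only, that the $0.80 and $25 figures are comparable; as noted above, they are quoted in different units.

```python
import math

# Figures quoted in the Key Data list. Assumption: treated as comparable,
# even though one is per inference run and the other per million tokens.
KIMI_K26_COST = 0.80
CLAUDE_OPUS_46_COST = 25.00


def cost_gap(cheap: float, expensive: float) -> tuple[float, float]:
    """Return (price ratio, gap in orders of magnitude = log10 of the ratio)."""
    ratio = expensive / cheap
    return ratio, math.log10(ratio)


ratio, orders = cost_gap(KIMI_K26_COST, CLAUDE_OPUS_46_COST)
print(f"price ratio: {ratio:.2f}x, orders of magnitude: {orders:.2f}")
```

The ratio works out to about 31x, which is roughly 1.5 orders of magnitude: comfortably more than one, but short of two (which would require a 100x gap).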

2. Architectural Advantage of Multi-Agent Parallelism

The ability to run 300 agents in parallel is Kimi K2.6’s key differentiator. One community-reported example: a user built a database of all AI data centers in the US in a single evening with Kimi K2.6’s multi-agent system. The result had 1,500 rows; each agent handled a different region, and all sources were cross-validated.
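The fan-out/fan-in pattern behind that example can be sketched with Python’s `asyncio`. This is a minimal illustration under stated assumptions, not Kimi’s actual orchestration API: `run_agent` is a hypothetical stand-in for a model call, its rows and source names are made up, and “cross-validated” is interpreted here as “reported by at least two distinct sources.” An `asyncio.Semaphore` caps concurrency at the reported 300 agents.

```python
import asyncio
from collections import defaultdict

MAX_PARALLEL_AGENTS = 300  # parallel capacity reported for Kimi K2.6


async def run_agent(region: str) -> list[dict]:
    """Hypothetical stand-in for one agent researching a single region.

    A real pipeline would call a model API here; this returns canned rows
    tagged with two made-up sources so the merge step has something to check.
    """
    await asyncio.sleep(0)  # placeholder for network I/O
    row = {"region": region, "site": f"{region}-dc-1"}
    return [dict(row, source="source_a"), dict(row, source="source_b")]


def cross_validate(rows: list[dict], min_sources: int = 2) -> list[dict]:
    """Keep only (region, site) records confirmed by >= min_sources distinct sources."""
    sources: dict[tuple[str, str], set[str]] = defaultdict(set)
    for r in rows:
        sources[(r["region"], r["site"])].add(r["source"])
    return [
        {"region": region, "site": site}
        for (region, site), srcs in sources.items()
        if len(srcs) >= min_sources
    ]


async def build_database(regions: list[str]) -> list[dict]:
    sem = asyncio.Semaphore(MAX_PARALLEL_AGENTS)  # cap concurrent agents

    async def bounded(region: str) -> list[dict]:
        async with sem:
            return await run_agent(region)

    # Fan out: one agent per region, all scheduled concurrently.
    results = await asyncio.gather(*(bounded(r) for r in regions))
    # Fan in: flatten every agent's rows, then cross-validate.
    all_rows = [row for batch in results for row in batch]
    return cross_validate(all_rows)


if __name__ == "__main__":
    db = asyncio.run(build_database([f"region-{i}" for i in range(50)]))
    print(len(db), "cross-validated rows")
```

Splitting the work by region keeps each agent’s context small and independent, which is what makes a wide fan-out practical; the single merge step at the end is where conflicting or unconfirmed rows get dropped.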

3. Trade-offs

Kimi K2.6 does have a notable weakness: speed. Community feedback puts its inference speed at roughly 20 tokens/second, significantly slower than Claude Opus 4.7 and GPT-5.5. That hurts the experience in latency-sensitive, interactive scenarios. For autonomous agent execution, where no one is waiting on each individual reply, the speed disadvantage matters less.
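To make the 20 tokens/second figure concrete, the sketch below converts output length into wall-clock generation time. It assumes a constant decode rate and ignores time-to-first-token and network overhead, so real latencies would be somewhat higher.

```python
def generation_time_s(output_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to stream `output_tokens` at a constant decode rate."""
    return output_tokens / tokens_per_second


# ~20 tokens/s is the community-reported decode speed for Kimi K2.6.
KIMI_K26_TOKENS_PER_S = 20.0

for tokens in (200, 2_000, 20_000):
    seconds = generation_time_s(tokens, KIMI_K26_TOKENS_PER_S)
    print(f"{tokens:>6} tokens: {seconds:7.1f} s")
```

A short 200-token answer takes about 10 seconds, and a 2,000-token code patch about 100 seconds: tolerable for a background agent, but a long wait for someone sitting in a chat window.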

Practical Recommendations

- Agent developers: If your agent pipeline involves heavy code generation or repair and latency isn’t critical, Kimi K2.6 currently offers the best value

- Enterprise users: Watch for local deployment options once the open weights are released in June. Combined with Kimi’s multi-agent parallelism, they could support large-scale automated software engineering systems

- Cost-sensitive scenarios: For edge deployment and batch code tasks, Kimi K2.6’s $0.80 pricing makes it the most economical choice among the models compared
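The recommendations above amount to a simple routing rule: send latency-sensitive work to a faster model, and send batch or agentic code work to the cheaper, slower one. The sketch below expresses that rule; the task categories and the model identifiers (`"kimi-k2.6"`, `"fast-closed-model"`, `"default-model"`) are hypothetical placeholders, not real API model names.

```python
from dataclasses import dataclass

# Hypothetical task categories for which the cheaper, slower model is preferred.
CODE_TASKS = {"code_gen", "code_repair"}


@dataclass
class Task:
    kind: str                # e.g. "code_gen", "code_repair", "chat" (illustrative)
    latency_sensitive: bool  # is a human waiting on the reply?


def pick_model(task: Task) -> str:
    """Route a task per the recommendations; model names are placeholders."""
    if task.latency_sensitive:
        return "fast-closed-model"  # ~20 tokens/s is too slow for interactive use
    if task.kind in CODE_TASKS:
        return "kimi-k2.6"          # cheap, strong on coding, speed matters less
    return "default-model"


print(pick_model(Task("code_repair", latency_sensitive=False)))
```

Checking latency first reflects the trade-off discussed earlier: even for coding tasks, a human waiting on the reply outweighs the cost savings.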

Cross-Verification

This intelligence has been cross-verified against:

- Multiple independent X/Twitter accounts publishing benchmark data and real-world usage reports (main post with 2,150+ likes)

- Spanish- and German-language community discussions confirming that the benchmark data is consistent

- IQS search brief corroborating the “open-source small models catching up to large models” trend