Core Conclusion

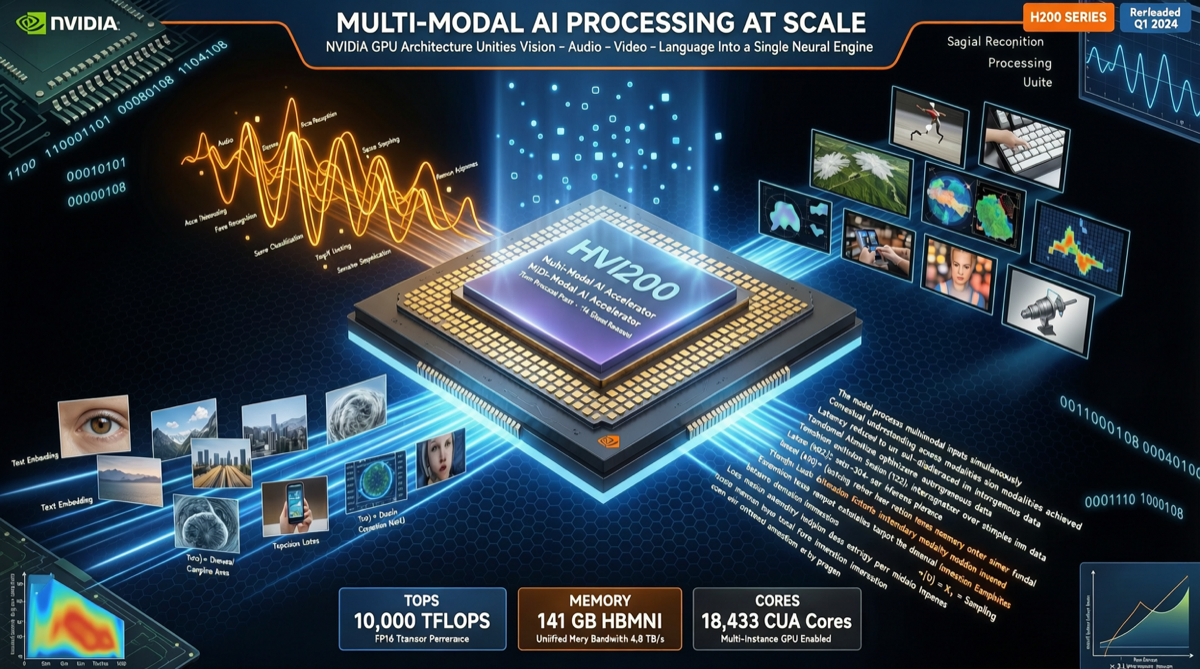

NVIDIA’s Nemotron 3 Nano Omni is not another “does everything” model. It is designed specifically as a lightweight multimodal model for the agent perception layer.

Key specs:

- 30B parameters, hybrid MoE architecture

- Image + audio + video + text unified inference

- Supported by SGLang, with one-command deployment via a Canonical Ubuntu snap

- Positioning: “Eyes and ears” for agents, not a general conversation model

Why a Dedicated Perception Model is Needed

Current agent systems face an architectural problem:

    Traditional approach:                 Nemotron approach:

    ┌──────────┐                          ┌──────────────────┐
    │ Vision   │──→ Context               │ Nemotron Omni    │
    │ model    │    fragmentation         │ Unified inference│
    ├──────────┤                          │ loop             │
    │ Audio    │──→ High latency          │ Image+audio+video│
    │ model    │                          │ +text            │
    ├──────────┤                          └──────────────────┘
    │ Text     │──→ Context switching              ↓
    │ model    │    overhead              Unified context → Agent
    └──────────┘

Nemotron 3 Nano Omni solves all these with a single model.
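To make the contrast concrete, the sketch below packs several modalities into one request message instead of routing each input to a separate model. It assumes an OpenAI-style chat message whose `content` is a list of typed parts. The part types (`image_url`, `audio_url`), the helper `build_unified_message`, and the example URLs are illustrative assumptions, not a documented Nemotron schema.

```python
from typing import Optional


def build_unified_message(text: str,
                          image_url: Optional[str] = None,
                          audio_url: Optional[str] = None) -> dict:
    """Pack all available modalities into ONE user message.

    The part types follow the OpenAI-style "content parts" convention;
    whether a given server accepts exactly these keys is an assumption.
    """
    parts = [{"type": "text", "text": text}]
    if image_url is not None:
        parts.append({"type": "image_url", "image_url": {"url": image_url}})
    if audio_url is not None:
        parts.append({"type": "audio_url", "audio_url": {"url": audio_url}})
    return {"role": "user", "content": parts}


message = build_unified_message(
    "What is happening in this clip?",
    image_url="https://example.com/frame.png",  # placeholder URL
    audio_url="https://example.com/clip.wav",   # placeholder URL
)
print([part["type"] for part in message["content"]])
```

Because every input travels in the same message, the model sees one shared context rather than three outputs that the agent has to stitch together afterwards.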

Technical Specifications

| Dimension | Specification |
|---|---|
| Parameters | 30B (hybrid MoE) |
| Modalities | Image, audio, video, text |
| Inference Framework | SGLang (supported) |
| Deployment | Ubuntu snap single-command |
| Positioning | Agent perception layer (not general chat) |

Getting Started

Method 1: Ubuntu Snap (Recommended)

Canonical and NVIDIA collaborated on an inference snap:

    # One-command deployment
    sudo snap install nemotron-omni

    # Start the inference service
    nemotron-omni.start

    # Verify
    curl http://localhost:8080/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d '{"model": "nemotron-3-nano-omni", "messages": [...]}'

From install to a running service, no complex dependency management, CUDA configuration, or Docker orchestration is needed.
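The same check can be scripted. The sketch below is a Python equivalent of the `curl` call above, using only the standard library. The endpoint and model name are taken from that command; the helper names, the prompt, and the assumption that the response follows the OpenAI chat-completions shape (`choices[0].message.content`) are illustrative.

```python
import json
import urllib.request

ENDPOINT = "http://localhost:8080/v1/chat/completions"  # from the curl example
MODEL = "nemotron-3-nano-omni"                          # from the curl example


def build_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request with a single text message."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, timeout: float = 60.0) -> str:
    """Send the request and return the reply text.

    Assumes an OpenAI-style response body; adjust if the service differs.
    """
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Example (requires the service to be running):
#   print(ask("Reply with the single word: ready"))
```

Keeping request construction separate from sending makes the payload easy to inspect before the service is available.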

Method 2: SGLang

    python -m sglang.launch_server \
      --model-path nvidia/nemotron-3-nano-omni \
      --port 30000

Method 3: llama.cpp

Nemotron 3 Nano Omni can also run on CPU via llama.cpp, with reduced performance, which makes it an option for resource-constrained environments.
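Once a server is up, sending it an image is mostly a matter of encoding. The sketch below targets the SGLang server from Method 2 on port 30000 through its OpenAI-compatible `/v1/chat/completions` route and inlines the image bytes as a base64 data URL. The helper names, the PNG default MIME type, and the assumption that this model accepts `image_url` content parts are illustrative; the `model` field simply reuses the `--model-path` value.

```python
import base64
import json
import urllib.request

SGLANG_URL = "http://localhost:30000/v1/chat/completions"  # port from Method 2
MODEL = "nvidia/nemotron-3-nano-omni"                      # --model-path value


def to_data_url(image_bytes: bytes, mime: str = "image/png") -> str:
    """Inline raw image bytes as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def build_image_payload(image_bytes: bytes, question: str) -> dict:
    """Build a chat payload with one image part and one text part."""
    return {
        "model": MODEL,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image_url",
                 "image_url": {"url": to_data_url(image_bytes)}},
                {"type": "text", "text": question},
            ],
        }],
    }


def describe_image(path: str, question: str = "Describe this image.") -> str:
    """Read an image file, send it to the server, and return the reply text."""
    with open(path, "rb") as f:
        payload = build_image_payload(f.read(), question)
    request = urllib.request.Request(
        SGLANG_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]
```

With the server running, `describe_image("photo.png")` would return the model's description; pointing `SGLANG_URL` at a different port should be the only change needed for another OpenAI-compatible server.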

Use Cases

Scenario 1: Multimodal Agent Perception

    User uploads product image → Nemotron identifies product → Agent queries inventory → Returns quote

Scenario 2: Video Conference Analysis

    Meeting video stream → Nemotron analyzes voice + visuals in real-time → Generates minutes + action items

Scenario 3: Industrial Quality Inspection

    Production line camera → Nemotron detects product defects → Agent triggers alert + records defect type

Actionable Takeaways

- Agent developers: If your agent handles multimodal inputs, Nemotron 3 Nano Omni is worth evaluating

- Ops teams: Ubuntu snap deployment dramatically lowers the ops barrier for multimodal models

- Cost-sensitive scenarios: The 30B MoE design balances performance against cost and can be more economical than closed-source API calls
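For agent developers starting an evaluation, Scenario 1 above (product image to quote) is a convenient first test. The sketch below wires a perception step to an inventory lookup. `identify_product` is a stub standing in for a real model call, and the `INVENTORY` table, product names, and prices are made-up illustration data.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical inventory: product name -> (units in stock, unit price)
INVENTORY = {"desk lamp": (12, 24.50), "usb hub": (0, 15.00)}


@dataclass
class Quote:
    product: str
    quantity: int
    total: float


def identify_product(image_bytes: bytes) -> str:
    """Stub for the perception step; a real agent would call the model here."""
    return "desk lamp"


def quote_from_image(image_bytes: bytes, quantity: int,
                     perceive: Callable[[bytes], str] = identify_product
                     ) -> Optional[Quote]:
    """Perceive, look up inventory, and return a quote (None if unavailable)."""
    product = perceive(image_bytes).strip().lower()
    stock, price = INVENTORY.get(product, (0, 0.0))
    if stock < quantity:
        return None  # unknown product or not enough stock
    return Quote(product, quantity, round(price * quantity, 2))


print(quote_from_image(b"<image bytes>", quantity=2))
```

Passing the perception step in as a callable keeps the agent logic testable without a running model and lets the stub be swapped for a real client later.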