NVIDIA’s “Reference Answer”

The focus of AI foundation model competition is shifting from “who has more parameters” to “whose agents run better.”

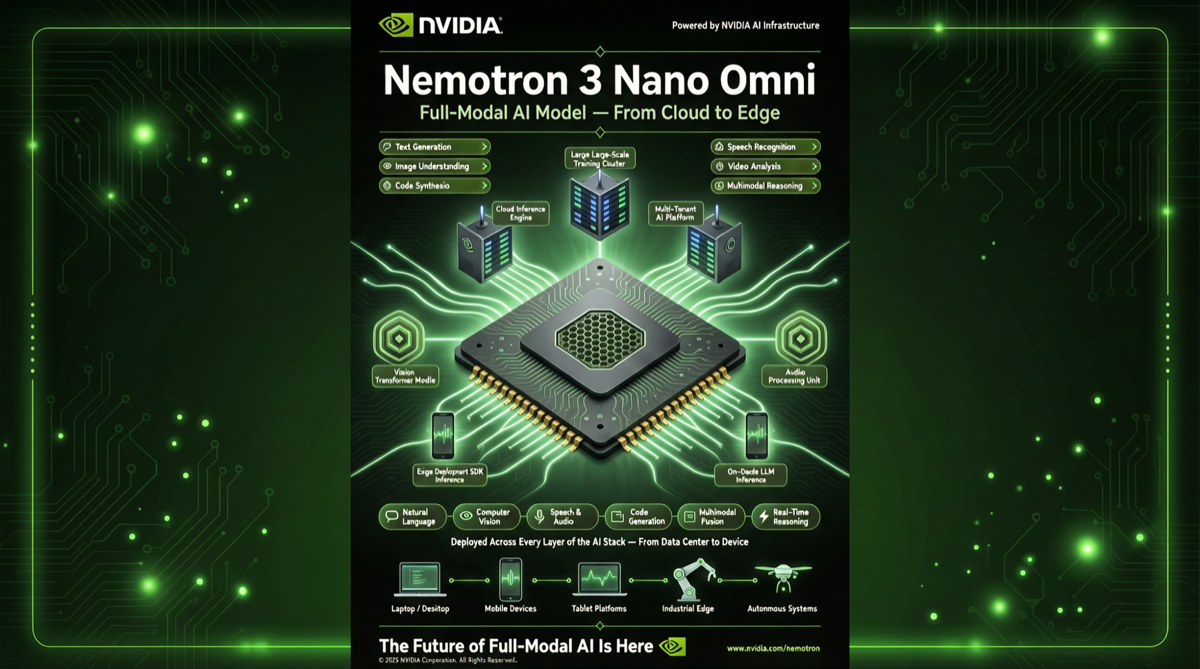

On April 29, NVIDIA released the Nemotron 3 series of open models. The most notable is the Nano Omni version, a multimodal (text, image, audio, video) open-source model designed for AI agent applications.

This is not NVIDIA’s first model release, but the timing and positioning of Nemotron 3 are worth analyzing.

Why Now?

Key shifts in the AI model market during Q2 2026:

- Agents become the main battlefield: OpenAI, Google, and Chinese companies are all accelerating AI agent deployment. Model “ceilings” are high enough; competition shifts to “how to run efficiently in real applications.”

- Edge deployment demand explodes: More scenarios require running AI agents locally or at the edge—industrial control, robotics, smart homes, autonomous driving. These scenarios have strict requirements for latency, privacy, and cost.

- Inference cost pressure: As agent interaction rounds increase, single-inference costs accumulate into massive operational expenses. Market demand for “small but powerful” models has surged dramatically.

Nemotron 3 Nano Omni is NVIDIA’s response to all three trends.
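The inference cost trend can be made concrete with a small calculation. The sketch below is illustrative only: it assumes every round re-sends the full conversation history with no prompt caching, and the function name and token counts are hypothetical, not figures from NVIDIA.

```python
def tokens_processed(rounds: int, system_tokens: int, tokens_per_round: int) -> int:
    """Total input tokens an agent session processes when each round
    re-sends the whole history (assumption: no prompt caching)."""
    total = 0
    context = system_tokens
    for _ in range(rounds):
        context += tokens_per_round  # new user/tool/model tokens join the history
        total += context             # the full history is processed again this round
    return total


# Hypothetical session: 1,000-token system prompt, 500 new tokens per round.
for rounds in (10, 20, 40):
    print(rounds, "rounds ->", tokens_processed(rounds, 1000, 500), "input tokens")
```

Under these assumptions, doubling the number of rounds more than doubles the tokens processed, which is why per-inference efficiency matters more for agents than for single-turn chat.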

Core Specifications and Technical Highlights

| Feature | Details |
|---|---|
| Model Scale | Nano-level (positioned as “lightweight and efficient”) |
| Multimodal | Unified understanding and generation across text, image, audio, and video |
| FP8 Inference | Deep optimization for Hopper (H100/H200) and Blackwell (B100/B200) FP8 inference |
| Consumer GPU | Compatible with RTX 5090 and other consumer graphics cards |
| Edge Platform | Compatible with Jetson Thor robotics platform |
| Open Source | Open weights, commercial use supported |

FP8 Inference: 9x Efficiency Gain

Nemotron 3’s core technical breakthrough is FP8 (8-bit floating point) inference optimization. Compared to traditional FP16/BF16 inference:

- Throughput increase of ~9x (reported): FP8 stores each value in 8 bits instead of 16, which halves weight memory and memory traffic and reduces computation and VRAM usage

- Controllable accuracy loss: Through NVIDIA’s proprietary quantization calibration technology, accuracy loss in FP8 is reported to stay below 2% for most tasks

- Significant power reduction: For edge deployment (e.g., Jetson Thor), FP8 lowers the energy spent per inference, which means longer battery life and lower thermal requirements
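To show what rounding to FP8 does to individual values, here is a minimal pure-Python simulation. NVIDIA’s calibration method is not described in this article, so the sketch swaps in a generic per-tensor absmax scaling with the E4M3 format (3 mantissa bits, maximum finite value 448). The function names and sample weights are hypothetical.

```python
import math

E4M3_MAX = 448.0      # largest finite magnitude in FP8 E4M3
MIN_NORMAL_EXP = -6   # exponent of the smallest normal E4M3 value (2**-6)
MANTISSA_BITS = 3     # E4M3 keeps 3 explicit mantissa bits


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3-representable value, saturating at +/-448."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    mag = min(abs(x), E4M3_MAX)
    # frexp gives mag = m * 2**e with 0.5 <= m < 1, so floor(log2(mag)) = e - 1.
    _, e = math.frexp(mag)
    exp = max(e - 1, MIN_NORMAL_EXP)     # below 2**-6 the grid is subnormal
    step = 2.0 ** (exp - MANTISSA_BITS)  # spacing between neighbours in this binade
    return sign * min(round(mag / step) * step, E4M3_MAX)


def fake_quantize(values):
    """Per-tensor absmax scaling (an assumption, not NVIDIA's method):
    map the largest magnitude onto 448, round to E4M3, then rescale."""
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return list(values)
    scale = amax / E4M3_MAX
    return [round_to_e4m3(v / scale) * scale for v in values]


weights = [0.02, -0.5, 0.3, 1.7, -0.004]  # hypothetical weights
restored = fake_quantize(weights)
for w, r in zip(weights, restored):
    print(f"{w:+.4f} -> {r:+.4f}  (relative error {abs(r - w) / abs(w):.2%})")
```

The per-element rounding error shown here is not the same thing as task-level accuracy loss. Calibration exists precisely to choose scales so that these small per-value errors do not add up to a visible drop on downstream tasks.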

Hardware Compatibility Matrix

| Platform | Support | Typical Scenario |
|---|---|---|
| H100/H200 (FP8) | Deep optimization | Cloud-scale agent services |
| B100/B200 (FP8) | Deep optimization | Next-gen cloud inference |
| RTX 5090 | Compatible | Personal workstation / edge inference |
| Jetson Thor | Compatible | Robotics / edge devices |

The intent behind this matrix is clear: from cloud to edge and from data center to consumer GPUs, Nemotron 3 is meant to run across NVIDIA’s hardware lineup.
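Whether a given GPU can use an FP8 path at all depends on its CUDA compute capability. The helper below is a sketch with a hypothetical name. It relies on the fact that FP8 tensor cores first appeared with compute capability 8.9 (Ada Lovelace) and 9.0 (Hopper), and it says nothing about whether a particular framework or model build ships FP8 kernels for that device.

```python
from typing import Tuple

# FP8 tensor cores first appear at compute capability 8.9 (Ada Lovelace);
# Hopper reports 9.0, and later architectures report higher values.
FP8_MIN_CAPABILITY: Tuple[int, int] = (8, 9)


def supports_fp8_tensor_cores(capability: Tuple[int, int]) -> bool:
    """Return True if a (major, minor) compute capability has FP8 tensor cores."""
    return capability >= FP8_MIN_CAPABILITY


print(supports_fp8_tensor_cores((9, 0)))  # H100-class (Hopper)
print(supports_fp8_tensor_cores((8, 0)))  # A100-class (Ampere), no FP8 tensor cores
```

On a machine with PyTorch installed, the tuple can be read with `torch.cuda.get_device_capability()` rather than typed in by hand.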

Strategic Intent Analysis

NVIDIA’s release of the Nemotron 3 series is fundamentally doing one thing: defining the “reference architecture” for AI agent applications.

This is similar to NVIDIA’s Drive platform in autonomous driving—by providing reference models, they push the entire ecosystem to build around their hardware and software stack.

Specifically:

- FP8 promotion: Demonstrating FP8’s practical results through open-source models encourages developers and enterprises to adopt FP8 as the standard inference format, which in turn drives next-gen GPU sales.

- Ecosystem lock-in: When developers build agent applications on Nemotron 3, they naturally prefer NVIDIA hardware (from H100 to RTX 5090 to Jetson Thor) for deployment.

- Open vs. closed source balance: Open-source models lower adoption barriers, but optimal training and fine-tuning performance still requires NVIDIA hardware acceleration. It is a shrewd commercial strategy.

Comparison with Competitors

| Model | Features | Positioning |
|---|---|---|
| Nemotron 3 Nano Omni | FP8 optimized + multimodal + edge-compatible | Multimodal agent reference implementation |
| DeepSeek V4 | 1.6T params + million-token context | General capability flagship |
| Kimi K2.6 | Trillion-param coding model + agent clusters | Programming/Coding agent |
| MiMo-V2.5 | 310B multimodal agent + 1M context | Multimodal agent |

Nemotron 3’s unique advantage is deep hardware-software co-optimization. Other models may be stronger on pure software metrics, but Nemotron 3 has a significant advantage in “actual deployment efficiency.”

Industry Significance

For developers: If you need to deploy multimodal AI agents locally or at the edge, Nemotron 3 Nano Omni + RTX 5090 is one of the most viable solutions today. No cloud API is needed, data stays local, and latency can be kept at the millisecond level.

For enterprises: If the reported 9x efficiency improvement from FP8 inference holds for a given workload, the same GPU budget can support roughly 9x the agent interactions. For enterprises deploying AI agents at scale (customer service, data analysis, industrial inspection), this is worth serious evaluation.
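The budget argument is simple arithmetic and can be checked with a few lines. All numbers below (GPU hours, baseline throughput, tokens per interaction) are hypothetical placeholders, and the sketch assumes the workload is purely throughput-bound, so the speedup carries over one-to-one.

```python
def interactions_per_budget(
    gpu_hours: float,
    tokens_per_second: float,
    tokens_per_interaction: float,
    speedup: float = 1.0,
) -> float:
    """Agent interactions a fixed GPU-hour budget can serve
    (assumption: throughput-bound workload, no idle time)."""
    total_tokens = gpu_hours * 3600 * tokens_per_second * speedup
    return total_tokens / tokens_per_interaction


# Hypothetical budget: 1,000 GPU-hours, 2,000 tokens/s baseline, 4,000 tokens per interaction.
baseline = interactions_per_budget(1000, 2000, 4000)
with_fp8 = interactions_per_budget(1000, 2000, 4000, speedup=9.0)
print(f"baseline: {baseline:,.0f} interactions")
print(f"with 9x throughput: {with_fp8:,.0f} interactions")
```

In practice the gain depends on how much of the serving pipeline is actually limited by model throughput, so the 9x figure is best treated as an upper bound to validate against a real workload.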

For the open-source community: NVIDIA’s continued open-sourcing of the Nemotron series provides researchers and entrepreneurs with a high-quality foundation. Combined with training frameworks like Microsoft’s Agent Lightning, the open-source agent ecosystem infrastructure is rapidly maturing.

Primary sources:

- NVIDIA Developer Blog - NVIDIA

- Nemotron 3 Series Release - NVIDIA

- Nemotron 3 Efficiency Analysis - Toutiao