Core Conclusion

In the “Learn to Build AI Agents in 90 Days” GitHub trending list, Pipecat is the first recommended project, described as “powering most production voice agents you’ve actually used.”

Core selling points:

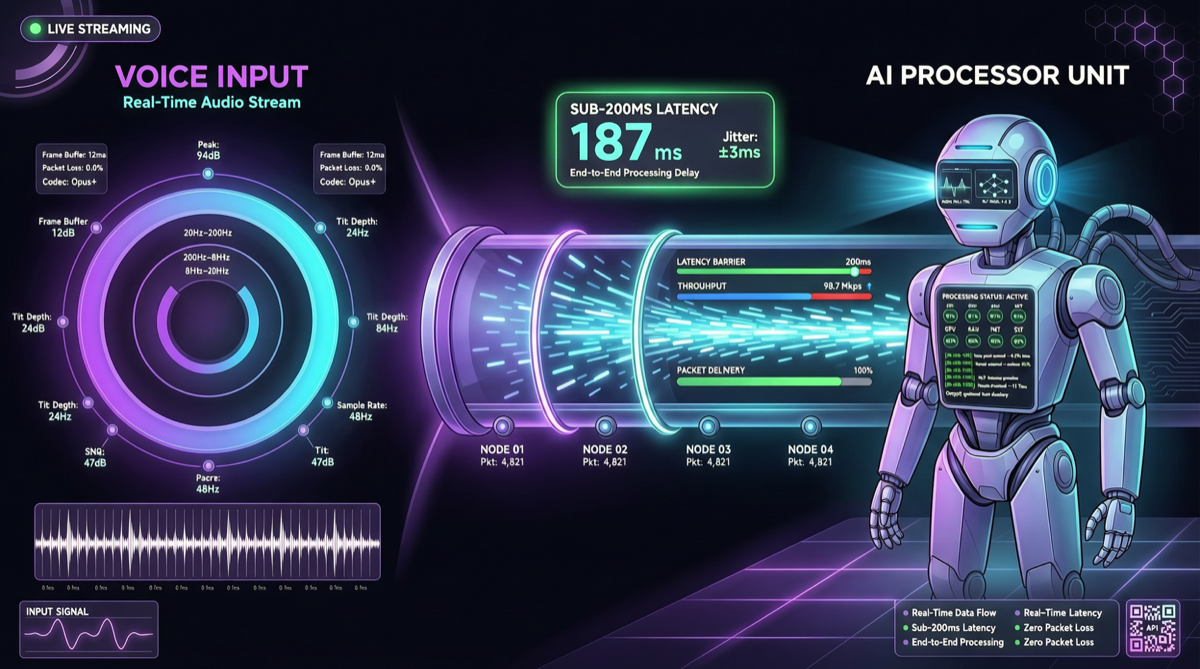

- <200ms end-to-end latency: The complete chain from user speech to AI response is kept within 200ms

- Production-grade: Not a demo, but a framework designed for actual deployment

- Python-native: A natural fit for Python developers

- Multimodal pipeline: Supports streaming processing pipelines for voice, text, and images

What Is Pipecat

Pipecat is a real-time voice AI framework, focused on building low-latency voice conversation agents. Its core architecture is a “pipeline” system that chains speech input → speech recognition → LLM inference → speech synthesis → speech output into a single streaming processing chain.
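To make the chaining idea concrete, here is a minimal sketch in plain Python. It does not use Pipecat's API: the stage functions (`fake_stt`, `fake_llm`, `fake_tts`), the `microphone` source, and the `chain` helper are all hypothetical stand-ins, and strings stand in for audio and text frames. It only illustrates how stages can be composed into one stream where each item flows through every stage as soon as it is produced.

```python
import asyncio
from typing import AsyncIterator, Callable, List

# A stage takes a stream of items and returns a transformed stream.
Stage = Callable[[AsyncIterator[str]], AsyncIterator[str]]


async def microphone() -> AsyncIterator[str]:
    """Hypothetical source: yields fake audio chunks."""
    for chunk in ["audio:hello", "audio:world"]:
        yield chunk


async def fake_stt(chunks: AsyncIterator[str]) -> AsyncIterator[str]:
    """Stand-in for speech recognition: audio chunk -> text."""
    async for chunk in chunks:
        yield chunk.replace("audio:", "text:")


async def fake_llm(texts: AsyncIterator[str]) -> AsyncIterator[str]:
    """Stand-in for LLM inference: text -> reply."""
    async for text in texts:
        yield text.replace("text:", "reply:")


async def fake_tts(replies: AsyncIterator[str]) -> AsyncIterator[str]:
    """Stand-in for speech synthesis: reply -> speech."""
    async for reply in replies:
        yield reply.replace("reply:", "speech:")


def chain(source: AsyncIterator[str], stages: List[Stage]) -> AsyncIterator[str]:
    """Wrap the source in each stage, in order, to form one stream."""
    stream = source
    for stage in stages:
        stream = stage(stream)
    return stream


async def run_demo() -> List[str]:
    stream = chain(microphone(), [fake_stt, fake_llm, fake_tts])
    return [item async for item in stream]


print(asyncio.run(run_demo()))  # ['speech:hello', 'speech:world']
```

Because every stage is an async generator, the first chunk reaches the end of the chain before the second chunk has even been read from the source.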

Architecture Overview

```text
User Speech → VAD (Voice Activity Detection) → STT (Speech-to-Text) → LLM → TTS (Text-to-Speech) → User Hears
     ↑                                                                                                  ↓
     └────────────────────────────────────── Streaming Processing ──────────────────────────────────────┘
```

Key design decisions:

- Full-chain streaming: Each stage processes in real-time, no need to wait for the previous stage to fully complete

- VAD-driven: Only activates downstream processing when user speech is detected, saving compute resources

- Model agnostic: STT, LLM, and TTS stages can independently choose different providers
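The VAD-driven decision can be illustrated with a toy gate. This is a sketch under loud assumptions: it uses a simple RMS energy threshold, not the Silero or WebRTC detectors a real deployment would use, and the `Frame` type, `rms`, `vad_gate`, and the `0.1` threshold are hypothetical names and values chosen for illustration. It shows the principle only: frames classified as silence never reach the downstream stages.

```python
import math
from typing import Iterable, Iterator, List

Frame = List[float]  # hypothetical frame type: a short list of audio samples


def rms(frame: Frame) -> float:
    """Root-mean-square energy of one frame (0.0 for an empty frame)."""
    if not frame:
        return 0.0
    return math.sqrt(sum(sample * sample for sample in frame) / len(frame))


def vad_gate(frames: Iterable[Frame], threshold: float = 0.1) -> Iterator[Frame]:
    """Yield only frames whose energy meets the threshold.

    Written as a generator so each frame is forwarded as soon as it
    arrives, instead of waiting for the whole utterance.
    """
    for frame in frames:
        if rms(frame) >= threshold:
            yield frame


silence = [0.0, 0.01, -0.01, 0.0]
speech = [0.5, -0.4, 0.6, -0.5]

passed = list(vad_gate([silence, speech, silence, speech]))
print(len(passed))  # 2: only the two speech frames go downstream
```

In a real pipeline the gate would sit in front of STT, so STT, LLM, and TTS calls are only made while the user is actually speaking.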

Core Components

| Component | Function | Supported Providers |
|---|---|---|
| VAD | Detects when the user is speaking | Silero, WebRTC |
| STT | Speech-to-text | Whisper, Deepgram, Google STT |
| LLM | Conversation reasoning | OpenAI, Anthropic, Groq, local models |
| TTS | Text-to-speech | ElevenLabs, Cartesia, OpenAI TTS, Coqui |
| Transport | Transport protocol | WebSocket, Daily.co, LiveKit |
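Swapping providers per stage is easiest to reason about when every provider of a stage exposes the same interface. The sketch below shows that idea with a `typing.Protocol`. It is not Pipecat's service interface: `TTSProvider`, `EchoTTS`, `ShoutTTS`, and `speak` are hypothetical names, and the "synthesis" just encodes text to bytes.

```python
from typing import Protocol


class TTSProvider(Protocol):
    """Hypothetical common interface for any text-to-speech provider."""

    def synthesize(self, text: str) -> bytes: ...


class EchoTTS:
    """Stand-in provider A: returns the text as UTF-8 bytes."""

    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


class ShoutTTS:
    """Stand-in provider B: same interface, different behavior."""

    def synthesize(self, text: str) -> bytes:
        return text.upper().encode("utf-8")


def speak(tts: TTSProvider, text: str) -> bytes:
    """The caller depends only on the interface, not on a concrete provider."""
    return tts.synthesize(text)


print(speak(EchoTTS(), "hello"))   # b'hello'
print(speak(ShoutTTS(), "hello"))  # b'HELLO'
```

The same pattern applies to the STT and LLM stages: as long as the replacement honors the stage's interface, the rest of the pipeline does not change.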

Competitor Comparison

| Framework | Language | Latency | Real-Time Voice | Production Ready | Learning Curve |
|---|---|---|---|---|---|
| Pipecat | Python | <200ms | ✅ Core focus | ✅ | Medium |
| LiveKit Agents | Python/JS | <300ms | ✅ | ✅ | Low |
| Vocode | Python | <400ms | ✅ | ✅ | Low |
| Twilio Autopilot | - | >500ms | Limited | ✅ | Low |
| LangChain Voice | Python | >500ms | ✅ (plugin) | Experimental | High |

Pipecat’s advantage lies in latency control and pipeline flexibility. Latency under 200ms brings the experience close to natural human conversation, where the average response gap is about 200-300ms.
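Latency figures like these are worth measuring against your own stack rather than taking from a table. A minimal sketch of one way to do that: time how long a streaming agent takes to produce its first output chunk. The `fake_agent` generator and its 50ms delay are hypothetical placeholders for a real pipeline; only the measurement pattern in `time_to_first_chunk` is the point.

```python
import asyncio
import time
from typing import AsyncGenerator, Tuple


async def fake_agent(delay_s: float) -> AsyncGenerator[bytes, None]:
    """Hypothetical agent: waits delay_s before each streamed audio chunk."""
    await asyncio.sleep(delay_s)
    yield b"chunk-1"
    await asyncio.sleep(delay_s)
    yield b"chunk-2"


async def time_to_first_chunk(
    stream: AsyncGenerator[bytes, None],
) -> Tuple[float, bytes]:
    """Return (elapsed milliseconds, first chunk) for a streaming response."""
    start = time.perf_counter()
    first = await stream.__anext__()
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return elapsed_ms, first


async def demo() -> Tuple[float, bytes]:
    stream = fake_agent(0.05)
    try:
        return await time_to_first_chunk(stream)
    finally:
        await stream.aclose()  # stop the generator without draining it


latency_ms, first = asyncio.run(demo())
print(f"first chunk {first!r} after {latency_ms:.0f} ms")
```

For a streaming pipeline, time to first chunk is usually the number the user perceives, since playback can begin before the full reply has been synthesized.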

Quick Start

Installation

```bash
pip install pipecat-ai
```

Minimal Example

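```python
import asyncio

from pipecat.pipeline.pipeline import Pipeline
from pipecat.pipeline.runner import PipelineRunner
from pipecat.pipeline.task import PipelineTask
from pipecat.services.openai import OpenAILLMService
from pipecat.transports.services.daily import DailyTransport

# Import paths have changed across Pipecat releases; adjust them to your installed version.


async def main():
    # Configure transport layer (using Daily.co)
    transport = DailyTransport(
        room_url="https://your-room.daily.co",
        token="your-token",
        bot_name="Pipecat Bot",
    )

    # Configure LLM
    llm = OpenAILLMService(model="gpt-5.4", api_key="your-key")

    # Build pipeline. This skeleton only shows the wiring; a working voice
    # agent also needs STT and TTS stages (see the next example).
    pipeline = Pipeline([
        transport.input(),   # Receive audio
        llm,                 # LLM inference
        transport.output(),  # Send audio reply
    ])

    # Run: the runner executes a PipelineTask that wraps the pipeline
    runner = PipelineRunner()
    await runner.run(PipelineTask(pipeline))


if __name__ == "__main__":
    asyncio.run(main())
```

Custom STT + TTS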

```python
from pipecat.services.deepgram import DeepgramSTTService
from pipecat.services.elevenlabs import ElevenLabsTTSService

stt = DeepgramSTTService(api_key="dg-key")
# ElevenLabs expects a voice ID here, not a display name such as "Rachel"
tts = ElevenLabsTTSService(api_key="11labs-key", voice_id="your-voice-id")

# Reuses `transport` and `llm` from the minimal example
pipeline = Pipeline([
    transport.input(),
    stt,  # Speech-to-text
    llm,  # Conversation reasoning
    tts,  # Text-to-speech
    transport.output(),
])
```

Typical Use Cases

| Scenario | Configuration Suggestion | Estimated Latency |
|---|---|---|
| Customer service bot | GPT-5.4 + ElevenLabs | ~150ms |
| Language companion | Local model + Coqui TTS | ~180ms |
| Voice assistant | Groq + Cartesia TTS | ~120ms |
| Meeting summary | Deepgram STT + Claude | N/A (non-real-time) |

Cost Estimation

For a voice agent with 1,000 calls/day averaging 5 minutes each:

| Component | Provider | Monthly Cost (estimated) |
|---|---|---|
| STT | Deepgram | ~$150 |
| LLM | GPT-5.4 | ~$500 |
| TTS | ElevenLabs | ~$200 |
| Transport | Daily.co | ~$100 |
| Total | | ~$950/month |

Replacing GPT-5.4 with DeepSeek V4 Pro (at its discounted price) can cut LLM costs by approximately 90%, from ~$500 to ~$50, bringing the total down to ~$500/month.
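The arithmetic behind these estimates can be checked in a few lines. The monthly figures come from the table above; the 30-day month is an assumption of this sketch, and the helper names (`total`, `with_llm_reduction`) are illustrative only.

```python
from typing import Dict

CALLS_PER_DAY = 1_000
MINUTES_PER_CALL = 5
DAYS_PER_MONTH = 30  # assumption: the article does not state the month length

# Estimated monthly cost per component, in USD (from the table above)
monthly_cost: Dict[str, float] = {
    "STT": 150,
    "LLM": 500,
    "TTS": 200,
    "Transport": 100,
}


def total(costs: Dict[str, float]) -> float:
    """Sum the monthly cost of all components."""
    return sum(costs.values())


def with_llm_reduction(costs: Dict[str, float], reduction_pct: int) -> Dict[str, float]:
    """Return a copy of costs with the LLM line reduced by reduction_pct percent."""
    updated = dict(costs)
    updated["LLM"] = costs["LLM"] * (100 - reduction_pct) / 100
    return updated


minutes_per_month = CALLS_PER_DAY * MINUTES_PER_CALL * DAYS_PER_MONTH
baseline_total = total(monthly_cost)
discounted_total = total(with_llm_reduction(monthly_cost, 90))

print(minutes_per_month)                   # 150000 call minutes per month
print(baseline_total)                      # 950
print(discounted_total)                    # 500.0
print(baseline_total / minutes_per_month)  # implied cost per call minute, about $0.0063
```

Dividing each line item by the monthly minutes gives an implied per-minute rate, which is a quick way to sanity-check the estimate against a provider's published pricing.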

Action Recommendations

- Voice Agent developers: If you’re building real-time voice conversation applications, Pipecat is currently the most mature option in the Python ecosystem.

- Existing LangChain users: Pipecat’s pipeline concept differs from LangChain’s: it is designed for streaming, real-time scenarios. If your application needs low-latency voice interaction, consider migrating.

- Cost control: STT and TTS costs are often underestimated. Plan usage estimates early in the project. Deepgram and Cartesia offer good cost-performance ratios and are worth evaluating.

- Local deployment: Combined with Whisper.cpp (STT) and Coqui TTS (speech synthesis), Pipecat can run completely locally, which suits scenarios with strict data privacy requirements.