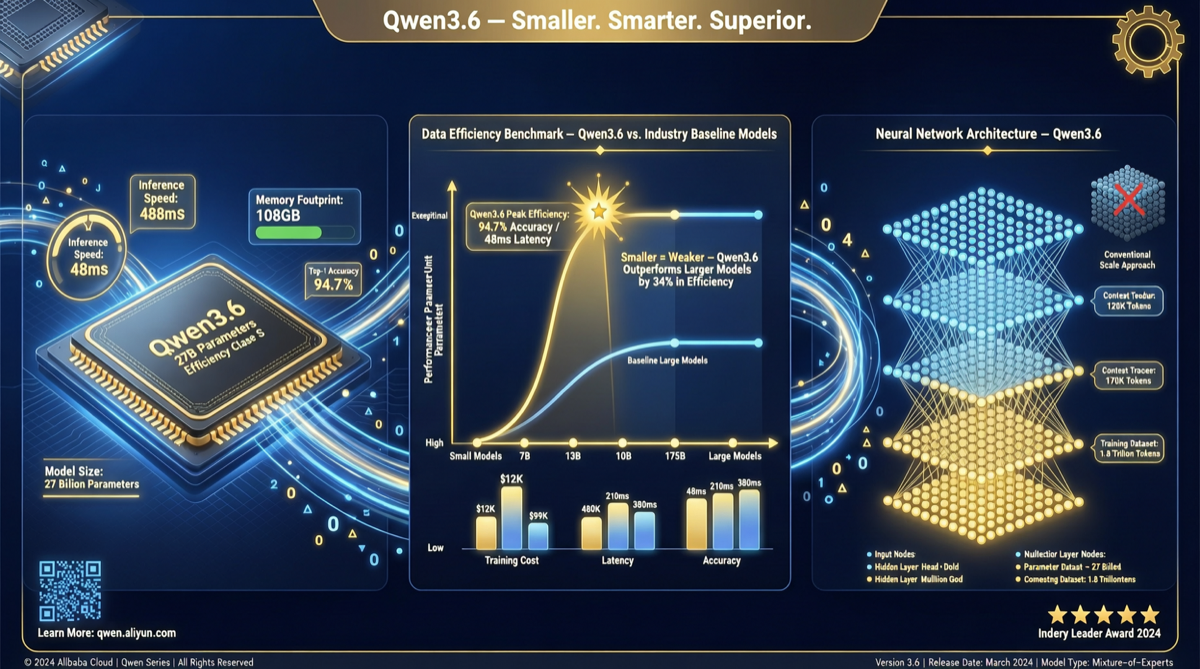

In the AI model race, there’s been a default assumption: more parameters = more capability. The latest Intelligence Index data undercuts that assumption.

Core Data

Qwen3.6 27B scored 1414 Elo on the GDPval-AA benchmark. Here is how that compares:

| Model | Parameters | GDPval-AA Elo |
|---|---|---|
| Qwen3.6 27B | 27B | 1414 |
| DeepSeek V4 Flash (Reasoning, High Effort) | 284B (1.6T MoE) | 1414 |
| Meta Muse Spark | Undisclosed | 1414 |
| Qwen3.5 27B | 27B | 1157 |
| Gemma 4 26B | 26B | ~1350 |

Key conclusion: Qwen3.6 27B ties DeepSeek V4 Flash with less than one-tenth of its parameter count (27B vs. 284B). Compared with Qwen3.5 27B, it gained 257 Elo (1157 → 1414).

What 257 Elo Means

In Elo terms, a 257-point gain is roughly the size of a full model-generation jump:

- GPT-4 to GPT-4o improvement: ~150-200 Elo

- Claude 3 Haiku to Sonnet: ~100-150 Elo

- Qwen3.5 to Qwen3.6: 257 Elo, more than a typical one-generation leap

And Qwen3.6 achieved this with the same 27B parameter count. The improvement comes entirely from training methods, data quality, and architecture optimization, not from parameter stacking.
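Elo gaps are easier to interpret as win rates. This minimal sketch assumes that GDPval-AA uses the standard logistic Elo model with a 400-point scale, under which the expected head-to-head score for a rating gap d is 1 / (1 + 10^(−d/400)). The source does not specify the formula, and the function name is illustrative.

```python
def elo_expected_score(rating_diff: float, scale: float = 400.0) -> float:
    """Expected score for the higher-rated side under the standard
    logistic Elo model (assumed here; not confirmed for GDPval-AA)."""
    return 1.0 / (1.0 + 10.0 ** (-rating_diff / scale))


# Gaps discussed above: the quoted generational ranges and Qwen3.5 -> Qwen3.6.
for gap in (100, 150, 200, 257):
    print(f"{gap:>3} Elo gap -> {elo_expected_score(gap):.1%} expected score")
```

Under that assumption, the 257-point jump means Qwen3.6 27B would be preferred over Qwen3.5 27B in roughly 81% of head-to-head comparisons. The 100 to 200 point generational gaps quoted above correspond to roughly 64% to 76%.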

Intelligence Index Open Weights Leaderboard

Among open-weight models under 150B total parameters, Qwen dominates:

| Rank | Model | Intelligence Index |
|---|---|---|
| 🥇 | Qwen3.6 27B | 46 |
| 🥈 | Qwen3.6 35B A3B | 43 |
| 🥉 | Qwen3.5 27B | 42 |
| 4 | Gemma 4 31B | 39 |
| 5 | Llama 4 series | ~35 |

Qwen takes the top three spots. This is no coincidence: Alibaba’s Tongyi team has built a methodological advantage in optimizing small-parameter models for efficiency.

Why This Matters

1. Inference Cost Revolution

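Serving a 27B model costs roughly one-tenth as much as serving a 284B model. It needs about a tenth of the weight memory, and for dense models per-token compute shrinks by the same factor. (For a mixture-of-experts model, compute tracks the active parameters rather than the total.) If capability is comparable: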

- Self-deployment barrier drops significantly (consumer GPUs can run it)

- API call costs drop by an order of magnitude

- Edge deployment shifts from “impossible” to “feasible”
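This back-of-the-envelope sketch shows where the roughly one-tenth figure comes from. It relies on two common rules of thumb: weight memory is total parameters × bytes per parameter, and a forward pass costs about 2 FLOPs per active parameter per generated token. The function name `inference_footprint` is hypothetical. Treating the 284B model as dense is also an assumption for illustration, because the source does not give its active parameter count.

```python
def inference_footprint(total_params: float, active_params: float,
                        bits_per_param: float) -> tuple[float, float]:
    """Rough serving estimates (rule-of-thumb sketch, not a benchmark).

    Returns (weight_gb, gflops_per_token):
      weight_gb        -- decimal GB needed just to hold the weights
                          (ignores KV cache and runtime overhead)
      gflops_per_token -- ~2 FLOPs per *active* parameter per token
    """
    weight_gb = total_params * bits_per_param / 8 / 1e9
    gflops_per_token = 2 * active_params / 1e9
    return weight_gb, gflops_per_token


small_gb, small_gflops = inference_footprint(27e9, 27e9, 16)
# Assumption: 284B treated as dense, which is what the 1/10 figure implies.
large_gb, large_gflops = inference_footprint(284e9, 284e9, 16)

print(f"27B : {small_gb:.0f} GB weights, {small_gflops:.0f} GFLOPs/token")
print(f"284B: {large_gb:.0f} GB weights, {large_gflops:.0f} GFLOPs/token")
print(f"ratio: {small_gb / large_gb:.3f}")
```

At 16-bit weights this gives about 54 GB and 54 GFLOPs per token for the 27B model, versus 568 GB and 568 GFLOPs for a dense 284B model. That is a ratio of about 0.095 on both axes. For a mixture-of-experts model you would pass its active parameter count as `active_params`. This lowers compute but leaves weight memory tied to the total.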

2. Open Source Ecosystem Turning Point

When 27B open-weight models match models with hundreds of billions of parameters, the “only big tech can train good models” narrative starts to collapse.

3. Impact on the Chinese Model Landscape

Qwen’s efficiency lead means that, for the same compute budget, Qwen models can be served faster, more cheaply, and at larger scale. That is a decisive advantage in mass-market and edge scenarios.

Action Items

- If you’re selecting models: unless you need frontier-level performance, Qwen3.6 27B may be the best cost-performance option

- If you’re doing edge deployment: 27B is currently the largest “top-tier” model that can run on a single RTX 4090 (24GB) with INT4 quantization

- If you’re tracking open-source trends: Qwen3.6’s training methodology is worth studying closely, since it exemplifies the “better without more parameters” technical direction
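This sketch gives a rough VRAM budget for the single-RTX 4090 INT4 claim. Several inputs are assumptions:

- Every architecture number is hypothetical. The source does not give Qwen3.6 27B's layer count, KV-head count, or head dimension.
- About 4.5 effective bits per weight is meant to cover INT4 values plus per-group scales.
- The 1.5 GiB runtime overhead is a guess.

The point is the structure of the estimate: quantized weights plus a KV cache that grows linearly with context length.

```python
GIB = 1024 ** 3


def vram_estimate_gib(n_params: float, weight_bits: float, n_layers: int,
                      n_kv_heads: int, head_dim: int, context_len: int,
                      kv_bytes: int = 2, overhead_gib: float = 1.5) -> float:
    """Rough single-GPU memory estimate in GiB for one sequence (sketch)."""
    weight_bytes = n_params * weight_bits / 8
    # K and V tensors per layer, each holding n_kv_heads * head_dim values
    # per token, stored at kv_bytes each (2 = FP16/BF16 cache).
    kv_cache_bytes = 2 * n_layers * n_kv_heads * head_dim * kv_bytes * context_len
    return (weight_bytes + kv_cache_bytes) / GIB + overhead_gib


# HYPOTHETICAL architecture values, NOT the published Qwen3.6 27B config.
HYPOTHETICAL_ARCH = dict(n_layers=64, n_kv_heads=4, head_dim=128)
RTX_4090_GIB = 24.0

for ctx in (8_192, 32_768, 131_072):
    need = vram_estimate_gib(27e9, 4.5, context_len=ctx, **HYPOTHETICAL_ARCH)
    verdict = "fits" if need <= RTX_4090_GIB else "does not fit"
    print(f"context {ctx:>7}: ~{need:.1f} GiB -> {verdict} in {RTX_4090_GIB:.0f} GiB")
```

Under these made-up numbers, the weights alone take about 14.1 GiB. A 32K-token context fits (about 19.6 GiB), while a 128K-token context (about 31.6 GiB) does not without KV-cache quantization or offloading. Real headroom depends on the actual architecture and the inference runtime.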

The next phase of the parameter race isn’t about who’s bigger; it’s about who’s more efficient. Qwen3.6 27B has already given its answer.