The “Trainable” Revolution for AI Agents

For a long time, AI agents faced a core contradiction: easy to build, hard to optimize.

You can quickly assemble an agent using LangChain, CrewAI, or any orchestration framework—define tools, write prompts, connect to an LLM API. But when the agent’s performance falls short, optimization options are limited: prompt engineering, adjusting tool-calling logic, or simply switching to a different base model.

These are all “manual tuning” methods. Unlike traditional machine learning models, an agent tuned this way cannot be improved systematically from data.

Today, Microsoft Research Asia open-sourced the Agent Lightning framework, attempting to solve this problem at its root.

Core Concept: Zero-Intrusion Reinforcement Learning

Agent Lightning’s design philosophy can be summarized in one sentence: Don’t touch your agent code, but make it stronger.

Traditional reinforcement learning for training agents requires:

- Modifying the agent’s internal architecture to expose training interfaces

- Defining reward functions deeply coupled with the agent’s decision loop

- Extensive engineering rework to support training

Agent Lightning’s breakthrough lies in its external observation-feedback optimization architecture:

[Agent] ← Runs normally, no modifications needed

↑

[Agent Lightning Framework]

├─ Observes agent's tool call sequences and outputs

├─ Computes reward signals based on task results

├─ Generates new behavioral policies via policy optimization algorithms

└─ Injects optimized policies into the agent's inference loop

This means:

- Zero lines of your agent code need to change

- Just define “what good behavior looks like” (reward function)

- The framework handles observation, training, and policy injection end-to-end
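To make the external observation idea concrete, here is a minimal sketch in plain Python. It is not Agent Lightning's actual API: `Step`, `Trajectory`, `observe_tool`, `run_observed`, `toy_agent`, and `reward_fn` are all hypothetical names chosen for illustration. It assumes the agent receives its tools as a dictionary of callables, so the tools can be wrapped from the outside while the agent function itself stays unchanged.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


@dataclass
class Step:
    """One recorded tool call: which tool, with what arguments, and its result."""
    tool: str
    args: Tuple[Any, ...]
    result: Any


@dataclass
class Trajectory:
    """Everything observed during a single agent run."""
    steps: List[Step] = field(default_factory=list)
    output: Any = None


def observe_tool(name: str, fn: Callable, trajectory: Trajectory) -> Callable:
    """Wrap a tool so each call is recorded; the tool's behavior is unchanged."""
    def wrapped(*args: Any) -> Any:
        result = fn(*args)
        trajectory.steps.append(Step(name, args, result))
        return result
    return wrapped


def run_observed(agent: Callable, tools: Dict[str, Callable], task: dict,
                 reward_fn: Callable) -> Tuple[Trajectory, float]:
    """Run an unmodified agent with wrapped tools, then score the run."""
    trajectory = Trajectory()
    observed = {name: observe_tool(name, fn, trajectory) for name, fn in tools.items()}
    trajectory.output = agent(task, observed)
    return trajectory, reward_fn(task, trajectory)


# Hypothetical toy agent: it only knows it was handed some tools.
def toy_agent(task: dict, tools: Dict[str, Callable]) -> Any:
    return tools["add"](*task["operands"])


# "What good behavior looks like": 1.0 if the final answer is correct, else 0.0.
def reward_fn(task: dict, trajectory: Trajectory) -> float:
    return 1.0 if trajectory.output == task["expected"] else 0.0


trajectory, reward = run_observed(
    toy_agent,
    {"add": lambda a, b: a + b},
    {"operands": (2, 3), "expected": 5},
    reward_fn,
)
```

In this sketch the only user-supplied piece besides the agent is `reward_fn`. The recorded `trajectory` and `reward` are what a trainer would consume.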

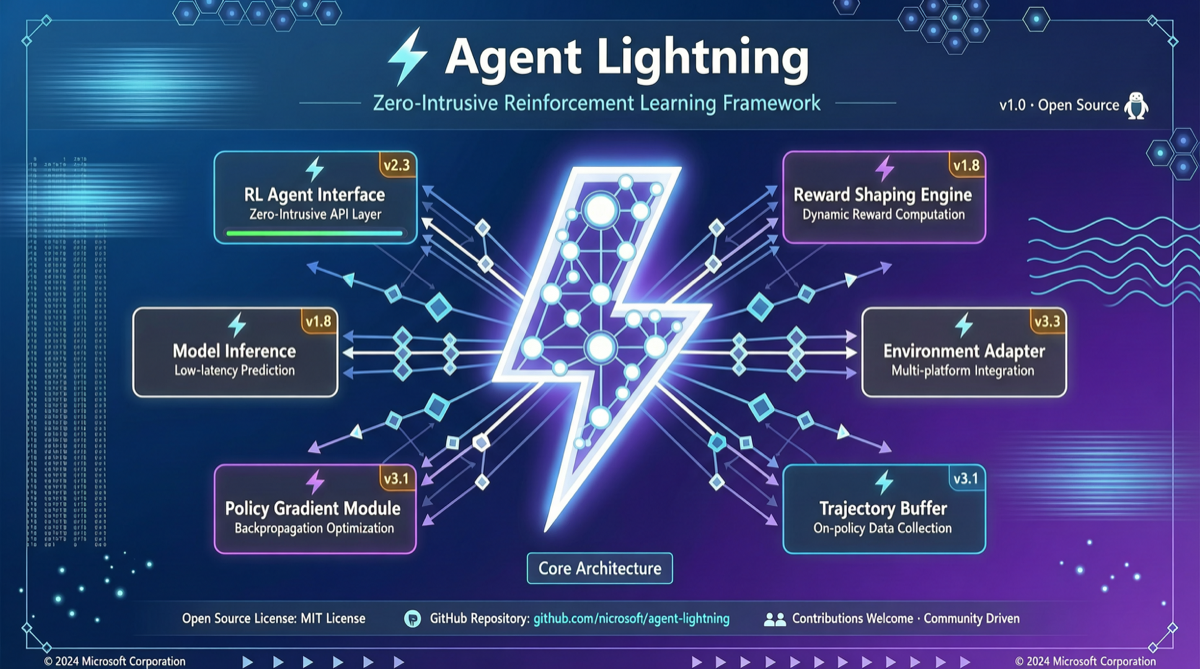

Technical Architecture

| Component | Function |
|---|---|
| Observer | Intercepts all agent-environment interactions, records state-action-result sequences |
| Reward Engine | Pluggable reward computation engine, supports result-level rewards (task success/failure) and process-level rewards (tool call efficiency, path quality) |
| Trainer | Policy optimizer based on PPO/GRPO and other RL algorithms, compatible with vLLM, Megatron-LM inference backends |
| Strategy Injector | Injects trained policies as “behavioral guidance” into the agent without modifying agent source code |
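As a rough illustration of how the Reward Engine and Trainer rows could fit together, the sketch below mixes a result-level reward with a process-level efficiency term, then turns a group of rollout rewards into group-relative advantages in the style of GRPO (each reward minus the group mean, divided by the group standard deviation). The function names, the `max_calls` budget, and the `process_weight` of 0.2 are assumptions made for this example, not values or interfaces taken from Agent Lightning.

```python
from statistics import mean, pstdev
from typing import List


def combined_reward(success: bool, tool_calls: int,
                    max_calls: int = 10, process_weight: float = 0.2) -> float:
    """Blend a result-level reward with a process-level efficiency reward.

    The weights and the call budget are illustrative assumptions.
    """
    outcome = 1.0 if success else 0.0
    # Fewer tool calls means higher efficiency, clipped at zero.
    efficiency = max(0.0, 1.0 - tool_calls / max_calls)
    return (1.0 - process_weight) * outcome + process_weight * efficiency


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """GRPO-style advantages: normalize each reward against its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four hypothetical rollouts of the same task: (task succeeded?, tool calls used).
rollouts = [(True, 2), (True, 6), (False, 4), (False, 10)]
rewards = [combined_reward(ok, calls) for ok, calls in rollouts]
advantages = group_relative_advantages(rewards)
```

Rollouts that beat the group average get positive advantages and are reinforced, while the rest are pushed down. PPO differs mainly in that it estimates the baseline with a learned value function rather than the group mean.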

Why This Matters

1. Lowering the barrier for agent optimization

Currently, only teams with RL engineering capabilities can systematically optimize agents. Agent Lightning turns this into a “configure a reward function and you’re done” tool—similar to how ImageNet pretrained models transformed computer vision.

2. Solving the “last mile” problem

Base model capabilities are rapidly improving, but agent performance depends on “how well you use that capability.” Agent Lightning aims to improve agent performance on specific tasks through RL training without changing the base model.

3. Catalyst for the open-source ecosystem

The open-source release means anyone can:

- Train specialized agents for their business scenarios

- Share trained strategies (similar to pretrained models on HuggingFace)

- Compare optimization effects across different agent architectures on unified benchmarks

Applicable Scenarios

Agent Lightning is particularly suited for:

- Complex workflow agents: Multi-step reasoning and multi-tool calling scenarios (e.g., code generation, data analysis), where result-level rewards (does the code pass tests) are naturally suited for RL optimization

- Customer service/dialogue agents: Dialogue quality and user satisfaction metrics serve as reward signals for continuous optimization

- Autonomous execution agents: Systems like OpenClaw and Hermes Agent that need to make autonomous decisions in environments can train better behavioral strategies through environmental feedback
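For the code generation case, a result-level reward of the "does the code pass tests" kind could be sketched as follows. This is an illustrative assumption rather than part of Agent Lightning: `reward_from_tests` is a hypothetical helper that writes the candidate code and its assertions to a temporary file and runs it in a separate Python process. Real use would need proper sandboxing, since generated code is untrusted.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def reward_from_tests(candidate_code: str, test_code: str, timeout: float = 10.0) -> float:
    """Return 1.0 if the candidate passes all assertions, else 0.0."""
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "candidate_with_tests.py"
        script.write_text(candidate_code + "\n\n" + test_code, encoding="utf-8")
        try:
            proc = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True,
                timeout=timeout,
            )
        except subprocess.TimeoutExpired:
            # Treat hangs (e.g. infinite loops) as failures.
            return 0.0
    # A failed assertion or any uncaught exception gives a non-zero exit code.
    return 1.0 if proc.returncode == 0 else 0.0


good = "def add(a, b):\n    return a + b"
bad = "def add(a, b):\n    return a - b"
tests = "assert add(2, 3) == 5"

good_reward = reward_from_tests(good, tests)
bad_reward = reward_from_tests(bad, tests)
```

A binary pass/fail signal like this is simple to define but sparse. A finer-grained variant could return the fraction of test cases passed instead.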

Relationship with Other Frameworks

Agent Lightning is not an agent orchestration framework—it’s an agent training framework. Its relationship with LangChain, CrewAI, Dify, etc. is complementary, not competitive:

LangChain / CrewAI / Dify → Build the Agent

↓

Agent Lightning → Train and Optimize the Agent

↓

Production Deployment → Continuous Feedback Collection → Iterative Training

Quick Start

git clone https://github.com/microsoft/Agent-Lightning

cd Agent-Lightning

pip install -e .

# Configure your Agent and Reward Function

# Start training

lightning train --config my_agent_config.yaml

Industry Impact

The timing of Microsoft’s open-source release is significant. The AI industry in H1 2026 is transitioning from “model capability competition” to “agent application competition.” NVIDIA’s Nemotron 3 series, DeepSeek V4 Agent Integrations, and Xiaomi’s MiMo-V2.5 all emphasize agent capabilities.

Agent Lightning provides critical infrastructure for this transition: moving agents from “manual tuning” into the era of “data-driven training.”

If this framework gains widespread adoption in the open-source community, it could become the “PyTorch” of AI agents—a training infrastructure that defines industry standards.

Primary sources:

- Agent Lightning GitHub - Microsoft

- MSRA Agent Lightning Introduction - MSRA