Core Conclusion

Two events in the 2026 AI model market, taken together, are set to reshape the industry landscape:

Event 1: DeepSeek V4 approaches top-tier models at 1/20th the cost

- NIST/CAISI evaluation: DeepSeek V4 is the “strongest Chinese AI model,” with performance comparable to GPT-5 from 8 months ago

- API pricing: just 1/20th that of Claude Opus 4.7

- Community assessment: “restrained training, fewer hallucinations, more stable for deployment”

Event 2: The NVIDIA NIM platform opens Chinese model APIs for free

- MiniMax M2.7, DeepSeek V3.2, and other Chinese models are available through NIM for free

- No credit card required, no trial period, no expiration

- A free API key is all it takes to get immediate access
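To make the "free API key" workflow concrete, here is a minimal sketch of what a request might look like. It assumes that NIM's hosted endpoint is OpenAI-compatible and lives at the base URL shown. The model identifier is a hypothetical placeholder, and the function only builds the request without sending it. Check the NIM catalog for the real model IDs and limits before relying on any of this.

```python
import json
import urllib.request

# Assumption: NIM's hosted API is OpenAI-compatible at this base URL.
NIM_BASE_URL = "https://integrate.api.nvidia.com/v1"
# Hypothetical placeholder; look up the real identifier in the NIM catalog.
MODEL_ID = "example-vendor/example-model"


def build_chat_request(api_key: str, prompt: str,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    return urllib.request.Request(
        url=f"{NIM_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Keeping construction separate from sending makes it easy to inspect the payload or swap in another provider's base URL.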

The signal from these two events combined is clear: AI models are transforming from “expensive products” into “free infrastructure.”

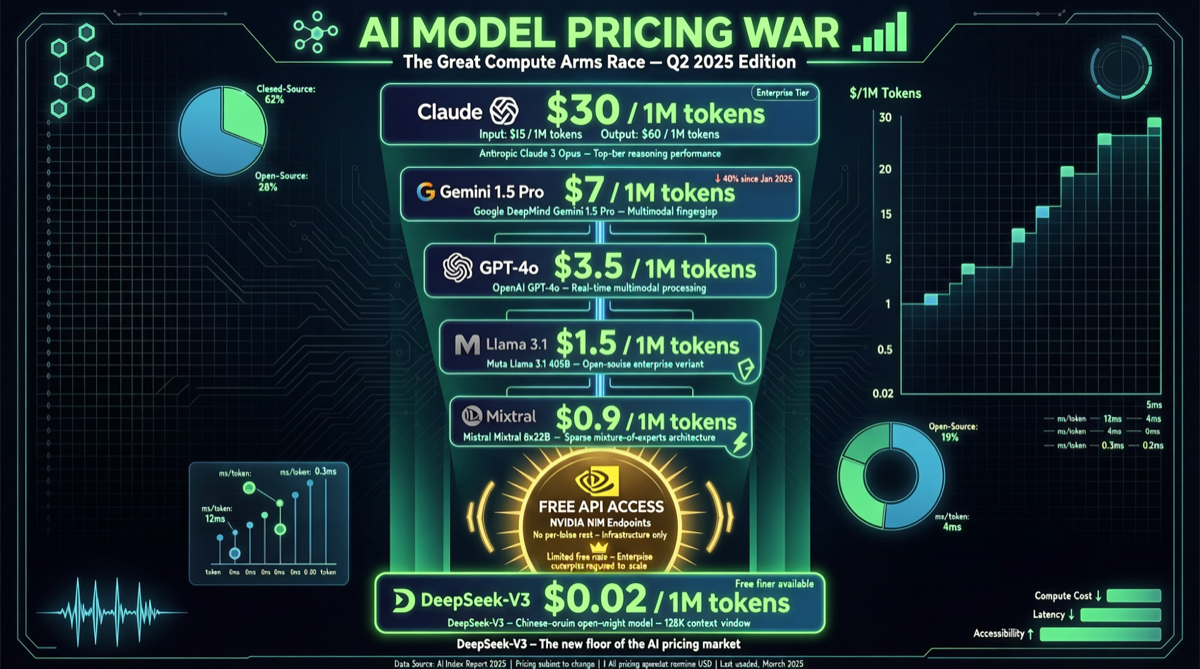

Cost Comparison Overview

| Model | Positioning | Relative Cost (vs Opus 4.7) | Performance Tier |
|---|---|---|---|
| Claude Opus 4.7 | Top-tier programming/engineering | 1.0x (baseline) | ★★★★★ |
| GPT-5.5 | Top-tier Agent capabilities | ~0.8x | ★★★★★ |
| Gemini 3.1 Ultra | 2M context multimodal | ~0.7x | ★★★★☆ |
| DeepSeek V4 | Strongest Chinese model | ~0.05x (1/20) | ★★★★☆ |
| DeepSeek V4-Flash | Volume/savings | ~0.02x | ★★★☆☆ |
| MiniMax M2.7 (NIM free) | Chinese MoE model | Free | ★★★★☆ |
| DeepSeek V3.2 (NIM free) | GPT-4 level | Free | ★★★★☆ |

Impact Analysis

Impact on Startups

A vivid comparison: if Uber used DeepSeek instead of Claude, its 2026 AI budget would last about 7 years instead of only 4 months.

This means:

- Startups can use top-tier model capabilities directly, without being constrained by API costs

- AI features are no longer a “cost center” and can be integrated into products boldly

- The competitive focus shifts from “can we use AI” to “how to use AI to differentiate”
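The budget comparison above follows directly from the 1/20 price ratio. As a rough check, the sketch below assumes that usage volume stays constant and that the budget scales linearly with per-token price. The function name is illustrative.

```python
def runway_months(baseline_months: float, relative_cost: float) -> float:
    """Months a fixed budget lasts when per-token cost is scaled by relative_cost."""
    if relative_cost <= 0:
        raise ValueError("relative_cost must be positive")
    return baseline_months / relative_cost


# 4 months of budget at Claude pricing, re-priced at 1/20 of the cost.
months = runway_months(baseline_months=4, relative_cost=1 / 20)
years = months / 12
print(f"{months:.0f} months, about {years:.1f} years")  # 80 months, about 6.7 years
```

Eighty months is a little under seven years, which matches the rounded figure in the comparison.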

Impact on Large Model Vendors

| Vendor | Pressure Faced | Possible Response |
|---|---|---|
| Anthropic | High Opus 4.7 pricing is hard to sustain | May introduce a lower-priced version or strengthen differentiation |
| OpenAI | GPT-5.5 faces a cost-effectiveness challenge | Strengthen the Agent ecosystem and toolchain |
| Google | Gemini needs to prove unique value | Highlight 2M context and multimodal advantages |
| Chinese models | Must further reduce costs or improve performance | Price war may intensify |

Developer Selection Guide

Model selection recommendations for 2026, based on the latest market dynamics:

| Scenario | Recommended | Reason |
|---|---|---|
| Writing code / fixing bugs | Claude Opus 4.7 | Programming capability is still the strongest |
| Multi-step reasoning / Agent | GPT-5.5 | Most mature Agent capabilities |
| Long document analysis | DeepSeek V4 (1M-token context) | Far better cost-effectiveness |
| Volume / daily tasks | DeepSeek V4-Flash or NIM free models | Cost approaching zero |
| Product prototype validation | NVIDIA NIM free API | Zero-cost idea validation |
| Voice / video generation | MiniMax M2.7 (NIM free) | Free + multimodal |
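The selection guide above is effectively a lookup table, so it can be sketched as a small routing function. The scenario keys and the fallback choice are hypothetical labels introduced for illustration. The model names and reasons are taken from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Scenario keys are hypothetical; models and reasons mirror the table.
ROUTES = {
    "coding": Route("Claude Opus 4.7", "strongest programming capability"),
    "agent": Route("GPT-5.5", "most mature Agent capabilities"),
    "long_document": Route("DeepSeek V4", "cost-effective long context"),
    "bulk": Route("DeepSeek V4-Flash", "cost approaching zero"),
    "prototype": Route("NVIDIA NIM free API", "zero-cost idea validation"),
    "voice_video": Route("MiniMax M2.7 (NIM free)", "free and multimodal"),
}

# Assumption: unrecognized scenarios fall back to the cheapest paid tier.
DEFAULT_SCENARIO = "bulk"


def pick_model(scenario: str) -> Route:
    """Return the recommended route for a scenario, or the default route."""
    return ROUTES.get(scenario, ROUTES[DEFAULT_SCENARIO])


print(pick_model("coding").model)
print(pick_model("something_else").model)
```

A production router would more likely classify each request automatically and track quality and latency per model. A static table like this one is only a starting point.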

NVIDIA NIM Strategic Intent

NVIDIA's offer of free Chinese model APIs may look charitable, but it serves other goals:

- Promoting the NIM platform: getting more developers accustomed to NVIDIA inference infrastructure

- Ecosystem lock-in: once developers build applications on NIM, migration costs are high

- GPU sales: the free API compute runs on NVIDIA GPUs, and users ultimately still need to buy hardware

- Geopolitical balance: finding a position that offends neither side in the US-China AI competition

Landscape Assessment

The 2026 AI model market is experiencing a “smartphone moment”:

- Before 2007, smartphones were luxury items

- After 2007, smartphones became infrastructure

- AI models are following the same path, from “expensive per-token service” to “freely available resource”

The winner will not be “the company with the strongest model” but “the company that best uses model combinations.”

Action Recommendations

- Individual developers: Apply for a free NVIDIA NIM API key now and prototype AI apps at zero cost

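- Startups: Use DeepSeek V4-Flash for 80% of daily tasks and reserve Opus/GPT for critical scenarios. At the relative prices listed earlier (0.02x vs 1.0x), and assuming tasks are similar in token volume, this cuts costs by roughly 80%; reaching 90%+ requires moving about 92% of traffic to the cheaper model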

- Large enterprises: Build a multi-model routing layer (Model Router) that automatically selects the best model for each task. This is the core competency of 2026

- Investors: Watch the “model routing/orchestration” track. When models become commodities, orchestration capability is the real moat
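The savings in the startup recommendation can be checked against the relative costs in the cost comparison table. The sketch below assumes that traffic share is measured in tokens, that V4-Flash costs 0.02x and the premium model 1.0x, and that nothing else (retries, longer prompts, quality regressions) changes. The function names are illustrative.

```python
def blended_cost(cheap_share: float, cheap_cost: float = 0.02,
                 premium_cost: float = 1.0) -> float:
    """Average relative cost when cheap_share of traffic uses the cheap model."""
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return cheap_share * cheap_cost + (1.0 - cheap_share) * premium_cost


def share_needed(target_cost: float, cheap_cost: float = 0.02,
                 premium_cost: float = 1.0) -> float:
    """Cheap-model traffic share needed to reach a target blended cost."""
    return (premium_cost - target_cost) / (premium_cost - cheap_cost)


for share in (0.80, 0.92, 0.95):
    saving = 1.0 - blended_cost(share)
    print(f"{share:.0%} on V4-Flash -> {saving:.0%} saved")

print(f"share needed for a 90% saving: {share_needed(0.10):.1%}")
```

Under these assumptions, the premium share dominates the bill: an 80/20 split saves a little under 80%, and a 90% saving needs roughly 92% of traffic on the cheaper model.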

Conclusion: The AI model price war has only just begun. When the best models become nearly free, the real competition will shift to “who can build the best products with these models.”