Two Important Signals

This week brought two tightly connected events in AI hardware:

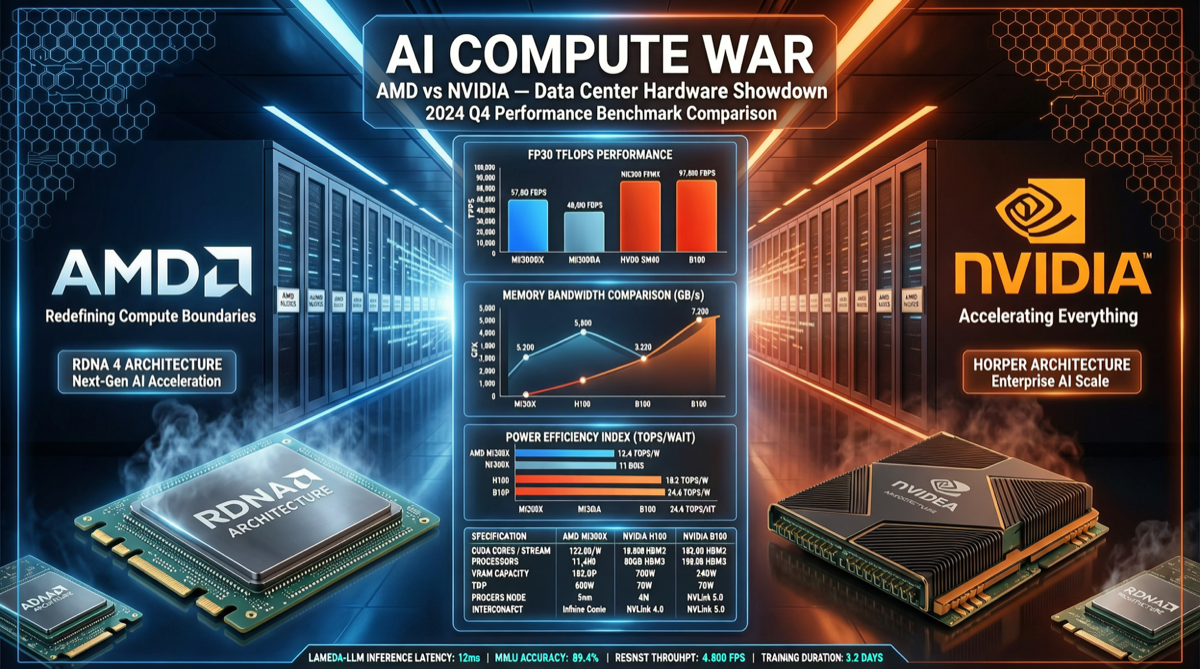

- AMD announces Advancing AI 2026, scheduled for July 23 in San Francisco. CEO Lisa Su says “we’re probably two years into a 10-year AI cycle”.

- SemiAnalysis publishes DeepSeek V4 Pro benchmarks: per-GPU throughput varies by more than 40x across GPUs at identical interactivity.

Together, they point to one trend: the AI compute race is shifting from “having it” to “using it well”.

DeepSeek V4 Pro GPU Benchmarks

| GPU Architecture | Model | Throughput (tok/s/GPU) | Relative to B300 | Use Case |
|---|---|---|---|---|
| Blackwell | B300 | 8,075 | 1.0x | Large-scale production |
| AMD CDNA4 | MI355X | 6.99 | 0.0009x | Cost-effective inference |
| Hopper | H200 | 186 | 0.023x | Existing clusters |
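
The relative figures are each GPU's throughput divided by the B300's. A short sketch that recomputes them from the three throughput numbers in the table, along with the B300-to-H200 spread:

```python
# Per-GPU throughput (tok/s/GPU) as listed in the benchmark table.
THROUGHPUT = {
    "B300": 8075.0,
    "MI355X": 6.99,
    "H200": 186.0,
}


def relative_to(baseline: str, data: dict[str, float]) -> dict[str, float]:
    """Return each GPU's throughput as a fraction of the baseline GPU's."""
    base = data[baseline]
    return {gpu: tps / base for gpu, tps in data.items()}


def spread(data: dict[str, float], a: str, b: str) -> float:
    """How many times more throughput GPU `a` delivers than GPU `b`."""
    return data[a] / data[b]


if __name__ == "__main__":
    for gpu, rel in relative_to("B300", THROUGHPUT).items():
        print(f"{gpu:>7}: {rel:.4f}x")
    print(f"B300 vs H200: {spread(THROUGHPUT, 'B300', 'H200'):.1f}x")
```

The B300/H200 ratio comes out at roughly 43x, in line with the “40x+” spread reported in the benchmark.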

Key Findings

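The Blackwell B300’s advantage is more than a headline number. At 8,075 tok/s/GPU:

- A single card can serve a large number of concurrent users; how many depends on the per-user token rate

- There is more headroom to keep per-user latency low in real-time applications

- TCO at scale is significantly better, as long as the B300’s price premium is smaller than its throughput lead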
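
Two back-of-the-envelope relationships sit behind these points: concurrent users per GPU ≈ per-GPU throughput ÷ per-user token rate, and cost per million tokens = hourly GPU cost ÷ tokens per hour × 1,000,000. The sketch below assumes a hypothetical interactivity of 20 tok/s per user and a hypothetical $10 per GPU-hour; neither number comes from the SemiAnalysis benchmark.

```python
# Back-of-the-envelope serving math. PER_USER_TOK_S and PRICE_PER_HOUR
# are hypothetical placeholders, not figures from the benchmark.
PER_USER_TOK_S = 20.0   # assumed interactivity (tok/s per user)
PRICE_PER_HOUR = 10.0   # assumed all-in GPU cost (USD per GPU-hour)


def concurrent_users(gpu_tok_s: float, per_user_tok_s: float) -> int:
    """Streams one GPU can sustain at the given per-user token rate."""
    return int(gpu_tok_s // per_user_tok_s)


def cost_per_million_tokens(price_per_hour: float, gpu_tok_s: float) -> float:
    """USD per 1M output tokens, assuming the GPU is fully utilized."""
    tokens_per_hour = gpu_tok_s * 3600
    return price_per_hour / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    b300 = 8075.0  # tok/s/GPU from the table
    print(concurrent_users(b300, PER_USER_TOK_S))
    print(round(cost_per_million_tokens(PRICE_PER_HOUR, b300), 3))
```

Because 8,075 ÷ 1,000 ≈ 8, one B300 covers a thousand or more streams only when each user receives about 8 tok/s or less; at higher interactivity the count drops proportionally.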

AMD MI355X’s positioning needs reassessment. At the same interactivity, its 6.99 tok/s/GPU is dramatically lower than the B300’s figure:

- AMD’s strategy may target cost per unit of compute rather than absolute performance

- The MI355X may be better suited to latency-insensitive batch processing

- The MI400, expected at Advancing AI 2026, will be crucial
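
A cost-per-compute strategy only works if price falls at least as fast as throughput. Assuming cost per token scales as hourly price ÷ throughput, the sketch below computes the hourly price at which a slower GPU matches the B300’s cost per token; the $10 per GPU-hour B300 price is a hypothetical placeholder.

```python
# Break-even pricing: the hourly price at which a slower GPU matches a
# faster GPU's cost per token. B300_PRICE is a hypothetical placeholder.
B300_PRICE = 10.0  # assumed USD per GPU-hour


def break_even_price(ref_price: float, ref_tok_s: float, tok_s: float) -> float:
    """Hourly price giving the same USD per token as the reference GPU.

    Cost per token is price / throughput, so equal cost per token
    requires price to scale linearly with throughput.
    """
    return ref_price * tok_s / ref_tok_s


if __name__ == "__main__":
    for name, tok_s in {"MI355X": 6.99, "H200": 186.0}.items():
        p = break_even_price(B300_PRICE, 8075.0, tok_s)
        print(f"{name}: ${p:.4f}/hour to match B300 cost per token")
```

With the throughput figures as listed, the MI355X would have to cost under 0.1% of the B300’s hourly price to match it on cost per token.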

AMD Advancing AI 2026 Preview

- When: July 23, 2026

- Where: San Francisco

- Expected: MI400 series chips

- CEO statement: Lisa Su believes the industry is probably about two years into a 10-year AI cycle

Market Landscape

| Vendor | Current Flagship | Next Gen | Software Ecosystem | Market Trend |
|---|---|---|---|---|
| NVIDIA | B200/GB200 | B300/Rubin | CUDA moat | ⬆️ |
| AMD | MI300X/MI355X | MI400? | ROCm catching up | ➡️ |
| Intel | Gaudi 3 | Falcon Shores | oneAPI | ➡️ |
| Huawei | Ascend 910C | Ascend 910D | CANN | ⬆️ (China) |

Developer Impact

Compute Procurement

- For peak performance: Blackwell B300 leads, but price and supply are barriers

- For cost-efficiency: AMD MI355X may offer a cost advantage in batch processing

- If you already run H200s: keep using them and evaluate the next generation later
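
Procurement usually starts from a target aggregate throughput. A minimal fleet-sizing sketch using the benchmark figures, assuming a hypothetical target of 1M tok/s and perfectly linear scaling across GPUs (real deployments lose some efficiency to parallelism and scheduling):

```python
import math

# Per-GPU throughput (tok/s/GPU) from the benchmark table.
THROUGHPUT = {"B300": 8075.0, "MI355X": 6.99, "H200": 186.0}


def gpus_needed(target_tok_s: float, per_gpu_tok_s: float) -> int:
    """Smallest GPU count whose combined throughput meets the target.

    Assumes throughput scales linearly with GPU count, which is optimistic.
    """
    return math.ceil(target_tok_s / per_gpu_tok_s)


if __name__ == "__main__":
    target = 1_000_000  # hypothetical fleet-wide target, tok/s
    for gpu, tps in THROUGHPUT.items():
        print(f"{gpu:>7}: {gpus_needed(target, tps):,} GPUs")
```

Under this target, the H200 fleet needs about 43 times as many GPUs as the B300 fleet, before accounting for power, networking, and floor space.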

Deployment Strategy

DeepSeek V4’s MoE architecture activates only 37B parameters per token, which lowers per-token compute (although all experts must still fit in memory):

- Small-batch serving: H200 is sufficient

- Large-scale serving: B300-level throughput is needed

- Local deployment: AMD Ryzen AI Max+ 395 (128GB unified memory, June release) can run a 200B MoE with quantization
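
MoE helps because per-token compute tracks the active parameters (roughly 2 FLOPs per active parameter per token), while weight memory still tracks the total parameter count. The sketch below uses the 37B active figure from the text and checks whether a 200B-parameter model’s weights fit in 128 GB at different precisions; it counts weights only (no KV cache or runtime overhead) and uses decimal gigabytes.

```python
# MoE sizing sketch: compute follows active params, memory follows total
# params. Weights only: KV cache, activations, and runtime overhead ignored.


def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs per token (about 2 per active param)."""
    return 2 * active_params


def weight_gb(total_params: float, bits_per_param: int) -> float:
    """Weight storage in decimal gigabytes."""
    return total_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    print(f"{forward_flops_per_token(37e9):.1e} FLOPs/token")  # 37B active
    for bits in (16, 8, 4):
        gb = weight_gb(200e9, bits)
        verdict = "fits" if gb < 128 else "does not fit"
        print(f"200B params @ {bits}-bit: {gb:.0f} GB ({verdict} in 128 GB)")
```

At 4 bits the weights alone take about 100 GB, leaving limited room in 128 GB for the KV cache; at 8 or 16 bits a 200B model does not fit at all.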

Investment & Market Judgment

Short-term (3-6 months)

- NVIDIA Blackwell’s lead is unlikely to be challenged

- AMD needs the MI400 to deliver a dramatic performance improvement

- Open-source and low-cost models are accelerating the diversification of inference hardware

Mid-term (6-18 months)

- The AI compute market is moving from an “NVIDIA monopoly” to “multi-vendor competition”

- Chinese chips (Ascend) are gaining domestic market share

- Consumer AI PCs may become new compute entry points

For Startups

- Don’t lock into a single hardware vendor: model diversity (MoE architectures, quantization, distillation) means different hardware suits different use cases

- Focus on inference cost, not training cost: for most applications, inference consumes far more compute than training

- Consider model-hardware co-optimization: a deployment tuned to the target hardware may beat a model that is “optimal” in general
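
One common way to sanity-check the inference-versus-training point uses the standard transformer approximations of about 6·N·D FLOPs to train on D tokens and about 2·N FLOPs per generated token, where N is the (active) parameter count. Under those approximations, serving overtakes training once served tokens exceed 3·D. The token counts below are hypothetical.

```python
# Training vs. inference compute using the usual approximations:
#   training  ~ 6 * N * D  FLOPs  (N params, D training tokens)
#   inference ~ 2 * N      FLOPs per generated token
# Both are rough estimates; the token counts below are hypothetical.


def training_flops(n_params: float, train_tokens: float) -> float:
    return 6 * n_params * train_tokens


def inference_flops(n_params: float, served_tokens: float) -> float:
    return 2 * n_params * served_tokens


def breakeven_served_tokens(train_tokens: float) -> float:
    """Served tokens at which cumulative inference equals training compute."""
    return 3 * train_tokens  # 6*N*D == 2*N*T  =>  T == 3*D


if __name__ == "__main__":
    n = 37e9        # active params, the MoE figure cited above
    d = 1e12        # hypothetical training tokens
    served = 5e12   # hypothetical lifetime tokens served
    print(breakeven_served_tokens(d))
    print(inference_flops(n, served) > training_flops(n, d))
```

Because N cancels out, the break-even point depends only on how many tokens the model is trained on versus how many it serves over its lifetime.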

AMD Advancing AI 2026 will be a key indicator of the compute landscape for H2 2026.