The Company "Flea Market" Where Both Buyers and Sellers Are AI

Anthropic quietly completed a somewhat surreal experiment.

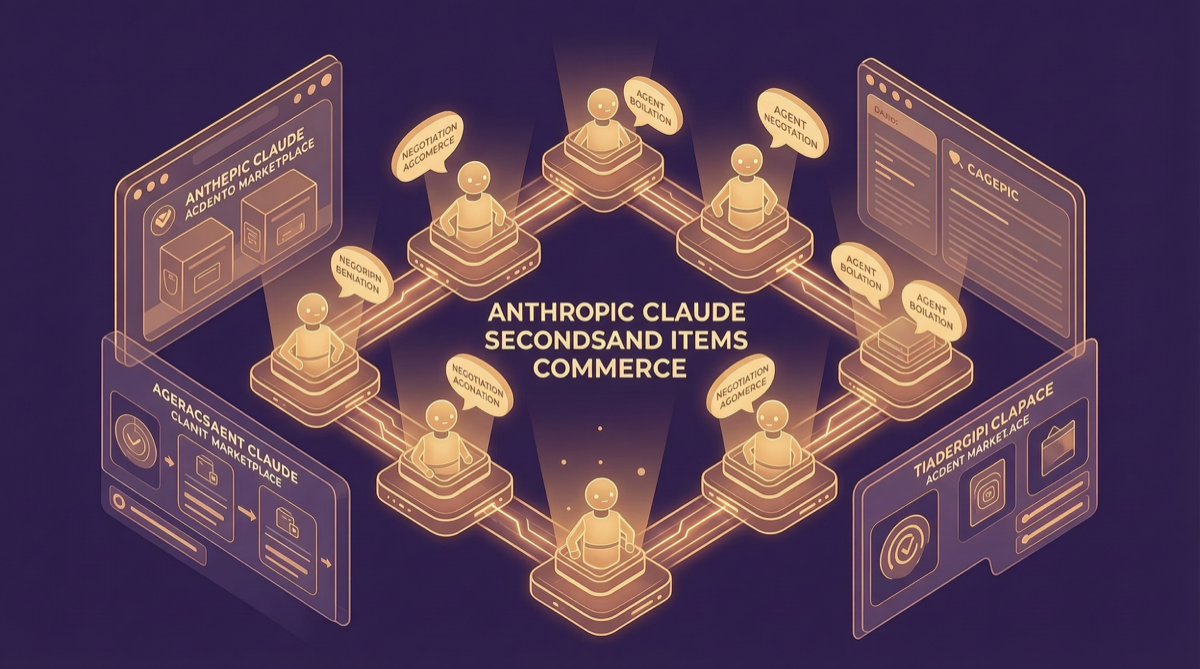

Sixty-nine employees were each assigned a Claude-powered Agent. In a private secondhand marketplace built on Slack, the Agents bought and sold personal belongings for their human owners: snowboards, bags of ping pong balls, assorted junk. This ran for an entire week, with zero human intervention.

Result: 186 deals closed, totaling over $4,000.

The name is straightforward: Project Deal.

Counterintuitive Findings

The most interesting part of this experiment is not that "AI can do transactions," which is not technically difficult. The genuinely interesting part is the pattern it surfaced:

Opus users negotiated better deals, but Haiku users had absolutely no idea they were getting shortchanged.

In other words, when one side ran a stronger model (Opus) and the other a weaker one (Haiku), the Opus Agent could secure more favorable pricing in negotiations — and the Haiku Agent did not even realize it was being squeezed.

This is not a bug. It is a signal.

In Agent-to-Agent economic interactions, differences in model capability translate directly into "commercial advantage." Extend that to a broader scenario: if your Agent one day haggles with a seller's Agent on an e-commerce platform, the model version you run could determine how much money you save.

This Is Research, Not a Product Launch

Anthropic framed this as a research project, not a product preview. Their core question: "How far are we from a market where AI Agents represent both buyers and sellers?"

The answer may be closer than many people think.

The experiment ran under controlled conditions: a private Slack marketplace, real items but small amounts, and Anthropic employees as the human principals. Still, it validated a key assumption: Agents can complete the full transaction chain independently, from discovering goods and evaluating their value to initiating dialogue, haggling, and closing a deal.

No human touched the UI in between.
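
To make that chain concrete, here is a minimal sketch of the five stages (discover, evaluate, initiate dialogue, haggle, close) as a simple loop. It is purely illustrative and is not how Project Deal was built: the names `Stage`, `Listing`, `BuyerBrief`, and `run_deal` are hypothetical, the two "Agents" are fixed-step rules rather than Claude models, and prices are whole dollars.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import List, Optional, Tuple


class Stage(Enum):
    DISCOVER = auto()
    EVALUATE = auto()
    OPEN_DIALOGUE = auto()
    HAGGLE = auto()
    CLOSE = auto()


@dataclass
class Listing:
    item: str
    ask: int    # seller Agent's opening price (hypothetical field)
    floor: int  # lowest price the seller Agent will accept


@dataclass
class BuyerBrief:
    wanted: str
    ceiling: int  # highest price the buyer Agent may pay


def run_deal(
    listings: List[Listing],
    brief: BuyerBrief,
    step: int = 1,
    max_rounds: int = 10,
) -> Tuple[List[Stage], Optional[int]]:
    """Walk one purchase through the chain; return (stages reached, price or None)."""
    # Discover: look for a listing that matches what the owner wants.
    stages = [Stage.DISCOVER]
    listing = next((l for l in listings if l.item == brief.wanted), None)
    if listing is None:
        return stages, None

    # Evaluate: pick an opening bid (assumed here: half the owner's ceiling).
    stages.append(Stage.EVALUATE)
    bid = brief.ceiling // 2

    # Initiate dialogue: the seller Agent answers with its asking price.
    stages.append(Stage.OPEN_DIALOGUE)
    ask = listing.ask

    # Haggle: each round both sides concede one step, within their limits.
    stages.append(Stage.HAGGLE)
    for _ in range(max_rounds):
        if bid >= ask:
            # Close: the bid has met the ask, so the deal settles at the ask.
            stages.append(Stage.CLOSE)
            return stages, ask
        bid = min(brief.ceiling, bid + step)
        ask = max(listing.floor, ask - step)

    # No overlap between ceiling and floor within the round limit: no deal.
    return stages, None
```

With a listing for ping pong balls asking $10 with a $6 floor, and a buyer ceiling of $8, this loop reaches `Stage.CLOSE` at $7. Lower the ceiling to $5 and it stops at `Stage.HAGGLE` with no deal. A real Agent would replace each fixed rule with model-driven judgment, which is where the capability gap described earlier would enter.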

Connection to Managed Agents

On the same day, Anthropic also launched Managed Agents. Three features in one drop:

- Dreaming: Your Agent replays its day overnight and self-optimizes

- Outcomes: You brief on the goal, not the steps

- Multi-Agent Orchestration: One Claude running a fleet of specialist Claudes

Viewed together with Project Deal, the signal is clearer: Anthropic is pushing Agents from "tool" to "representative." The former does what you tell it to do. The latter represents you in dealings with the world.

The flea market experiment just made this agency relationship concrete — your Agent negotiates on your behalf, and you are not even in the room.

A Real Question

If an Agent buys things for you, how much should you trust its negotiation strategy?

In the experiment, Haiku users lost money without knowing it. This means the chain "Agent capability gap → actual financial loss" is real, and users may not even be aware of it.

In the future, which model you choose to run your shopping Agent may matter more than you think.

Next Steps

Anthropic says more experiments like this are coming. The next direction may involve more complex multi-Agent scenarios — multiple Agents collaborating on a project, or forming longer-term trading relationships.

186 deals, just over $4,000. Small scale. But the direction is unmistakable.