A post on X with 319 likes and 45 retweets captures where local AI stands in 2026: five tools combined into one stack that costs nothing to run on existing hardware and, by the post’s account, outperforms the paid solutions most companies use.

What Happened

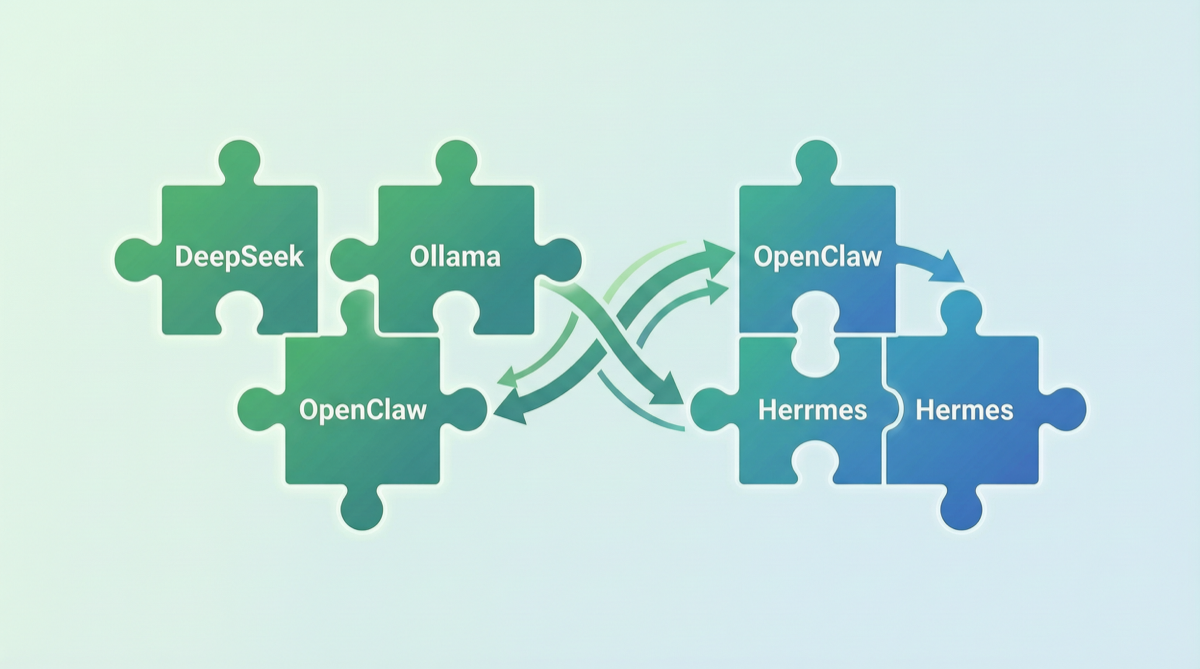

This weekend someone assembled a local AI suite using:

- DeepSeek V4 Flash: the language model (284B parameters, MoE; API pricing roughly 1/166 of GPT-5.5)

- Ollama: local model management (download and run a model with one command)

- OpenClaw: always-on local agent daemon (automatic task execution, file operations, web browsing)

- Hermes Agent: agent orchestration (model routing, skill management, workflow automation)

- Claude Code: coding assistant CLI (can work alongside local models)

The core logic: open-source models provide the intelligence, open-source frameworks provide the capabilities, and local hardware provides the compute. On hardware you already own, the running cost is zero.

Component Breakdown

DeepSeek V4 Flash: The Cost-Performance King

| Spec | V4-Flash | V4-Pro |
|---|---|---|
| Total parameters | 284B | 1.6T |
| Active parameters | ~28B | ~37B |
| Context window | 128K | 1M |
| Architecture | MoE | MoE |
| License | Apache 2.0 | Apache 2.0 |
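Because both models are mixture-of-experts, only a fraction of the weights is active for any given token. That fraction can be read straight off the table. The small sketch below does the arithmetic with `awk`; the function name `active_ratio` is illustrative and not part of any tool mentioned here.

```shell
# Percentage of parameters active per token, using the figures from the table.
# Arguments: active parameters and total parameters, both in billions.
active_ratio() {
  awk -v active="$1" -v total="$2" 'BEGIN { printf "%.1f\n", 100 * active / total }'
}

active_ratio 28 284    # V4-Flash: ~28B active of 284B  -> 9.9
active_ratio 37 1600   # V4-Pro:   ~37B active of 1.6T  -> 2.3
```

Roughly 10% of V4-Flash and about 2% of V4-Pro is active per token, which is why the compute needed per token is far lower than the total parameter counts suggest.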

Ollama: Model as a Service

ollama run deepseek-v4-flash

ollama run qwen3.6:27b &

OpenClaw: 24/7 Local Agent

- Runs in background, listens to task queue

- Auto-executes file ops, code modifications, web browsing

- Supports scheduled tasks

- v4.24 sets DeepSeek V4 Flash as default for new users
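The source does not describe how OpenClaw implements its queue, so the following is only a conceptual sketch of the "background process that drains a task queue" pattern. It assumes a hypothetical directory-based queue in which each `*.task` file holds one task description; none of these names come from OpenClaw.

```shell
# Conceptual sketch only: a directory-based task queue, NOT OpenClaw's real design.
# Each *.task file in the queue directory holds one task description.
process_queue() {
  queue_dir=$1
  done_dir=$2
  mkdir -p "$done_dir"
  for task in "$queue_dir"/*.task; do
    [ -e "$task" ] || continue          # glob matched nothing: queue is empty
    echo "running: $(cat "$task")"      # a real agent would act on the task here
    mv "$task" "$done_dir"/             # move it so it is not picked up again
  done
}

# A daemon would call this in a loop, for example:
#   while true; do process_queue "$HOME/queue" "$HOME/done"; sleep 5; done
```

Moving each file out of the queue directory after handling it is what keeps a long-running loop from repeating work.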

Hermes Agent: The Orchestration Layer

- Model routing: auto-selects model by task complexity

- Skill management: auto-discovers and loads tool skills

- Workflow automation: combines tools for complex tasks

- LM Studio integration: native support added May 1
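Hermes Agent's actual routing logic is not documented in the source. To illustrate what "routing by task complexity" can mean, here is a deliberately naive sketch: a hypothetical `pick_model` function that sends long or refactoring-style prompts to the larger model and everything else to the smaller one. The 50-word threshold, the keyword list, and the choice of which model counts as "heavy" are all arbitrary assumptions.

```shell
# Naive illustration of complexity-based routing; not Hermes Agent's algorithm.
pick_model() {
  prompt=$1
  # Count words with awk (avoids the padded output of `wc -w` on some systems).
  words=$(printf '%s\n' "$prompt" | awk '{ n += NF } END { print n + 0 }')
  if [ "$words" -gt 50 ] || printf '%s\n' "$prompt" | grep -qiE 'refactor|architecture|migrate'; then
    echo "deepseek-v4-flash"   # assumed "heavy" model
  else
    echo "qwen3.6:27b"         # assumed "light" model
  fi
}

pick_model "rename this variable"                                 # prints qwen3.6:27b
pick_model "refactor the payment module into smaller services"   # prints deepseek-v4-flash
```

A real router would likely use richer signals than word count and keywords, but the shape is the same: classify the request, then map the class to a model name.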

Setup Steps

# Step 1: Install Ollama + models

curl -fsSL https://ollama.com/install.sh | sh

ollama pull deepseek-v4-flash

ollama pull qwen3.6:27b
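Before moving on to Step 2, it can be worth confirming that Ollama is actually serving the pulled models. Ollama exposes a local HTTP API, by default on port 11434: `/api/tags` lists installed models and `/api/generate` runs a prompt. The sketch below assumes that default address and the `deepseek-v4-flash` tag used in this article; the helper name `build_payload` is illustrative.

```shell
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"   # Ollama's default address

# Build a non-streaming /api/generate request body.
# No JSON escaping is done, so keep the prompt free of quotes and backslashes.
build_payload() {
  printf '{"model":"%s","prompt":"%s","stream":false}' "$1" "$2"
}

if command -v curl >/dev/null 2>&1 &&
   curl -sf --max-time 2 "$OLLAMA_URL/api/tags" >/dev/null; then
  # Server is up: send one short prompt to the model pulled in Step 1.
  curl -s "$OLLAMA_URL/api/generate" \
    -d "$(build_payload deepseek-v4-flash 'Say hello in five words.')"
  echo
else
  echo "Ollama is not reachable at $OLLAMA_URL" >&2
fi
```

If the server answers, the JSON reply carries the generated text in its `response` field.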

# Step 2: Deploy OpenClaw

npm install -g openclaw

openclaw config set model deepseek-v4-flash

openclaw daemon start

# Step 3: Configure Hermes Agent

hermes init

hermes connect ollama --auto-discover

hermes config set routing.strategy "auto-complexity"

Cost Comparison

| Solution | Monthly Cost | Coverage | Data Privacy |
|---|---|---|---|
| GPT-5.5 API | $50-500+ | Full | ❌ Data leaves region |
| Claude Opus 4.7 API | $80-800+ | Best coding | ❌ Data leaves region |
| This stack (local) | $0 (existing hardware) | 90% of scenarios | ✅ Fully local |
| This stack + cloud fallback | $10-50 | 100% of scenarios | Mostly local |
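The "cloud fallback" row implies a simple policy: try the local model first and pay for a cloud call only when the local one fails. A minimal sketch of that policy follows. The functions `ask_local` and `ask_cloud` are hypothetical stand-ins, not commands from any of the tools above.

```shell
# Run the primary command; if it exits non-zero, run the fallback with the same arguments.
with_fallback() {
  primary=$1
  fallback=$2
  shift 2
  "$primary" "$@" 2>/dev/null || "$fallback" "$@"
}

# Hypothetical stand-ins: replace with real calls to a local model and a cloud API.
ask_local() { return 1; }                     # simulates the local model being unavailable
ask_cloud() { echo "cloud answer to: $1"; }

with_fallback ask_local ask_cloud "summarize this log"   # prints: cloud answer to: summarize this log
```

Because the fallback only runs on failure, cloud spend scales with how often the local model cannot answer, not with total request volume.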

Limitations

- Hardware threshold: V4-Flash needs at least 24GB VRAM (RTX 3090/4090 level)

- Coding quality gap: Still not quite at Claude Opus 4.7 level for complex refactoring

- OpenClaw learning curve: System prompt management takes time

- Not a silver bullet: Good enough for most scenarios, but extreme performance needs still require cloud APIs
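The hardware threshold is easier to judge with a rough weight-size estimate: parameters times bits per weight, divided by eight, gives bytes. The sketch below applies that to the V4-Flash parameter counts quoted earlier. The quantization levels are assumptions chosen for illustration, and the estimate ignores KV cache and runtime overhead.

```shell
# Approximate weight size in GB: parameters (in billions) * bits per weight / 8.
weights_gb() {
  awk -v params_b="$1" -v bits="$2" 'BEGIN { printf "%.0f\n", params_b * bits / 8 }'
}

weights_gb 284 4    # all V4-Flash weights at 4-bit    -> 142
weights_gb 28 4     # active weights only at 4-bit     -> 14
weights_gb 284 16   # all V4-Flash weights at 16-bit   -> 568
```

At 4-bit, the full 284B weights come to roughly 142 GB, while the ~28B active per token come to roughly 14 GB. A 24GB card therefore cannot hold the whole model on its own. The quoted threshold presumably relies on quantization with most experts kept in system RAM or on disk, which is an inference here rather than something the source states.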

Action Advice

| Your Situation | Recommendation |
|---|---|
| Have an RTX 3090/4090 | Build now; DeepSeek V4 Flash + Ollama has the lowest startup cost |
| Tight budget but need AI | Local stack plus occasional cloud fallback, under $20/month |
| Enterprise, data-sensitive | Local stack keeps data on-premises, which helps meet data residency requirements |
| Need top coding quality | Keep the Claude API as a supplement; use the local stack for daily tasks |