Verdict

DeepSeek V4 is the open-source model closest to the frontier: on coding and reasoning benchmarks it comes within 0.2 points of GPT-5.4 / Opus 4.5+ level, at 1/7 to 1/9 the API price. Its positioning is clear: deliver “good enough” frontier capability at minimal cost rather than chase SOTA.

Best for budget-conscious teams doing prototyping and batch inference. Not recommended for scenarios that demand peak performance: it trails GPT-5.5 and Opus 4.7 by a generation gap of roughly 4-5 months.

Test Dimensions

Architecture & Scale

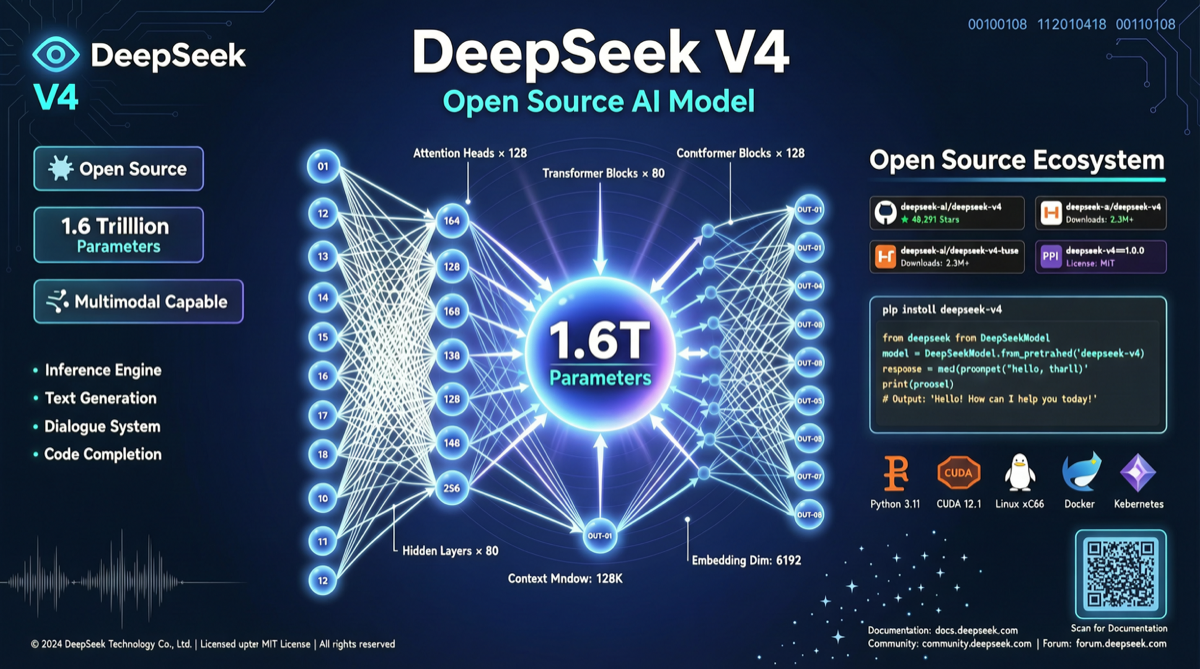

DeepSeek V4 uses a Mixture-of-Experts (MoE) architecture with 1.6 trillion total parameters, a 1-million-token context window, and support for 50+ languages. It is the first large-scale model trained almost entirely on Huawei Ascend chips, demonstrating that China can produce competitive frontier models under compute constraints.
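To make the MoE idea concrete, here is a minimal toy sketch of top-k expert routing. The expert count, the `TOP_K` value, and the choice to renormalize weights over only the selected experts are illustrative assumptions, not DeepSeek V4's actual configuration or router.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized over the selected experts only, which is one
    common variant of top-k gating (an assumption for this sketch).
    """
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))


# Hypothetical toy configuration, NOT the real model's settings.
NUM_EXPERTS = 8
TOP_K = 2

random.seed(0)
gate_scores = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_top_k(gate_scores, TOP_K)
active_fraction = TOP_K / NUM_EXPERTS  # share of experts that run for this token

print("selected experts and weights:", selected)
print("fraction of experts active per token:", active_fraction)
```

In this toy setup only 2 of 8 experts run for each token, which is the mechanism that lets a model with very large total parameter count spend far less compute per token than a dense model of the same size.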

DeepSeek V4 Pro further enhances agentic coding capabilities, scoring 70.98 on Chinese domestic evaluations, surpassing all other domestic open-source models.

Benchmark Results

| Benchmark | DeepSeek V4 | GPT-5.5 | Claude Opus 4.7 | Gemini 2.5 Pro |
|---|---|---|---|---|
| SWE-bench Pro | ~58% | 58.6% | 64.3% | ~55% |
| Terminal-Bench 2.0 | ~75% | 82.7% | ~70% | ~72% |
| AIME 2025 | ~90% | ~95% | ~93% | ~92% |
| MRCR @ 1M | ~50% | 74% | 32.2% | ~60% |

On coding tasks, V4 is in the same tier as GPT-5.4 / Opus 4.5+, but still visibly behind GPT-5.5 and Opus 4.7. Math reasoning is solid, near the top tier. Long-context retrieval is usable but less reliable than GPT-5.5.

Real-World Usage

Community feedback:

- Chinese language excellence: As a domestic model, V4 clearly outperforms most international competitors in Chinese understanding and generation quality

- High hallucination rate: Evaluations report that V4’s hallucination rate reaches 86% on factual QA, so a verification layer is recommended in production

- Inference speed: MoE architecture means activated parameters are far smaller than total, giving better latency than dense models at similar scale

- Deployment threshold: Open weights can be deployed locally, but the full 1.6T parameter model requires a multi-GPU cluster; distilled smaller versions are more suitable for single machines
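The deployment point can be checked with back-of-the-envelope arithmetic. The sketch below estimates the memory needed just to hold 1.6T parameters at a few common precisions. The 80 GB per-accelerator figure is a hypothetical value chosen for illustration, and the estimate ignores KV cache, activations, and runtime overhead, so real requirements would be higher.

```python
import math

TOTAL_PARAMS = 1_600_000_000_000  # 1.6T total parameters, from the article
DEVICE_MEMORY_GB = 80             # hypothetical accelerator memory (assumption)


def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to store the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def min_devices(num_params, bytes_per_param, device_memory_gb):
    """Lower bound on device count, counting weights only."""
    return math.ceil(weight_memory_gb(num_params, bytes_per_param) / device_memory_gb)


# Bytes per parameter for a few common storage precisions.
PRECISIONS = {"16-bit": 2, "8-bit": 1, "4-bit": 0.5}

for name, bytes_per_param in PRECISIONS.items():
    gb = weight_memory_gb(TOTAL_PARAMS, bytes_per_param)
    devices = min_devices(TOTAL_PARAMS, bytes_per_param, DEVICE_MEMORY_GB)
    print(f"{name}: {gb:,.0f} GB of weights, at least {devices} devices")
```

Even at 4-bit precision the weights alone come to roughly 800 GB under these assumptions, which is why the full model is a cluster workload and smaller distilled variants are the realistic option for a single machine.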

Pricing

DeepSeek V4’s API output price is $3.48/MTok, versus $25/MTok for Opus 4.7 and $30/MTok for GPT-5.5. This 7-9x price gap is its biggest differentiator. A full Artificial Analysis Index run on DeepSeek V4 Pro costs only $1,071, one-fifth of what the same run costs on Opus 4.7.
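The price gap follows directly from the quoted per-token prices. A minimal sketch of the arithmetic, assuming all three figures are output-token prices in USD per million tokens; the 500M-token batch job is a hypothetical workload used only to illustrate scale:

```python
# Output prices in USD per million tokens, as quoted in the article.
PRICES_PER_MTOK = {
    "DeepSeek V4": 3.48,
    "Claude Opus 4.7": 25.00,
    "GPT-5.5": 30.00,
}


def price_ratio(model, baseline="DeepSeek V4"):
    """How many times more expensive `model` is than `baseline`."""
    return PRICES_PER_MTOK[model] / PRICES_PER_MTOK[baseline]


def output_cost(model, output_tokens):
    """Cost in USD of generating `output_tokens` tokens with `model`."""
    return PRICES_PER_MTOK[model] * output_tokens / 1_000_000


HYPOTHETICAL_JOB_TOKENS = 500_000_000  # illustrative batch job size (assumption)

for model in PRICES_PER_MTOK:
    ratio = price_ratio(model)
    cost = output_cost(model, HYPOTHETICAL_JOB_TOKENS)
    print(f"{model}: {ratio:.1f}x baseline, ${cost:,.0f} for the job")
```

The ratios come out to about 7.2x for Opus 4.7 and 8.6x for GPT-5.5, matching the 7-9x range quoted here.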

Recommendations

China-based teams: Prioritize. Strong Chinese, flexible deployment, extremely low cost, and unaffected by US export controls.

Cost-sensitive batch tasks: DeepSeek V4 is optimal. Document processing, batch summarization, simple code generation — its capability is sufficient.

Peak-performance scenarios: Not recommended yet. GPT-5.5 and Opus 4.7 remain clearly ahead for complex agent orchestration, large-scale code refactoring, and high-precision reasoning.

Academic research: The Apache 2.0 license allows free use and modification, making it an excellent research base.