Bottom Line

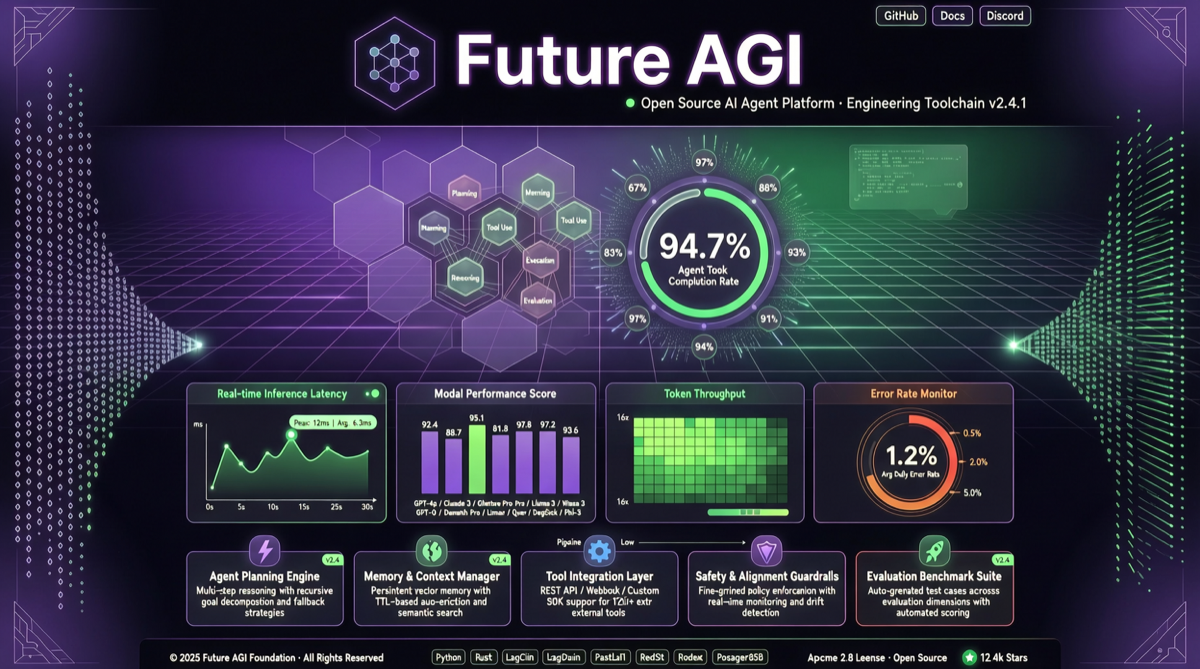

Future AGI has open-sourced its complete end-to-end Agent engineering and optimization platform under Apache 2.0. This isn’t a trimmed-down community version: it includes the UI, backend, simulation engine, evaluation system, optimization loop, observability, and guardrails. For teams pushing Agents to production, it is among the most complete integrated open-source options available.

Platform Architecture

Future AGI splits the platform into 6 independently installable modules:

| Module | Installation | Core Capability |
|---|---|---|
| future-agi | `docker compose up -d` | Main repo, full self-hostable platform |
| traceAI | `pip install fi-instrumentation-otel` | Zero-config OTel tracing for 50+ AI frameworks |
| ai-evaluation | `pip install ai-evaluation` | 50+ eval metrics + guardrail scanners |
| agent-opt | `pip install agent-opt` | 6 prompt optimization algorithms |
| simulate-sdk | `pip install agent-simulate` | Voice Agent simulation (LiveKit + Silero VAD) |
| agentcc | `pip install agentcc` | Gateway client, 100+ LLM providers |

What Happened

Core Capabilities

🧪 Simulation: Test thousands of multi-turn conversations (text + voice) against realistic personas and adversarial inputs before launch.

📊 Unified Evaluation: 50+ metrics in one API call, including groundedness, tool-use accuracy, PII detection, and custom rubrics.

🛡️ Guardrails: 18 built-in protection rules + 15 vendor adapters, supporting inline protection and standalone deployment.

👁️ Observability: OpenTelemetry-native tracing across 50+ frameworks, including LangChain, LlamaIndex, CrewAI, and DSPy.

🎛️ Gateway: OpenAI-compatible gateway, 100+ providers, 15 routing strategies.

🔁 Auto-Optimization: 6 prompt optimization algorithms, including GEPA, PromptWizard, and ProTeGi, that learn from production traces.
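To make the "many metrics in one call" idea from the evaluation and guardrail items above more concrete, here is a minimal sketch in plain Python. It is not the `ai-evaluation` API: the function names (`evaluate`, `pii_free`, `groundedness`), the metric signature, and the crude scoring rules are all hypothetical stand-ins chosen for illustration.

```python
import re
from typing import Callable, Dict

# Hypothetical metric signature (an assumption, not a library type):
# (response, context) -> score in [0, 1].
Metric = Callable[[str, str], float]

# Very rough email pattern, used here as a stand-in for a PII scanner.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def pii_free(response: str, context: str) -> float:
    """Return 1.0 when no email address appears in the response, else 0.0."""
    return 0.0 if EMAIL_RE.search(response) else 1.0


def groundedness(response: str, context: str) -> float:
    """Crude proxy: fraction of response words that also occur in the context."""
    resp_words = re.findall(r"\w+", response.lower())
    ctx_words = set(re.findall(r"\w+", context.lower()))
    if not resp_words:
        return 0.0
    return sum(w in ctx_words for w in resp_words) / len(resp_words)


def evaluate(response: str, context: str, metrics: Dict[str, Metric]) -> Dict[str, float]:
    """Run every registered metric in a single call and collect the scores."""
    return {name: fn(response, context) for name, fn in metrics.items()}


METRICS: Dict[str, Metric] = {"pii_free": pii_free, "groundedness": groundedness}

scores = evaluate(
    response="Refunds take 5 days. Contact bob@example.com",
    context="Refunds take 5 days to process.",
    metrics=METRICS,
)
print(scores)
```

A real evaluator would likely use model-based judges rather than word overlap; the point here is only the shape of a single entry point that fans out to a registry of metrics.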

Why It Matters

1. The “Swiss Army Knife” of Agent Engineering

Current Agent toolchains are fragmented:

- LangSmith / Langfuse for tracing

- Braintrust / LangSmith for evaluation

- NeMo Guardrails / Guardrails AI for safety

- Manual scripts for prompt optimization

Future AGI integrates all of this into one self-hostable platform under Apache 2.0.

2. Self-Optimization Is the Differentiator

The 6 prompt optimization algorithms are the most imaginative part:

- Production traces auto-collected → evaluation system scores → optimization algorithms iterate prompts → new prompts auto-deployed

- From “manual prompt tuning” to “system auto-evolving prompts”
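The loop described above (score, propose new prompts, keep the winner) can be sketched as a small greedy search. This is a toy under stated assumptions, not GEPA, PromptWizard, ProTeGi, or the `agent-opt` API: `optimize_prompt`, `toy_score`, and `toy_propose` are hypothetical names, and the scorer simply checks for required phrases where a real system would score against production traces.

```python
from typing import Callable, List


def optimize_prompt(
    seed: str,
    propose: Callable[[str], List[str]],
    score: Callable[[str], float],
    rounds: int = 3,
) -> str:
    """Greedy search: each round, propose variants of the current best prompt
    and keep a variant only if it scores strictly higher."""
    best, best_score = seed, score(seed)
    for _ in range(rounds):
        for candidate in propose(best):
            candidate_score = score(candidate)
            if candidate_score > best_score:
                best, best_score = candidate, candidate_score
    return best


# Toy stand-ins (assumptions for illustration only).
REQUIRED = ["cite sources", "be concise"]


def toy_score(prompt: str) -> float:
    """Stand-in for an evaluation system: share of required phrases present."""
    return sum(phrase in prompt.lower() for phrase in REQUIRED) / len(REQUIRED)


def toy_propose(prompt: str) -> List[str]:
    """Stand-in for an optimizer step: append one missing instruction."""
    return [
        f"{prompt} {phrase.capitalize()}."
        for phrase in REQUIRED
        if phrase not in prompt.lower()
    ]


best = optimize_prompt("You are a support agent.", toy_propose, toy_score)
print(best)
```

Real optimizers differ mainly in how `propose` and `score` are built; the accept-if-better skeleton is the part that turns manual tuning into an automated loop.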

Actionable Advice

Who Should Pay Attention

- Teams pushing Agents to production: Need a complete loop from simulation to evaluation to optimization

- Voice Agent developers: simulate-sdk is a rare open-source voice Agent simulation solution

- Multi-model routing scenarios: agentcc gateway supports 100+ providers and 15 routing strategies

- Teams avoiding SaaS lock-in: Full self-hosting + Apache 2.0
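For the multi-model routing scenario above, one simple routing strategy is ordered fallback: try providers in priority order and move on when one fails. The sketch below is an assumption-laden illustration, not the `agentcc` client; `route_with_fallback` and both provider functions are hypothetical, and real providers would be network calls rather than local functions.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical provider type: takes a prompt, returns a completion string.
Provider = Callable[[str], str]


def route_with_fallback(
    prompt: str, providers: Dict[str, Provider], order: List[str]
) -> Tuple[str, str]:
    """Try providers in the given order; return (provider_name, reply)
    from the first one that does not raise."""
    errors = []
    for name in order:
        try:
            return name, providers[name](prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_provider(prompt: str) -> str:
    """Simulates an upstream outage."""
    raise TimeoutError("upstream timed out")


def backup_provider(prompt: str) -> str:
    """Simulates a healthy provider by echoing the prompt."""
    return f"echo: {prompt}"


used, reply = route_with_fallback(
    "hello",
    {"primary": flaky_provider, "backup": backup_provider},
    ["primary", "backup"],
)
print(used, reply)
```

Other strategies (cost-based, latency-based, weighted) would change only how `order` is computed, which is why a gateway can offer many of them behind one interface.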

How to Get Started

    # Quick start the full platform
    git clone https://github.com/future-agi/future-agi
    cd future-agi
    docker compose up -d

    # Or use the evaluation module standalone
    pip install ai-evaluation

- GitHub: github.com/future-agi

- Cloud trial: futureagi.com

Caveats

- Currently in nightly release; stable version not yet available

- Many modules; start with one (e.g., traceAI) before integrating all

- Optimization algorithm effectiveness depends on your specific Agent scenario