What Happened

Google’s Gemini CLI v0.40.0, released May 1, 2026, introduces one key feature: experimental support for local Gemma models, combined with a smart routing architecture.

This is more than simple “local inference”: it is Google’s redesign of the cost structure of AI workflows.

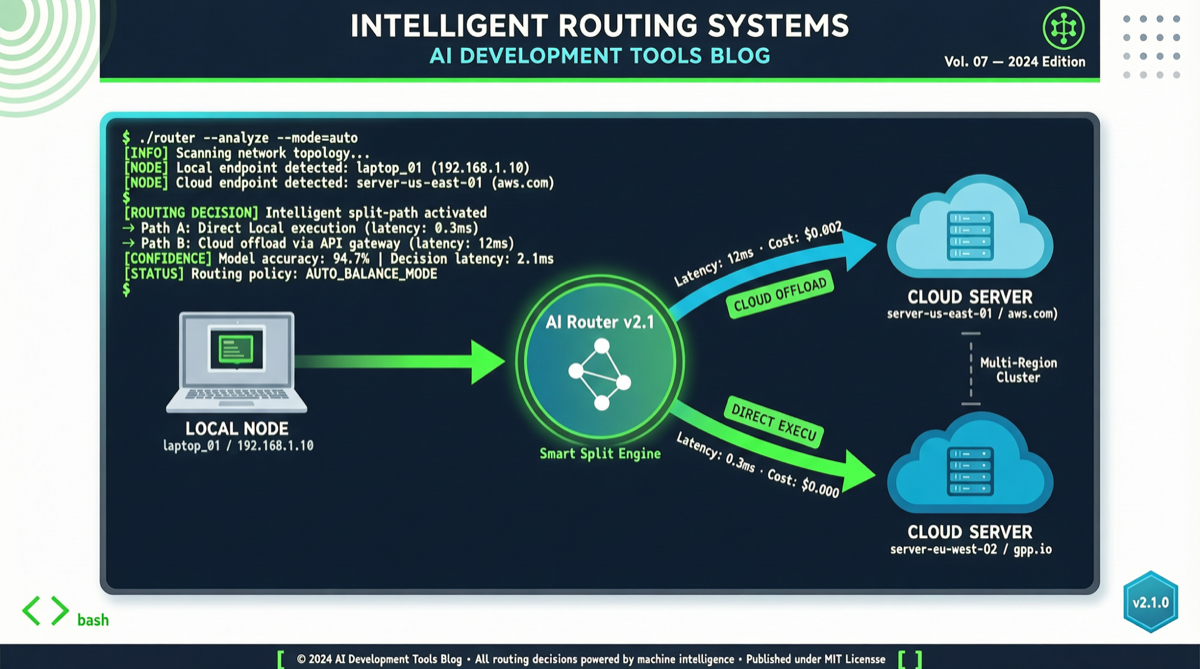

Smart Routing Architecture

Your request → CLI judges complexity

├── Simple tasks (code completion, file search, formatting)

│ → Local Gemma (zero API cost, <1s response)

│

└── Complex tasks (code refactoring, architecture design, multi-step reasoning)

→ Cloud Gemini (API billing, full reasoning)

Gemma 4 26B A4B: Consumer Hardware Efficiency

- 26B total parameters, only ~4B activated per token

- Multi-instance concurrent inference on a single laptop

- Handles 10 concurrent prompts smoothly

- Fully local, zero API cost
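The routing decision described under Smart Routing Architecture can be illustrated with a minimal sketch. This is not Gemini CLI's actual implementation: the task labels, the `Route` type, and the `route` function are hypothetical names chosen for illustration, and sending unknown task types to the cloud is an assumption, since the article does not say how they are handled.

```python
from dataclasses import dataclass

# Task categories copied from the routing diagram; the identifiers are illustrative.
SIMPLE_TASKS = {"code_completion", "file_search", "formatting"}
COMPLEX_TASKS = {"code_refactoring", "architecture_design", "multi_step_reasoning"}


@dataclass(frozen=True)
class Route:
    backend: str  # "local-gemma" or "cloud-gemini"
    billed: bool  # True when the request incurs API cost


def route(task_type: str) -> Route:
    """Pick a backend from a coarse task label (hypothetical rule set)."""
    if task_type in SIMPLE_TASKS:
        # Simple tasks stay on the local Gemma model: no API cost.
        return Route(backend="local-gemma", billed=False)
    # Complex tasks go to cloud Gemini. Unknown labels also fall through
    # to the cloud here, which is an assumption of this sketch.
    return Route(backend="cloud-gemini", billed=True)


if __name__ == "__main__":
    for task in ("file_search", "architecture_design"):
        print(task, "->", route(task))
```

A real router would presumably judge complexity from the request itself rather than from a fixed label, but the cost consequence is the same: only requests routed to the cloud are billed.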

Cost Optimization

| Task type | Daily count | Pure cloud (per day) | Smart routing (per day) | Savings (per day) |
|---|---|---|---|---|
| Code completion | 50 | $2.50 | $0 | $2.50 |
| File search | 30 | $1.50 | $0 | $1.50 |
| Bug analysis | 5 | $1.00 | $1.00 | $0 |
| Architecture design | 2 | $2.00 | $2.00 | $0 |
| Daily total | 87 | $7.00 | $3.00 | $4.00 |

Monthly savings: ~$88 per developer ($4.00 per day, which corresponds to about 22 working days per month).
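The arithmetic behind these figures can be reproduced with a short script. The daily costs come straight from the table. The 22 working days per month is an assumption inferred from $88 / $4.00, and the variable names are illustrative only.

```python
from decimal import Decimal

# (task type, daily count, pure-cloud daily cost in USD, handled locally?)
TABLE = [
    ("Code completion", 50, Decimal("2.50"), True),
    ("File search", 30, Decimal("1.50"), True),
    ("Bug analysis", 5, Decimal("1.00"), False),
    ("Architecture design", 2, Decimal("2.00"), False),
]

# Assumption: not stated in the article, inferred from $88 / $4.00 per day.
WORKING_DAYS_PER_MONTH = 22


def daily_costs(rows):
    """Return (pure-cloud cost, smart-routing cost) for one day."""
    zero = Decimal("0")
    cloud = sum((cost for _, _, cost, _ in rows), zero)
    # Under smart routing, only tasks that are not handled locally are billed.
    routed = sum((cost for _, _, cost, local in rows if not local), zero)
    return cloud, routed


cloud_daily, routed_daily = daily_costs(TABLE)
daily_savings = cloud_daily - routed_daily
monthly_savings = daily_savings * WORKING_DAYS_PER_MONTH

if __name__ == "__main__":
    print(f"Pure cloud:    ${cloud_daily}/day")
    print(f"Smart routing: ${routed_daily}/day")
    print(f"Savings:       ${daily_savings}/day, ${monthly_savings}/month")
```

The savings depend entirely on the share of requests that qualify as simple: in this table, 80 of 87 daily requests are routed locally, but they account for only $4.00 of the $7.00 daily spend.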

Getting Started

npm install -g @google/gemini-cli@0.40.0

gemini-cli model download gemma-4-26b-a4b

gemini-cli

Roadmap

- Full local execution: Future versions are expected to support all tasks locally

- More model choices: Beyond Gemma 4

- Enterprise deployment: Local model management and security policies