Core Conclusion

The AI industry in 2026 is undergoing a silent but profound architectural transformation: from “pick the best model” to “pick the right model for each task”.

The driving factor is simple: model costs have plummeted. API call costs for mainstream models such as GPT-5.5, Claude Sonnet 4.6, Qwen 3.6, DeepSeek V4, and Gemini 3 Flash have dropped 50-86% compared with the same period in 2025, as the table below shows.

Cost Reduction Data

| Model | 2025 Input Price ($/M tokens) | 2026 Input Price ($/M tokens) | Reduction |
|---|---|---|---|
| GPT-5.5 | $15.00 | $7.50 | 50% |
| Claude Sonnet 4.6 | $8.00 | $3.00 | 62.5% |
| Qwen 3.6 Max | $5.00 | $1.50 | 70% |
| DeepSeek V4 Pro | $3.00 | $0.60 | 80% |
| Gemini 3 Flash | $2.50 | $0.35 | 86% |

Cost is no longer the binding constraint in model selection, so you can call multiple models in parallel without losing control of the bill.
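The Reduction column follows from (old price - new price) / old price. As a quick check, here is a minimal Python sketch that recomputes it from the prices in the table above; the names `PRICES` and `reduction_pct` are illustrative only.

```python
# Input prices in $ per million tokens, copied from the table above: (2025, 2026).
PRICES = {
    "GPT-5.5": (15.00, 7.50),
    "Claude Sonnet 4.6": (8.00, 3.00),
    "Qwen 3.6 Max": (5.00, 1.50),
    "DeepSeek V4 Pro": (3.00, 0.60),
    "Gemini 3 Flash": (2.50, 0.35),
}


def reduction_pct(old: float, new: float) -> float:
    """Percentage price drop from the old price to the new price."""
    return (old - new) / old * 100


# Round to one decimal place to match the precision used in the table.
REDUCTIONS = {
    name: round(reduction_pct(old, new), 1) for name, (old, new) in PRICES.items()
}

for name, pct in REDUCTIONS.items():
    print(f"{name}: {pct}%")
```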

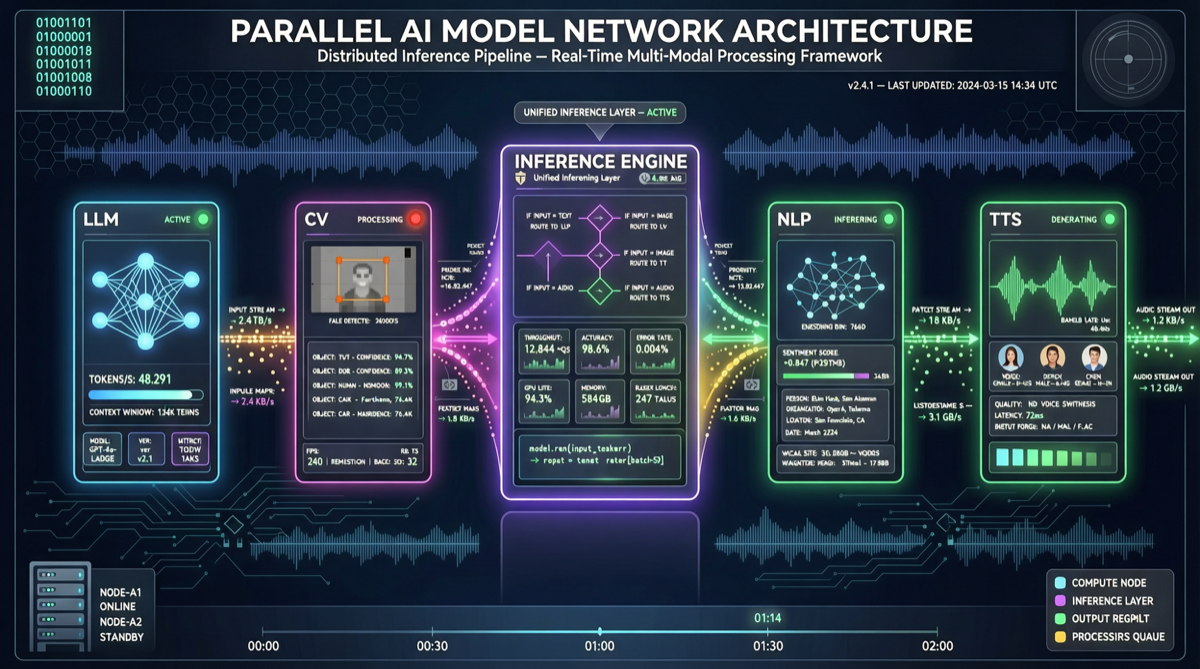

Multi-Model Parallel Architecture: The 2026 Standard Practice

                   User Request
                         │
                         ▼
                 ┌───────────────┐
                 │     Task      │  ← Lightweight model (Gemini Flash / Qwen 3.6B)
                 │  Classifier   │    Cost: $0.0003/call
                 │   (Router)    │
                 └───────┬───────┘
                         │
          ┌──────────────┼──────────────┬──────────────┐
          ▼              ▼              ▼              ▼
        Code         Creative     Data Analysis   Daily Chat
          │              │              │              │
          ▼              ▼              ▼              ▼
       GPT-5.5        Claude        Qwen 3.6        Gemini
                     Opus 4.7        35B MoE         Flash
       $7.50/M       $15.00/M        $1.50/M        $0.35/M

Key Insight: The Router itself needs only an ultra-lightweight model at negligible cost. It classifies the task type and routes the request to the most cost-effective model.
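The routing step in the diagram can be sketched in a few lines of Python. This is a minimal illustration rather than a production router: the keyword-based `classify` function is a hypothetical stand-in for the lightweight classifier model, the keyword lists are invented for the example, and the `ROUTES` table simply mirrors the task-to-model mapping shown in the diagram.

```python
from dataclasses import dataclass

# Task type -> (model label, input price in $ per million tokens), as in the diagram.
ROUTES = {
    "code": ("GPT-5.5", 7.50),
    "creative": ("Claude Opus 4.7", 15.00),
    "data_analysis": ("Qwen 3.6 35B MoE", 1.50),
    "daily_chat": ("Gemini Flash", 0.35),
}

# Hypothetical keyword hints; a real router would ask a lightweight model instead.
KEYWORDS = {
    "code": ("function", "bug", "compile", "refactor"),
    "creative": ("story", "poem", "slogan"),
    "data_analysis": ("csv", "dataset", "average", "trend"),
}


@dataclass
class Route:
    task_type: str
    model: str
    price_per_m: float


def classify(request: str) -> str:
    """Return the first task type whose keywords appear in the request."""
    text = request.lower()
    for task_type, words in KEYWORDS.items():
        if any(word in text for word in words):
            return task_type
    return "daily_chat"  # no keyword matched: fall back to the cheapest route


def route(request: str) -> Route:
    """Classify the request, then look up the model assigned to that task type."""
    task_type = classify(request)
    model, price = ROUTES[task_type]
    return Route(task_type, model, price)


print(route("Fix the bug in this function").model)
print(route("What's a good lunch idea?").model)
```

Keeping the mapping in a plain dictionary means routing rules can change without touching the classifier, which is the separation the diagram implies.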

Cost Comparison: Single Model vs Multi-Model Routing

Based on 10,000 daily calls:

| Approach | Model Configuration | Daily Cost | Monthly Cost |
|---|---|---|---|
| Pure Opus | All Opus 4.7 | $150 | $4,500 |
| Pure Sonnet | All Sonnet 4.6 | $30 | $900 |
| Multi-Model Routing | 80% Flash + 15% Sonnet + 5% Opus | $12 | $360 |

The multi-model routing approach costs 92% less than pure Opus ($12 vs. $150 per day). Overall quality degrades by less than 5%, because complex tasks are still handled by Opus.
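The article does not state how many tokens each call uses, so the arithmetic behind the table can only be approximated. The sketch below assumes about 1,000 input tokens per call and the input prices quoted earlier ($15.00, $3.00, and $0.35 per million tokens for Opus 4.7, Sonnet 4.6, and Gemini Flash), which reproduces the $150 and $30 single-model figures. Under that assumption the 80/15/5 mix comes out near $15 a day before router overhead, in the same range as the $12 in the table; the difference presumably reflects workload details not given here.

```python
CALLS_PER_DAY = 10_000
TOKENS_PER_CALL = 1_000  # assumption: not stated in the article

# Input prices in $ per million tokens, as quoted in this article.
PRICE_PER_M = {"opus": 15.00, "sonnet": 3.00, "flash": 0.35}


def daily_cost(mix: dict[str, float]) -> float:
    """Daily cost in dollars for a traffic mix given as model -> share of calls."""
    assert abs(sum(mix.values()) - 1.0) < 1e-9, "shares must sum to 1"
    cost_per_call = sum(
        share * PRICE_PER_M[model] * TOKENS_PER_CALL / 1_000_000
        for model, share in mix.items()
    )
    return CALLS_PER_DAY * cost_per_call


pure_opus = daily_cost({"opus": 1.0})
pure_sonnet = daily_cost({"sonnet": 1.0})
routed = daily_cost({"flash": 0.80, "sonnet": 0.15, "opus": 0.05})
savings_vs_opus = 1 - routed / pure_opus

print(f"pure Opus:   ${pure_opus:.2f}/day")
print(f"pure Sonnet: ${pure_sonnet:.2f}/day")
print(f"routed mix:  ${routed:.2f}/day ({savings_vs_opus:.0%} below pure Opus)")
```

Changing `TOKENS_PER_CALL` or the shares in the mix shows how sensitive the savings are to the fraction of traffic that still goes to the most expensive model.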

Tool Stack

| Tool | Purpose | Cost |
|---|---|---|
| LiteLLM Proxy | Unified API interface + routing | Open source, free |
| LangGraph | Multi-agent orchestration | Open source, free |
| MCP Server | Standardized tool calling | Open source, free |
| PromptLayer | Call tracking + cost analysis | Free tier available |

Getting Started Steps

- Connect LiteLLM Proxy: unify multiple model APIs into a single endpoint

- Define routing rules: assign models by task type (coding/creative/analysis/chat)

- Set up fallbacks: automatically switch to backup models when the primary fails

- Monitor cost distribution: use PromptLayer to track call ratios and expenses per model
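The fallback and monitoring steps can be illustrated without committing to a particular library. The following is a minimal sketch in plain Python, not the API of any tool listed above: `send` is a hypothetical callable standing in for whatever client actually reaches the model (for example, a request to a LiteLLM Proxy endpoint), and the model names are placeholder labels rather than real API identifiers.

```python
from collections import Counter
from typing import Callable, Sequence

# Tally of which model actually served each request, for cost monitoring.
served_by: Counter = Counter()


def call_with_fallback(
    prompt: str,
    models: Sequence[str],
    send: Callable[[str, str], str],
) -> str:
    """Try each model in order and return the first successful reply.

    `send(model, prompt)` is a hypothetical client function that raises on failure.
    """
    errors = []
    for model in models:
        try:
            reply = send(model, prompt)
        except Exception as exc:  # broad on purpose: any failure triggers the fallback
            errors.append(f"{model}: {exc}")
            continue
        served_by[model] += 1
        return reply
    raise RuntimeError("all models failed: " + "; ".join(errors))


# Fake client for demonstration only: the primary model is simulated as down.
def fake_send(model: str, prompt: str) -> str:
    if model == "primary-model":
        raise TimeoutError("simulated outage")
    return f"[{model}] reply to: {prompt}"


print(call_with_fallback("hello", ["primary-model", "backup-model"], fake_send))
print(dict(served_by))
```

Multiplying the `served_by` counts by per-call prices gives a rough cost distribution per model, which is the figure the monitoring step is meant to surface.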

Business Judgment: If your team is still “using one model for everything”, start migrating to a multi-model architecture now. After Q2 2026, single-model architecture will no longer be cost-competitive.