Bottom Line

The Qwen team released Qwen-Scope 🔭 on April 30: an open-source sparse autoencoder (SAE) toolkit for the Qwen model family. It extracts 81,000 features across all 64 layers of Qwen3.5-27B, enabling the open-source community to directly manipulate internal model representations for the first time, rather than relying solely on indirect prompt engineering.

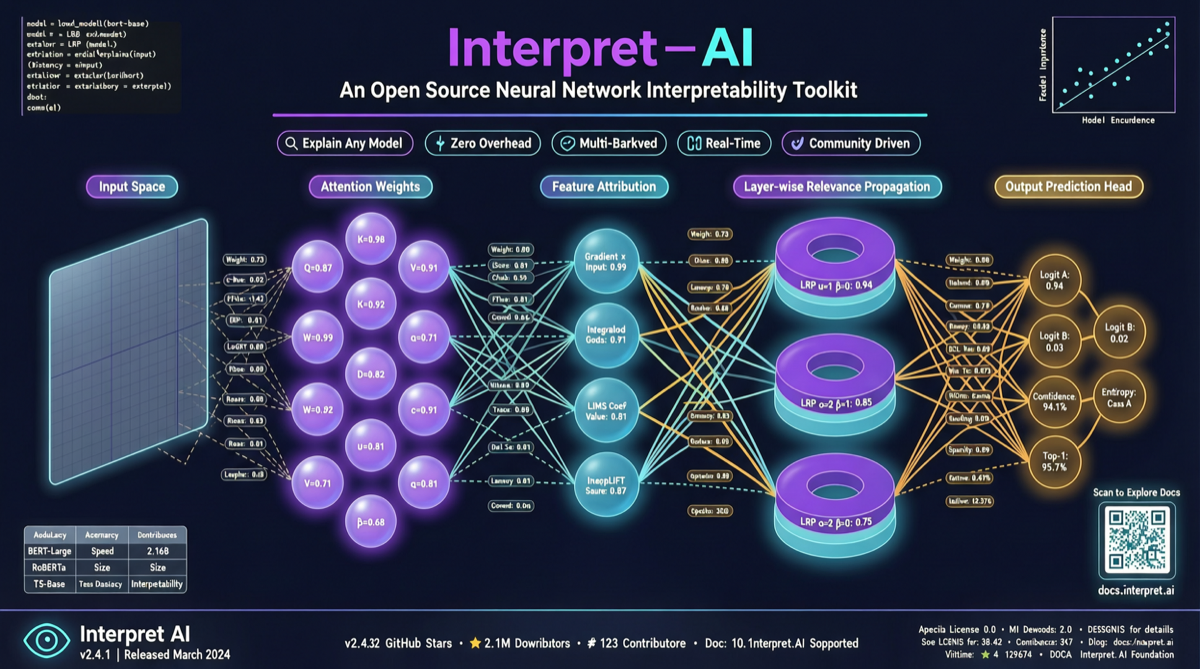

This marks a shift for open-source interpretability tooling, from “academic toy” to “engineering-ready.”

What Qwen-Scope Does

| Attribute | Details |
|---|---|
| Target Model | Qwen3.5-27B |
| SAE Features | 81,000 |
| Layer Coverage | All 64 layers |
| Core Capabilities | Inference steering + Data classification + Mechanistic analysis |
| Distribution | Open-source, downloadable from Hugging Face |
| Innovation | Direct internal feature manipulation, bypassing prompt engineering |

Three practical use cases:

- Inference Steering: Guide output direction by directly modifying internal feature vectors, bypassing the uncertainty of prompt engineering. Want the model to be more “creative” or more “conservative”? Adjust in feature space directly.

- Data Classification: Use SAE-extracted features to classify training/inference data, helping understand activation patterns across different inputs.

- Mechanistic Analysis: Researchers can trace how specific concepts (e.g., “safety,” “mathematical reasoning”) are represented within the model, providing empirical tools for AI safety research.
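To make the steering idea concrete, here is a minimal sketch of how an SAE reads features out of a hidden state and how a single feature can be amplified. It assumes a plain ReLU encoder and a linear decoder, which is a common SAE design but not confirmed as Qwen-Scope's architecture. The weights, sizes, and function names (`sae_encode`, `sae_decode`, `steer`) are made up for illustration and are not part of any Qwen-Scope API.

```python
# Toy sparse autoencoder (SAE) with feature steering, standard library only.
# Assumption: ReLU encoder + linear decoder. All weights and names below are
# hypothetical illustrations, not Qwen-Scope's real parameters or API.

def matvec(matrix, vec):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def sae_encode(h, w_enc, b_enc):
    """Feature activations: ReLU(W_enc @ h + b_enc)."""
    pre = matvec(w_enc, h)
    return [max(0.0, p + b) for p, b in zip(pre, b_enc)]

def sae_decode(f, w_dec, b_dec):
    """Reconstruction: b_dec + sum_i f[i] * w_dec[i], one decoder row per feature."""
    out = list(b_dec)
    for act, direction in zip(f, w_dec):
        for j, d in enumerate(direction):
            out[j] += act * d
    return out

def steer(h, w_dec, feature_idx, strength):
    """Push the hidden state along one feature's decoder direction."""
    direction = w_dec[feature_idx]
    return [x + strength * d for x, d in zip(h, direction)]

# Hidden size 2, dictionary of 3 features (real SAEs are far larger).
W_ENC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
B_ENC = [0.0, 0.0, 0.0]
W_DEC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
B_DEC = [0.0, 0.0]

h = [0.5, 2.0]                                   # stand-in for one token's hidden state
features = sae_encode(h, W_ENC, B_ENC)           # which features fire, and how strongly
reconstruction = sae_decode(features, W_DEC, B_DEC)

steered = steer(h, W_DEC, feature_idx=0, strength=3.0)
steered_features = sae_encode(steered, W_ENC, B_ENC)

print("features:        ", features)
print("reconstruction:  ", reconstruction)
print("steered state:   ", steered)
print("steered features:", steered_features)
```

In a real setup the steered hidden state would be written back into the model at the chosen layer during generation (for example through a forward hook), and the steering strength would need tuning per feature.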

Why This Matters

Model interpretability has long been a core bottleneck in AI safety. While Anthropic has been advancing SAE research (interpretability analysis of Claude), that work has remained largely in “research paper + limited open-source” territory. Qwen’s release open-sources the complete SAE toolchain, and at 81k features it is positioned as larger in scale than prior open-source SAE projects.

Meanwhile, Qwen3.6 27B just scored 46 on the Artificial Analysis Intelligence Index, becoming the new open-weights leader under 150B parameters. Qwen-Scope further strengthens Qwen’s positioning in the dual tracks of “open-source + interpretability.”

Landscape

| Model/Team | Interpretability Openness | Characteristics |
|---|---|---|
| Qwen-Scope | Full open-source, 81k features | Engineering-ready, supports inference steering |
| Anthropic SAE Research | Papers first, partial code | Methodological leader, toolchain not open |
| OpenAI | Essentially closed | Internal research only |
| Google DeepMind | Partial papers | Academic-oriented |

The open-source model camp is building a new competitive moat: not who has the most parameters, but who can “open up” their model for the community to use.

Action Items

- Researchers: Download Qwen-Scope weights from Hugging Face and reproduce feature analysis and steering experiments on Qwen3.5-27B.

- Safety Engineers: Use SAE features to analyze model “safety boundaries,” that is, which inputs trigger specific safe or unsafe representations.

- Developers: Watch the inference steering capability — it may become a new paradigm replacing prompt engineering.
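The safety-boundary analysis in the list above can be sketched as a simple ranking: given per-token SAE activations for a set of prompts, flag the prompts where one feature of interest fires above a threshold. The prompt names, activation values, feature index, and threshold below are all invented for illustration. In practice the activations would come from running the model with the SAE attached, and a feature's meaning would have to be established empirically first.

```python
# Flag prompts by how strongly one SAE feature fires on them.
# Assumption: activations are precomputed as prompt -> list of per-token
# feature vectors. All data and names here are hypothetical.

def max_activation(token_acts, feature_idx):
    """Strongest activation of one feature across a prompt's tokens."""
    return max(acts[feature_idx] for acts in token_acts)

def flag_prompts(prompt_acts, feature_idx, threshold):
    """Return (prompt, score) pairs at or above threshold, strongest first."""
    scored = [
        (name, max_activation(acts, feature_idx))
        for name, acts in prompt_acts.items()
    ]
    flagged = [(name, score) for name, score in scored if score >= threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)

# Two tokens per prompt, two features per token (toy sizes).
PROMPT_ACTS = {
    "recipe question": [[0.0, 0.1], [0.2, 0.0]],
    "lock-picking request": [[0.1, 2.5], [0.0, 3.0]],
    "chemistry homework": [[0.0, 0.9], [0.3, 1.2]],
}

# Suppose feature 1 has been identified as a candidate "unsafe request" feature.
flagged = flag_prompts(PROMPT_ACTS, feature_idx=1, threshold=1.0)
for name, score in flagged:
    print(f"{name}: {score}")
```

Max-pooling over tokens is one choice among several. Mean activation or a count of tokens above threshold would give different rankings, so the aggregation should match the question being asked.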

Qwen-Scope isn’t just another tool release. It’s a substantive step forward on the open-source community’s path to “understanding what happens inside AI.”