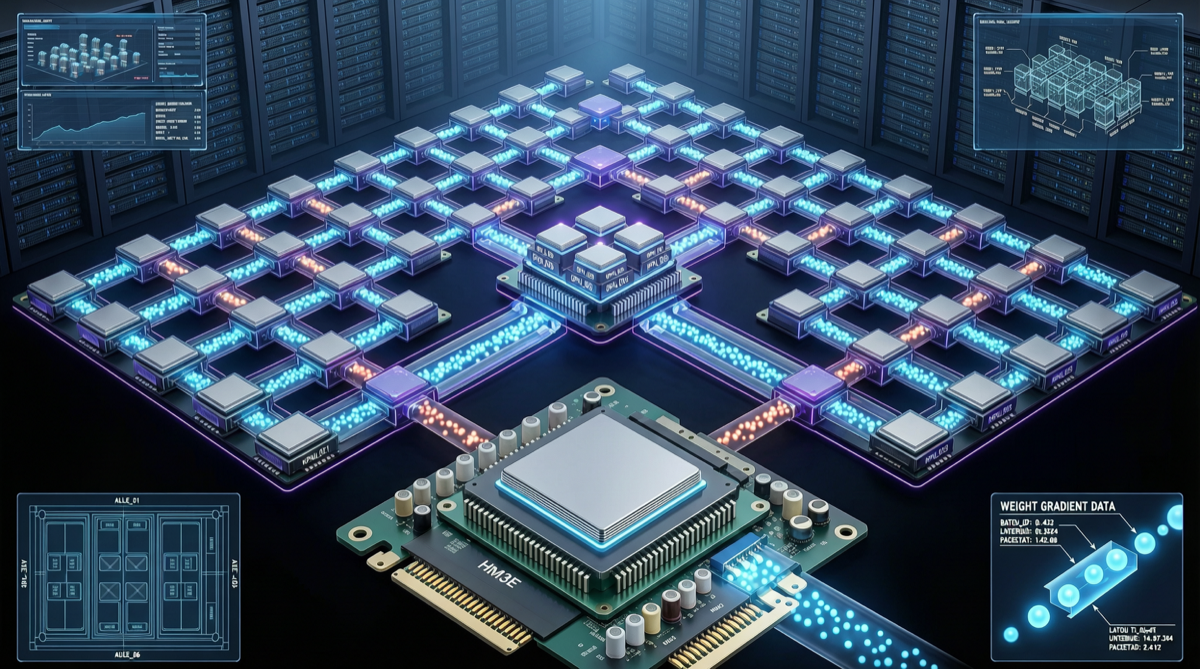

When you train a trillion-parameter model with reinforcement learning, the hardest part isn't forward inference. It's syncing the updated weights to every node.

On April 29, 2026, the LMSYS team published a technical blog post introducing a new weight-update approach, RDMA-based peer-to-peer (P2P) transfer, to complement traditional NCCL broadcast.

The title is straightforward: "Updating 1T parameters in seconds." According to the post, this is no exaggeration: the sync really does complete in seconds.

Why NCCL broadcast isn't enough

In large-scale distributed RL training, weight synchronization directly affects training efficiency. The traditional approach uses NCCL's broadcast operation: one node holds the updated weights and broadcasts them to all other nodes.

The problem is that broadcast becomes a bottleneck once models reach trillion-parameter scale. Every byte originates from a single sender, so all receivers must wait for that node to finish sending before the next round can start. This is an inherent limitation of one-to-all broadcast.

The P2P approach changes the communication pattern. Instead of joining a full broadcast, each node communicates only with the peers it actually needs. It uses RDMA (Remote Direct Memory Access) to bypass the CPU and OS and to transfer data directly between GPU memories.
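To make the routing idea concrete, here is a minimal Python sketch of how a P2P transfer plan could be computed. It assumes that each trainer rank owns a subset of the updated tensors and that each inference rank knows which tensors it serves. The names `Transfer`, `plan_p2p_transfers` and `bytes_per_sender` are hypothetical and are not SGLang's API. The sketch does not model the actual RDMA data movement; it only decides who sends what to whom.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import AbstractSet, Dict, List, Mapping


@dataclass(frozen=True)
class Transfer:
    """One hypothetical point-to-point copy from a trainer rank to an inference rank."""
    name: str    # tensor (parameter) name
    src: int     # trainer rank holding the updated tensor
    dst: int     # inference rank that serves this tensor
    nbytes: int  # tensor size in bytes


def plan_p2p_transfers(
    owners: Mapping[str, int],
    needs: Mapping[int, AbstractSet[str]],
    sizes: Mapping[str, int],
) -> List[Transfer]:
    """Route each tensor straight from its owner to every inference rank that needs it.

    No tensor is sent to a rank that does not use it, and no single source
    relays the whole model, unlike a one-to-all broadcast.
    """
    plan = []
    for dst in sorted(needs):
        for name in sorted(needs[dst]):
            plan.append(Transfer(name, owners[name], dst, sizes[name]))
    return plan


def bytes_per_sender(plan: List[Transfer]) -> Dict[int, int]:
    """Bytes each trainer rank must push; the busiest sender bounds the sync time."""
    load: Dict[int, int] = defaultdict(int)
    for t in plan:
        load[t.src] += t.nbytes
    return dict(load)


# Toy example: two trainer ranks (0, 1) and two inference ranks (10, 11).
# Each inference rank hosts the shared layers plus a different expert.
owners = {"layer0.w": 0, "layer1.w": 1, "expert0.w": 0, "expert1.w": 1}
sizes = {name: 100 for name in owners}
needs = {
    10: {"layer0.w", "layer1.w", "expert0.w"},
    11: {"layer0.w", "layer1.w", "expert1.w"},
}
plan = plan_p2p_transfers(owners, needs, sizes)
print(len(plan), bytes_per_sender(plan))  # 6 {0: 300, 1: 300}
```

Each tensor travels only to the ranks that need it. In the toy run, `expert0.w` never reaches rank 11, and the two trainer ranks split the sending load evenly.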

Think of it like this: NCCL broadcast is one person speaking on stage while everyone waits; P2P is everyone exchanging information with their neighbors simultaneously, no queue required.
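The stage-versus-neighbors analogy can be turned into a rough cost model. The sketch below is idealized: it assumes every link has the same bandwidth, that the broadcast is pipelined, and that P2P senders run fully concurrently. It ignores latency, receiver-side bandwidth and congestion. The function names are illustrative only.

```python
from typing import Mapping


def broadcast_seconds(total_bytes: float, link_bytes_per_s: float) -> float:
    """Idealized one-to-all broadcast: every byte must leave a single source link.

    Assumes a pipelined broadcast, so adding receivers does not add time,
    but throughput is capped by that one sender.
    """
    return total_bytes / link_bytes_per_s


def p2p_seconds(bytes_by_sender: Mapping[int, float], link_bytes_per_s: float) -> float:
    """Idealized P2P sync: all senders transmit at once, each on its own link.

    The sync ends when the most heavily loaded sender finishes. Latency,
    receiver ingress limits, and network contention are ignored.
    """
    return max(bytes_by_sender.values()) / link_bytes_per_s


# Toy numbers: 1200 bytes of weights, 100 bytes/s per link.
print(broadcast_seconds(1200, 100))  # 12.0
# The same 1200 bytes spread evenly over 4 concurrent senders.
print(p2p_seconds({0: 300, 1: 300, 2: 300, 3: 300}, 100))  # 3.0
```

Under these assumptions, one sender's link fixes the broadcast time. P2P time instead shrinks as the load spreads across more senders.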

What this means in practice

Per LMSYS's description, the approach has several characteristics:

Compatible with all major open-source models: Not a custom optimization for one specific model, but a framework-level capability in SGLang. This means DeepSeek-V4, the Qwen series, and other open-source MoE models can all use it directly.

Supplement, not replacement: P2P isn't trying to replace NCCL broadcast — it offers another option. Different network topologies and cluster scales favor different approaches. Users can choose based on their actual hardware environment.

Sync in seconds: Weight updates for trillion-parameter models drop from minutes to seconds. For RL training, this shortens the weight-sync phase of every iteration, which directly improves training efficiency.
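For a sense of scale, here is a back-of-envelope lower bound. The inputs are assumptions and do not come from the LMSYS post. They are bf16 weights (2 bytes per parameter), 400 Gb/s links (B_link = 5×10^10 bytes/s), and, in the P2P case, k = 64 senders sharing the load evenly. The t_single term assumes every byte leaves one source link.

```latex
\begin{aligned}
S &= 10^{12}\ \text{params} \times 2\ \text{B/param} = 2\times10^{12}\ \text{B} \\
t_{\text{single}} &\ge \frac{S}{B_{\text{link}}}
  = \frac{2\times10^{12}\ \text{B}}{5\times10^{10}\ \text{B/s}} = 40\ \text{s} \\
t_{\text{P2P}} &\ge \frac{S}{k\,B_{\text{link}}}
  = \frac{2\times10^{12}\ \text{B}}{64 \times 5\times10^{10}\ \text{B/s}} = 0.625\ \text{s}
\end{aligned}
```

Real transfers add protocol overhead and contention, so these figures are floors rather than predictions. They still show why spreading sends across many links makes sync in seconds plausible.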

Where this matters

RLHF has become the standard post-training process for large models. From GPT-4 to Claude to Qwen, almost every frontier model has used RL. But RL training infrastructure has been a bottleneck — especially when models reach trillion-parameter scale.

Existing open-source RL training frameworks like Miles and veRL mostly rely on NCCL for weight synchronization. As cluster scales grow and model parameters increase, NCCL's broadcast mode shows clear performance limitations.

LMSYS's P2P approach offers a different path. It's not a company's internal optimization — it's open-source infrastructure. Any team using SGLang for large-scale RL training can use it directly.

SGLang's positioning

SGLang has become a core component of large model inference and training infrastructure. From Day-0 DeepSeek-V4 support to GB300 inference optimization and now P2P weight transfer, SGLang's positioning is increasingly clear: the infrastructure layer for open-source models.

This trend is interesting. Open-source models are approaching closed-source models in capability, but their infrastructure has lagged behind. SGLang is filling that gap.

The blog post does not fully cover the specific technical details of P2P weight transfer, such as RDMA usage patterns, topology optimization strategies and fault-tolerance mechanisms. Still, for teams already using SGLang for RL training, this update brings a direct performance improvement without waiting for a paper.

Sources: