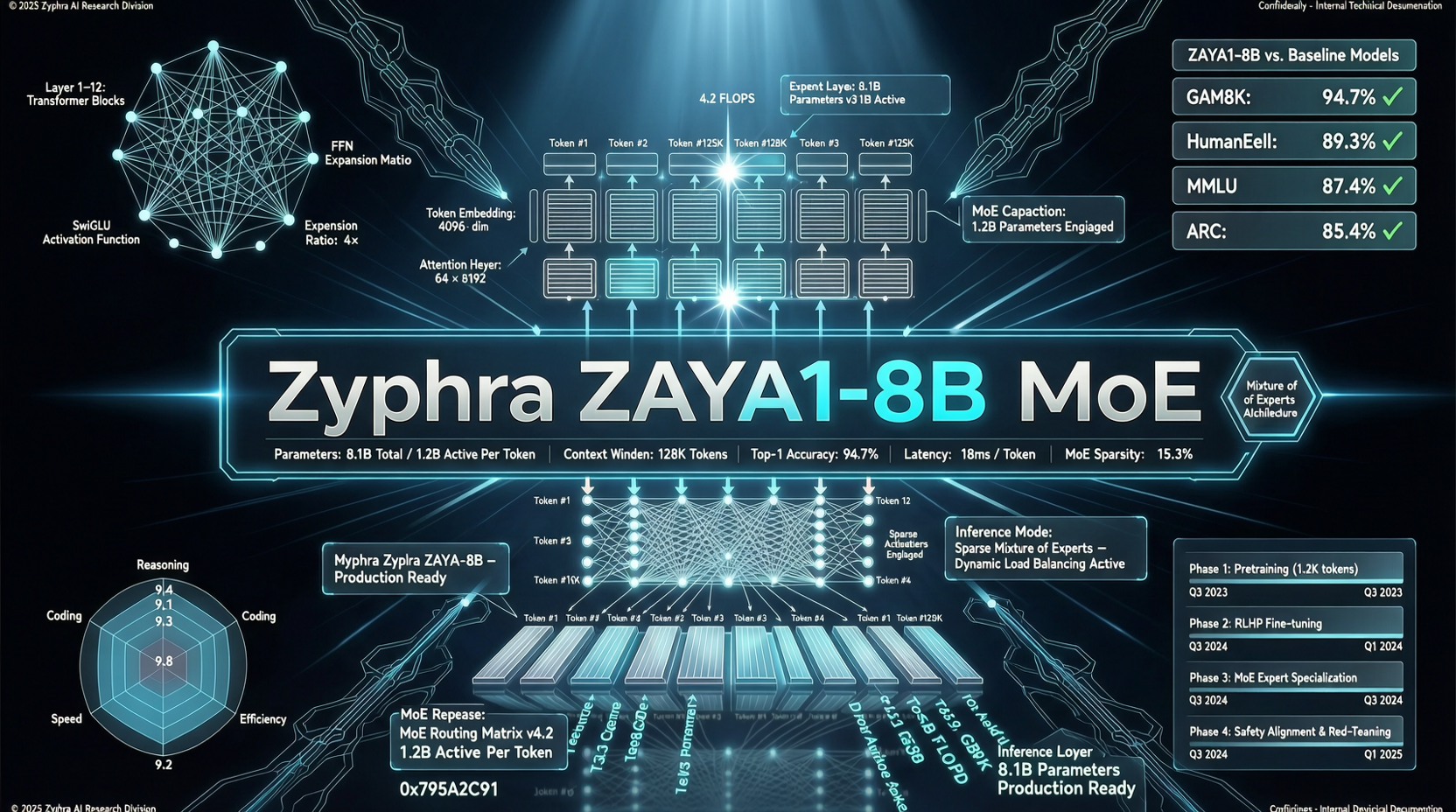

A new model has popped up on Hugging Face trending: Zyphra ZAYA1-8B. It is a mixture-of-experts (MoE) model with 8.4B total parameters, of which only 760M are active per token, and it ships under Apache-2.0.

Its benchmark numbers are a little wild: 89.1 on AIME'26, 4.6 points above Qwen3.5-4B and 38.8 points above Gemma 4 E4B.

Core Numbers

ZAYA1-8B compared with similar open reasoning models:

| Benchmark | ZAYA1-8B | Qwen3.5-4B | Gemma 4 E4B |
|---|---|---|---|
| AIME'26 | 89.1 | 84.5 | 50.3 |
| HMMT Feb.'26 | 71.6 | 63.6 | 32.1 |
| LiveCodeBench-v6 | 65.8 | -- | 54.2 |
| GPQA-Diamond | 71.0 | 76.2 | 57.4 |
| MMLU-Pro | 74.2 | 79.1 | 70.2 |

Math and coding are the strengths. General knowledge is weaker: on GPQA-Diamond and MMLU-Pro it trails Qwen3.5-4B by about five points (71.0 vs. 76.2 and 74.2 vs. 79.1). Even so, with only 760M active parameters, the efficiency is hard to ignore.
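The gaps quoted above can be recomputed straight from the table. A minimal Python sketch using only the table's numbers; the dictionary layout and the `gaps` helper are illustrative and not from Zyphra:

```python
# Scores copied from the comparison table; None marks the "--" (not reported) cell.
SCORES = {
    "AIME'26":          {"ZAYA1-8B": 89.1, "Qwen3.5-4B": 84.5, "Gemma 4 E4B": 50.3},
    "HMMT Feb.'26":     {"ZAYA1-8B": 71.6, "Qwen3.5-4B": 63.6, "Gemma 4 E4B": 32.1},
    "LiveCodeBench-v6": {"ZAYA1-8B": 65.8, "Qwen3.5-4B": None, "Gemma 4 E4B": 54.2},
    "GPQA-Diamond":     {"ZAYA1-8B": 71.0, "Qwen3.5-4B": 76.2, "Gemma 4 E4B": 57.4},
    "MMLU-Pro":         {"ZAYA1-8B": 74.2, "Qwen3.5-4B": 79.1, "Gemma 4 E4B": 70.2},
}


def gaps(scores, model="ZAYA1-8B"):
    """Return {benchmark: {rival: model_score - rival_score}}, skipping missing cells."""
    out = {}
    for bench, row in scores.items():
        out[bench] = {
            rival: round(row[model] - score, 1)
            for rival, score in row.items()
            if rival != model and score is not None
        }
    return out


# Positive numbers mean ZAYA1-8B scores higher than the rival.
for bench, diff in gaps(SCORES).items():
    print(f"{bench:18} " + "  ".join(f"vs {r}: {d:+.1f}" for r, d in diff.items()))
```

Rounding to one decimal keeps floating-point noise (for example 4.599999…) out of the printed deltas.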

The Small-MoE Efficiency Bet

ZAYA1-8B's pitch is intelligence efficiency: get close to bigger-model ability with very few active parameters.
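MoE models get a small active-parameter count from routing: a router scores every expert for each token and only the top-k experts actually run. The sketch below is a generic top-k MoE step in plain Python, assuming toy "experts" (simple functions) and softmax gating renormalized over the selected experts. It illustrates the general technique only; it is not ZAYA1's router, whose design is described in Zyphra's technical report.

```python
import math
from typing import Callable, List, Sequence, Tuple

# A toy "expert": any function mapping a vector to a vector of the same length.
Expert = Callable[[List[float]], List[float]]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: List[float],
    router_logits: Sequence[float],
    experts: Sequence[Expert],
    k: int = 2,
) -> Tuple[List[float], List[int]]:
    """Run only the top-k experts for one token and mix their outputs.

    Returns the mixed output vector and the indices of the experts that ran.
    """
    # Rank experts by router score and keep the k best; the others are never called.
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Gate weights are renormalized over the selected experts only.
    gates = softmax([router_logits[i] for i in top])
    out = [0.0] * len(token)
    for gate, idx in zip(gates, top):
        y = experts[idx](token)
        out = [o + gate * v for o, v in zip(out, y)]
    return out, top


# Eight hypothetical toy experts; expert i scales its input by (i + 1).
toy_experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(8)]
toy_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -2.0, 1.0]
mixed, used = moe_forward([1.0, 2.0], toy_logits, toy_experts, k=2)
print(used)  # [4, 1]: only 2 of the 8 experts were evaluated
```

Only the selected experts' weights do any work for this token, which is the general mechanism behind a total parameter count far larger than the active one.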

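Only 760M active parameters per token means:

- Broader hardware reach: per-token compute is that of a sub-1B model, so laptops, phones, and small edge devices become plausible, provided all 8.4B weights still fit in memory

- Very low inference cost: cost per token and latency drop sharply compared with dense models of similar total size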

- Good fit for test-time compute: cheap inference makes repeated sampling and verification more practical
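A back-of-the-envelope sketch of what the 760M / 8.4B split implies. It rests on two common rules of thumb, which are assumptions here rather than Zyphra figures: a forward pass costs roughly 2 FLOPs per active parameter per token, and weight memory is roughly total parameters × bytes per parameter (KV cache, activations, and quantization overhead ignored).

```python
TOTAL_PARAMS = 8.4e9    # every expert must be resident in memory
ACTIVE_PARAMS = 0.76e9  # parameters actually used for each token


def active_fraction(active: float, total: float) -> float:
    """Share of the weights touched per token."""
    return active / total


def flops_per_token(active: float) -> float:
    # Rule of thumb: ~2 FLOPs (one multiply, one add) per active parameter per token.
    return 2 * active


def weight_memory_gb(total: float, bits_per_param: int) -> float:
    # Weights only; ignores KV cache, activations, and quantization overhead.
    return total * bits_per_param / 8 / 1e9


print(f"active fraction: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
print(f"~{flops_per_token(ACTIVE_PARAMS) / 1e9:.2f} GFLOPs/token vs "
      f"~{flops_per_token(TOTAL_PARAMS) / 1e9:.1f} for a dense 8.4B model")
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
```

Under these assumptions, per-token compute is about one eleventh of a dense 8.4B model (about 9% of the weights are active), while weight memory still follows the full 8.4B: roughly 16.8 GB at 16-bit and 4.2 GB at 4-bit. Memory, not compute, is therefore the more likely limit on phones.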

This runs opposite to large reasoning models, which pair huge parameter counts with heavy test-time compute. Zyphra is betting that if each inference is cheap enough, repeated reasoning can make up for some of the weakness of a single pass.
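Here is how repeated sampling can make up for single-pass weakness. This minimal sketch shows two generic strategies; the functions are illustrative and are not Zyphra's inference setup. With a verifier, n attempts succeed if any one is correct, which happens with probability 1 - (1 - p)^n for per-sample accuracy p. This assumes independent samples; real samples from one model are correlated, so treat it as an optimistic bound. Without a verifier, majority voting over final answers (self-consistency) picks the most common answer.

```python
from collections import Counter
from typing import Hashable, Sequence


def p_any_correct(p: float, n: int) -> float:
    """Chance that at least one of n independent samples is correct,
    given per-sample accuracy p (relevant when a verifier can pick it out)."""
    return 1 - (1 - p) ** n


def majority_vote(answers: Sequence[Hashable]) -> Hashable:
    """Self-consistency: return the most frequent final answer.
    Ties go to the answer seen first (Counter.most_common keeps insertion order)."""
    return Counter(answers).most_common(1)[0][0]


print(p_any_correct(0.5, 1), p_any_correct(0.5, 4))  # 0.5 0.9375
print(majority_vote(["42", "17", "42", "9"]))  # 42
```

The trade-off is cost: n samples cost about n times one pass, which is why cheap per-token inference makes these strategies more practical.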

Who Is Zyphra?

Zyphra is a smaller AI company and has not had the visibility of the teams behind Qwen or DeepSeek. Still, the ZAYA1-8B technical report is substantive, and its benchmark comparisons are reasonably transparent.

Apache-2.0 also matters. This is meant to be used, forked, and distributed, not locked inside a hosted platform.

What To Watch

Community evaluation is still early. The model has a modest number of likes and downloads on Hugging Face, so the next signals matter:

- Can the community reproduce the official benchmarks?

- How does it behave outside math and coding?

- How does it compare with Qwen3.6-35B-A3B?

If ZAYA1-8B's math and coding scores hold up, it could become a serious option for edge reasoning.

Main sources:

- Zyphra ZAYA1-8B Hugging Face page

- Zyphra technical report