Bottom Line

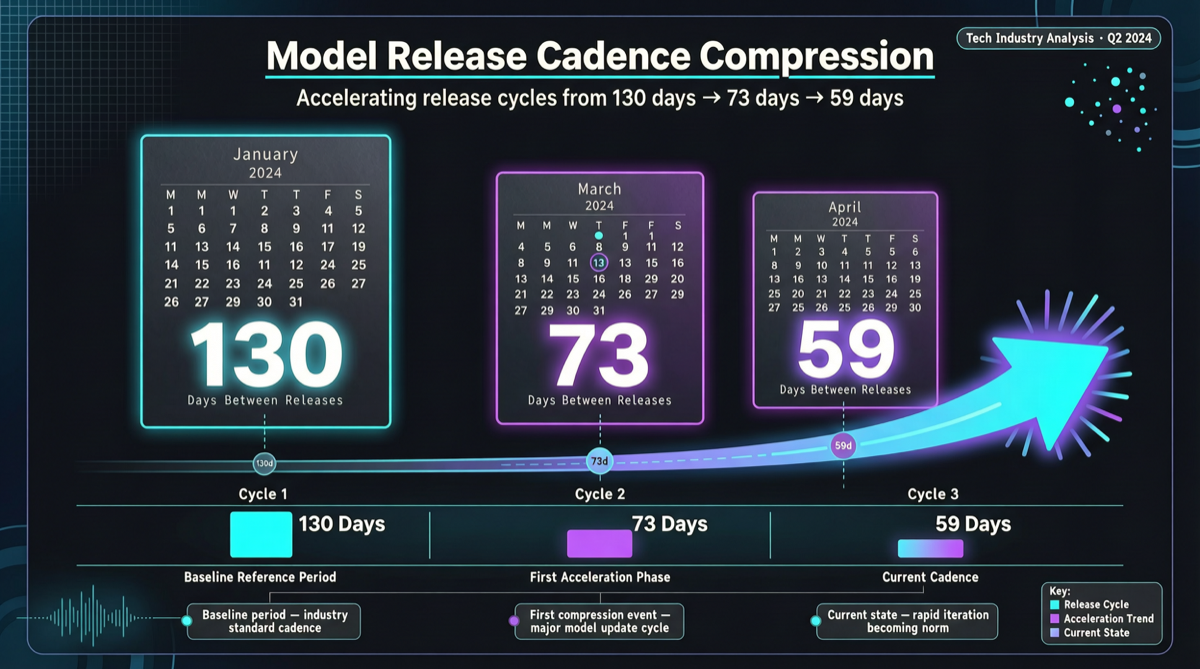

Claude’s release intervals are compressing at a stunning pace:

| Version Iteration | Days Between |
|---|---|
| Sonnet 4 → 4.5 | 130 days |
| Opus 4.5 → 4.6 | 73 days |
| Opus 4.6 → 4.7 | 59 days |

Across the releases shown, the interval shortened by about 55%, from 130 days to 59 days. This is not a steady cadence; the gap between releases keeps shrinking.

Data Analysis

Release Cadence Change

2025 Q4 ← Sonnet 4

│ 130 days

2026 Q1 ← Sonnet 4.5 / Opus 4.5

│ 73 days

2026 Q2 ← Opus 4.6

│ 59 days

2026 Q2 ← Opus 4.7 (current)

From 130 days to 59 days, the release cycle has shortened by more than half. If this trend continues, the next version’s interval could be only around 40 days.
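
The arithmetic behind these figures can be checked with a short script. This is a minimal sketch that uses only the three intervals quoted above. The three extrapolation rules (repeat the last absolute drop, repeat the last ratio, repeat the average drop) are assumptions chosen for illustration, not a forecasting method taken from the source.

```python
from typing import Sequence

# Intervals in days between releases, as quoted in the table above.
intervals = [130, 73, 59]


def percent_reduction(first: float, last: float) -> float:
    """Relative reduction from first to last, in percent."""
    return (first - last) / first * 100


def extrapolate_last_drop(xs: Sequence[float]) -> float:
    """Assume the next drop equals the most recent absolute drop."""
    return xs[-1] - (xs[-2] - xs[-1])


def extrapolate_last_ratio(xs: Sequence[float]) -> float:
    """Assume the next interval shrinks by the most recent ratio."""
    return xs[-1] * (xs[-1] / xs[-2])


def extrapolate_mean_drop(xs: Sequence[float]) -> float:
    """Assume the next drop equals the average drop per step so far."""
    mean_drop = (xs[0] - xs[-1]) / (len(xs) - 1)
    return xs[-1] - mean_drop


print(f"reduction: {percent_reduction(intervals[0], intervals[-1]):.1f}%")
print(f"last-drop rule:  {extrapolate_last_drop(intervals):.1f} days")
print(f"last-ratio rule: {extrapolate_last_ratio(intervals):.1f} days")
print(f"mean-drop rule:  {extrapolate_mean_drop(intervals):.1f} days")
```

Under these assumptions the next-interval estimates range from roughly 24 to 48 days, so a figure near 40 days is plausible but depends heavily on which rule is applied to only three data points.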

Horizontal Comparison: Other Models’ Release Cadence

| Model Series | Current Release Interval | Trend |
|---|---|---|
| Claude (Anthropic) | ~59 days | Accelerating ⬇️ |
| GPT (OpenAI) | ~90 days | Relatively stable |
| Gemini (Google) | ~60 days | Accelerating ⬇️ |
| Qwen (Alibaba) | ~45 days | Fast iteration |
| DeepSeek | ~60 days | Stable |

Claude’s acceleration is not an isolated phenomenon, but its 59-day pace is already among the fastest in the industry, behind only Qwen’s ~45 days in this comparison.

Impact on Engineering Practices

1. Model Version Pinning Strategy Needs Restructuring

If your production environment pins Claude model versions:

| Evaluation Cadence | Verdict Today |
|---|---|
| Annual model upgrade planning | ❌ Not sufficient |
| Semi-annual evaluation | ⚠️ May miss important updates |
| Quarterly evaluation + on-demand upgrade | ✅ Recommended approach |
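
One way to make a quarterly cadence enforceable is to record, next to each pinned model identifier, the date it was last evaluated, and to flag pins that are overdue. The sketch below is illustrative only: `PinnedModel`, `REVIEW_INTERVAL`, `overdue`, and the model identifier strings are hypothetical names, not part of any provider SDK.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

# Assumption: "quarterly" is approximated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass(frozen=True)
class PinnedModel:
    model_id: str         # placeholder; use the exact string your provider documents
    last_evaluated: date  # when this pin was last checked against newer versions

    def review_due(self, today: date) -> bool:
        """True once the pin has gone a full review interval without evaluation."""
        return today - self.last_evaluated >= REVIEW_INTERVAL


def overdue(pins: List[PinnedModel], today: date) -> List[str]:
    """Return the identifiers of pins whose review is due."""
    return [p.model_id for p in pins if p.review_due(today)]


# Example data, invented for the demonstration.
pins = [
    PinnedModel("example-model-a", date(2026, 1, 10)),
    PinnedModel("example-model-b", date(2026, 4, 1)),
]
print(overdue(pins, date(2026, 4, 15)))
```

A check like this could run in CI or a scheduled job, so an overdue pin shows up as a failing check rather than relying on someone remembering the calendar.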

2. Regression Testing Frequency Must Increase

Each model iteration may bring:

- Capability improvements (new features, higher accuracy)

- Behavioral changes (output format, reasoning patterns)

- Potential regressions (capability degradation in some scenarios)

Recommendation: Build a regression test suite that runs automatically after each model update.
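
A regression suite of this kind can start very small. The following is a minimal sketch, assuming a model can be represented as a plain callable from prompt to text. `Case`, `run_suite`, `regressions`, and the two stub models are hypothetical and stand in for real provider calls.

```python
import json
from dataclasses import dataclass
from typing import Callable, List

# Assumption: a "model" is any callable from prompt text to response text.
Model = Callable[[str], str]


@dataclass
class Case:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the response is acceptable


def run_suite(model: Model, cases: List[Case]) -> List[str]:
    """Return the names of cases whose check fails for this model."""
    failures = []
    for case in cases:
        try:
            ok = case.check(model(case.prompt))
        except Exception:
            ok = False  # a crashing call or check counts as a failure
        if not ok:
            failures.append(case.name)
    return failures


def regressions(baseline: Model, candidate: Model, cases: List[Case]) -> List[str]:
    """Cases that pass on the baseline model but fail on the candidate."""
    baseline_failures = set(run_suite(baseline, cases))
    return [n for n in run_suite(candidate, cases) if n not in baseline_failures]


def is_json(text: str) -> bool:
    try:
        json.loads(text)
        return True
    except ValueError:
        return False


# Stub models: the "new" one changes its output format for one prompt.
def old_model(prompt: str) -> str:
    return '{"answer": 4}' if "2+2" in prompt else "Paris"


def new_model(prompt: str) -> str:
    return "The answer is 4." if "2+2" in prompt else "Paris"


CASES = [
    Case("json_format", "Return 2+2 as JSON", is_json),
    Case("capital", "Capital of France?", lambda r: "Paris" in r),
]
print(regressions(old_model, new_model, CASES))
```

Comparing against a baseline, rather than only reporting failures, separates behavior that changed with the new version from checks that were already failing.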

3. Necessity of Multi-Model Routing Strategies Rises

When a single model’s iteration speed becomes too fast, multi-model routing becomes key to risk reduction:

Request enters

├── Stable tasks → Fixed version model (verified)

├── New feature exploration → Latest version model

└── Critical path → Dual model cross-verification

Competitive Landscape Interpretation

Why Is Anthropic Accelerating?

- Competitive pressure: OpenAI GPT-5.5, Google Gemini 3.2 Flash, and Qwen3.6 are all shipping in quick succession

- Technical maturity: Training infrastructure and evaluation processes are now standardized, reducing iteration costs

- Market demand: Enterprise expectations for AI capabilities continue to rise

Impact on Other Players

- OpenAI: GPT series needs to maintain at least the same iteration speed

- Google: Gemini’s acceleration trend is synchronized with Claude

- Chinese models: Qwen already iterates roughly every 45 days, the fastest cadence in the comparison above

Action Recommendations

For teams using the Claude API:

- Incorporate model version evaluation into quarterly engineering meetings

- Establish automated testing processes for new versions

- Consider multi-model routing architecture to reduce single-model upgrade risk
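
The multi-model routing recommendation (stable tasks to a pinned version, exploratory work to the latest version, critical paths cross-checked by two models) could be sketched as follows. Everything here is hypothetical: `TaskKind`, `make_router`, and `CrossCheckError` are illustrative names, the models are stub callables, and the exact-match comparison is a placeholder for a real agreement check.

```python
from enum import Enum
from typing import Callable

# Assumption: a "model" is any callable from prompt text to response text.
Model = Callable[[str], str]


class TaskKind(Enum):
    STABLE = "stable"            # well-understood, already verified workloads
    EXPLORATORY = "exploratory"  # trying out new capabilities
    CRITICAL = "critical"        # results must be cross-verified


class CrossCheckError(RuntimeError):
    """Raised when the two models disagree on a critical-path request."""


def make_router(pinned: Model, latest: Model, second_opinion: Model):
    """Build a routing function over three model callables."""

    def route(kind: TaskKind, prompt: str) -> str:
        if kind is TaskKind.STABLE:
            return pinned(prompt)
        if kind is TaskKind.EXPLORATORY:
            return latest(prompt)
        # Critical path: query two models and require agreement.
        first, second = pinned(prompt), second_opinion(prompt)
        # Assumption: normalized exact match stands in for a real comparison
        # (structured-field or semantic checks would be more realistic).
        if first.strip().lower() != second.strip().lower():
            raise CrossCheckError(f"models disagree: {first!r} vs {second!r}")
        return first

    return route


# Stub models for demonstration only.
route = make_router(
    pinned=lambda prompt: "approve",
    latest=lambda prompt: "approve (new wording)",
    second_opinion=lambda prompt: " Approve ",
)
print(route(TaskKind.STABLE, "Refund order 17?"))
print(route(TaskKind.EXPLORATORY, "Refund order 17?"))
print(route(TaskKind.CRITICAL, "Refund order 17?"))
```

Raising on disagreement, rather than silently picking one answer, keeps the critical path conservative: the caller decides whether to retry, escalate to a human, or fall back.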

For entrepreneurs choosing tech stacks:

- Don’t assume any model version will remain stably available for more than 3 months

- Abstract your LLM call layer to minimize the cost of switching models

- Evaluate the value of open-source models as a “stability anchor”: their iteration rhythm is under your own control
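
Abstracting the LLM call layer can be as simple as having application code depend on one small interface and selecting the backend by name. The sketch below assumes a single `complete` method is enough. `ChatModel`, `EchoBackend`, and `ModelRegistry` are hypothetical names, and a real adapter would wrap a provider SDK or a self-hosted open-source model where the stub is.

```python
from typing import Dict, Optional, Protocol


class ChatModel(Protocol):
    """Minimal interface the application depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoBackend:
    """Stand-in backend; a real adapter would call a provider or local model."""

    def __init__(self, tag: str) -> None:
        self.tag = tag

    def complete(self, prompt: str) -> str:
        return f"[{self.tag}] {prompt}"


class ModelRegistry:
    """Maps logical names to backends so callers never name a vendor."""

    def __init__(self) -> None:
        self._backends: Dict[str, ChatModel] = {}
        self._active: Optional[str] = None

    def register(self, name: str, backend: ChatModel) -> None:
        self._backends[name] = backend

    def use(self, name: str) -> None:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no backend selected")
        return self._backends[self._active].complete(prompt)


registry = ModelRegistry()
registry.register("hosted", EchoBackend("hosted"))
registry.register("self-hosted", EchoBackend("self-hosted"))

registry.use("hosted")
print(registry.complete("Summarize the release notes"))

registry.use("self-hosted")  # switching models is a one-line change
print(registry.complete("Summarize the release notes"))
```

With this shape, moving to a new model version or to an open-source fallback touches only the registration and selection lines, not the call sites.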