A Key Detail Most People Missed

Recently, a rumor about Kimi K3 has been spreading in the Chinese AI community:

“Kimi K3 is reportedly planned for a Q3 release, with a parameter scale exceeding 2.5 trillion; internal experiments have already tested context lengths far beyond 1 million tokens, but it remains uncertain whether the 1M context will be opened to users. The main bottleneck limiting Kimi’s rollout of 1M context is not technology, but computing resources.”

Pay attention to that last sentence — the bottleneck is not technology, but computing power.

This might be the most easily misunderstood, yet most decisive turning point in the 2026 LLM competition.

Technical Feasibility vs. Commercial Viability

From a technical standpoint, 1 million token context is no longer a question of “can it be done.”

DeepSeek V4 Flash/Pro already supports 1M context, and Kimi K3’s internal experiments have reportedly run beyond 1M tokens as well. Several open-source projects are also experimenting with ultra-long context.

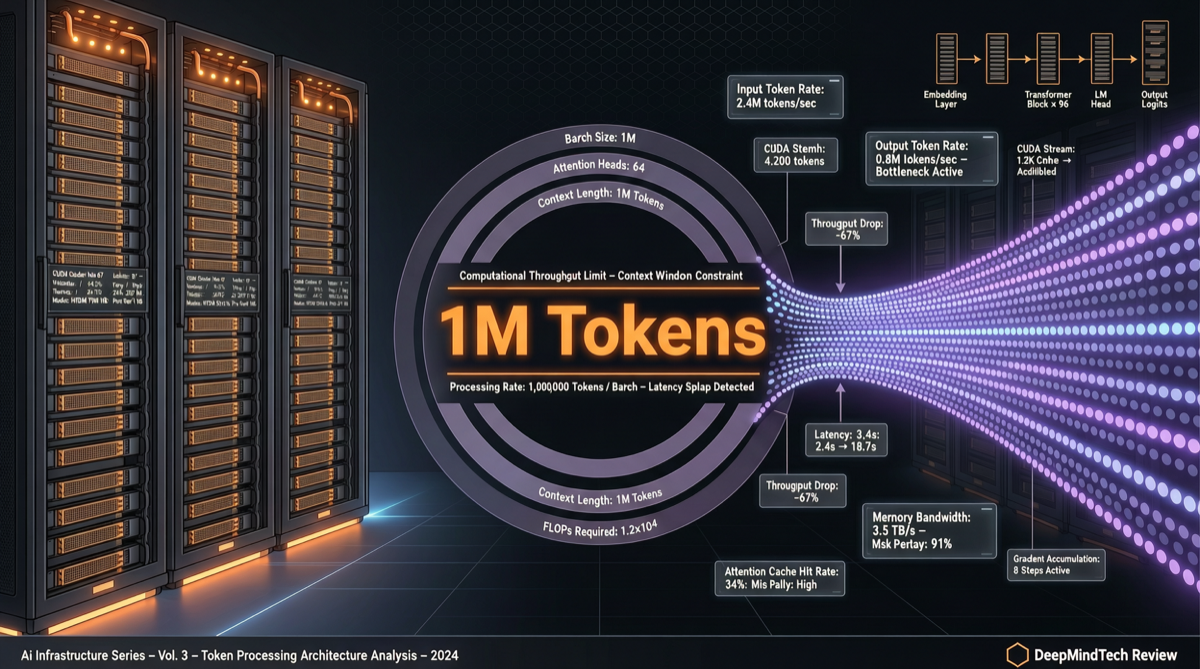

The real challenge is: when 1 million tokens flood into the model, how much computing power is needed to serve a single inference?

Rough estimates:

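- KV cache for 1M tokens at FP16 precision grows linearly with layer count and key/value width; depending on the architecture, it can range from several GB for heavily compressed caches to more than 100 GB of VRAM per request for conventional attention layouts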

- A complete 1M context inference on an A100 may take tens of seconds to several minutes

- If 10,000 users simultaneously initiate 1M context requests, the required GPU cluster scale is astronomical
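To make the rough estimates above concrete, here is a minimal back-of-the-envelope sketch. The attention shape in `KVConfig` (32 layers, 8 KV heads, head dimension 128) and the 80 GiB GPU are hypothetical values chosen for illustration; they are not the configuration of Kimi K3, DeepSeek V4, or any other real model. The estimate covers the KV cache only and ignores model weights, activations, and any cache compression, so the result shifts heavily with the assumed architecture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KVConfig:
    """Hypothetical attention shape; not taken from any real model."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_value: int = 2  # FP16 stores each value in 2 bytes


def kv_cache_bytes(cfg: KVConfig, num_tokens: int) -> int:
    """KV cache size for one request: one key and one value vector
    per token, per layer, per KV head."""
    per_token = 2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * cfg.bytes_per_value
    return per_token * num_tokens


def gpus_for_cache(cfg: KVConfig, num_tokens: int,
                   concurrent_requests: int, gpu_memory_gib: int = 80) -> int:
    """GPUs needed just to hold the KV caches of all concurrent requests
    (weights and activations are deliberately ignored)."""
    total = kv_cache_bytes(cfg, num_tokens) * concurrent_requests
    gpu_bytes = gpu_memory_gib * 2**30
    return -(-total // gpu_bytes)  # ceiling division on integers


# Assumed, illustrative configuration.
cfg = KVConfig(num_layers=32, num_kv_heads=8, head_dim=128)

per_request_gib = kv_cache_bytes(cfg, 1_000_000) / 2**30
print(f"KV cache per 1M-token request: {per_request_gib:.1f} GiB")
print(f"GPUs for 10,000 concurrent requests: {gpus_for_cache(cfg, 1_000_000, 10_000)}")
```

Under these assumptions a single 1M-token request needs about 122 GiB of cache, and 10,000 concurrent requests would need roughly 15,000 GPUs of 80 GiB each for cache storage alone. Architectures that compress or share the cache shrink these numbers, which is exactly where efficiency work pays off.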

This is why Kimi (Moonshot AI), despite reportedly having validated the technology internally, still hesitates to open it to users: the computing costs would eat up the profits.

The Computing Resources Each Player Holds

In this 1M context race, the computing resources each player holds vary enormously:

DeepSeek: Operates its own computing clusters and partners with multiple computing providers. V4 Flash/Pro already supports 1M context. Its confidence comes from strong model efficiency optimization: DeepSeek needs less computing power to serve the same context length.

Moonshot AI (Kimi): Has received substantial funding, but is still catching up in computing infrastructure. This is also why K3’s 1M context is “internally tested” but “uncertain whether to open.”

Alibaba (Qwen): Backed by Alibaba Cloud’s computing infrastructure, theoretically the most capable of providing large-scale 1M context services. But Qwen’s strategy focuses more on model efficiency and multi-scenario adaptation rather than purely pursuing context length.

Zhipu AI (GLM): Has accumulated expertise in long context, but computing scale is a constraining factor.

Why Does This Matter?

Because 1M context isn’t just about “reading more” — it redefines what AI can do:

- Complete codebase analysis: Read an entire project’s source code at once for global refactoring

- Long document understanding: An entire book, legal contract, or financial report, analyzed in one pass

- Multi-turn dialogue memory: Interaction history with AI no longer needs to be “truncated” or “compressed”

- Data analysis: Feed in massive structured datasets at once and draw conclusions directly

When a model is the first to provide 1M context at an affordable price, it gains a structural advantage in these scenarios — not because the model is smarter, but because it can “see” more information.

Three Battlefields in the Computing Race

Extending from the Kimi K3 rumor, the computing race in the 2026 LLM industry concentrates on three levels:

1. Training Computing: The Parameter Scale Ceiling

A parameter count above 2.5 trillion, as rumored for Kimi K3, means the computing power required for training is enormous. This isn’t about “buying a few more cards”; it requires systematically building full-stack capabilities from chips to clusters.

2. Inference Computing: The Deciding Factor for Service Costs

The service cost of 1M context determines who can commercialize at scale. DeepSeek has reduced inference costs through model architecture optimization (MoE, sparsification, etc.), which may be the key reason it opened 1M context faster than competitors.
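As a rough illustration of why MoE lowers per-token inference cost, the sketch below compares total and active parameters. It relies on the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token, which ignores attention over the context. All parameter counts here are hypothetical and are not figures from DeepSeek or any other vendor.

```python
def moe_total_params(shared_params: int, params_per_expert: int, num_experts: int) -> int:
    """All parameters stored in memory: shared layers plus every expert."""
    return shared_params + params_per_expert * num_experts


def moe_active_params(shared_params: int, params_per_expert: int, experts_per_token: int) -> int:
    """Parameters actually used for one token: shared layers plus routed experts."""
    return shared_params + params_per_expert * experts_per_token


def forward_flops_per_token(active_params: int) -> int:
    """Rule-of-thumb estimate: about 2 FLOPs per active parameter per token."""
    return 2 * active_params


# Hypothetical model: 20B shared parameters, 256 experts of 2B each, 8 routed per token.
SHARED = 20_000_000_000
PER_EXPERT = 2_000_000_000

total = moe_total_params(SHARED, PER_EXPERT, num_experts=256)
active = moe_active_params(SHARED, PER_EXPERT, experts_per_token=8)

print(f"total parameters:  {total / 1e9:.0f}B")
print(f"active parameters: {active / 1e9:.0f}B ({active / total:.1%} of total)")
print(f"forward FLOPs per token: {forward_flops_per_token(active):.2e}")
```

In this made-up configuration only 36B of 532B parameters are touched per token, so compute per token is a small fraction of what a dense model of the same total size would need. Memory for weights and the KV cache is not reduced by routing, so long-context serving still depends on cache efficiency.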

3. Edge Computing: The Future of Local Deployment

Qwen 3.6’s outstanding performance in local models shows another parallel path: fitting a sufficiently powerful model into consumer-grade hardware. This isn’t the 1M context route, but it may be a more practical “good enough” strategy.

Signals for the Industry and Investors

- Computing power is the real moat. Model architectures can be imitated, papers can be reproduced, but computing infrastructure requires time and capital accumulation.

- 1M context will be a watershed. Companies that can afford it will gain differentiated advantages; those that can’t will be forced to compete on “good enough” context lengths.

- Q3 is a critical window. If Kimi K3 launches on schedule in Q3 and opens 1M context, Moonshot AI will prove its computing infrastructure has reached a new level. If delayed or scaled back, it suggests the computing bottleneck is more severe than external observers expected.

The LLM competition has shifted from “whose paper is stronger” to “whose computing is sufficient.” This isn’t a sexy narrative, but it’s the key to determining the winner.