Core Conclusion

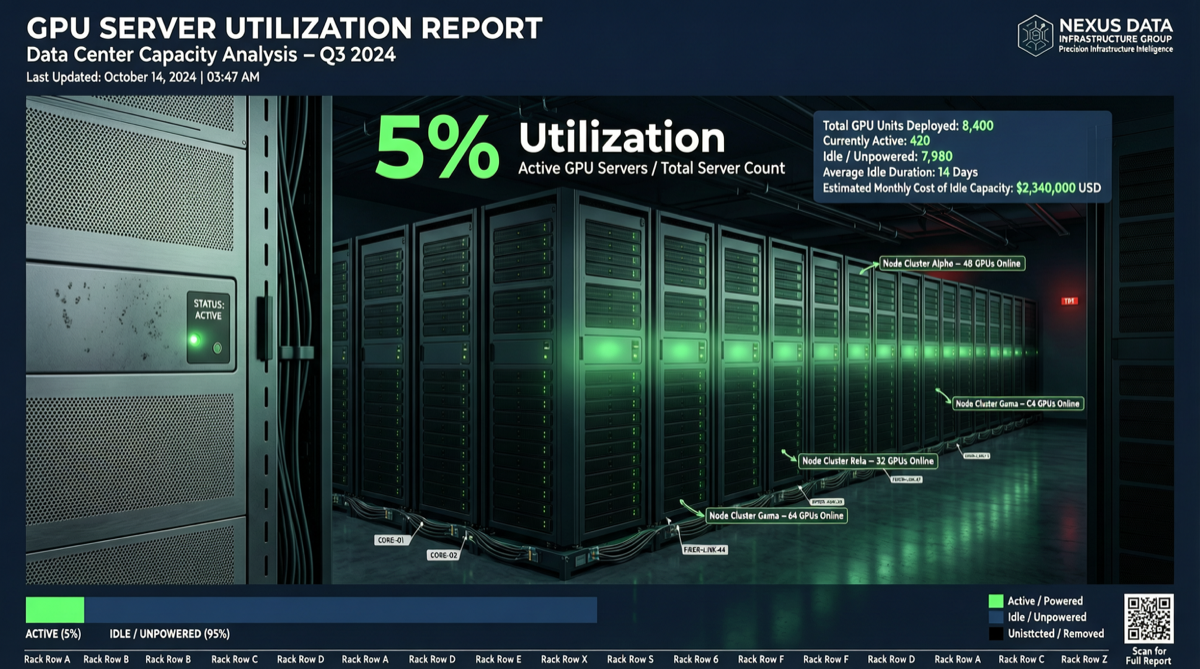

Cast AI’s analysis of approximately 23,000 Kubernetes clusters reveals a striking figure: average enterprise GPU utilization is only 5%. In other words, 95% of GPU compute sits idle. CPU and memory fare little better, at 8% and 20% utilization respectively.

This is not an anomaly from a small sample—it is systematic waste across the entire industry.

Data Overview

Resource Utilization Comparison

| Resource Type | Average Utilization | Idle Ratio | Waste Level |
|---|---|---|---|
| GPU | 5% | 95% | Extreme |
| CPU | 8% | 92% | Extreme |
| Memory | 20% | 80% | Severe |

Why Does This Happen?

Fear-Based Provisioning: Enterprises are afraid of missing GPU allocations, afraid of performance bottlenecks, and afraid of complaints from business teams, so they massively overprovision. This mindset is similar to toilet paper panic buying during the pandemic—not because of need, but because of “fear of running out.”

Key Findings Breakdown

1. What Does 5% GPU Utilization Mean?

Suppose an enterprise runs 100 H100 GPUs around the clock at roughly $3-4 per GPU-hour, the rate that the annual figure below implies. At 5% utilization:

- Effective compute: equivalent to 5 H100s running at full speed

- Wasted compute: equivalent to 95 H100s idling

- Annual waste cost: approximately $2.5-3.2 million

This does not include the accompanying CPU, memory, network, cooling, and other infrastructure costs.
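The arithmetic behind the annual waste figure can be sketched as follows. This is a rough reconstruction rather than the original calculation: it assumes the GPUs are billed for all 8,760 hours of the year and that the hourly price applies per GPU. Under those assumptions, a rate of about $3.00 to $3.85 per GPU-hour reproduces the $2.5-3.2 million range. The function name `annual_idle_cost` and the rates are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, assuming GPUs are billed around the clock


def annual_idle_cost(gpu_count: int, rate_per_gpu_hour: float, utilization: float) -> float:
    """Yearly spend on GPU hours that do no useful work."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    idle_fraction = 1.0 - utilization
    return gpu_count * rate_per_gpu_hour * HOURS_PER_YEAR * idle_fraction


if __name__ == "__main__":
    # Assumed per-GPU-hour rates chosen to match the range above, not quoted prices.
    for rate in (3.00, 3.85):
        cost = annual_idle_cost(gpu_count=100, rate_per_gpu_hour=rate, utilization=0.05)
        print(f"${rate:.2f}/GPU-hour -> ${cost / 1e6:.2f}M idle per year")
```

Because the cost scales linearly with every input, a different fleet size or price simply scales the result in proportion.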

2. New CPU-GPU Imbalance

Another overlooked trend: GPU performance is improving far faster than CPU performance, so the CPU capacity needed to support each unit of AI compute is falling behind. AI labs now compete directly with hyperscale cloud providers for x86 CPU capacity, which drives overall costs up further.

3. Multiple Resources Idle Simultaneously

GPU, CPU, and memory are all at low utilization simultaneously, indicating the problem is not a configuration error in a single resource, but a systematic failure in overall resource planning methodology.

Why It Matters

Direct Impact on Enterprises

- Cost Black Hole: At 5% utilization, roughly 95% of a GPU budget pays for idle capacity, and those budgets can run to hundreds of millions of dollars

- Competitiveness Decline: With the same budget, an enterprise running near full utilization gets up to 20x the actual compute of one running at 5% (100% versus 5%)

- Environmental Impact: Idle GPUs still draw power and generate carbon emissions

Industry-Level Signals

| Signal | Meaning |
|---|---|
| GPU shortage is an illusion | True demand is far lower than surface demand |
| Cloud provider GPU pricing power may weaken | When enterprises realize waste, procurement strategies will change |
| Resource optimization tool market explosion | Auto-scaling, mixed-workload scheduling, GPU time-sharing will become essential |

Action Recommendations

Enterprise CTO/Technical Leaders

- Immediately audit GPU utilization: Use Prometheus + NVIDIA DCGM to monitor actual GPU usage

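- Implement GPU sharing (MIG or time-slicing): MIG partitions a single supported GPU into multiple isolated instances, while time-slicing lets several workloads take turns on one GPU; both improve concurrent utilization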

- Introduce auto-scaling strategies: Dynamically adjust GPU allocation based on actual load, not static allocation

- Establish cost accountability: Include GPU utilization in team KPIs
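As a starting point for the audit step, the sketch below queries Prometheus for the 7-day average of `DCGM_FI_DEV_GPU_UTIL`, the GPU utilization gauge published by NVIDIA's dcgm-exporter, and lists GPUs below a threshold. It assumes dcgm-exporter is already being scraped and that the series carry `Hostname` and `gpu` labels. Label names vary with exporter configuration, and the 10% threshold and the function names are illustrative.

```python
import json
import urllib.parse
import urllib.request

# Assumed PromQL: 7-day average of the dcgm-exporter GPU utilization gauge (0-100).
QUERY = "avg_over_time(DCGM_FI_DEV_GPU_UTIL[7d])"


def fetch_instant_vector(prometheus_url: str, query: str = QUERY) -> dict:
    """Run an instant query against the Prometheus HTTP API and return the JSON body."""
    params = urllib.parse.urlencode({"query": query})
    url = f"{prometheus_url.rstrip('/')}/api/v1/query?{params}"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


def underutilized_gpus(payload: dict, threshold_pct: float = 10.0) -> list[tuple[str, float]]:
    """Return (GPU name, average utilization %) pairs below the threshold, lowest first."""
    if payload.get("status") != "success":
        raise RuntimeError(f"Prometheus query failed: {payload.get('error', 'unknown error')}")
    flagged = []
    for series in payload["data"]["result"]:
        labels = series["metric"]
        # Label names depend on the exporter configuration; fall back to "?" if absent.
        name = f"{labels.get('Hostname', '?')}/gpu{labels.get('gpu', '?')}"
        _timestamp, value = series["value"]  # Prometheus encodes sample values as strings
        utilization = float(value)
        if utilization < threshold_pct:
            flagged.append((name, utilization))
    return sorted(flagged, key=lambda item: item[1])
```

A typical call would be `underutilized_gpus(fetch_instant_vector("http://prometheus.example:9090"))`, where the URL is a placeholder for the cluster's own Prometheus endpoint. The resulting list is a natural input for the per-team utilization reporting described above.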

AI Engineers

- Batch inference over real-time inference: Merge multiple inference requests to improve GPU throughput

- Model quantization and distillation: Use smaller models to meet business needs, reducing GPU dependency

- Use inference optimization frameworks: vLLM, TensorRT-LLM and other frameworks can significantly improve GPU utilization
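The batching idea can be illustrated with a minimal sketch that groups incoming requests into fixed-size batches, so the model runs once per batch instead of once per request. Here `model_fn` is a hypothetical callable that takes a list of inputs and returns a list of outputs in the same order, and the batch size of 8 is arbitrary. Serving frameworks such as vLLM apply more sophisticated continuous batching internally, so this only shows the basic principle.

```python
from typing import Callable, Iterable, Iterator, TypeVar

Request = TypeVar("Request")
Response = TypeVar("Response")


def batched(requests: Iterable[Request], max_batch_size: int) -> Iterator[list[Request]]:
    """Yield consecutive batches of at most max_batch_size requests."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    batch: list[Request] = []
    for request in requests:
        batch.append(request)
        if len(batch) == max_batch_size:
            yield batch
            batch = []
    if batch:  # flush the final partial batch
        yield batch


def run_batched(
    requests: Iterable[Request],
    model_fn: Callable[[list[Request]], list[Response]],
    max_batch_size: int = 8,
) -> list[Response]:
    """Call model_fn once per batch and return responses in request order."""
    responses: list[Response] = []
    for batch in batched(requests, max_batch_size):
        responses.extend(model_fn(batch))
    return responses
```

Larger batches generally raise throughput at the cost of per-request latency, so the batch size is a trade-off to tune against the service's latency target.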

Investors/Analysts

- Focus on the resource optimization sector: GPU optimization platforms such as Cast AI, Run:ai, and Volcon AI are demonstrating their value

- Beware of compute narrative bubbles: GPU purchase volume does not equal AI capability; utilization is the key metric

- Find “20x efficiency gap” enterprises: Companies that extract up to 20x more useful compute from the same budget will gain an enormous competitive advantage

Landscape Judgment

The turning point for compute waste may be approaching.

When the first enterprises achieve “completing the same AI tasks at 1/20 the cost” through optimization, the industry will have to face this problem. This is not a technology upgrade issue—it is a fundamental shift in management methodology.

At the same time, this creates a huge opportunity for AI startups: whoever can help customers raise GPU utilization from 5% to 50%, a tenfold gain in useful compute from the same hardware, holds the key to the trillion-dollar compute market.