Key Conclusion

Claude Opus 4.7 accomplished a task previously thought to require human researchers: implementing an AlphaZero-style self-play pipeline from scratch.

- Completed in just 3 hours on consumer hardware

- Won 7 of 8 games as first mover against the Pascal Pons Connect Four solver, which plays perfectly

- No other frontier coding agent tested won more than 2 of 8

This is not an “AI can play board games” demo. It is an end-to-end loop carried out by the agent itself: autonomous research → algorithm implementation → model training → result validation.

Experiment Details

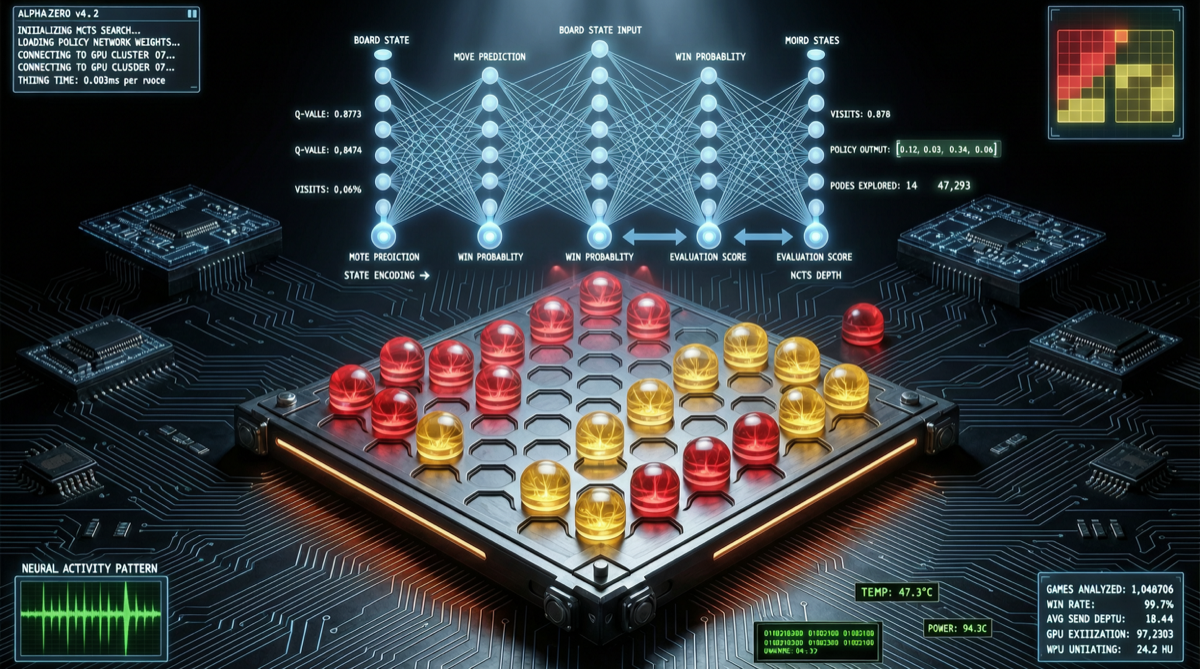

Task: Implement an AlphaZero-style self-play reinforcement learning pipeline that trains a Connect Four player from scratch.

AlphaZero’s core approach:

- No human game records — learning purely through self-play

- Combines Monte Carlo Tree Search (MCTS) with a neural network that supplies move priors and position evaluations

- Bootstrapping: better model → stronger opponent → better training data → better model
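The way the search and the network interact can be sketched with the PUCT selection rule commonly used in AlphaZero-style MCTS: each candidate move is scored by its average value so far plus an exploration bonus scaled by the network's prior. This is a minimal illustration, not the code produced in the experiment; the `Edge` class, the function names, and the `c_puct=1.5` default are assumptions made for this example.

```python
import math
from dataclasses import dataclass
from typing import Dict


@dataclass
class Edge:
    """Search statistics for one move out of a tree node (hypothetical structure)."""
    prior: float            # P(s, a): move probability from the policy network
    visits: int = 0         # N(s, a): how often the search has tried this move
    value_sum: float = 0.0  # W(s, a): sum of values backed up through this move

    @property
    def q(self) -> float:
        # Mean backed-up value; 0.0 for a move that has never been visited.
        return self.value_sum / self.visits if self.visits else 0.0


def puct_score(edge: Edge, parent_visits: int, c_puct: float = 1.5) -> float:
    """Q(s, a) plus an exploration bonus that shrinks as the move is visited."""
    bonus = c_puct * edge.prior * math.sqrt(parent_visits) / (1 + edge.visits)
    return edge.q + bonus


def select_action(edges: Dict[int, Edge], c_puct: float = 1.5) -> int:
    """Pick the move (here, a column index) with the highest PUCT score."""
    parent_visits = sum(e.visits for e in edges.values())
    return max(edges, key=lambda a: puct_score(edges[a], parent_visits, c_puct))


# Column 0 has been explored with a modest value; column 3 is unvisited
# but has a high prior, so the exploration bonus favors it.
edges = {0: Edge(prior=0.1, visits=10, value_sum=2.0), 3: Edge(prior=0.6)}
print(select_action(edges))  # 3
```

As the network improves, its priors and value estimates steer the search toward stronger moves, and the resulting search statistics become the next round of training targets, which is the bootstrapping loop described above.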

Claude Opus 4.7’s performance:

- Went from understanding the algorithm through writing code, debugging, and training in 3 hours

- Won 7 of 8 games as first mover against the Pascal Pons solver

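- The Pascal Pons solver plays perfectly, and Connect Four is a solved game in which the first player can force a win. Each of Claude’s wins therefore means its player never gave up that theoretical advantage during the game: a single move that turned the position into a draw or a loss would have let the solver escape.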
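A first-mover evaluation of this kind can be sketched as a small match harness. The source does not describe the exact protocol used, so the version below is an assumption: it plays a fixed number of games with the evaluated agent moving first and counts its wins. The Pascal Pons solver is not reproduced here; `leftmost_agent` and `rightmost_agent` are hypothetical placeholder players used only to exercise the harness.

```python
from typing import Callable, List

ROWS, COLS = 6, 7
# board[r][c]: 0 = empty, 1 = first player, 2 = second player; row 0 is the bottom.
Board = List[List[int]]
Agent = Callable[[Board, int], int]  # (board, player) -> column to play


def new_board() -> Board:
    return [[0] * COLS for _ in range(ROWS)]


def legal_moves(board: Board) -> List[int]:
    # A column is playable while its top cell is still empty.
    return [c for c in range(COLS) if board[ROWS - 1][c] == 0]


def drop(board: Board, col: int, player: int) -> int:
    """Place a disc in the lowest empty cell of `col` and return its row."""
    for r in range(ROWS):
        if board[r][col] == 0:
            board[r][col] = player
            return r
    raise ValueError("column is full")


def is_win(board: Board, row: int, col: int) -> bool:
    """Check whether the disc at (row, col) completes a line of four or more."""
    player = board[row][col]
    for dr, dc in ((0, 1), (1, 0), (1, 1), (1, -1)):
        count = 1
        for sign in (1, -1):  # walk both ways along the direction
            r, c = row + sign * dr, col + sign * dc
            while 0 <= r < ROWS and 0 <= c < COLS and board[r][c] == player:
                count += 1
                r, c = r + sign * dr, c + sign * dc
        if count >= 4:
            return True
    return False


def play_game(first: Agent, second: Agent) -> int:
    """Return 1 if the first mover wins, 2 if the second mover wins, 0 for a draw."""
    board, agents, player = new_board(), {1: first, 2: second}, 1
    while legal_moves(board):
        col = agents[player](board, player)
        row = drop(board, col, player)
        if is_win(board, row, col):
            return player
        player = 3 - player
    return 0


def first_mover_wins(agent: Agent, opponent: Agent, n_games: int = 8) -> int:
    """Count how many of `n_games` the agent wins when it always moves first."""
    return sum(play_game(agent, opponent) == 1 for _ in range(n_games))


# Placeholder players (assumptions for illustration, not real opponents).
def leftmost_agent(board: Board, player: int) -> int:
    return legal_moves(board)[0]


def rightmost_agent(board: Board, player: int) -> int:
    return legal_moves(board)[-1]


print(first_mover_wins(leftmost_agent, rightmost_agent), "of 8")
```

In a real evaluation, `opponent` would wrap calls to a perfect solver and `agent` would wrap the trained network plus search. Because both placeholder players here are deterministic, every game in this demo is identical; a real harness would need some source of variation across the 8 games.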

Comparison results:

| Agent | Wins vs. Pascal Pons solver | Completion time |
|---|---|---|
| Claude Opus 4.7 | 7/8 (first-mover) | 3 hours |
| Other frontier coding agents | ≤ 2/8 | Did not complete in time |

Why This Result Matters

1. Autonomous Research Capability

Implementing AlphaZero requires knowledge across several fields: reinforcement learning, Monte Carlo tree search, neural network training, and game theory. Claude Opus 4.7 demonstrated the full chain: understand a complex algorithm → implement it autonomously → debug and optimize it.

2. Gap from Other Coding Agents

The other coding agents won at most 2 of 8 games on the same task, against 7 of 8 for Claude Opus 4.7. A gap this large suggests that coding agents have begun to differ meaningfully in capability.

3. Consumer Hardware Feasibility

The experiment ran on consumer hardware, not a cloud GPU cluster. For a game the size of Connect Four, AlphaZero-style self-play training is moving from “requires million-dollar compute” to something an individual developer can try.

Action Recommendations

- Researchers: Watch for self-play methods migrating to other domains (optimization problems, program synthesis)

- Agent developers: Treat Claude Opus 4.7’s result as a benchmark for what “research-grade agents” can currently do

- Investors: Agent platforms with autonomous research and end-to-end delivery capabilities may form new technical moats

- General users: No need to panic in the short term. This result demonstrates Claude’s capability on a specific research task, not general software engineering ability