In late April 2026, community developers comparing the latest official prompt guides from OpenAI and Anthropic found that GPT-5.5 and Claude Opus 4.7 call for nearly opposite prompting styles.

GPT-5.5: Don’t Tell Me How, Tell Me What

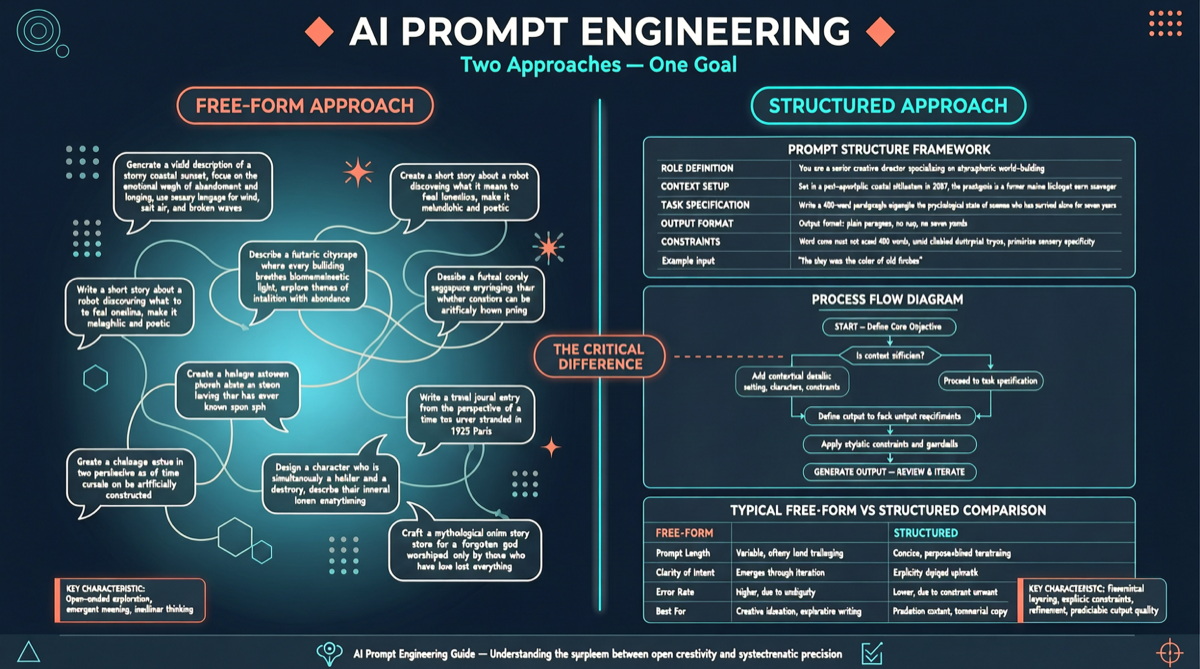

OpenAI’s GPT-5.5 prompt guide recommends:

- Describe your desired end result directly, without explaining the implementation path

- Reduce step-by-step instructions — the detailed prompts that worked before now constrain the model and make responses rigid

- Give the model freedom — let it decide the reasoning path and tool combination to achieve the goal
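As a rough illustration of the outcome-first style described above, the sketch below builds a prompt from a goal and a list of acceptance criteria and deliberately omits any step list. The function name `outcome_prompt` and the exact wording of the prompt are assumptions made for this example, not text taken from OpenAI's guide.

```python
def outcome_prompt(goal: str, success_criteria: list[str]) -> str:
    """Build a prompt that states the desired result and leaves the method open.

    Hypothetical helper: the section labels and wording are illustrative only.
    """
    lines = [f"Goal: {goal}", "", "The result is acceptable when:"]
    lines += [f"- {criterion}" for criterion in success_criteria]
    # No numbered procedure: the model is left to pick its own path and tools.
    lines += ["", "Choose whatever reasoning path and tools you judge best."]
    return "\n".join(lines)


prompt = outcome_prompt(
    "Produce a one-page summary of the attached incident report.",
    ["It names the root cause", "It lists follow-up actions with owners"],
)
print(prompt)
```

The prompt says what "done" looks like and nothing about how to get there, which is the trade the guide is described as recommending.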

This design philosophy assumes GPT-5.5’s reasoning ability is strong enough that it doesn’t need humans to walk it step by step through “how” to do things.

Claude Opus 4.7: Please Specify Every Step

Anthropic’s Claude Opus 4.7 prompt guide takes the opposite approach:

- Structured instructions over free-form descriptions — decompose tasks into clear steps, rules, and constraints

- Define output formats in detail, using examples and templates to standardize the output structure

- Explicitly define boundaries — telling the model what NOT to do is as important as telling it what to do
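The three recommendations above (explicit steps, a fixed output template, and stated boundaries) can be sketched as a single prompt builder. This is a minimal sketch under assumptions: `structured_prompt`, its parameters, and the section labels are hypothetical names chosen for the example and are not quoted from Anthropic's guide.

```python
def structured_prompt(
    task: str,
    steps: list[str],
    output_template: str,
    forbidden: list[str],
) -> str:
    """Build a prompt with numbered steps, an output template, and prohibitions.

    Hypothetical helper: the section labels are illustrative only.
    """
    parts = [f"Task: {task}", "", "Steps:"]
    # Numbered steps make the expected procedure explicit.
    parts += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    # A literal template pins down the output structure.
    parts += ["", "Output format (follow this template exactly):", output_template]
    # Boundaries: what the model must not do.
    parts += ["", "Do NOT:"]
    parts += [f"- {item}" for item in forbidden]
    return "\n".join(parts)


prompt = structured_prompt(
    task="Summarize the attached incident report.",
    steps=["Identify the root cause", "List follow-up actions with owners"],
    output_template="Root cause: <one sentence>\nActions:\n- <owner>: <action>",
    forbidden=["Speculate beyond the report", "Exceed one page"],
)
print(prompt)
```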

Why This Divergence Exists

The difference in prompt philosophies reflects fundamentally different judgments about model capability boundaries and reliability:

- OpenAI believes GPT-5.5 has moved past the point where complex tasks need detailed instructions, so stronger reasoning means less prompt engineering

- Anthropic believes even the strongest models need structured guidance and clear boundaries in production and high-risk scenarios to ensure output consistency and safety

Practical Implications

For agent developers, this means prompt templates for different models require different strategies. A prompt that works well on GPT-5.5 may underperform when directly ported to Claude, and vice versa.
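One way to act on this in an agent codebase is to keep a single task description and render it differently per model family, instead of porting one prompt string between providers. The sketch below is an assumption-laden illustration: `TaskSpec`, `render_for`, the renderer functions, and the model-name prefixes `"gpt-5.5"` and `"claude-opus-4.7"` are all hypothetical and may not match real API model identifiers.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TaskSpec:
    """Model-agnostic description of a task (hypothetical structure)."""
    goal: str
    steps: list[str] = field(default_factory=list)
    forbidden: list[str] = field(default_factory=list)


def render_outcome(spec: TaskSpec) -> str:
    # Outcome-first style: state the goal, leave the method to the model.
    return f"Goal: {spec.goal}\nDecide the approach yourself."


def render_structured(spec: TaskSpec) -> str:
    # Structured style: numbered steps plus explicit prohibitions.
    lines = [f"Task: {spec.goal}", "Steps:"]
    lines += [f"{i}. {s}" for i, s in enumerate(spec.steps, start=1)]
    lines += ["Do NOT:"] + [f"- {f}" for f in spec.forbidden]
    return "\n".join(lines)


# Assumed model-name prefixes; adjust to whatever identifiers your stack uses.
RENDERERS: dict[str, Callable[[TaskSpec], str]] = {
    "gpt-5.5": render_outcome,
    "claude-opus-4.7": render_structured,
}


def render_for(model: str, spec: TaskSpec) -> str:
    """Pick the prompt style registered for the given model name."""
    for prefix, renderer in RENDERERS.items():
        if model.startswith(prefix):
            return renderer(spec)
    raise ValueError(f"no prompt style registered for model {model!r}")


spec = TaskSpec(
    goal="Summarize the incident report.",
    steps=["Identify the root cause", "List follow-up actions"],
    forbidden=["Speculate beyond the report"],
)
print(render_for("gpt-5.5", spec))
print(render_for("claude-opus-4.7", spec))
```

Failing loudly on an unregistered model is a deliberate choice here: silently reusing another model's template is exactly the direct porting the paragraph above warns about.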