Bottom Line First

The release of lambda/hermes-agent-reasoning-traces dataset may be one of the most important infrastructure updates in the AI Agent space in 2026. It enables developers and researchers to observe, analyze, and optimize AI agent reasoning processes at scale for the first time.

Before this, agent debugging was basically “read logs, guess the cause.” Now, with standardized reasoning trace datasets and analysis toolchains, agent development is moving from “craftsmanship” to “engineering.”

What Happened

Dataset Contents

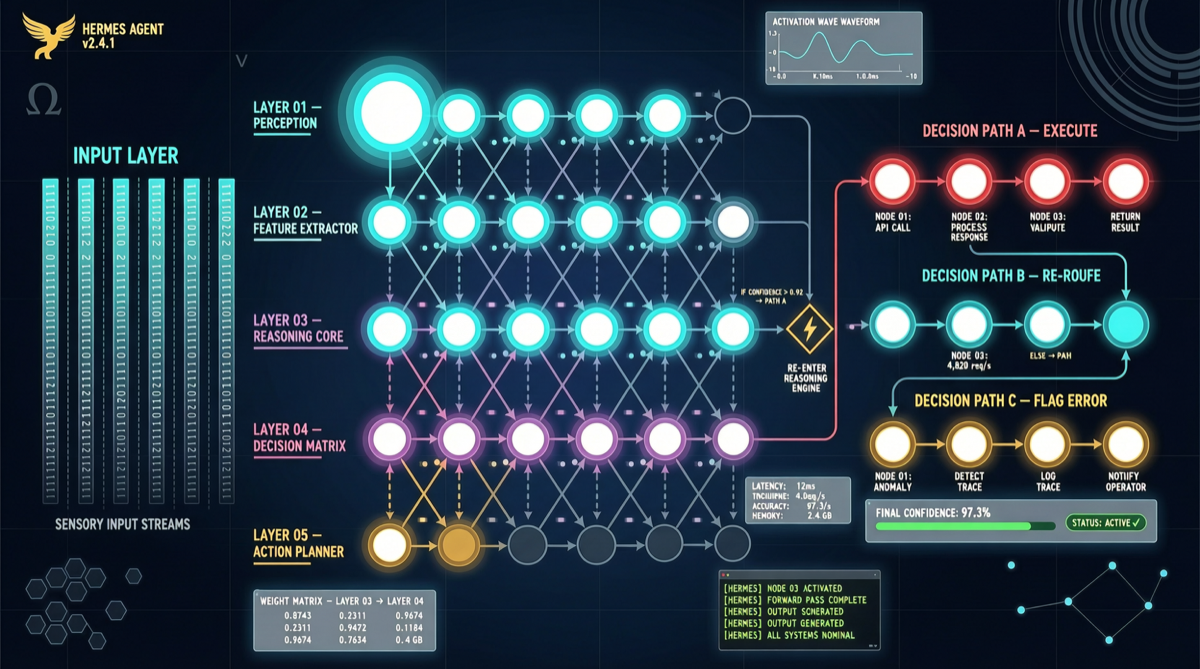

Based on Hermes Agent runtime data, the dataset includes complete reasoning traces from agents processing various tasks:

Each reasoning trace includes:

├── User input (task description)

├── Agent's thinking process (reasoning steps)

├── Tool call sequence

│ ├── Call parameters

│ ├── Return results

│ └── Agent's understanding of results

├── Intermediate decision points

│ ├── Alternative options

│ ├── Selection rationale

│ └── Evaluation of rejected options

├── Final output

└── Execution result evaluationAccompanying Toolchain

| Tool | Function | Output |

|---|---|---|

| Parser | Converts raw traces to structured data | Standardized reasoning step sequences |

| Analyzer | Identifies reasoning patterns and common errors | Statistical reports + pattern classification |

| Visualizer | Converts reasoning process to graphics | Decision trees / flowcharts |

| Fine-Tuning Pipeline | Optimizes models using trace data | Improved reasoning strategies |

Why It Matters

1. Agent Debugging Finally Has a “Data Foundation”

Before: Agent errors → read logs → guess → modify prompt → retry → guess again

Now: Agent errors → query trace dataset → find similar cases → analyze failure patterns → targeted optimization

This is analogous to software development evolving from “print debugging” to “professional profilers.”

2. Reasoning Quality Can Be Quantified and Compared

Researchers can now:

- Measure reasoning depth: How many reasoning steps does an agent average?

- Identify reasoning defects: Which task types cause reasoning breakdowns?

- Compare different models: How do reasoning paths differ for the same task?

3. Fine-Tuning Agent Reasoning Strategies Is Now Possible

- “Teach” agents better reasoning using high-quality traces

- Fine-tune reasoning strategies for specific task domains

- Enable agents to learn from failure

Key Difference from LLM CoT Data

| Dimension | LLM CoT Data | Agent Reasoning Traces |

|---|---|---|

| Scope | Single reasoning process | Multi-step, multi-tool, cross-session |

| Interaction | Pure text reasoning | Includes tool calls and result feedback |

| Time Span | Seconds | Minutes to hours |

| Decision Types | Next token generation | Tool selection, result judgment, strategy adjustment |

Quick Start

git clone https://github.com/lambda/hermes-agent-reasoning-traces

cd hermes-agent-reasoning-traces

jupyter notebook analysis.ipynbLandscape Assessment

2024: Read logs and guess (Primitive era)

2025: Simple trace recording (Pre-observability)

2026: Standardized reasoning traces + analysis tools ← We are here

2027: Real-time reasoning monitoring + automatic root cause analysis

2028: Agent self-diagnosis + self-repairCore Judgment: Reasoning trace data for agents is like log data for traditional software. Without observability, there’s no engineering. This dataset is a key step toward AI agent engineering.