What Happened

The LangChain team recently published benchmark results that drew wide attention across the AI Agent community:

With the same model (GPT-5.2-Codex) and no parameter changes, swapping only the Agent Harness layer raised the Terminal-Bench score from 52.8% to 66.5%, a gain of 13.7 percentage points. The ranking moved from outside the Top 30 into the Top 5.

More importantly, LangChain added a broader observation:

“Models and harnesses CO-EVOLVE. The model gets better at specific tool patterns and feedback loops. The harness gets better at extracting the model’s capabilities.”

In other words, the improvement runs in both directions: models get better at particular tool-calling patterns and feedback loops, while harnesses get better at drawing out what the model can already do.

Why 13.7 Points Matters More Than Model Upgrades

For the past 18 months, the industry narrative has been dominated by “who released the bigger model.” LangChain’s data points the other way:

| Dimension | Traditional Approach | What LangChain Reveals |
|---|---|---|
| Performance source | Model parameters and training data | Harness design carries equal weight |
| Optimization path | Wait for model updates | Change your own scaffolding |
| Competitive moat | Compute/data | Engineering architecture |
| Cost structure | Pay for stronger models | Pay for better design |

What is Terminal-Bench

Terminal-Bench is a benchmark that measures how well AI Coding Agents complete tasks in real terminal environments. Unlike SWE-bench (code repair), Terminal-Bench evaluates the Agent’s end-to-end capabilities on the command line: environment setup, dependency installation, debugging, and file operations. That is much closer to a real developer’s daily work.

The leap from 52.8% to 66.5% means the Agent moves from completing roughly half of the benchmark’s tasks to about two-thirds, a shift from “frequently getting stuck halfway” toward “capable of independently completing most terminal tasks.”

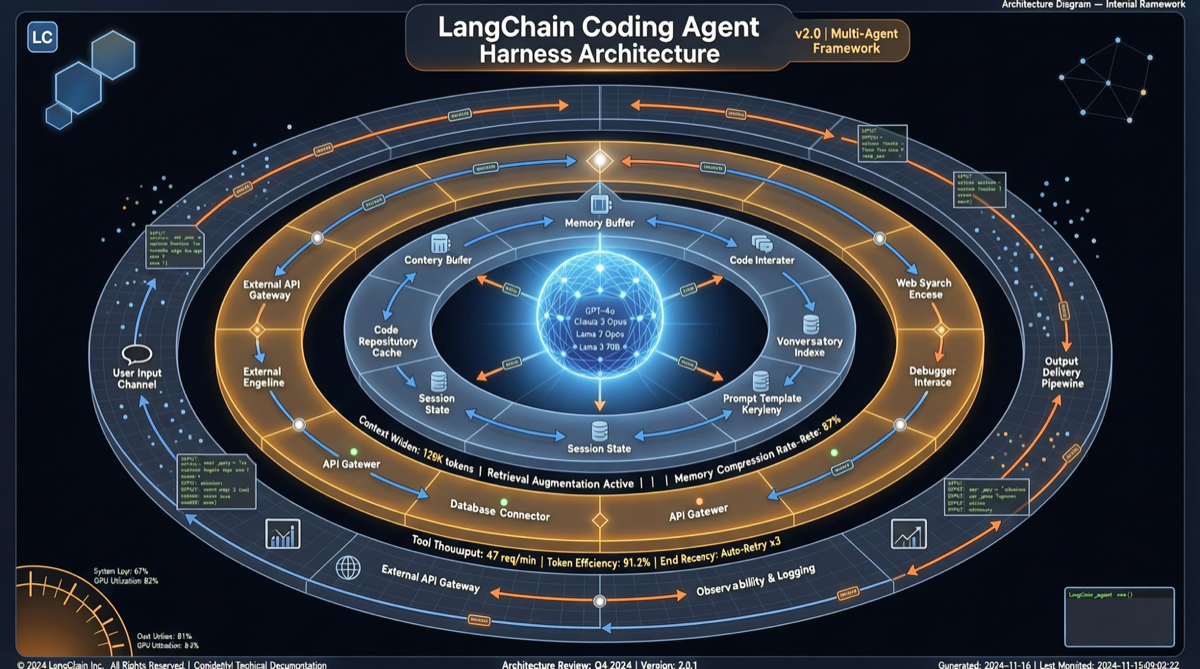

What Exactly Changed in the Harness

Based on LangChain’s public hints and industry analysis, the core improvements appear to concentrate in three layers:

1. Context Management Strategy

- Dynamic compression: no longer simple truncation, but intelligent retention of critical context

- Tool call history layering: recent = detailed, older = summarized

- Filesystem awareness: automatically identifies which file states need persistence
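The history-layering idea above (recent calls kept in detail, older calls summarized) can be sketched roughly as follows. This is a minimal illustration, not LangChain's implementation: `ToolCall`, `summarize`, and `build_history` are hypothetical names, and the summarizer is a simple truncation standing in for whatever a real harness would use.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    """One recorded tool invocation (hypothetical structure)."""
    command: str
    output: str


def summarize(call: ToolCall, max_chars: int = 60) -> str:
    """Placeholder summarizer: the command plus a truncated first output line.

    A real harness might ask a model to write the summary instead.
    """
    lines = call.output.splitlines()
    first_line = lines[0] if lines else ""
    return f"{call.command} -> {first_line[:max_chars]}"


def build_history(calls: list[ToolCall], keep_recent: int = 2) -> list[str]:
    """Render history: older calls summarized, the most recent ones in full."""
    split = max(len(calls) - keep_recent, 0)
    older, recent = calls[:split], calls[split:]
    rendered = [f"[summary] {summarize(c)}" for c in older]
    rendered += [f"[full] {c.command}\n{c.output}" for c in recent]
    return rendered
```

The `keep_recent` cutoff is the main tuning knob here: a larger value preserves more detail at the cost of more context tokens.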

2. Tool Call Orchestration

- Parallel tool calls: multiple independent operations execute concurrently

- Failure retry logic: differentiated recovery strategies for different error types

- Tool chain composition: atomic operations composed into composite tools
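As a rough sketch of the first two points, independent tool calls can run concurrently while each call gets a recovery strategy that depends on the error type. The split between "transient" and other errors, and the names `call_with_retry` and `run_parallel`, are assumptions made for illustration; the source does not describe LangChain's actual retry policy.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Assumption: these error types are worth retrying; anything else is not.
TRANSIENT_ERRORS = (TimeoutError, ConnectionError)


def call_with_retry(tool: Callable[[], str], max_attempts: int = 3,
                    delay: float = 0.0) -> str:
    """Retry transient failures; report other errors back immediately."""
    for attempt in range(1, max_attempts + 1):
        try:
            return tool()
        except TRANSIENT_ERRORS as exc:
            if attempt == max_attempts:
                return f"error: gave up after {attempt} attempts ({exc})"
            time.sleep(delay)
        except Exception as exc:
            # Non-transient: retrying will not help, so surface it as feedback.
            return f"error: {type(exc).__name__}: {exc}"
    return "error: no attempts made"  # only reached if max_attempts < 1


def run_parallel(tools: list[Callable[[], str]]) -> list[str]:
    """Run independent tool calls concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=max(len(tools), 1)) as pool:
        return list(pool.map(call_with_retry, tools))
```

Returning errors as strings rather than raising keeps every result in a form that can be fed back to the model as an observation.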

3. Feedback Loop Design

- Self-correction mechanism: Agent self-checks before outputting

- Incremental validation: instant checks after each step, not one final batch verification

- Error learning: failure cases converted into constraints for next execution
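The incremental-validation and error-learning points can be combined into one small loop: check each step as soon as it finishes, and turn any failure into a constraint for the next attempt. The sketch below assumes a hypothetical `step` callable that receives the accumulated constraints and a `check` callable that returns an error message or `None`; neither comes from LangChain's published material.

```python
from typing import Callable, Optional

Step = Callable[[list[str]], str]       # takes constraints, returns an output
Check = Callable[[str], Optional[str]]  # returns an error message, or None


def run_with_feedback(step: Step, check: Check,
                      max_rounds: int = 3) -> tuple[Optional[str], list[str]]:
    """Run a step, validate it immediately, and feed failures back in."""
    constraints: list[str] = []
    for _ in range(max_rounds):
        output = step(constraints)
        error = check(output)
        if error is None:
            return output, constraints
        # Failure as data: the error becomes a constraint on the next attempt.
        constraints.append(f"Avoid: {error}")
    return None, constraints
```

In a real agent, `step` would wrap a model call whose prompt includes the constraints, and `check` would be something cheap and deterministic such as an exit code or a test run.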

Industry Impact: Harness as Competitiveness

This data is reshaping the competitive logic of the AI Agent industry:

For Model Vendors

If the same model’s score can differ by more than 13 points depending on the harness, simply advertising “top benchmark scores” is losing meaning. Models are becoming commodities; the harness is where differentiation lives.

For Agent Frameworks

The competitive focus for LangChain, CrewAI, Dify, OpenClaw, Hermes Agent, and similar frameworks is shifting. Whoever designs a better harness can make “the same model” achieve top-tier results.

For Developers

You don’t need to wait for the next model release to improve your Agent’s capabilities — optimizing your harness design may deliver bigger performance leaps. This is the most actionable insight of 2026.

Core Principles of Harness Engineering

Based on LangChain’s data and industry practice, here are the harness design principles that come up most consistently:

| Principle | Description | Effect |
|---|---|---|
| Context-aware compression | Retain context by importance, not time | Reduces critical information loss |
| Tool pattern alignment | Harness structure aligned with model training environment | Unlocks pre-trained capabilities |
| Layered memory | Short-term detailed + mid-term summary + long-term index | Breaks context window limits |
| Failure as data | Error output converted into next constraint | Continuous self-improvement |
| Minimal intervention | Only intervene in model decisions when necessary | Preserves model reasoning ability |
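To make the first principle concrete, here is one way "retain by importance, not time" might look under a fixed budget. It is a sketch under stated assumptions: the `importance` score is hypothetical (a real harness would need some way to assign it), and the budget is counted in characters rather than tokens to keep the example self-contained.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    importance: float  # hypothetical score; higher means more critical


def compress_context(items: list[ContextItem],
                     budget_chars: int) -> list[ContextItem]:
    """Keep the most important items that fit, returned in original order."""
    # Consider items from most to least important.
    ranked = sorted(range(len(items)),
                    key=lambda i: items[i].importance, reverse=True)
    kept: set[int] = set()
    used = 0
    for i in ranked:
        size = len(items[i].text)
        if used + size <= budget_chars:
            kept.add(i)
            used += size
    # Restore chronological order so the model sees a coherent sequence.
    return [items[i] for i in sorted(kept)]
```

Compared with plain truncation, an old but critical item (for example, the original task statement) survives while a recent but low-value one (for example, a long directory listing) is dropped.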

Landscape Assessment

LangChain’s 13.7-point experiment is not an isolated result, but a microcosm of a trend:

The second half of 2026 will see AI Agent competition shift from a model parameter arms race to a harness architecture engineering race.

This opens a window of opportunity for smaller teams — you don’t need to train large models, you just need to design better harnesses. As LangChain demonstrated, a good harness can make a “non-top-tier” model achieve top-tier performance.

Action Recommendations

- If your Agent underperforms, don’t swap the model first — audit your harness design, optimizing context management, tool orchestration, and feedback loops one by one

- Focus on model-harness fit — different models have different tool-calling preferences; harnesses need targeted design

- Build a harness evaluation system — test your harness systematically like you test models, comparing different designs under the same model

- Consider open-source harness solutions — LangChain’s approach suggests harness patterns may become the next open-source battleground
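For the evaluation recommendation, the key is to hold the model and the task set fixed so that only the harness varies. A minimal sketch, assuming each harness can be wrapped as a hypothetical callable that takes a task and returns whether it was solved:

```python
from typing import Callable

Harness = Callable[[str], bool]  # takes a task, returns True if solved


def pass_rate(harness: Harness, tasks: list[str]) -> float:
    """Fraction of tasks the harness solves."""
    if not tasks:
        return 0.0
    return sum(harness(t) for t in tasks) / len(tasks)


def compare_harnesses(harnesses: dict[str, Harness],
                      tasks: list[str]) -> dict[str, float]:
    """Score every harness on the same tasks so only the harness varies."""
    return {name: pass_rate(h, tasks) for name, h in harnesses.items()}
```

In practice each callable would run the same underlying model through a different harness design, and the task list would be a fixed benchmark subset, which makes a comparison like 52.8% versus 66.5% attributable to the harness alone.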

The harness era has arrived. Models provide the capability ceiling; the harness determines how much of it you can reach.