A Shift That Is Already Happening

In May 2026, a post on social media sparked widespread discussion:

“AI model selection in 2026 has changed. It is no longer about ‘picking the strongest one’ but about ‘choosing the best fit for each task’: coding/bug fixing → Claude Opus 4.7; multi-step reasoning/Agent → GPT-5.5; long document analysis → DeepSeek V4-Pro (1 million tokens); high volume/cost saving → DeepSeek V4-Flash. In the multi-model routing era, knowing how to orchestrate models matters more than knowing how to use any single one.”

The post's core insight is that the model market has shifted from a “single champion” to a “scenario-based division of labor.”

Why Is This Shift Happening?

1. Capability Gap Shrinks, Price Gap Widens

When Kimi K2.6 and DeepSeek V4 Pro score within 6-8 points of GPT-5.5 on the Intelligence Index while costing only about one tenth as much, defaulting to “the most expensive model” is no longer a rational choice.

| Scenario | Recommended Model | Monthly Cost (Heavy Use) |
|---|---|---|
| Code generation/review | Claude Opus 4.7 | $150-300 |
| Complex reasoning chains | GPT-5.5 | $200-500 |
| Long document summarization | DeepSeek V4 Pro | $20-40 |
| Batch Agent tasks | DeepSeek V4 Flash | $5-15 |
| Chinese long context | Kimi K2.6 | $15-30 |

Running a full workflow of code review, document analysis, and batch processing on GPT-5.5 alone could cost $500+ per month, while multi-model routing can keep it under $100.

2. Chinese Models Have Matured Into Distinct Roles

In 2025, Chinese model vendors were still competing on “whose benchmark score is higher.” By 2026, each vendor has staked out a clearly differentiated position:

- Zhipu GLM-5.1: Stable performance in vertical scenarios such as invoice processing and structured data extraction; subscription at $80/month with unlimited calls

- Moonshot Kimi K2.6: 1 million token context plus an Agent Swarm architecture that runs 300 sub-Agents in parallel, well suited to large-scale Agent collaboration

- DeepSeek V4 Pro: 1.6T-parameter MoE with a million-token context, the best inference cost-performance, and now adapted to Huawei Ascend chips

- MiniMax M2.7: A self-evolution mechanism that gives it distinct advantages in Agent workflows

- Xiaomi MiMo V2.5 Pro: 1T-parameter MoE under the MIT open-source license, with no licensing barrier to commercial use

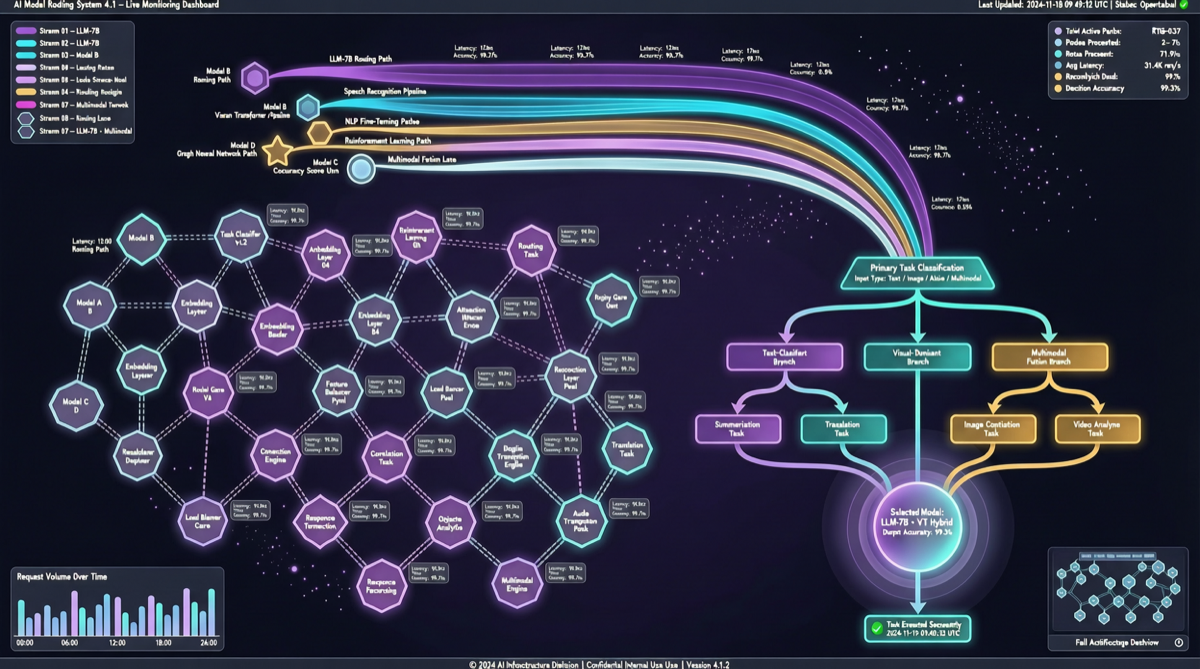

3. Agent Frameworks Make Multi-Model Orchestration Simple

The maturation of open-source frameworks like OpenClaw and Hermes Agent has turned multi-model routing from an engineering challenge into a configuration task. Developers specify the model for each task type in a configuration file, and the framework handles routing, fallback, and cost optimization automatically.
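As a rough illustration of what such a configuration file buys you, here is a minimal Python sketch of config-driven routing with fallback. It does not reproduce OpenClaw's or Hermes Agent's real configuration format: the `ROUTES` table, the model ID strings, and the `call_model` callable (standing in for a provider client) are all hypothetical.

```python
from typing import Callable

# Hypothetical routing config (not the format of any real framework):
# task type -> ordered model list; the first entry is the primary and
# the rest are fallbacks tried in order. Model IDs are placeholders.
ROUTES: dict[str, list[str]] = {
    "code_review": ["claude-opus-4.7", "deepseek-v4-pro"],
    "reasoning": ["gpt-5.5"],
    "long_document": ["deepseek-v4-pro", "kimi-k2.6"],
    "batch_agent": ["deepseek-v4-flash", "kimi-k2.6"],
}


class AllModelsFailed(RuntimeError):
    """Raised when the primary model and every fallback have failed."""


def route(task_type: str, prompt: str,
          call_model: Callable[[str, str], str]) -> tuple[str, str]:
    """Send prompt to the configured models in order; return (model, reply).

    call_model(model, prompt) stands in for a real provider client.
    An unknown task_type raises KeyError.
    """
    errors: list[str] = []
    for model in ROUTES[task_type]:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # timeout, rate limit, outage, ...
            errors.append(f"{model}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Usage with a fake client whose primary long-document model times out.
def fake_call(model: str, prompt: str) -> str:
    if model == "deepseek-v4-pro":
        raise TimeoutError("simulated timeout")
    return f"[{model}] handled {len(prompt)} chars"


print(route("long_document", "x" * 1000, fake_call))
# → ('kimi-k2.6', '[kimi-k2.6] handled 1000 chars')
```

Keeping the route table as plain data is what lets a framework swap models without code changes; cost optimization can sit on top of it, for example by ordering each list from cheapest to most capable.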

In Practice: A Typical Developer’s Daily Routing

9:00 AM — Code Review

├── Task: Review code quality for 15 PRs

├── Model: Claude Opus 4.7

├── Reason: Best code understanding depth, lowest false positive rate

└── Cost: ~$8

11:00 AM — Requirements Analysis

├── Task: Analyze 200-page product requirements document, extract key constraints

├── Model: DeepSeek V4 Pro (1 million token context)

├── Reason: Long document capability + low cost

└── Cost: ~$3

2:00 PM — Batch Agent Tasks

├── Task: Generate automated tests for 50 repositories

├── Model: DeepSeek V4 Flash

├── Reason: High throughput + free tier covers it

└── Cost: ~$0

4:00 PM — Complex Reasoning

├── Task: Design system architecture plan, requires multi-step reasoning

├── Model: GPT-5.5

├── Reason: Best coherence in multi-step reasoning chains

└── Cost: ~$12

8:00 PM — Chinese Content Generation

├── Task: Generate Chinese version of product documentation

├── Model: Kimi K2.6

├── Reason: Chinese context understanding + long context

└── Cost: ~$2

Daily total: ~$25

Single model (GPT-5.5) for equivalent tasks: ~$120+

Savings: approximately 80%

How to Build Your Own Multi-Model Routing?

Step 1: Inventory Your Task Types

| Task Type | Key Requirement | Suitable Models |
|---|---|---|
| Code generation/review | Code understanding depth | Claude Opus 4.7, DeepSeek V4 Pro |
| Multi-step reasoning | Reasoning chain coherence | GPT-5.5 |
| Long document processing | Large context window | DeepSeek V4 Pro, Kimi K2.6 |
| Batch Agent | Cost + throughput | DeepSeek V4 Flash, Kimi K2.6 |
| Chinese content | Chinese context | Kimi K2.6, GLM-5.1 |
| Daily conversation | Response speed | DeepSeek V4 Flash, GLM-5.1 |

Step 2: Choose a Routing Tool

- OpenClaw: Supports automatic multi-model switching, configurable fallback strategies

- Hermes Agent: Desktop-level Agent platform, natively supports multi-model routing

- LiteLLM: Open-source proxy layer that exposes many providers behind one OpenAI-compatible API, with configurable routing strategies and fallbacks

- Custom routing: A simple if-else chain or rules engine that dispatches requests by task type
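For the custom-routing option, a rule engine can be as small as an ordered list of (predicate, task type) pairs plus the task-to-model table from Step 1. This is a sketch under stated assumptions: the keyword patterns, the 50,000-token threshold, the ~4-characters-per-token estimate, and the model ID strings are illustrative placeholders, not tuned or official values.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Task type -> [primary, fallback, ...], following the Step 1 table.
# Model ID strings are placeholders, not official API model names.
MODEL_TABLE: dict[str, list[str]] = {
    "code": ["claude-opus-4.7", "deepseek-v4-pro"],
    "reasoning": ["gpt-5.5"],
    "long_document": ["deepseek-v4-pro", "kimi-k2.6"],
    "batch_agent": ["deepseek-v4-flash", "kimi-k2.6"],
    "chinese": ["kimi-k2.6", "glm-5.1"],
    "chat": ["deepseek-v4-flash", "glm-5.1"],
}

LONG_DOC_TOKENS = 50_000              # assumed threshold; tune for your workload
CJK = re.compile(r"[\u4e00-\u9fff]")  # CJK Unified Ideographs block
CODE_HINTS = re.compile(r"\bdef\b|\bclass\b|\bbug\b|\btraceback\b|pull request", re.I)
REASONING_HINTS = re.compile(r"\b(design|plan|architecture|step by step)\b", re.I)


@dataclass
class Request:
    prompt: str
    batch: bool = False  # caller flags bulk/offline jobs explicitly


def estimate_tokens(text: str) -> int:
    # Crude ~4-characters-per-token heuristic; swap in a real tokenizer.
    return len(text) // 4


# Ordered rules: the first predicate that matches decides the task type.
RULES: list[tuple[Callable[[Request], bool], str]] = [
    (lambda r: r.batch, "batch_agent"),
    (lambda r: estimate_tokens(r.prompt) > LONG_DOC_TOKENS, "long_document"),
    (lambda r: CJK.search(r.prompt) is not None, "chinese"),
    (lambda r: CODE_HINTS.search(r.prompt) is not None, "code"),
    (lambda r: REASONING_HINTS.search(r.prompt) is not None, "reasoning"),
]


def classify(req: Request) -> str:
    for predicate, task_type in RULES:
        if predicate(req):
            return task_type
    return "chat"  # default for anything no rule claims


def pick_models(req: Request) -> list[str]:
    """Primary model first, then fallbacks, for the request's task type."""
    return MODEL_TABLE[classify(req)]


print(classify(Request("Fix this bug in the parser")))   # → code
print(pick_models(Request("请把这份产品文档翻译成中文")))  # → ['kimi-k2.6', 'glm-5.1']
```

Rule order encodes priority: a very long Chinese document is routed as `long_document` because that rule fires first. Explicit task labels set by the caller are usually more reliable than keyword guessing, so rules like these work best as a default layer.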

Step 3: Monitor and Optimize

Multi-model routing is not a one-time configuration. Review the following weekly:

- Actual token consumption per model vs budget

- Task completion rate (did choosing the “cheaper” model lead to repeated retries?)

- User satisfaction feedback (in some scenarios, the capability gap is perceptible)
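The weekly review can start as a small aggregation over request logs. This sketch assumes a hypothetical log record (`CallRecord`) with the model used, tokens, cost, whether the task succeeded, and how many retries it took; the field names, budgets, and sample records are placeholders, not the schema or output of any particular router.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CallRecord:
    """One routed request, as a hypothetical request log might record it."""
    model: str
    tokens: int
    cost_usd: float
    succeeded: bool   # did the task finish with an acceptable result?
    retries: int = 0  # extra attempts this task needed


def weekly_report(records: list[CallRecord],
                  budgets_usd: dict[str, float]) -> dict[str, dict[str, float | None]]:
    """Per model: tokens, spend, share of budget used, completion rate, retries."""
    totals: dict[str, dict[str, float]] = defaultdict(
        lambda: {"calls": 0, "tokens": 0, "cost_usd": 0.0, "succeeded": 0, "retries": 0})
    for r in records:
        t = totals[r.model]
        t["calls"] += 1
        t["tokens"] += r.tokens
        t["cost_usd"] += r.cost_usd
        t["succeeded"] += int(r.succeeded)
        t["retries"] += r.retries

    report: dict[str, dict[str, float | None]] = {}
    for model, t in totals.items():
        budget = budgets_usd.get(model)
        report[model] = {
            "tokens": t["tokens"],
            "cost_usd": round(t["cost_usd"], 2),
            # > 1.0 means the model ran over budget; None if no budget is set
            "budget_used": t["cost_usd"] / budget if budget else None,
            "completion_rate": t["succeeded"] / t["calls"],
            # A "cheap" model with many retries may not be cheap after all
            "retries_per_call": t["retries"] / t["calls"],
        }
    return report


# Illustrative records only; not measured data.
records = [
    CallRecord("deepseek-v4-flash", 120_000, 0.0, True),
    CallRecord("deepseek-v4-flash", 90_000, 0.0, False, retries=2),
    CallRecord("claude-opus-4.7", 40_000, 8.0, True),
]
for model, stats in weekly_report(records, {"claude-opus-4.7": 50.0}).items():
    print(model, stats)
```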

Landscape Assessment

Multi-model routing is not a “money-saving trick” — it is a paradigm shift.

When the model market moves from “one champion takes all” to “multiple vendors cooperating by division of labor,” the biggest winner is not any single model vendor, but developers who know how to orchestrate models.

AI competitiveness in 2026 is no longer about what model you use, but whether you can choose the right model, at the right time, for the right task.

Action Items

- Individual developers: Start with DeepSeek V4 Flash’s free tier, then gradually add Claude or GPT for critical scenarios, which can keep monthly costs under $20

- Enterprise teams: Establish a model routing strategy document, defining default models, fallback models, and cost caps for each scenario

- Tool selection: Prioritize Agent frameworks that support multi-model routing (OpenClaw, Hermes Agent) to avoid being locked into a single vendor
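The enterprise routing strategy document can also be kept as data that the router checks before each call. In this minimal sketch the `ScenarioPolicy` class, `BudgetExceeded`, and the model ID strings are hypothetical; defaults and fallbacks follow the Step 1 table, the caps reuse the upper end of the monthly cost table, and refusing calls at the cap is only one possible policy (alerting or downgrading to a cheaper model are others).

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised once a scenario's monthly spend reaches its cap."""


@dataclass
class ScenarioPolicy:
    default_model: str
    fallback_model: str
    monthly_cap_usd: float
    spent_usd: float = 0.0  # running total for the current month

    def pick(self, default_unavailable: bool = False) -> str:
        """Return the model to call, refusing once the cap is reached."""
        if self.spent_usd >= self.monthly_cap_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f} of ${self.monthly_cap_usd:.2f} cap")
        return self.fallback_model if default_unavailable else self.default_model

    def record(self, cost_usd: float) -> None:
        """Add the cost of a completed call to this month's spend."""
        self.spent_usd += cost_usd


# Hypothetical strategy document expressed as data. Defaults and fallbacks
# follow the Step 1 table; caps reuse the upper end of the monthly cost table.
STRATEGY: dict[str, ScenarioPolicy] = {
    "code_review": ScenarioPolicy("claude-opus-4.7", "deepseek-v4-pro", 300.0),
    "long_document": ScenarioPolicy("deepseek-v4-pro", "kimi-k2.6", 40.0),
    "batch_agent": ScenarioPolicy("deepseek-v4-flash", "kimi-k2.6", 15.0),
}
```

Call `record()` with each call's cost; once spend reaches the cap, `pick()` raises `BudgetExceeded`, giving the team an explicit point to approve more budget instead of discovering the overrun on the invoice.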