Core Conclusion

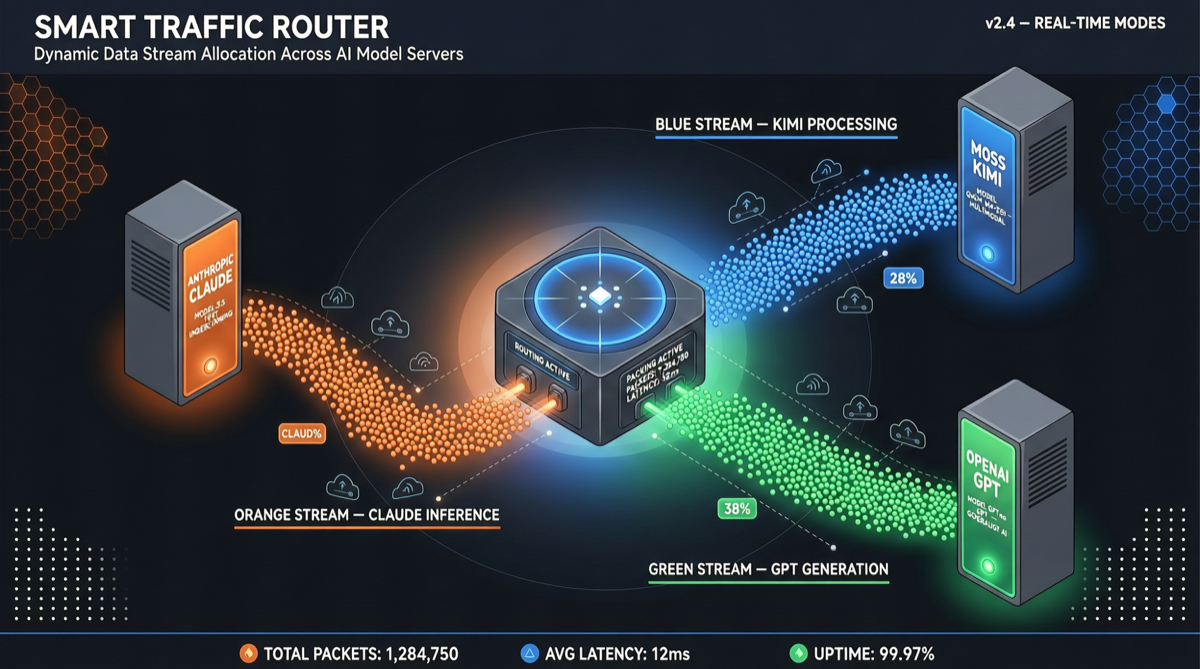

Multi-model routing is no longer theoretical — a developer has already validated its feasibility in a real production environment. By intelligently routing different tasks to the most suitable model, monthly API costs dropped from $500+ to under $100 while maintaining output quality.

This is not about settling for cheaper models. It is about using the right model for each job: Claude for code, Kimi for long documents, GPT for multi-step reasoning. Each task goes to the model with the best price-performance ratio for that kind of work.

Why Build a Router?

The Single-Model Trap

Most developers’ approach is “pick the strongest model and use it for everything.” This has three problems:

| Problem | Manifestation | Consequence |
|---|---|---|
| Overconsumption | Using Opus 4.7 for simple text classification | Spending 10x the money for 1x the work |
| Capability mismatch | Using GPT-5.5 for code generation | Quality inferior to Claude |
| Single dependency | Connecting to only one model’s API | One outage means total paralysis |

Core Routing Logic

Task arrives → Type identification → Capability need assessment → Model selection → Output → Quality check

↓ (if quality fails)

Upgrade to stronger model and retry

Actual Routing Strategy
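As a bridge from the flow under Core Routing Logic to the concrete rules, the sequence (identify the type, select a model, call it, check quality, escalate on failure) can be sketched as a single function. This is a minimal sketch, not the developer's actual code: `run_pipeline` and its four injected callables are hypothetical names, and the capability assessment step is folded into `select_models`.

```python
from typing import Callable, Tuple


def run_pipeline(
    prompt: str,
    classify: Callable[[str], str],
    select_models: Callable[[str], Tuple[str, str]],
    call_model: Callable[[str, str], str],
    passes_check: Callable[[str], bool],
) -> Tuple[str, str]:
    """Return (model_used, output) after at most one escalation."""
    task_type = classify(prompt)                  # type identification
    primary, stronger = select_models(task_type)  # capability assessment + selection
    output = call_model(primary, prompt)
    if passes_check(output):                      # quality check
        return primary, output
    # Quality failed: retry once on the stronger model.
    return stronger, call_model(stronger, prompt)
```

Passing the steps in as callables keeps the flow testable without any real API client: each stage can be replaced by a stub.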

This Developer’s Routing Rules

| Task Type | Primary Model | Fallback Model | Reason |
|---|---|---|---|
| Code generation/Debug | Claude Opus 4.7 | GPT-5.5 | Claude leads in coding ability |
| Long document analysis | Kimi K2.6 | DeepSeek V4-Pro | Kimi excels in long context understanding |
| Multi-step reasoning/Agent | GPT-5.5 | Claude Opus 4.7 | GPT has stronger tool calling and planning |
| Simple chat/Translation | Kimi K2.6 (free) | Qwen3.6-Plus | Lowest cost option |
| Creative writing | Claude Opus 4.7 | GPT-5.5 | Claude’s writing style is more natural |
| Data analysis | DeepSeek V4-Pro | GPT-5.5 | Best price-performance for long context analysis |
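The table above maps directly onto a small lookup structure. The sketch below encodes all six rows. The keys `creative` and `data_analysis` and the `Route` and `lookup` names are assumptions added for illustration, and the model identifier strings follow the article's own code and may not match any provider's real model IDs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    primary: str
    fallback: str
    reason: str


# One entry per row of the routing table.
ROUTES = {
    "code": Route("claude-opus-4-7", "gpt-5.5", "Claude leads in coding ability"),
    "long_context": Route("kimi-k2.6", "deepseek-v4-pro", "Kimi excels in long context understanding"),
    "reasoning": Route("gpt-5.5", "claude-opus-4-7", "GPT has stronger tool calling and planning"),
    "simple": Route("kimi-k2.6", "qwen3.6-plus", "Lowest cost option"),
    "creative": Route("claude-opus-4-7", "gpt-5.5", "Claude's writing style is more natural"),
    "data_analysis": Route("deepseek-v4-pro", "gpt-5.5", "Best price-performance for long context analysis"),
}


def lookup(task_type: str) -> Route:
    """Return the route for a task type, defaulting to the cheapest rule."""
    return ROUTES.get(task_type, ROUTES["simple"])
```

Keeping the reason next to each rule makes it easier to revisit a routing decision when model capabilities or prices change.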

Cost Comparison

Assuming 10,000 tasks processed monthly:

| Approach | Monthly Cost | Average Quality |
|---|---|---|
| All Claude Opus 4.7 | ~$500 | 95/100 |
| All GPT-5.5 | ~$400 | 92/100 |
| Multi-model routing | ~$85 | 94/100 |

Key numbers: The routing approach costs only 17% of the single Claude approach, with nearly identical quality. Savings come from:

- 40% of tasks (simple chat/translation) routed to free/low-cost models

- 30% of tasks (long documents) routed to more cost-effective Kimi

- Only the remaining 30% of tasks (high-value work) used Opus 4.7
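The arithmetic behind these figures can be checked in a few lines. The constants below are taken from the cost table. The `blended_cost` helper is a hypothetical addition for plugging in your own task mix and per-task prices, since the article does not publish per-task costs.

```python
from typing import Dict, Tuple

TASKS_PER_MONTH = 10_000
ALL_CLAUDE_USD = 500.0   # "~$500" row of the cost table
ROUTED_USD = 85.0        # "~$85" row of the cost table

# Routed spend as a fraction of the all-Claude spend: 85 / 500 = 0.17
cost_ratio = ROUTED_USD / ALL_CLAUDE_USD

# Implied average cost per task under each approach.
per_task_claude = ALL_CLAUDE_USD / TASKS_PER_MONTH   # 0.05 USD
per_task_routed = ROUTED_USD / TASKS_PER_MONTH       # 0.0085 USD


def blended_cost(tasks: int, mix: Dict[str, Tuple[float, float]]) -> float:
    """Monthly cost for a task mix.

    `mix` maps a label to (share_of_tasks, usd_per_task); shares must sum to 1.
    """
    total_share = sum(share for share, _ in mix.values())
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("task shares must sum to 1")
    return tasks * sum(share * cost for share, cost in mix.values())
```

The implied averages show where the savings come from: the routed setup averages under one cent per task, against five cents when everything goes to Opus 4.7.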

How to Build Your Own Router

Minimum Viable Version

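```python
class ModelRouter:
    # Task type -> primary and fallback model identifiers.
    ROUTING_RULES = {
        "code": {"primary": "claude-opus-4-7", "fallback": "gpt-5.5"},
        "long_context": {"primary": "kimi-k2.6", "fallback": "deepseek-v4-pro"},
        "reasoning": {"primary": "gpt-5.5", "fallback": "claude-opus-4-7"},
        "simple": {"primary": "kimi-k2.6", "fallback": "qwen3.6-plus"},
    }

    def route(self, task_type: str, prompt: str, budget: str = "normal"):
        # Unknown task types use the "simple" rule.
        rule = self.ROUTING_RULES.get(task_type, self.ROUTING_RULES["simple"])
        # Any budget other than "normal" selects the fallback model.
        model = rule["primary"] if budget == "normal" else rule["fallback"]
        return self.call_model(model, prompt)

    def call_model(self, model: str, prompt: str) -> str:
        # The provider-specific API call goes here; it is not shown in the original.
        raise NotImplementedError
```

Advanced: Automatic Quality Detection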
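The fallback wrapper in this section calls a `quality_check` whose implementation is left open (a simple length check or an LLM evaluation). A minimal heuristic version might look like the sketch below. The 20-character threshold and the refusal phrases are illustrative assumptions, not values from the original setup.

```python
# Assumed examples of phrases that suggest the model declined the task.
REFUSAL_MARKERS = ("i can't help", "i cannot help", "as an ai")


def quality_check(result: str, min_chars: int = 20) -> bool:
    """Cheap heuristic gate: reject empty, very short, or refusal-like output."""
    text = (result or "").strip()
    if len(text) < min_chars:
        return False
    lowered = text.lower()
    return not any(marker in lowered for marker in REFUSAL_MARKERS)
```

To use it from the router, it can be attached to `ModelRouter` as a static method. A heuristic like this only catches obvious failures. Judging correctness would need an LLM-based or task-specific evaluation.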

```python
    # Method of ModelRouter
    def execute_with_fallback(self, task_type: str, prompt: str):
        # Try the primary model first.
        result = self.route(task_type, prompt)
        # Quality check (can be a simple length check or an LLM evaluation).
        if not self.quality_check(result):
            # Check failed: retry on the fallback model, using the same
            # default rule as route() so unknown task types do not raise KeyError.
            rule = self.ROUTING_RULES.get(task_type, self.ROUTING_RULES["simple"])
            result = self.call_model(rule["fallback"], prompt)
        return result
```

Automatic Task Type Detection

The ideal router doesn’t need manual task type specification — it should auto-detect:

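```python
import re

CODE_PATTERNS = [r'```', r'\bdef ', r'\bfunction ', r'\bclass ', r'\bimport ']
REASONING_PATTERNS = [r'\banaly[sz]e', r'\breason', r'\bcompare', r'\bevaluate', r'\bwhy\b']


def detect_task_type(prompt: str) -> str:
    # Code markers win over every other signal, including length.
    if any(re.search(p, prompt) for p in CODE_PATTERNS):
        return "code"
    # Length is measured in characters, not tokens.
    if len(prompt) > 5000:
        return "long_context"
    # Case-insensitive so a sentence-initial "Why" or "Compare" still matches.
    if any(re.search(p, prompt, re.IGNORECASE) for p in REASONING_PATTERNS):
        return "reasoning"
    return "simple"
```

Selection Recommendations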

When to Use Routing

- ✅ High API usage: Teams spending over $200/month

- ✅ Diverse task types: Mix of code, copywriting, and analysis

- ✅ Some quality tolerance: Not every task needs optimal quality

- ✅ Engineering capability: Can maintain routing logic and fallback mechanisms

When NOT to Use Routing

- ❌ Low API usage: Under $50/month, savings are negligible

- ❌ Extreme quality requirements: Medical and financial scenarios cannot tolerate any quality fluctuation

- ❌ Strict compliance requirements: Some industries can’t have data flow through multiple providers

2026 Trend Judgment

Multi-model routing is evolving from “individual developers’ cost-saving trick” to “enterprise standard architecture.” As model capability gaps narrow (Kimi K2.6 approaching GPT-5.5, DeepSeek V4 approaching frontier models), the logic of model selection will completely shift from “who’s strongest” to “who’s best for this task.”

Next evolution directions:

- Automated routing: No manual rules — let AI decide which model to use

- Dynamic pricing awareness: Router reads real-time API price changes across models

- Quality closed loop: Auto-evaluate quality after each call, continuously optimizing routing strategy
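Two of these directions, pricing awareness and the quality closed loop, can be combined in a small selector that picks the cheapest model whose observed quality stays above a threshold. This is a speculative sketch, not something the article's developer describes building: `AdaptiveSelector`, its method names, the 0.8 threshold, and the 0-to-1 score scale are all assumptions, and prices must be supplied by the caller.

```python
from collections import defaultdict
from typing import Dict


class AdaptiveSelector:
    """Pick the cheapest model whose running quality meets a threshold."""

    def __init__(self, prices: Dict[str, float], min_quality: float = 0.8):
        self.prices = dict(prices)        # model -> USD per task, caller-supplied
        self.min_quality = min_quality
        self._scores = defaultdict(list)  # model -> quality scores in [0, 1]

    def update_price(self, model: str, usd_per_task: float) -> None:
        """Record a price change, for example from a periodic price refresh."""
        self.prices[model] = usd_per_task

    def record(self, model: str, score: float) -> None:
        """Feed back the evaluated quality of one completed call."""
        self._scores[model].append(score)

    def average_quality(self, model: str) -> float:
        scores = self._scores[model]
        # Untested models get the benefit of the doubt so they can be tried.
        return sum(scores) / len(scores) if scores else 1.0

    def choose(self) -> str:
        eligible = [m for m in self.prices if self.average_quality(m) >= self.min_quality]
        if eligible:
            return min(eligible, key=lambda m: self.prices[m])
        # Nothing meets the threshold: fall back to the best-scoring model.
        return max(self.prices, key=self.average_quality)
```

A plain running average treats old and recent calls equally. A production version would more likely weight recent scores or track quality per task type.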