The traditional AI inference stack rests on a fatal assumption: every request is independent. The agent era shatters that assumption.

The Pain Point

Typical behavior of an agent coding session:

Traditional inference assumption:

Request 1 → Compute → Response 1

Request 2 → Compute → Response 2

Agent coding session reality:

Session: Debugging task in same codebase

├─ Request 1: Read file A

├─ Request 2: Read file A + B (recomputes file A context)

├─ Request 3: Run test + read A + B + C (recomputes again...)

└─ ... 200+ calls, massive token budget wasted on redundant computation

An agent coding session may generate hundreds of API calls, most of them carrying context that was already computed in previous calls. Traditional inference stacks ignore this redundancy.
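To make the redundancy concrete, here is a minimal sketch of the arithmetic for the three-request session above. The per-file token counts are made-up illustrative numbers, and `prefill_tokens` is a hypothetical helper, not part of Dynamo or any real library. It only compares how many prompt tokens must be processed with and without reusing a previously computed prefix.

```python
from typing import Dict, List

# Hypothetical token counts per file; illustrative numbers only.
FILE_TOKENS: Dict[str, int] = {"A": 4000, "B": 3000, "C": 2000}

# Each request lists the files whose content appears in its prompt, in order.
SESSION: List[List[str]] = [["A"], ["A", "B"], ["A", "B", "C"]]


def prefill_tokens(session: List[List[str]],
                   file_tokens: Dict[str, int],
                   reuse_prefix: bool) -> int:
    """Return the total number of prompt tokens the model must process."""
    total = 0
    cached: List[str] = []  # simplification: only the previous prompt is cached
    for files in session:
        shared = 0
        if reuse_prefix:
            # Count leading files that match the cached prompt exactly.
            for old, new in zip(cached, files):
                if old != new:
                    break
                shared += 1
        # Only the part after the shared prefix needs fresh computation.
        total += sum(file_tokens[f] for f in files[shared:])
        cached = files
    return total


without_reuse = prefill_tokens(SESSION, FILE_TOKENS, reuse_prefix=False)
with_reuse = prefill_tokens(SESSION, FILE_TOKENS, reuse_prefix=True)
print(without_reuse, with_reuse)
```

Under these assumed sizes, the session processes 20,000 prompt tokens without reuse and 9,000 with it. The gap widens as the session grows, because every new request repeats everything before it.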

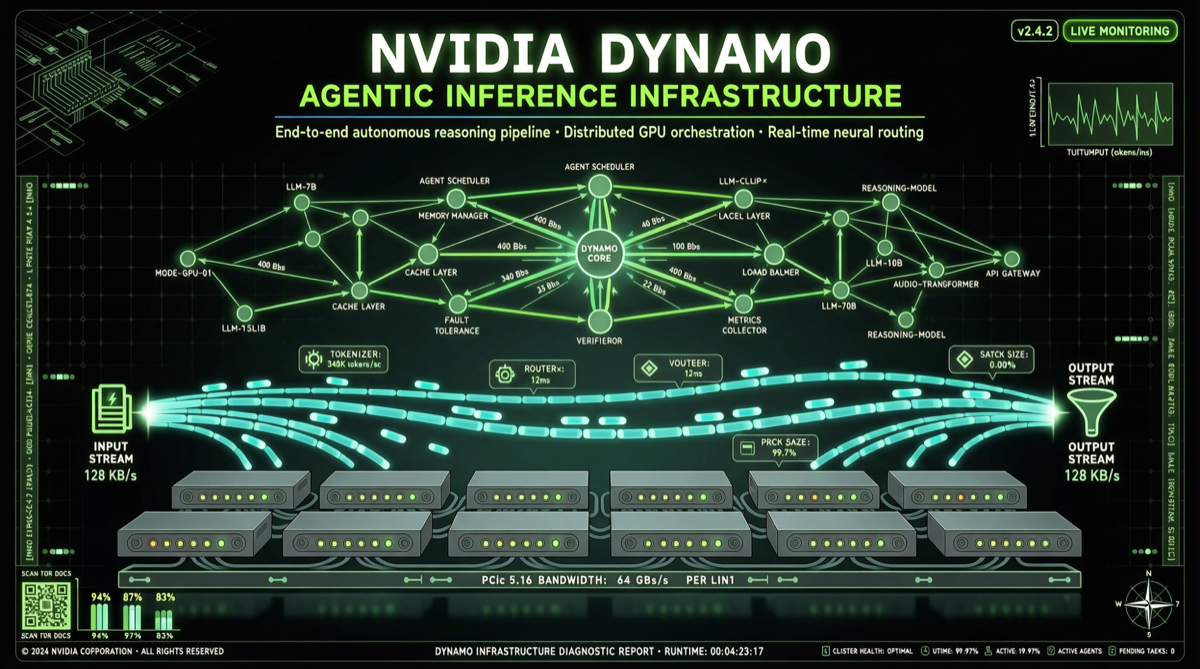

What Dynamo Does

Core Capabilities

| Capability | Problem Solved | Effect |
|---|---|---|
| KV-Aware Routing | Agent requests carry overlapping context | Avoid redundant KV cache computation |
| Context Reuse | Repeated tokens in same session | Significantly reduce per-token cost |
| Smart Scheduling | Multiple concurrent agent requests to GPUs | Improve GPU utilization |

2.7x Performance Improvement

At Google Cloud Next, NVIDIA demonstrated Dynamo achieving a 2.7x performance improvement on the same silicon through:

- Eliminating redundant KV cache computation in agent sessions

- Smarter request routing, sending requests with similar context to the same GPU

- Optimized batch processing for agent scenarios
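The routing idea in the list above can be sketched as follows. This is a toy illustration under stated assumptions, not Dynamo's actual router or API: `Worker`, `cached_overlap`, and `route` are hypothetical names, prompts are plain lists of token IDs, and the policy is simply "longest cached prefix wins, ties go to the less loaded worker".

```python
from dataclasses import dataclass, field
from typing import List, Sequence


@dataclass
class Worker:
    """Hypothetical GPU worker that remembers which prompts it has processed."""
    name: str
    active_requests: int = 0
    cached_prefixes: List[List[int]] = field(default_factory=list)


def shared_prefix_len(a: Sequence[int], b: Sequence[int]) -> int:
    """Length of the common leading run of tokens in a and b."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def cached_overlap(worker: Worker, tokens: Sequence[int]) -> int:
    """Best prefix match between the request and anything the worker cached."""
    return max((shared_prefix_len(p, tokens) for p in worker.cached_prefixes),
               default=0)


def route(workers: List[Worker], tokens: Sequence[int]) -> Worker:
    """Pick the worker with the most reusable context; break ties by load."""
    best = max(workers,
               key=lambda w: (cached_overlap(w, tokens), -w.active_requests))
    # A real router would also decrement the load when a request finishes
    # and evict old cache entries; both are omitted in this sketch.
    best.active_requests += 1
    best.cached_prefixes.append(list(tokens))
    return best


workers = [Worker("gpu-0"), Worker("gpu-1")]
first = route(workers, [1, 2, 3])         # no cache anywhere, first worker wins
second = route(workers, [1, 2, 3, 4, 5])  # shares a prefix with the first request
third = route(workers, [9, 9])            # no overlap, goes to the idle worker
print(first.name, second.name, third.name)
```

The second request follows the first to the same worker because its prompt extends a cached prefix, while the unrelated third request falls back to load balancing. A production router would likely weigh overlap against load rather than rank them strictly.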

Why This Matters

Inference Cost is the Agent Scaling Bottleneck

API revenue from agent coding tools is growing faster than expected:

- Codex revenue doubled within a week of GPT-5.5 launch

- API revenue is growing 2x faster than after any prior release

- Agent tool token consumption is 10-100x that of traditional chat apps

If inference costs keep growing in step with agent usage instead of being driven down by context reuse, the sustainability of the agent business model is in question.

Redefining “Inference”

Traditional inference (2023-2025):

Input → Model → Output

Focus: Latency, throughput

Agentic inference (2026):

Input → [Session state + Context history + Tool calls] → Model → [Output + State update]

Focus: Context reuse, state management, multi-request coordination

Dynamo is the first project to redesign the entire inference stack around “agentic inference” assumptions rather than patching an existing architecture.
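The difference between the two request shapes can be sketched with a small session object. Everything here is hypothetical and only illustrates the idea of carrying state across calls: `AgentSession`, `build_request`, and `commit` are invented names, and context is modeled as a list of strings rather than tokens.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AgentSession:
    """Hypothetical per-session state kept between model calls."""
    session_id: str
    history: List[str] = field(default_factory=list)  # context seen so far

    def build_request(self, new_items: List[str]) -> Tuple[List[str], List[str]]:
        """Split the next prompt into already-seen context and new context."""
        reusable = list(self.history)  # candidates for KV cache reuse
        return reusable, list(new_items)

    def commit(self, new_items: List[str], output: str) -> None:
        """State update: the new input and the model output join the history."""
        self.history.extend(new_items)
        self.history.append(output)


session = AgentSession("debug-1")

reusable_1, new_1 = session.build_request(["read file A"])
session.commit(new_1, "contents of A")

reusable_2, new_2 = session.build_request(["run tests"])
print(len(reusable_1), len(reusable_2))
```

On the first call nothing is reusable. On the second, the whole first exchange is known context, which is exactly the part a stateless stack would recompute.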

Action Recommendations

If You’re Running Agent Coding Tools

- Evaluate Dynamo’s optimization potential: focus on KV cache hit rates

- Test before and after: compare token consumption and latency for the same agent workload with and without Dynamo
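A before/after comparison along these lines might be summarized as in the sketch below. It assumes you can log, per request, the prompt size, how many prompt tokens were served from the KV cache, and the latency. The `RequestStats` fields and both helper functions are hypothetical, and the sample numbers are invented purely to exercise the code.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class RequestStats:
    """Hypothetical per-request log record."""
    prompt_tokens: int   # total tokens in the prompt
    cached_tokens: int   # prompt tokens served from the KV cache
    latency_ms: float    # end-to-end latency of the request


def kv_cache_hit_rate(stats: Iterable[RequestStats]) -> float:
    """Fraction of all prompt tokens that were served from cache."""
    stats = list(stats)
    total = sum(s.prompt_tokens for s in stats)
    if total == 0:
        return 0.0
    return sum(s.cached_tokens for s in stats) / total


def summarize(stats: Iterable[RequestStats]) -> Dict[str, float]:
    """Aggregate the three numbers worth comparing between two runs."""
    stats = list(stats)
    computed = sum(s.prompt_tokens - s.cached_tokens for s in stats)
    mean_latency = sum(s.latency_ms for s in stats) / len(stats) if stats else 0.0
    return {
        "hit_rate": kv_cache_hit_rate(stats),
        "computed_prompt_tokens": float(computed),
        "mean_latency_ms": mean_latency,
    }


# Invented sample data: the same two-request workload, run twice.
baseline = [RequestStats(1000, 0, 200.0), RequestStats(2000, 0, 400.0)]
optimized = [RequestStats(1000, 0, 200.0), RequestStats(2000, 1000, 250.0)]

print(summarize(baseline))
print(summarize(optimized))
```

Replaying an identical workload in both runs matters here: hit rates depend heavily on how much context consecutive requests share, so numbers from different workloads are not comparable.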

If You’re Building AI Applications

- Include inference optimization in architecture design: the inference cost model of agent apps differs completely from that of traditional apps

- Focus on KV cache strategy: it may be the most important inference optimization direction in the agent era

Summary

NVIDIA Dynamo’s significance lies not in doing something new (KV cache reuse and smart routing aren’t new concepts), but in being the first to systematically organize these optimizations under the “agentic inference” paradigm. As AI apps evolve from chatbots to autonomous agents, inference infrastructure must evolve with them.

Sources: