Key Takeaways

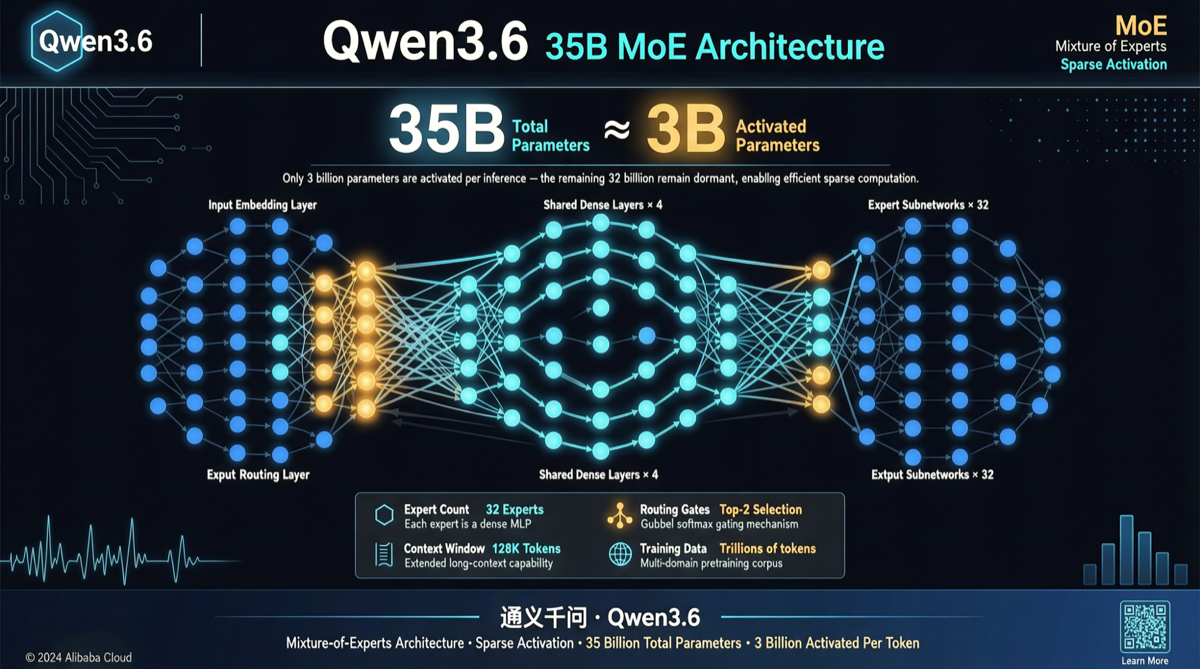

The Qwen team has published Qwen3.6-35B-A3B on Hugging Face, the first open-source variant of the Qwen3.6 series. It has 35B total parameters, of which only 3B are activated per token during inference. The model uses a hybrid architecture that combines a 256-expert MoE with Gated DeltaNet, is released under the Apache 2.0 license, and has a native 262K context window that can be extended to 1 million tokens.

| Dimension | Qwen3.6-35B-A3B |
|---|---|
| Total Params | 35B |
| Activated Params | 3B |
| Expert Count | 256 (8 routed + 1 shared activated) |
| Context | 262K native, expandable to 1M |
| License | Apache 2.0 |
| Architecture | Gated DeltaNet → MoE + Gated Attention → MoE |
| Multimodal | Built-in Vision Encoder (Image-Text-to-Text) |

What Happened

Architecture: Hybrid Gated DeltaNet and MoE Design

The core innovation of Qwen3.6-35B-A3B lies in its hybrid attention layout:

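```
10 × [
    3 × (Gated DeltaNet → MoE)
    1 × (Gated Attention → MoE)
]
```

This is not simple MoE stacking. The model alternates linear attention (Gated DeltaNet) with global attention (Gated Attention): every 3 DeltaNet layers are followed by 1 global attention layer, and each of these token mixers feeds an MoE feed-forward block, giving 10 × 4 = 40 layers in total. DeltaNet layers compress the context into a fixed-size recurrent state, so their cost grows only linearly with sequence length, while the periodic global attention layers give every token exact access to the full context, so long-distance information is not attenuated by that compression.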
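To make the layout concrete, the short sketch below expands it into a per-layer list. The function name and the layer labels are hypothetical; only the 10 × (3 + 1) pattern comes from the description above. It also shows that global attention sits at every fourth layer, which matters for memory because only those layers keep a per-token KV cache that grows with context length.

```python
# Sketch: expand the documented layout 10 × [3 × (Gated DeltaNet → MoE), 1 × (Gated Attention → MoE)]
# into a flat per-layer list. The labels are illustrative, not config keys from the model.


def build_layer_schedule(num_blocks=10, deltanet_per_block=3, attention_per_block=1):
    """Return the token-mixer type of each layer; every layer is followed by an MoE FFN."""
    block = ["gated_deltanet"] * deltanet_per_block + ["gated_attention"] * attention_per_block
    return block * num_blocks


schedule = build_layer_schedule()
attention_layers = [i for i, kind in enumerate(schedule) if kind == "gated_attention"]

print(len(schedule))          # 40 layers in total
print(len(attention_layers))  # 10 global attention layers
print(attention_layers[:3])   # [3, 7, 11]: every 4th layer (0-indexed)
```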

Specific parameters:

- 40 layers, hidden dimension 2048

- Gated DeltaNet: 32 V heads + 16 QK heads, head dimension 128

- Gated Attention: 16 Q heads + 2 KV heads (GQA), head dimension 256

- MoE: 256 experts, activating 8 routed experts + 1 shared expert per token, expert intermediate dimension 512

- Vocabulary size: 248,320 (after padding)
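As a sanity check on the 35B/3B split, the expert hyperparameters above can be turned into a rough parameter count. This sketch assumes each expert is a bias-free SwiGLU FFN (gate, up and down projections) and that the shared expert is the same size as a routed expert; neither detail is stated here, so treat the results as estimates.

```python
# Rough MoE expert parameter count from the listed hyperparameters.
# Assumptions (not stated in the text): each expert is a bias-free SwiGLU FFN with
# gate, up and down projections, and the shared expert is the same size as a routed one.

HIDDEN = 2048
EXPERT_INTERMEDIATE = 512
NUM_LAYERS = 40
NUM_ROUTED_EXPERTS = 256
EXPERTS_PER_TOKEN = 8 + 1  # 8 routed + 1 shared


def swiglu_expert_params(hidden, intermediate):
    # gate_proj and up_proj map hidden -> intermediate, down_proj maps intermediate -> hidden
    return 3 * hidden * intermediate


per_expert = swiglu_expert_params(HIDDEN, EXPERT_INTERMEDIATE)
all_routed = per_expert * NUM_ROUTED_EXPERTS * NUM_LAYERS
used_per_token = per_expert * EXPERTS_PER_TOKEN * NUM_LAYERS

print(f"{per_expert:,} params per expert")            # 3,145,728 params per expert
print(f"{all_routed / 1e9:.1f}B in routed experts")   # 32.2B in routed experts
print(f"{used_per_token / 1e9:.2f}B used per token")  # 1.13B used per token
```

Under these assumptions the routed experts hold roughly 32B of the 35B total, while the 9 experts used per token contribute only about 1.1B of the 3B activated. The remainder comes from the embeddings (248,320 × 2048 ≈ 0.51B), the attention and DeltaNet projections, and other components.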

Inference Efficiency: What 3B Activated Params Means

Activating only 3B parameters per token puts Qwen3.6-35B-A3B among the open-source MoE models with the fewest activated parameters. Comparison:

| Model | Total Params | Activated Params | Activation Ratio |
|---|---|---|---|
| Qwen3.6-35B-A3B | 35B | 3B | 8.6% |
| DeepSeek V4 | 1.6T | 37B | 2.3% |
| Ling-2.6-Flash | 104B | 7.4B | 7.1% |
| Kimi K2.6 | ~1T | ~32B | 3.2% |

Qwen3.6-35B-A3B's absolute activated parameter count (3B) is far lower than that of the other models listed, even though its activation ratio (8.6%) is the highest. In practice this means:

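- Single-card deployment: after INT4 quantization, the activated portion needs only ~1.5-2GB, but all 35B weights (~17.5GB at INT4) still have to be held in memory, which can fit on a single 24GB consumer GPU

- Low-latency inference: per-token compute is about 9× lower than for a 27B dense model such as Qwen3.6-27B (3B vs. 27B activated), so decoding can be several times faster, depending on memory bandwidth and MoE kernel efficiency

- Multi-instance concurrency: at INT4, multiple instances can run simultaneously on a single 80GB A100, which suits high-throughput scenarios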
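The gap between the "activated portion" and what must actually be resident in memory is easy to quantify. The sketch below is plain arithmetic under stated assumptions: 2, 1 and 0.5 bytes per parameter for BF16, INT8 and INT4, counting weights only, with no quantization scales, KV cache, activations or runtime overhead.

```python
# Rough weight-memory estimate for Qwen3.6-35B-A3B at different precisions.
# Assumptions: 35B total / 3B activated params (from the text), weights only,
# no quantization scales, KV cache, activations or framework overhead.

TOTAL_PARAMS = 35e9
ACTIVE_PARAMS = 3e9
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(num_params, dtype):
    """Weight storage in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in BYTES_PER_PARAM:
    resident = weight_gb(TOTAL_PARAMS, dtype)
    per_token = weight_gb(ACTIVE_PARAMS, dtype)
    print(f"{dtype}: {resident:.1f} GB resident, {per_token:.1f} GB read per token")
# bf16: 70.0 GB resident, 6.0 GB read per token
# int8: 35.0 GB resident, 3.0 GB read per token
# int4: 17.5 GB resident, 1.5 GB read per token
```

The resident figure is what has to fit on the card; the per-token figure is roughly how many weight bytes a single decoding step reads, which drives latency when generation is memory-bandwidth bound. With batching, different tokens route to different experts, so more of the 35B is touched per step.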

Native Multimodal Support

Unlike the text-only Qwen3.6-27B, Qwen3.6-35B-A3B is an Image-Text-to-Text architecture with a built-in Vision Encoder. This means it can directly process image-text mixed inputs without requiring an external vision model. Combined with the 262K native context, it suits complex understanding tasks involving long documents with embedded images.
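Built-in vision means a single prompt can interleave image and text parts. The sketch below builds such a message in the content-parts format that Hugging Face `transformers` uses for image-text-to-text chat templates. That Qwen3.6-35B-A3B's processor accepts exactly this shape is an assumption, the `image_text_message` helper is hypothetical, and the example.com URL is a placeholder.

```python
# Sketch of a mixed image-text chat message in the Hugging Face multimodal chat format
# ({"type": "image", ...} / {"type": "text", ...} content parts). Whether this model's
# processor accepts exactly this shape is an assumption; check the model card.


def image_text_message(image_url, question):
    """Hypothetical helper: one user turn containing an image followed by a question."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "url": image_url},
                {"type": "text", "text": question},
            ],
        }
    ]


messages = image_text_message("https://example.com/invoice.png", "What is the total amount due?")
# With a loaded processor, these messages would typically be passed to
# processor.apply_chat_template(messages, add_generation_prompt=True, tokenize=True,
#                               return_dict=True, return_tensors="pt")
print([part["type"] for part in messages[0]["content"]])  # ['image', 'text']
```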

Two Key Upgrades in the Qwen3.6 Series

The official blog highlights two core improvement directions:

- Agentic Coding Enhancement: Frontend workflow and repository-level reasoning capabilities are significantly improved, meaning longer and more stable tool-calling chains in code Agent scenarios

- Thinking Preservation: A new option to retain reasoning context from historical messages, reducing redundant inference overhead in iterative development — especially critical for multi-turn interactive Agent workflows
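Conceptually, the option changes what the next request's history contains. The following is a hypothetical sketch, not Qwen's API: the `turns` schema, the `build_history` helper and the `<think>` wrapping are assumptions chosen for illustration. The real switch lives in the model's chat template, so check the model card for the actual parameter.

```python
# Hypothetical sketch: how preserving reasoning changes the multi-turn history.
# The turn schema and the <think> wrapping are assumptions, not Qwen's documented API.


def build_history(turns, preserve_thinking=False):
    """turns: list of dicts with 'user', 'reasoning' and 'answer' keys (hypothetical schema)."""
    messages = []
    for turn in turns:
        messages.append({"role": "user", "content": turn["user"]})
        content = turn["answer"]
        if preserve_thinking and turn.get("reasoning"):
            # Keep earlier reasoning so the next turn can reuse it instead of re-deriving it
            content = f"<think>\n{turn['reasoning']}\n</think>\n\n{content}"
        messages.append({"role": "assistant", "content": content})
    return messages


turns = [{
    "user": "Split utils.py into smaller modules.",
    "reasoning": "utils.py mixes file I/O with parsing, so separate the two.",
    "answer": "Created io_utils.py and parsing.py.",
}]
print(build_history(turns)[1]["content"])
print(build_history(turns, preserve_thinking=True)[1]["content"])
```

Without preservation the model only sees its final answers and must rebuild its reasoning each turn; with it, the earlier chain of thought stays in context at the cost of a longer prompt.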

Why It Matters

1. Filling the MoE Gap in the Qwen3.6 Lineup

So far, the Qwen3.6 series has mainly consisted of dense models (such as the 27B). The 35B-A3B is its first MoE variant, completing a critical piece of the product line:

- 27B dense: For scenarios that don’t need MoE complexity and prioritize stability

- 35B-A3B MoE: Only 3B activated, performance approaching much larger dense models, ideal for cost-sensitive high-concurrency scenarios

- Larger scale: More MoE variants may follow

2. Consumer GPU Friendly

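With only 3B activated params and a 2048 hidden dimension, per-token compute is small; the practical constraint is storing all 35B weights. Deployment on consumer GPUs:

```
# RTX 4090 (24GB): all 35B weights at INT4 take ~17.5GB, so the model can fit on one card
# Only the ~3B activated params (~1.5-2GB at INT4) are read per token, keeping decoding fast
# The remaining VRAM holds the KV cache, which only the 10 global attention layers need, supporting long context
```

This means individual developers and small teams can deploy a multimodal MoE model at low cost without relying on cloud APIs.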
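How much VRAM the KV cache needs follows from the attention parameters listed earlier. This sketch assumes the 10 Gated Attention layers are the only ones with a per-token cache, 2 KV heads of dimension 256, and an unquantized BF16 cache; the Gated DeltaNet layers keep a fixed-size state instead, which is not counted here.

```python
# KV-cache estimate for the global attention layers only. Assumptions: 10 Gated Attention
# layers, 2 KV heads of dimension 256 (from the parameter list), a BF16 cache (2 bytes),
# no cache quantization. Gated DeltaNet state does not grow with context and is excluded.

ATTENTION_LAYERS = 10
KV_HEADS = 2
HEAD_DIM = 256
BYTES_PER_VALUE = 2  # BF16


def kv_cache_bytes(num_tokens):
    # Factor 2: one key and one value vector per KV head, per layer, per token
    return 2 * ATTENTION_LAYERS * KV_HEADS * HEAD_DIM * BYTES_PER_VALUE * num_tokens


print(kv_cache_bytes(1))                # 20480 bytes (20 KiB) per token
print(kv_cache_bytes(262_144) / 2**30)  # 5.0 GiB at the native 262K context
```

If all 40 layers used full attention with the same KV layout, the cache would be 4× larger (20 GiB at 262K tokens). Set against ~17.5GB of INT4 weights, even the 5 GiB figure makes the full 262K context tight on a 24GB card, so shorter contexts or a quantized KV cache leave more headroom.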

3. Exploration Value of the Hybrid Architecture

The Gated DeltaNet + MoE combination is uncommon in the open-source community. As a linear attention variant, DeltaNet keeps a fixed-size state, so its per-token cost and memory do not grow with sequence length, which is a natural advantage for long-sequence modeling. Combined with MoE's sparse computation, it may represent a new efficiency-performance tradeoff. If benchmark results validate this design's advantages, other open-source teams will likely follow with similar architectures.
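The long-sequence advantage is easiest to see in the recurrence itself. Below is a single-head, unbatched NumPy loop of the gated delta rule as published in the Gated DeltaNet paper (Yang et al.), written for clarity rather than speed. It is an assumption that Qwen's layers follow this exact form; production implementations typically add chunked parallel computation, multiple heads and extra gating.

```python
import numpy as np


def gated_delta_rule(q, k, v, alpha, beta):
    """Single-head reference loop of the gated delta rule.

    q, k: (T, d_k) queries and keys; v: (T, d_v) values;
    alpha: (T,) decay gates in (0, 1]; beta: (T,) write strengths in (0, 1].
    Returns per-step outputs (T, d_v) and the final state (d_v, d_k).
    """
    T, d_k = k.shape
    d_v = v.shape[1]
    S = np.zeros((d_v, d_k))  # fixed-size memory, independent of T
    out = np.empty((T, d_v))
    for t in range(T):
        k_t = k[t] / np.linalg.norm(k[t])  # L2-normalised key
        q_t = q[t] / np.linalg.norm(q[t])  # L2-normalised query
        # Decay the old memory, erase what it stores under k_t, then write v_t under k_t
        S = alpha[t] * (S - beta[t] * np.outer(S @ k_t, k_t)) + beta[t] * np.outer(v[t], k_t)
        out[t] = S @ q_t
    return out, S


rng = np.random.default_rng(0)
T, d_k, d_v = 1024, 128, 128
k = rng.standard_normal((T, d_k))
v = rng.standard_normal((T, d_v))
# Query with the keys themselves, no decay (alpha=1) and full writes (beta=1)
out, S = gated_delta_rule(k, k, v, alpha=np.ones(T), beta=np.ones(T))

print(S.shape)                      # (128, 128) regardless of T
print(np.allclose(out[-1], v[-1]))  # True: the last write is recalled exactly with its own key
```

The state `S` stays 128 × 128 whether the sequence has 1K or 1M tokens. The price is that older content is only approximately retrievable once more keys than `d_k` have been written, which is why the hybrid design keeps a few global attention layers.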

Competitor Comparison

| Model | Total Params | Activated Params | Context | Multimodal | License | Deployment Barrier |
|---|---|---|---|---|---|---|
| Qwen3.6-35B-A3B | 35B | 3B | 262K→1M | ✅ | Apache 2.0 | Consumer GPU |
| Qwen3.6-27B | 27B | 27B | 128K | ✅ | Apache 2.0 | Single 4090 |
| DeepSeek V4 | 1.6T | 37B | 128K | ❌ | MIT | Multi A100 |
| Ling-2.6-Flash | 104B | 7.4B | 256K | ❌ | MIT | Single 4090 |
| MiMo-V2.5-Pro | 1T | 42B | 1M | ❌ | MIT | Multi A100 |

Qwen3.6-35B-A3B's unique positioning among these models: the lowest absolute activated parameter count, native multimodality, and a commercially usable Apache 2.0 license.

Actionable Advice

Who Should Pay Attention

- Agent developers: Thinking Preservation directly optimizes efficiency in multi-turn Agent calls

- Cost-conscious deployment teams: 3B activated params means extremely low inference costs and hardware barriers

- Multimodal application developers: Native Image-Text-to-Text architecture, no extra vision model needed

- Long-context users: 262K native, expandable to 1M context window

How to Get Started

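```shell
pip install transformers accelerate
```

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_ID = "Qwen/Qwen3.6-35B-A3B"

# device_map="auto" (via accelerate) places weights across available GPUs/CPU;
# torch_dtype="auto" uses the dtype recorded in the checkpoint config.
# For image inputs, check the model card for the multimodal model/processor classes.
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    device_map="auto",
    torch_dtype="auto",
)
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
```

Compatible with vLLM, SGLang, KTransformers and other inference frameworks.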

Points to Note

- As the first open-source variant of Qwen3.6, community tooling (Ollama support, etc.) may still be in progress

- The flip side of 3B activated params is 35B total parameters: all weights must still be stored (about 70GB in BF16, ~17.5GB at INT4), so running on smaller GPUs requires quantization or an inference framework that can offload experts to CPU memory

- Specific benchmark values are not covered here; refer to the official blog for them

- Apache 2.0 license allows commercial use but requires compliance with license terms

Primary Sources: