Even as context windows break the million-token barrier, research still shows: the fuller they get, the lower the model’s output quality.

This is known as context rot. For a deeper analysis, see our previous article The Real Bottleneck of 1M Token Context Isn’t Technology, It’s Compute, which argues that what limits large models is not only the technology but also computational cost.

Developers face a dilemma: stuff everything in and watch quality degrade, or aggressively trim and risk losing critical information the Agent will need later. For a systematic discussion of AI Agent memory management, see also The “Context Amnesia” Dilemma of AI Agents.

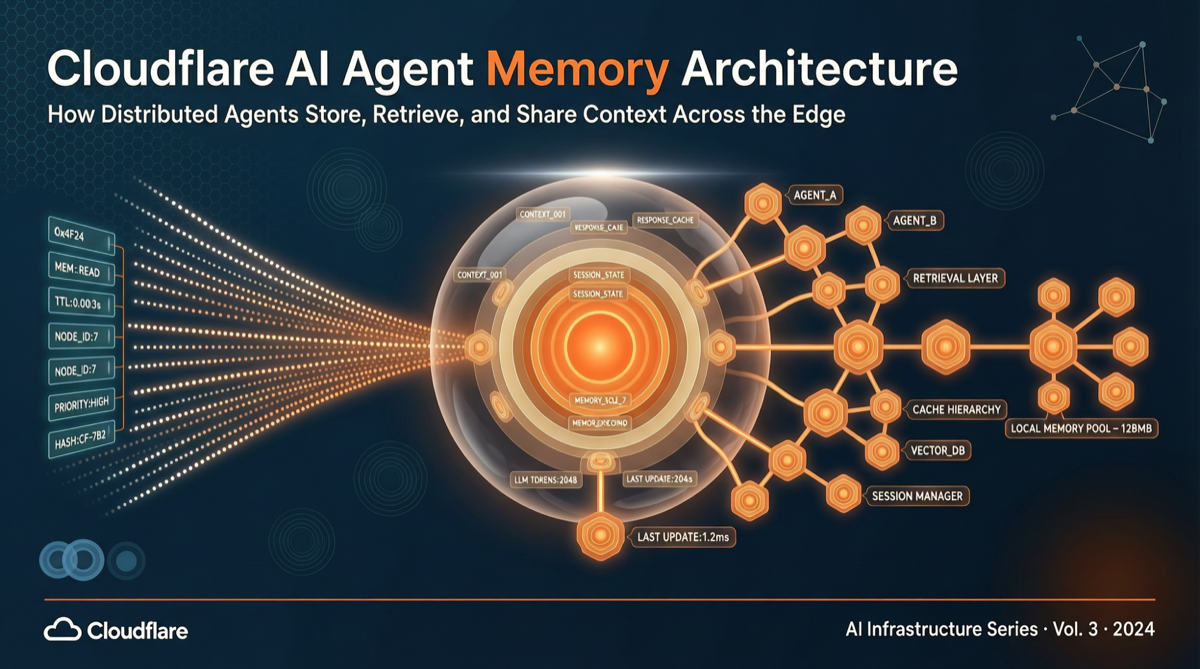

Cloudflare announced a new product during its Agent Week at the end of April: Agent Memory (private beta), attempting to solve this problem at its root. For a more comprehensive overview of the full Agent Week product matrix, see our article Cloudflare Fully Opens Agent Self-Registration. Instead of cramming conversation history wholesale into the context window, Agent Memory extracts structured memories from conversations and retrieves only what’s relevant on demand.

“We built Agent Memory because real workloads on our platform exposed gaps that existing solutions couldn’t fully address. Agents running for weeks or even months, facing real codebases and production systems, need memory that remains useful as it grows, not just memory that performs well on clean benchmark datasets.” (Tyson Trautmann & Rob Sutter, Cloudflare Engineering Team)

Extraction Pipeline: Two-Pass Scan + Eight-Step Validation

Agent Memory’s inbound pipeline is designed with considerable precision.

Each message is first assigned a content-addressed SHA-256 ID, supporting idempotent re-ingestion — sending the same message again won’t generate redundant records.
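
To make the idempotency claim concrete, here is a minimal sketch of content-addressed ingestion. It is an illustration under assumptions, not Cloudflare's implementation: `message_id`, `MessageStore`, and the choice to hash the role together with the text are all hypothetical.

```python
import hashlib


def message_id(role: str, content: str) -> str:
    """Derive a content-addressed ID: identical input always yields the same ID."""
    # Assumption: the ID covers the role and the text. Which fields the real
    # service hashes is not stated in the article.
    payload = f"{role}\n{content}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class MessageStore:
    """Hypothetical in-memory store keyed by content hash."""

    def __init__(self) -> None:
        self._messages: dict[str, str] = {}

    def ingest(self, role: str, content: str) -> bool:
        """Store a message; return False if it was already ingested."""
        mid = message_id(role, content)
        if mid in self._messages:
            return False  # duplicate: re-ingestion is a no-op
        self._messages[mid] = content
        return True

    def __len__(self) -> int:
        return len(self._messages)
```

Because the key is derived from the content itself, a retry after a network failure cannot create a second record for the same message.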

The extractor runs two parallel channels:

- Broad scan: Chunks at approximately 10K characters, capturing overall semantics

- Detail scan: Focuses on specific values — names, prices, version numbers, etc.
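
The two passes can be pictured roughly as follows. This is only a sketch: the real extractor is model-driven, while the regular expressions below are toy stand-ins for the detail pass, and `broad_chunks`, `detail_scan`, and both patterns are hypothetical names. The two passes also run one after the other here rather than in parallel.

```python
import re

CHUNK_SIZE = 10_000  # approximate chunk size in characters, as described above


def broad_chunks(text: str, size: int = CHUNK_SIZE) -> list[str]:
    """Split text into consecutive chunks of at most `size` characters."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# Toy patterns standing in for the model-driven detail pass (assumption).
VERSION_RE = re.compile(r"\bv?\d+\.\d+\.\d+\b")
PRICE_RE = re.compile(r"\$\d+(?:\.\d{2})?")


def detail_scan(text: str) -> dict[str, list[str]]:
    """Collect specific values that a coarse summary could easily drop."""
    return {
        "versions": VERSION_RE.findall(text),
        "prices": PRICE_RE.findall(text),
    }
```

The point of the split is that a chunk-level summary tends to keep the gist and lose exact values, so a separate pass targets those values directly.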

A validator then runs eight checks, and only extracted items that pass are classified into four memory types:

- Facts: Key-value pairs, indexed by standardized topics

- Events: Timeline-related activity records

- Instructions: Behavioral rules, where new memories supersede old versions rather than deleting them

- Tasks: To-do items
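
One way to model the four types, including instructions whose newer versions replace older ones without deleting them, is sketched below. The class and field names (`Memory`, `MemoryProfile`, `key`, `superseded`) are assumptions for illustration and do not reflect the actual Agent Memory schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class MemoryType(Enum):
    FACT = "fact"
    EVENT = "event"
    INSTRUCTION = "instruction"
    TASK = "task"


@dataclass
class Memory:
    kind: MemoryType
    key: str                  # normalized topic key (hypothetical field name)
    content: str
    superseded: bool = False  # True once a newer version has replaced this one


@dataclass
class MemoryProfile:
    memories: list[Memory] = field(default_factory=list)

    def add(self, memory: Memory) -> None:
        # An instruction with an existing key marks the older version as
        # superseded instead of removing it, so the history is retained.
        if memory.kind is MemoryType.INSTRUCTION:
            for old in self.memories:
                if old.kind is MemoryType.INSTRUCTION and old.key == memory.key:
                    old.superseded = True
        self.memories.append(memory)

    def active(self, kind: MemoryType) -> list[Memory]:
        """Return only the current (non-superseded) memories of one type."""
        return [m for m in self.memories if m.kind is kind and not m.superseded]
```

Keeping the old version around means an agent can still answer "what was the rule before?" while retrieval defaults to the current one.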

By default, Llama 4 Scout (17B MoE) is used for extraction and classification — Cloudflare found that larger models show no significant advantage at this stage.

Retrieval Architecture: Five-Channel Parallel + RRF Fusion

What’s truly interesting is the retrieval side. Agent Memory isn’t a simple vector search — it runs five channels in parallel, then fuses results using Reciprocal Rank Fusion (RRF):

| Channel | Purpose |
|---|---|
| Full-text search | Keyword matching |
| Exact fact key lookup | Directly locate indexed facts |
| Raw message search | Trace back to original conversation text |
| Direct vector search | Semantic similarity |
| HyDE vector search | Generates declarative answers to resolve vocabulary mismatch |
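
Reciprocal Rank Fusion itself is simple enough to sketch in a few lines. Each channel contributes `1 / (k + rank)` for every item it returns, and the contributions are summed. The constant `k = 60` is the value commonly used in the literature, and the function name `rrf_fuse` is illustrative; the article does not say what constant or weighting Cloudflare uses.

```python
from collections import defaultdict


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of IDs into one list using RRF."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank) for every ID it contains.
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the order is stable.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

Because only ranks matter, channels with incomparable score scales (keyword scores versus cosine similarities, for example) can be combined without any normalization.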

The HyDE (Hypothetical Document Embeddings) channel is particularly noteworthy: it first generates a hypothetical answer, then uses that answer’s embedding vector for retrieval — effectively bypassing vocabulary differences between user queries and stored memories.
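
The idea can be shown with a toy example. Everything here is an assumption made for illustration: `embed` is a bag-of-words stand-in for a real embedding model, `generate` is a caller-supplied function standing in for the LLM that drafts the hypothetical answer, and `hyde_search` is a hypothetical name.

```python
import math
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    """Toy bag-of-words embedding; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hyde_search(query: str, memories: list[str], generate: Callable[[str], str]) -> str:
    """Return the stored memory closest to a hypothetical answer to the query."""
    hypothetical = generate(query)   # step 1: draft a declarative answer
    query_vec = embed(hypothetical)  # step 2: embed the answer, not the question
    return max(memories, key=lambda m: cosine(query_vec, embed(m)))
```

A question such as "which tool installs dependencies?" shares no words with a memory like "the team uses pnpm as package manager", but a drafted answer such as "the package manager is pnpm" does, which is why the answer's embedding lands closer to the stored memory.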

The synthesis stage uses Nemotron 3 (120B MoE); Cloudflare’s testing showed that a larger model provides substantial benefit only at this stage.

Shared Memory: Team-Level Agent Knowledge Collaboration

A unique capability of Agent Memory is memory sharing. Memory profiles don’t need to be bound to a single Agent — teams can share a memory profile, so that conventions, architectural decisions, and team “tribal knowledge” learned by one engineer’s coding Agent become immediately available to others.

Cloudflare is already using this internally. An agentic code reviewer connected to Agent Memory learned not to repeat itself: once a pattern had been flagged and the author chose to keep it, the reviewer no longer warned about that pattern the next time.

Industry Comparison

The agent memory space is getting increasingly crowded:

- Mem0: Managed cloud API supporting vector, graph, and key-value storage

- Zep Graphiti: Temporal knowledge graph tracking fact validity periods

- LangMem: Integrated with LangGraph, but requires self-hosting

- Letta (formerly MemGPT): Hierarchical memory architecture where Agents autonomously manage context

Cloudflare’s differentiation lies in edge distribution and deep integration with its own computing primitives (Durable Objects, Vectorize, Workers AI). For teams already building Agents on Cloudflare, the integration cost is minimal.

Risks to Watch Out For

Independent evaluator Kristopher Dunham highlighted several trade-offs to consider:

- Vendor lock-in: Data can be exported, but the retrieval pipeline itself is not portable. “Exportable means you can extract the raw facts. It doesn’t mean your retrieval pipeline is portable.”

- Uncontrollable extraction quality: Memory extraction relies on secondary models chosen by Cloudflare, with no developer intervention possible

- Recommendation: For critical facts, explicitly call the `remember` tool rather than relying on automatic ingestion

- Architecture advice: Conversation history and learned facts should be stored separately from the start; context compression should trigger at 60% capacity, not wait until it’s full
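
The last two pieces of advice can be sketched as a small agent-side state object. This is an illustration under assumptions: `AgentState`, the four-characters-per-token estimate, and the local `remember` method (a stand-in for an explicit call to the remember tool) are all hypothetical and not part of any Cloudflare API.

```python
from dataclasses import dataclass, field

COMPACTION_THRESHOLD = 0.60  # compact at 60% of the window, per the advice above


@dataclass
class AgentState:
    context_limit: int                                   # window size in tokens
    history: list[str] = field(default_factory=list)     # raw conversation turns
    facts: dict[str, str] = field(default_factory=dict)  # learned facts, kept separately

    def used_tokens(self) -> int:
        # Crude estimate (about 4 characters per token); a real agent would
        # use its model's tokenizer instead.
        return sum(len(turn) for turn in self.history) // 4

    def should_compact(self) -> bool:
        """True once the history fills at least 60% of the context window."""
        return self.used_tokens() >= COMPACTION_THRESHOLD * self.context_limit

    def remember(self, key: str, value: str) -> None:
        """Hypothetical stand-in for explicitly storing a critical fact."""
        self.facts[key] = value
```

Keeping `facts` apart from `history` means compaction can summarize or drop old turns without touching anything that was stored deliberately.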

Agent Memory is currently in private beta, and pricing has not yet been announced.

Primary sources: InfoQ - Cloudflare Agent Memory, Cloudflare official blog