Conclusion First

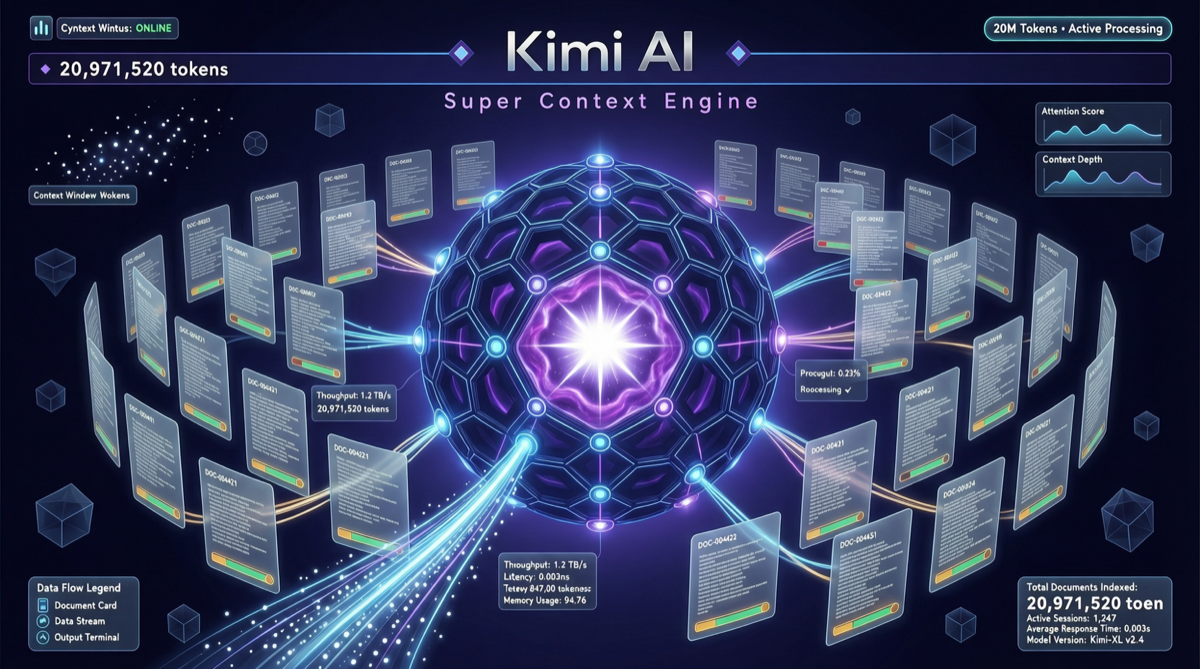

Moonshot AI quietly released the Kimi Super-Context upgrade on April 29, pushing the context window to 20 million tokens — one of the longest publicly available context windows, equivalent to reading 15,000 pages of documents or approximately 15 million Chinese characters at once.

The key point is not the number itself, but Moonshot AI’s claimed progress on the core pain point of “retrieval accuracy at super-long contexts”: a reported needle-in-a-haystack recall rate above 98% across the full 20 million token range.

What Does 20 Million Tokens Mean?

| Scenario | Traditional Model Limit | Kimi Super-Context | Practical Significance |
|---|---|---|---|
| Technical manuals | 1-2 at a time | Entire library (~500 books) | No need to split documents |
| Legal case files | Requires summarization | Complete files + case database | Reduces information loss |
| Code repositories | Partial files | Entire mid-size project code | Global architecture understanding |
| Financial analysis | Single report | Multi-year + multi-company comparison | Cross-document reasoning |

Take the legal scenario as an example: a mid-size litigation case typically involves 50,000-100,000 pages of case files. By the estimate above, 20 million tokens corresponds to roughly 15,000 pages, so the window can hold a much larger share of such a file in one pass than earlier models could, together with the most relevant precedents and regulations. The AI can then reason over far more of the original material rather than being forced to compress and summarize it as in the past.
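The page and token figures quoted in this article imply a simple conversion that can be used to check whether a document set is likely to fit in the window. The sketch below is a rough estimate only: the constants are the article's own figures, while the function names and the sample page counts are hypothetical, and real token counts depend on the tokenizer and on how dense each page is.

```python
import math

# Figures quoted in the article; treated here as rough estimates, not measurements.
WINDOW_TOKENS = 20_000_000   # advertised context window
WINDOW_PAGES = 15_000        # pages said to fit in that window
WINDOW_CHARS = 15_000_000    # Chinese characters said to fit in that window


def tokens_per_page() -> float:
    """Average tokens per page implied by the article's figures."""
    return WINDOW_TOKENS / WINDOW_PAGES


def chars_per_token() -> float:
    """Average Chinese characters per token implied by the article's figures."""
    return WINDOW_CHARS / WINDOW_TOKENS


def estimate_tokens(pages: int) -> int:
    """Estimate the token count of a document set from its page count."""
    return math.ceil(pages * WINDOW_TOKENS / WINDOW_PAGES)


def fits_in_window(pages: int, reserve_tokens: int = 0) -> bool:
    """Check the estimate against the window, keeping room for prompt and answer."""
    return estimate_tokens(pages) + reserve_tokens <= WINDOW_TOKENS


if __name__ == "__main__":
    print(f"about {tokens_per_page():.0f} tokens per page")
    print(f"about {chars_per_token():.2f} Chinese characters per token")
    # Hypothetical page counts, chosen only to show both outcomes.
    for pages in (12_000, 40_000):
        print(pages, "pages ->", estimate_tokens(pages), "tokens, fits:",
              fits_in_window(pages, reserve_tokens=50_000))
```

Under these figures, document sets well beyond about 15,000 pages would still need to be split or filtered before being sent in a single request.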

Technical Approach: Not “Bigger”, But “Smarter”

Moonshot AI’s technical route has several key differentiators:

1. Hierarchical Attention Architecture: Rather than simply expanding the KV cache, it builds a multi-level attention mechanism. High-frequency access areas retain full attention, while low-frequency areas use compressed representations. This keeps memory growth far below linear.

2. Dynamic Context Routing: The model automatically selects a context processing strategy based on task type:

- Intensive reading mode: full attention for critical passages

- Scanning mode: sparse attention for non-critical areas

- Hybrid mode: alternating between both

3. Retrieval-Augmented Hybrid Approach: A built-in retrieval mechanism still operates within the 20 million token window, but it does not follow the traditional “retrieve first, then answer” flow. Instead it works as “retrieve while reasoning”: during generation, the model dynamically decides which parts of the context need focused attention.
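The memory argument behind the hierarchical attention idea in item 1 can be made concrete with back-of-the-envelope arithmetic. The sketch below is not Moonshot AI's implementation, whose details are not public in this article. It uses the standard KV-cache size formula (one key and one value vector per KV head per layer per token) and a two-tier split in which a "hot" region keeps full entries while the "cold" remainder is compressed by a fixed ratio. The model dimensions, the hot-region size, and the compression ratio are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical transformer dimensions, chosen only for illustration."""
    layers: int = 60
    kv_heads: int = 8
    head_dim: int = 128
    bytes_per_value: int = 2  # 16-bit values


def kv_bytes_per_token(shape: ModelShape) -> int:
    """Bytes of KV cache per token: one key and one value vector per head per layer."""
    return 2 * shape.layers * shape.kv_heads * shape.head_dim * shape.bytes_per_value


def full_cache_bytes(tokens: int, shape: ModelShape) -> int:
    """KV cache size when every token keeps a full-resolution entry."""
    return tokens * kv_bytes_per_token(shape)


def tiered_cache_bytes(tokens: int, shape: ModelShape,
                       hot_tokens: int, compression: int) -> int:
    """KV cache size when only `hot_tokens` keep full entries.

    The remaining (cold) tokens are assumed to be merged `compression`-to-one
    into summary entries. Both parameters are assumptions for this sketch.
    """
    hot = min(tokens, hot_tokens)
    cold = tokens - hot
    cold_slots = -(-cold // compression)  # ceiling division
    return (hot + cold_slots) * kv_bytes_per_token(shape)


if __name__ == "__main__":
    shape = ModelShape()
    for tokens in (128_000, 1_000_000, 20_000_000):
        full = full_cache_bytes(tokens, shape)
        tiered = tiered_cache_bytes(tokens, shape, hot_tokens=1_000_000, compression=64)
        print(f"{tokens:>11,} tokens: full {full / 1e9:,.1f} GB, tiered {tiered / 1e9:,.1f} GB")
```

In this simple model the tiered cache still grows linearly, only with a much smaller slope once the hot region is full. It illustrates why compressing low-frequency regions matters at this scale; it does not reproduce the growth behavior claimed for the actual system.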

Comparison with Current Mainstream Model Context Capabilities

| Model | Context Window | Release Date | Core Positioning |
|---|---|---|---|
| Kimi Super-Context | 20M | 2026.04.29 | Ultra-long document analysis |
| Gemini 3.1 Ultra | 2M | 2026.04 | Multimodal long text |
| Claude Opus 4.7 | 1M | 2026.04 | Deep reasoning |
| GPT-5.5 | 128K | 2026.04.23 | General conversation |
| Qwen 3.6 Max | 131K | 2026.03 | Coding + reasoning |

Kimi’s 20M is 10 times Gemini’s 2M and 20 times Claude’s 1M. But context size does not equal actual effectiveness — the key is whether the model’s “attention dilution” problem at ultra-long contexts has been solved. Moonshot AI claims a 98%+ recall rate in Needle-in-Haystack tests, but independent verification results have not yet been published.
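Until independent results are available, a recall figure like this can be checked with a small needle-in-a-haystack harness: hide a known fact at different depths of a long filler context, ask for it, and count how often the answer contains it. The sketch below shows the shape of such a test, not the benchmark Moonshot AI used. The `ask` callable is a placeholder for whatever model client is being tested, and `make_truncating_model` is a toy stand-in (it simply ignores the tail of the context) so the example runs without any API. All names and the sample needle are hypothetical.

```python
from typing import Callable, Sequence

AskFn = Callable[[str, str], str]  # (context, question) -> answer


def build_haystack(filler: str, n_sentences: int, needle: str, depth: float) -> str:
    """Return filler text with `needle` inserted at relative `depth` (0.0 = start, 1.0 = end)."""
    sentences = [filler] * n_sentences
    position = round(min(max(depth, 0.0), 1.0) * n_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences)


def recall_at_depths(ask: AskFn, needle: str, question: str, expected: str,
                     depths: Sequence[float], n_sentences: int = 1000,
                     filler: str = "The sky was grey that day.") -> float:
    """Fraction of depths at which the answer contains the expected string."""
    hits = 0
    for depth in depths:
        context = build_haystack(filler, n_sentences, needle, depth)
        answer = ask(context, question)
        hits += expected.lower() in answer.lower()
    return hits / len(depths)


def make_truncating_model(visible_fraction: float) -> AskFn:
    """Toy stand-in for a model that only 'sees' the first part of its context."""
    def ask(context: str, question: str) -> str:
        # The question is ignored; the toy just scans the visible text for the keyword.
        visible = context[: int(len(context) * visible_fraction)]
        for sentence in visible.split(". "):
            if "passphrase" in sentence:
                return sentence
        return "unknown"
    return ask


if __name__ == "__main__":
    needle = "The secret passphrase is mango-42."
    question = "What is the secret passphrase?"
    depths = [0.0, 0.25, 0.5, 0.75, 1.0]
    for fraction in (1.0, 0.5):
        score = recall_at_depths(make_truncating_model(fraction), needle,
                                 question, "mango-42", depths)
        print(f"visible fraction {fraction}: recall {score:.2f}")
```

A real evaluation would replace the toy model with an API call, use varied filler text and needles, and scale the haystack toward the advertised window size, since recall often depends on both context length and needle depth.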

Practical Impact for Developers and Enterprises

Scenarios worth trying immediately:

- 📋 Contract review: Input the entire contract library plus its historical modification records at once, and let the AI identify patterns in risky clauses

- 📚 Knowledge base construction: Feed all enterprise technical documents to Kimi, build a “living knowledge base” queryable in natural language

- 🔬 Research literature review: Input all core papers in a field at once, generate a systematic review

Scenarios not yet recommended:

- 🎯 Tasks that require precise paragraph-level citation (localization accuracy at ultra-long context still fluctuates)

- 💻 Latency-sensitive applications (first-token latency at 20 million tokens is significantly higher than for short contexts)

Competitive Landscape Assessment

Moonshot AI’s strategic intent with this upgrade is clear: in the context length race, Chinese models are competing for global leadership.

But long context is only one dimension of capability. The real competitive dimensions are diverging in three directions:

- Length (Kimi leads)

- Multimodal integration (Gemini leads)

- Reasoning depth (Claude leads)

For users, this is not a question of “which is best” but “which best fits your scenario.” If your work involves processing massive documents, Kimi Super-Context is currently the most noteworthy option.