Deal Data

Meta’s Q1 2026 earnings, released on April 29, 2026:

| Metric | Q1 2026 Actual | Market Expectation | Change |

|---|---|---|---|

| Revenue | $56.3B | $59.56B | Below expectations |

| Q2 Revenue Guidance | $58-61B | $59.56B | In line |

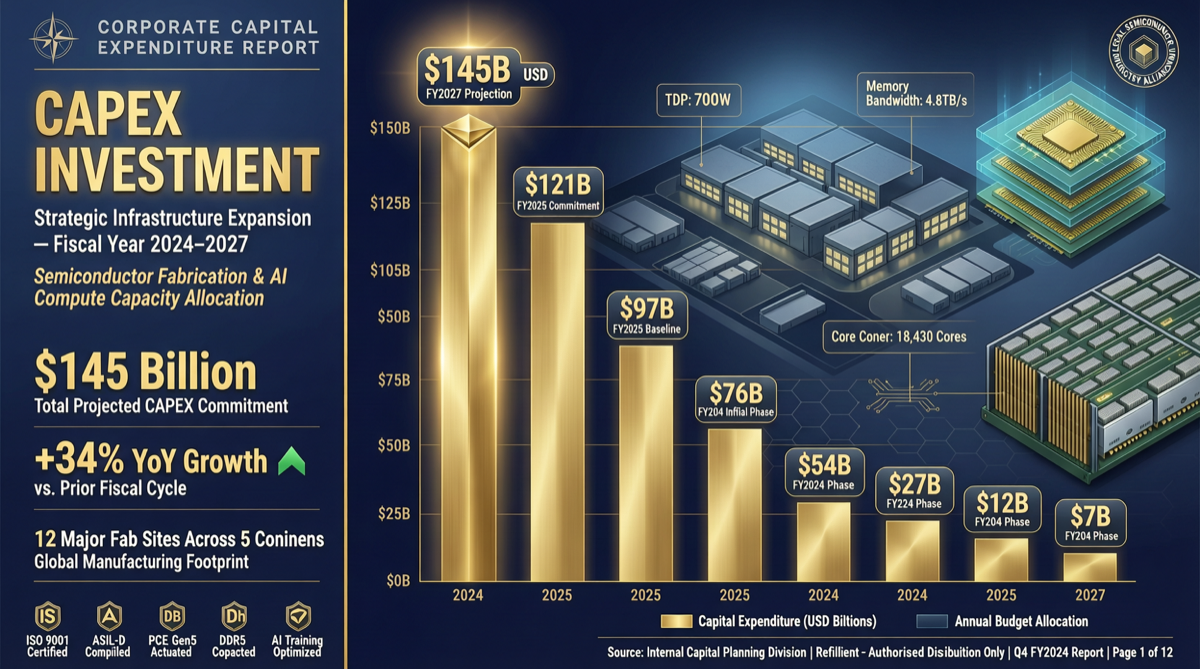

| Full-Year CAPEX | $125-145B | $115-135B | Significantly raised |

| CAPEX Increase | +$10-15B | — | ~10-12% upward revision |
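The size of the revision can be checked directly from the two full-year CAPEX ranges in the table above. The following is a minimal sketch that takes those ranges at face value; the variable names and the choice to compare low end, midpoint, and high end are illustrative assumptions, not a method from the earnings release.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Full-year CAPEX ranges in $B, copied from the table above.
prior_low, prior_high = 115.0, 135.0
new_low, new_high = 125.0, 145.0

prior_mid = (prior_low + prior_high) / 2
new_mid = (new_low + new_high) / 2

# Map each comparison point to (absolute change in $B, percentage change).
revision = {
    "low end": (new_low - prior_low, pct_change(prior_low, new_low)),
    "midpoint": (new_mid - prior_mid, pct_change(prior_mid, new_mid)),
    "high end": (new_high - prior_high, pct_change(prior_high, new_high)),
}

for label, (delta, pct) in revision.items():
    print(f"{label}: +${delta:.0f}B ({pct:.1f}%)")
# low end: +$10B (8.7%)
# midpoint: +$10B (8.0%)
# high end: +$10B (7.4%)
```

On these inputs the range shifts up by $10B at every point, which is roughly a 7-9% increase depending on which end of the range is used as the base.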

Zuckerberg’s statement on the earnings call:

“Most of that is due to higher component costs, particularly memory pricing.”

This isn’t “we plan to spend more” — it’s “we have to spend more.”

Business Context

Meta’s current AI strategy revolves around three cores:

- Avocado Model: Next-generation foundation model, delayed from March to May because its performance fell short of expectations

- Llama Ecosystem: The open-source strategy continues with the release of Llama 4 Scout (MoE architecture, 10M-token context)

- AI Infrastructure: Large-scale GPU cluster investment supporting recommendation systems, ads, Meta AI assistant

Investment Logic: Memory Becoming the AI Race Bottleneck

HBM (High Bandwidth Memory) is the core component of current AI chips. The 2026 supply-demand landscape:

| Factor | Impact |
|---|---|
| Demand explosion | Every major company is expanding AI clusters; HBM demand is up 200%+ YoY |
| Limited capacity | Building an HBM production line takes 18-24 months |
| Technology upgrade | HBM4 is ramping up, and costs are high during the yield ramp |
| Pricing power | Seller’s market; suppliers have strong pricing power |

Impact on the Industry

1. Memory Suppliers’ “Golden Age”

Samsung, SK Hynix, and Micron have unprecedented pricing power in the HBM market.

2. Model Efficiency Gains Importance

When memory costs become the primary variable, model design philosophy must change:

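- MoE architectures have an advantage: only a subset of experts is active for each token, so not all parameters are read from memory at every step, although all of them must still be stored somewhere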

- Quantization and compression technologies see increased demand

- Small models + Agent orchestration may be more economical than single ultra-large models
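To make the memory argument concrete, here is a rough back-of-the-envelope sketch of weight memory at different precisions, along with the stored-versus-active distinction for MoE models. The 70B dense model and the 100B-total, 15B-active MoE model are hypothetical sizes chosen for illustration, not figures from Meta. The estimate covers weights only and ignores the KV cache, activations, and quantization metadata.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical dense model with 70B parameters.
dense_params = 70e9
for precision in BYTES_PER_PARAM:
    gb = weight_memory_gb(dense_params, precision)
    print(f"dense 70B @ {precision}: {gb:.0f} GB")
# dense 70B @ fp16: 140 GB
# dense 70B @ int8: 70 GB
# dense 70B @ int4: 35 GB

# Hypothetical MoE model: 100B parameters stored, 15B active per token.
total_params, active_params = 100e9, 15e9
stored_gb = weight_memory_gb(total_params, "fp16")  # must be resident somewhere
read_gb = weight_memory_gb(active_params, "fp16")   # weights touched per token
print(f"MoE @ fp16: {stored_gb:.0f} GB stored, {read_gb:.0f} GB read per token")
# MoE @ fp16: 200 GB stored, 30 GB read per token
```

Under these assumptions, quantization shrinks the capacity requirement directly, while MoE mainly reduces the weights read per token rather than the total that has to be stored.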

3. Subtle Competitive Landscape Changes

Meta’s CAPEX increase signals: the AI race isn’t slowing down — it’s accelerating.

But Avocado’s delay shows: spending more doesn’t equal faster results. AI infrastructure investment faces significant diminishing marginal returns.

Actionable Advice

| Role | Recommendation |
|---|---|
| AI startups | Consider quantized models or MoE architectures; explore cloud reserved instances |
| Enterprise IT | List memory and storage costs as separate line items in AI budgets |
| Investors | Focus on the HBM supply chain (Samsung, SK Hynix, Micron) |
| Developers | Learn model quantization and LoRA fine-tuning to reduce memory footprint |
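For the developer recommendation, the following sketch illustrates why LoRA lowers the memory needed for fine-tuning. LoRA freezes a weight matrix of shape `d_out x d_in` and trains two low-rank factors of shapes `d_out x r` and `r x d_in`, so gradients and optimizer state are only kept for `r * (d_in + d_out)` parameters. The layer size (4096 x 4096) and rank (8) below are illustrative assumptions, and the helper names are hypothetical rather than part of any library.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fine-tuning the full weight matrix."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in the two LoRA factors (d_out x r and r x d_in)."""
    return rank * (d_in + d_out)


# Assumed example: one 4096 x 4096 projection layer adapted at rank 8.
d_in, d_out, rank = 4096, 4096, 8
full = full_params(d_in, d_out)
lora = lora_trainable_params(d_in, d_out, rank)
ratio = lora / full * 100

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (r={rank}): {lora:,} trainable parameters ({ratio:.2f}% of full)")
# full fine-tuning: 16,777,216 trainable parameters
# LoRA (r=8): 65,536 trainable parameters (0.39% of full)
```

The frozen base weights still have to be loaded, so the saving applies to gradients and optimizer state; combining LoRA with a quantized base model is one common way to reduce both.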

Landscape Judgment

Meta’s CAPEX increase tells us:

- The AI arms race isn’t slowing: despite revenue coming in slightly below expectations, Meta is still increasing investment

- The bottleneck is shifting: from “insufficient compute” to “memory too expensive,” a structural change

- Efficiency is competitiveness: whoever does more with less memory has the advantage

- Open source may become a differentiator: if the Llama ecosystem leads in memory efficiency, it can attract cost-sensitive users