Key Takeaway

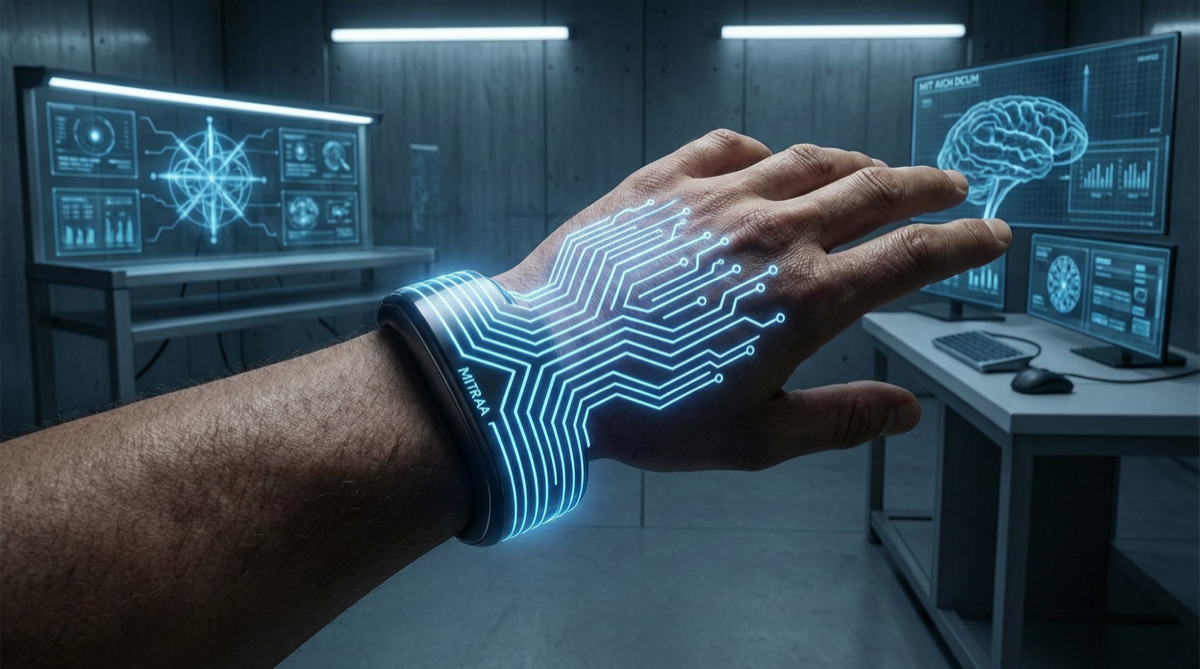

In early May 2026, at the MIT Hard Mode 2026 hackathon, a six-person team won the “Learn Track” competition. Their project, Human Operator, is a wearable AI system that controls human hand and wrist movements in real time: cameras observe the environment, AI reasons about what the body should do, and electrical pulses guide the muscles into execution.

This is not remote-controlling a robot. It is directly guiding the human body.

Technical Breakdown

System Architecture

┌──────────────┐   ┌───────────────┐   ┌──────────────────┐   ┌───────────────┐
│ Camera Input │ → │ AI Vision     │ → │ Motion Reasoning │ → │ Neuromuscular │
│ (What you    │   │ Understanding │   │ (What you should │   │ Pulses        │
│ see)         │   │ (Environment) │   │ do)              │   │               │
└──────────────┘   └───────────────┘   └──────────────────┘   └───────────────┘

| Component | Function | Tech Stack |
|---|---|---|
| Visual capture | Real-time environment capture from user’s perspective | Wearable camera |
| AI reasoning | Understand scene, identify target, plan actions | Large vision model + motion planning |
| Motion guidance | Convert action commands into muscle stimulation signals | Neuromuscular Electrical Stimulation (NMES) |
| Execution feedback | Sensors detect actual movement completion | Inertial Measurement Unit (IMU) |
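The four components in the table can be read as one pass through a control loop. The following is a minimal sketch of that loop, not the team's implementation: the `Action` and `Pulse` types, the `run_step` function, the 5-degree tolerance, and all stub values are assumptions made for illustration, and the stubs stand in for the camera, vision model, stimulator, and IMU.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    name: str                 # e.g. "rotate_wrist" (illustrative label)
    target_angle_deg: float   # wrist angle the plan is aiming for


@dataclass
class Pulse:
    channel: int      # electrode channel (hypothetical numbering)
    intensity: float  # normalized 0..1, not a real current value
    duration_ms: int


def run_step(
    frame: object,
    perceive: Callable[[object], str],
    plan: Callable[[str], Action],
    to_pulses: Callable[[Action], List[Pulse]],
    stimulate: Callable[[List[Pulse]], None],
    read_wrist_angle_deg: Callable[[], float],
    tolerance_deg: float = 5.0,
) -> bool:
    """Run one capture -> reason -> stimulate -> verify cycle.

    Returns True when the IMU reading is within tolerance of the target.
    """
    target = perceive(frame)              # visual capture + scene understanding
    action = plan(target)                 # motion reasoning
    stimulate(to_pulses(action))          # motion guidance via NMES
    achieved = read_wrist_angle_deg()     # execution feedback from the IMU
    return abs(achieved - action.target_angle_deg) <= tolerance_deg


# Stub components so the sketch runs without hardware.
sent: List[Pulse] = []
done = run_step(
    frame=None,
    perceive=lambda frame: "screw",
    plan=lambda target: Action("rotate_wrist", 90.0),
    to_pulses=lambda action: [Pulse(channel=0, intensity=0.4, duration_ms=200)],
    stimulate=sent.extend,
    read_wrist_angle_deg=lambda: 87.0,
)
print(done, len(sent))
```

Passing each stage in as a callable keeps the loop itself independent of any particular camera, model, or stimulator, which is one plausible way to swap components during a short build.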

Key Technical Points

- Vision-to-motion mapping: The AI needs to understand “what environment the user is in” and “what action the user should take.” For example, on seeing a screw, the AI infers “need to grip a screwdriver and rotate.”

- Precise muscle control: Electrical pulses make specific muscle groups contract to produce fine hand movements. This requires precise timing: the activation sequence and intensity of each muscle must match the target movement.

- Real-time closed loop: The full cycle, from seeing to guiding to completing, must finish within milliseconds; otherwise the user perceives severe lag.
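The timing and latency points above can be made concrete with a small sketch. It assumes a movement is described as a schedule of per-muscle activations and that the loop has a fixed latency budget. The muscle labels, the timings, the intensities, and the 100 ms budget in the usage lines are illustrative placeholders, not values reported for Human Operator.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Activation:
    muscle: str        # illustrative label for an electrode site
    start_ms: int      # offset from the start of the movement
    duration_ms: int
    intensity: float   # normalized 0..1, not a real current value


def intensities_at(schedule: List[Activation], t_ms: int) -> Dict[str, float]:
    """Return the intensity of every muscle that is active at time t_ms."""
    active: Dict[str, float] = {}
    for a in schedule:
        if a.start_ms <= t_ms < a.start_ms + a.duration_ms:
            # If two entries overlap on one muscle, keep the stronger one.
            active[a.muscle] = max(active.get(a.muscle, 0.0), a.intensity)
    return active


def within_budget(stage_latencies_ms: Dict[str, float], budget_ms: float) -> bool:
    """Check that the summed per-stage latency fits the loop budget."""
    return sum(stage_latencies_ms.values()) <= budget_ms


# Hypothetical "grip, then rotate" movement: the grip starts first and the
# wrist rotation begins while the grip is still held.
GRIP_THEN_ROTATE = [
    Activation("finger_flexors", start_ms=0, duration_ms=400, intensity=0.6),
    Activation("wrist_supinator", start_ms=150, duration_ms=250, intensity=0.4),
]

print(intensities_at(GRIP_THEN_ROTATE, 100))
print(intensities_at(GRIP_THEN_ROTATE, 200))
print(within_budget({"capture": 10, "vision": 60, "plan": 20, "stim": 5}, 100))
```

Expressing the movement as data rather than as hard-coded delays makes the activation order and overlap easy to inspect, and the budget check shows where a slow vision stage would break the real-time requirement.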

Why This Matters

The First Step Toward “Downloading Physical Skills”

This system is an early demonstration that physical skills can be encoded and transmitted like software.

- An experienced surgeon’s operations can be recorded as AI training data

- A novice can “download” these skills through the wearable system, with AI guiding their hands through precise movements

- In the future, it may be possible to watch a tutorial video and have your muscles automatically execute the movements

Application Scenarios

| Scenario | Value |
|---|---|
| Medical training | Intern doctors can practice surgical movements under AI guidance, reducing training risk |
| Industrial operations | New workers quickly master precision assembly skills |
| Rehabilitation | Stroke patients recover hand movement function through AI guidance |
| Sports training | Athletes precisely reproduce standard movements |

Connection to AI Agents

This system is essentially an Embodied AI Agent. It not only “thinks” but also “executes” through physical interfaces. This complements current AI agent frameworks (like Hermes Agent, OpenClaw):

- Software Agents: Autonomously execute tasks in the digital world

- Embodied Agents: Guide humans to execute tasks in the physical world

Limitations and Controversies

| Issue | Description |
|---|---|
| Safety | Electrical pulse intensity and frequency must be strictly controlled to avoid muscle damage |
| Ethics | Where is the boundary of “controlling human movement”? Could it be abused? |
| Generalization | Currently limited to hands and wrists; full-body movement guidance requires more complex systems |
| Individual differences | Different people have different muscle electrophysiological characteristics, requiring personalized calibration |
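The safety and individual-differences rows suggest two software guards: a per-user calibration range and a hard ceiling that no command can exceed. The sketch below shows one way these might combine. `UserCalibration`, `command_to_current`, and every milliamp figure are hypothetical placeholders chosen for illustration; they are not clinical values and not details of the MIT system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserCalibration:
    motor_threshold_ma: float  # lowest current that visibly contracts the muscle
    comfort_max_ma: float      # highest current this user tolerates


# Placeholder device-wide ceiling; a real device would take this from its
# safety specification, not from this sketch.
HARD_LIMIT_MA = 20.0


def command_to_current(
    level: float,
    cal: UserCalibration,
    hard_limit_ma: float = HARD_LIMIT_MA,
) -> float:
    """Map a normalized command (0..1) to a current inside the user's range."""
    if cal.motor_threshold_ma >= cal.comfort_max_ma:
        raise ValueError("calibration range is empty or inverted")
    level = min(max(level, 0.0), 1.0)   # clamp out-of-range commands
    if level == 0.0:
        return 0.0                      # zero command means no stimulation
    span = cal.comfort_max_ma - cal.motor_threshold_ma
    current = cal.motor_threshold_ma + level * span
    return min(current, hard_limit_ma)  # never exceed the device ceiling


cal = UserCalibration(motor_threshold_ma=4.0, comfort_max_ma=12.0)
print(command_to_current(0.5, cal))
print(command_to_current(2.0, cal))
```

Keeping the AI's output in normalized units and converting to current only at the last step means a planning error can at worst request the user's calibrated maximum, not an arbitrary value.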

Landscape Assessment

The MIT team built this system in just 48 hours, demonstrating that the foundational technology components are mature—cameras, AI vision models, and neuromuscular electrical stimulation are all existing technologies. The real innovation lies in combining these components into a closed-loop system.

This means similar products could move from the lab to consumer markets within 1-2 years.

Action Recommendations

- Medical/rehabilitation professionals: Follow this technology’s development; commercial products may appear in 2-3 years

- AI researchers: Embodied AI is the next hotspot; vision-to-motion mapping is the core technology

- General users: In the short term, pure software-based motion guidance applications (like AI fitness coaches) can serve as alternatives to wearable systems