Key Signal

Alibaba’s Tongyi Qianwen Qwen 3.6 Max Preview has quietly landed on OpenRouter. This is Alibaba’s largest model to date — one trillion parameters built on a sparse Mixture-of-Experts (MoE) architecture, yet priced far below competing models at the same tier.

| Dimension | Qwen 3.6 Max Preview | Claude Opus 4.6 | GPT-5.5 |
|---|---|---|---|
| Parameter Scale | 1 trillion (sparse activation) | Undisclosed | Undisclosed |
| Context Window (tokens) | 262K | 1M | 200K |
| Input Pricing | $1.30/M tokens | $15.00/M tokens | $10.00/M tokens |
| Output Pricing | $7.80/M tokens | $75.00/M tokens | $50.00/M tokens |
| Optimization Focus | Agentic Coding, Tool Use | General Reasoning | Agentic Capabilities |
| Open Weights | No | No | No |

The price gap speaks for itself: Qwen 3.6 Max Preview's input price is less than 1/11 of Claude Opus 4.6's ($1.30 vs. $15.00), and its output price is roughly 1/10 ($7.80 vs. $75.00).
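To make the ratios concrete, here is a small calculator using only the list prices from the table. The workload size (100K input tokens, 5K output tokens) is a made-up example, and the sketch ignores prompt caching, batch discounts, or any provider-specific pricing tiers.

```python
# Per-million-token list prices (USD), as quoted in the comparison table.
PRICES = {
    "Qwen 3.6 Max Preview": {"input": 1.30, "output": 7.80},
    "Claude Opus 4.6": {"input": 15.00, "output": 75.00},
    "GPT-5.5": {"input": 10.00, "output": 50.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request at list price (no caching or discounts)."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def price_ratio(cheap: str, expensive: str, kind: str) -> float:
    """How many times more `expensive` costs than `cheap` for 'input' or 'output'."""
    return PRICES[expensive][kind] / PRICES[cheap][kind]


if __name__ == "__main__":
    q, o = "Qwen 3.6 Max Preview", "Claude Opus 4.6"
    print(f"input ratio:  {price_ratio(q, o, 'input'):.2f}x")   # → 11.54x
    print(f"output ratio: {price_ratio(q, o, 'output'):.2f}x")  # → 9.62x
    # Hypothetical workload: 100K input tokens, 5K output tokens.
    for m in PRICES:
        print(f"{m}: ${request_cost(m, 100_000, 5_000):.4f}")
```

For this workload the request costs about $0.17 on Qwen 3.6 Max Preview versus about $1.88 on Claude Opus 4.6 and $1.25 on GPT-5.5.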

Architecture Breakdown

Core technical features of Qwen 3.6 Max Preview:

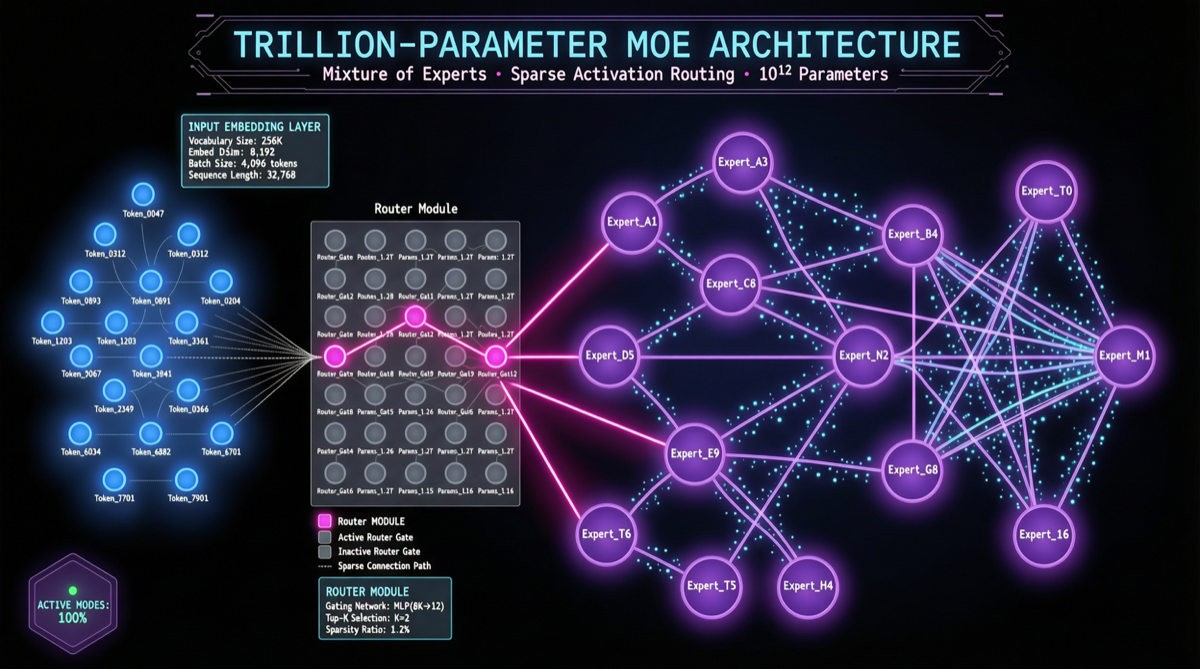

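- 1T Parameter Sparse MoE: Total parameters reach 1T, but only a subset of experts is activated for each token. Per-token compute therefore scales with the activated parameters rather than the full 1T, so inference can be substantially cheaper and faster than a dense trillion-parameter model on equivalent hardware (all weights must still be held in memory).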

- 262K Context Window: While not matching Claude’s 1M or Gemini’s 2M, it is sufficient for most codebase-level tasks.

- Agentic Coding Specialization: Fine-tuned specifically for code generation, multi-step tool calling, and autonomous debugging. Performance on Terminal-Bench and SWE-bench benchmarks is worth watching.
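The sparse-activation point can be illustrated with a generic top-k Mixture-of-Experts layer. Alibaba has not disclosed Qwen 3.6 Max Preview's expert count, how many experts fire per token, or its router design in the material above, so this is a textbook-style sketch with made-up sizes (8 experts, k=2), not the model's actual implementation. The router scores every expert, but only the top k are evaluated, and their outputs are mixed with renormalized gate weights.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def moe_forward(x, gate_weights, experts, k=2):
    """Route vector x to the top-k experts and return (output, chosen indices).

    gate_weights: one router weight vector per expert.
    experts: callables mapping a vector to a vector of the same size.
    Only the k selected experts are called; the others cost nothing here.
    """
    # Router logits: dot product of x with each expert's gate vector.
    logits = [sum(wi * xi for wi, xi in zip(w, x)) for w in gate_weights]
    # Indices of the k highest-scoring experts.
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Renormalize gate scores over the selected experts only.
    weights = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for w, i in zip(weights, top):
        y = experts[i](x)  # only selected experts are evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, top


def make_expert(scale, calls, idx):
    """Toy expert: scales its input and records that it was called."""
    def expert(v):
        calls.append(idx)
        return [scale * vi for vi in v]
    return expert


if __name__ == "__main__":
    random.seed(0)
    num_experts, dim = 8, 4
    calls = []
    experts = [make_expert(i + 1.0, calls, i) for i in range(num_experts)]
    gate = [[random.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]
    y, chosen = moe_forward([0.5, -1.0, 0.25, 2.0], gate, experts, k=2)
    print("experts chosen:", chosen)
    print("experts actually run:", len(calls), "of", num_experts)  # → 2 of 8
```

In a real MoE transformer the experts are feed-forward blocks and routing happens per token in every MoE layer, which is why compute tracks the activated parameters while memory still has to hold all of them.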

Why It Matters

- Cost Advantage: For agent workloads with heavy token consumption (long-context code analysis, multi-round tool calls), Qwen 3.6 Max Preview's lower per-token price compounds across every round, so the savings grow with session length.

- Alibaba Ecosystem Integration: Expected to be deployed first on Alibaba Cloud’s Bailian platform and Tongyi Lingma, giving Chinese developers rapid access.

- Open Source Follow-up: Qwen3.6-27B was open-sourced earlier and received positive community feedback. The Max version is not open-sourced, but it signals Alibaba's continued investment in frontier models.
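Why token-heavy agent sessions amplify the price gap: if an agent re-sends its whole history every round, billed input grows with each tool call. The sketch below assumes exactly that (no prompt caching, no history truncation) and uses a hypothetical session of 20 rounds starting from 50K tokens of context, with 1K output tokens and 3K tool-result tokens per round. Prices are the list prices from the table.

```python
# Per-million-token list prices (USD): (input, output), from the comparison table.
PRICES = {
    "Qwen 3.6 Max Preview": (1.30, 7.80),
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.5": (10.00, 50.00),
}


def agent_session_cost(model, base_context, rounds, tool_result_tokens, output_tokens):
    """Return (usd_cost, total_input_tokens, total_output_tokens) for one session.

    Assumption: every round re-sends the full history (initial context plus all
    earlier model outputs and tool results). No caching or discounts modeled.
    """
    in_price, out_price = PRICES[model]
    history = base_context
    total_in = total_out = 0
    for _ in range(rounds):
        total_in += history          # the whole history is billed as input
        total_out += output_tokens   # model emits a tool call or answer
        history += output_tokens + tool_result_tokens  # history grows
    cost = (total_in * in_price + total_out * out_price) / 1_000_000
    return cost, total_in, total_out


if __name__ == "__main__":
    for m in PRICES:
        cost, tin, tout = agent_session_cost(m, 50_000, 20, 3_000, 1_000)
        print(f"{m}: {tin:,} in / {tout:,} out -> ${cost:.2f}")
```

Under these assumptions the session bills 1,760,000 input tokens and 20,000 output tokens: about $2.44 on Qwen 3.6 Max Preview, $27.90 on Claude Opus 4.6, and $18.60 on GPT-5.5. Prompt caching, where a provider offers it, would narrow these numbers.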

Action Recommendations

- Existing Claude/GPT subscribers: Try Qwen 3.6 Max Preview on OpenRouter as the primary model for cost-sensitive tasks, reserving Opus/GPT-5.5 for high-difficulty reasoning.

- Chinese developers: Watch for Bailian platform integration — expect more favorable enterprise pricing.

- Agent developers: The 262K context + low pricing combination is ideal for autonomous coding agents that need long-context codebase analysis.
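The routing advice above (cheap model by default, premium model for hard reasoning) can be combined with a context-size check. This is a minimal sketch under several assumptions: the model slugs are hypothetical placeholders (check OpenRouter's model list for the real IDs), token counts use a crude 4-characters-per-token heuristic, and 262K is treated as 262,000 tokens to stay conservative. Claude Opus 4.6 is used as the fallback because, per the table, it is the only option whose window (1M) exceeds Qwen's; GPT-5.5's listed 200K would not help with oversized inputs.

```python
import json

# Hypothetical OpenRouter model slugs (placeholders, not confirmed IDs).
CHEAP_MODEL = "qwen/qwen3.6-max-preview"
PREMIUM_MODEL = "anthropic/claude-opus-4.6"

# Context limits in tokens, from the comparison table (262K taken as 262,000).
CONTEXT_LIMITS = {CHEAP_MODEL: 262_000, PREMIUM_MODEL: 1_000_000}


def estimate_tokens(text: str) -> int:
    """Crude estimate: roughly 4 characters per token for English text and code."""
    return len(text) // 4 + 1


def choose_model(prompt: str, hard_reasoning: bool, reserve_output: int = 8_000) -> str:
    """Default to the cheap model; escalate for hard tasks or oversized context."""
    needed = estimate_tokens(prompt) + reserve_output
    if needed > CONTEXT_LIMITS[PREMIUM_MODEL]:
        raise ValueError(f"~{needed} tokens exceeds every configured context window")
    if hard_reasoning or needed > CONTEXT_LIMITS[CHEAP_MODEL]:
        return PREMIUM_MODEL
    return CHEAP_MODEL


def build_request(prompt: str, hard_reasoning: bool = False) -> dict:
    """Build an OpenAI-compatible chat-completions body, as OpenRouter accepts."""
    return {
        "model": choose_model(prompt, hard_reasoning),
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    small = build_request("Rename variable `cnt` to `count` in utils.py")
    big = build_request("x" * 1_200_000)  # ~300K estimated tokens: over 262K
    hard = build_request("Prove this lock-free queue is linearizable", hard_reasoning=True)
    for req in (small, big, hard):
        print(req["model"])
    print(json.dumps(small, indent=2))
```

The resulting body would be POSTed to `https://openrouter.ai/api/v1/chat/completions` with an `Authorization: Bearer <key>` header. For production use, replace the character heuristic with the provider's actual token count.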

Risk Notes

- Currently a Preview version; stability remains to be verified

- No open weights — local deployment is not possible

- Actual performance in Chinese-language contexts still needs community feedback