Это русская версия материала. Для полноты языковых маршрутов текст основан на существующей основной версии.

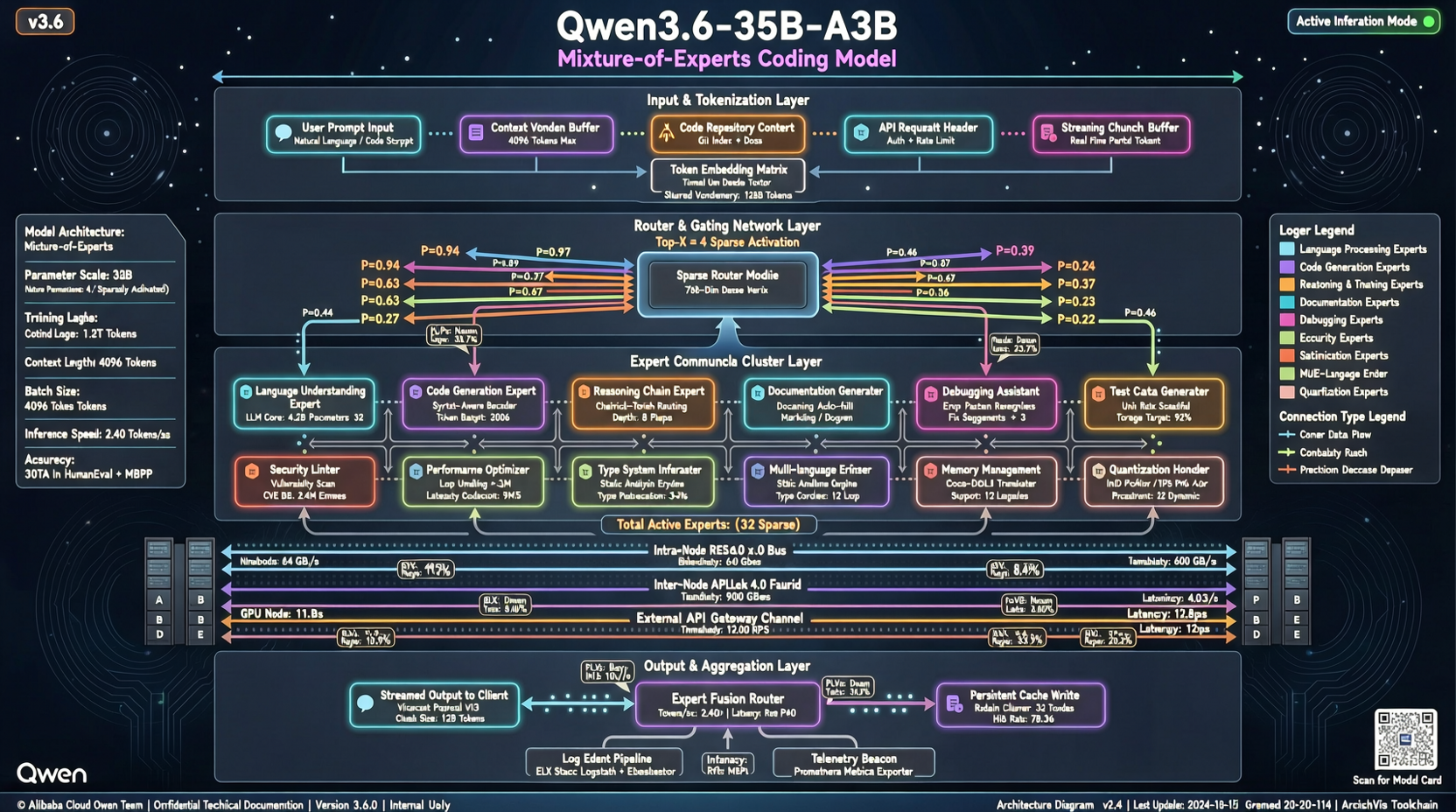

The Qwen team hid an interesting model inside the Qwen 3.6 line: 35B-A3B. It has 35B total parameters, but each token activates only about 3.6B.

Community coding tests are already making noise: on repository-level coding tasks, this MoE model with roughly 3B active parameters is reportedly approaching the level of a 397B dense model.

The Numbers

Core Qwen3.6-35B-A3B configuration:

- Total parameters: 35B

- Active parameters: 3.6B, roughly 10% per inference step

- Architecture: sparse Mixture of Experts, similar in spirit to the Mixtral route

- Deployment: available through AWS JumpStart

Compared with Qwen3.6-27B dense, the 27B model activates all parameters and performs well on coding, but latency and inference cost are much higher.

The point of 35B-A3B is simple: spend about one tenth of the compute and get close to full-size coding performance.

MoE's Cost-Performance Moment

MoE is not new. Mixtral 8x7B proved the direction in late 2023. But Qwen3.6-35B-A3B pushes the practical side further:

- 3.6B active parameters can fit into consumer-GPU territory

- Repository-level coding ability gets close to what previously required far larger dense models

- AWS JumpStart deployment means teams can call it without managing GPUs themselves

For independent developers and small teams, that is a useful combination. You do not need to rent an H100 to run a coding-capable local agent.

The Trade-Offs

Community tests also show the usual MoE weaknesses:

- Routing instability: edge cases can route to the wrong experts and drop quality

- Long-context decay: very long contexts are less stable than dense models

- Harder fine-tuning: LoRA and QLoRA support for MoE is still less mature

These are not fatal, but they do mean MoE is currently better for inference-heavy use than heavy domain adaptation.

Alibaba Cloud's Bet

The Qwen 3.6 lineup is fairly clear: 35B MoE targets the cost-performance middle, 27B dense carries the quality benchmark, smaller models cover edge devices, and Max Preview guards the flagship API tier.

Putting 35B-A3B on AWS JumpStart is also a signal: Qwen is trying to enter the daily workflow of global developers, not just remain a "Chinese model" people test out of curiosity.

The next model worth watching is Qwen 3.6 8B dense. If that gets close to today's 27B coding level, edge deployment gets much more interesting.

Main sources:

- Qwen Hugging Face page

- Community coding test threads

- AWS JumpStart model catalog