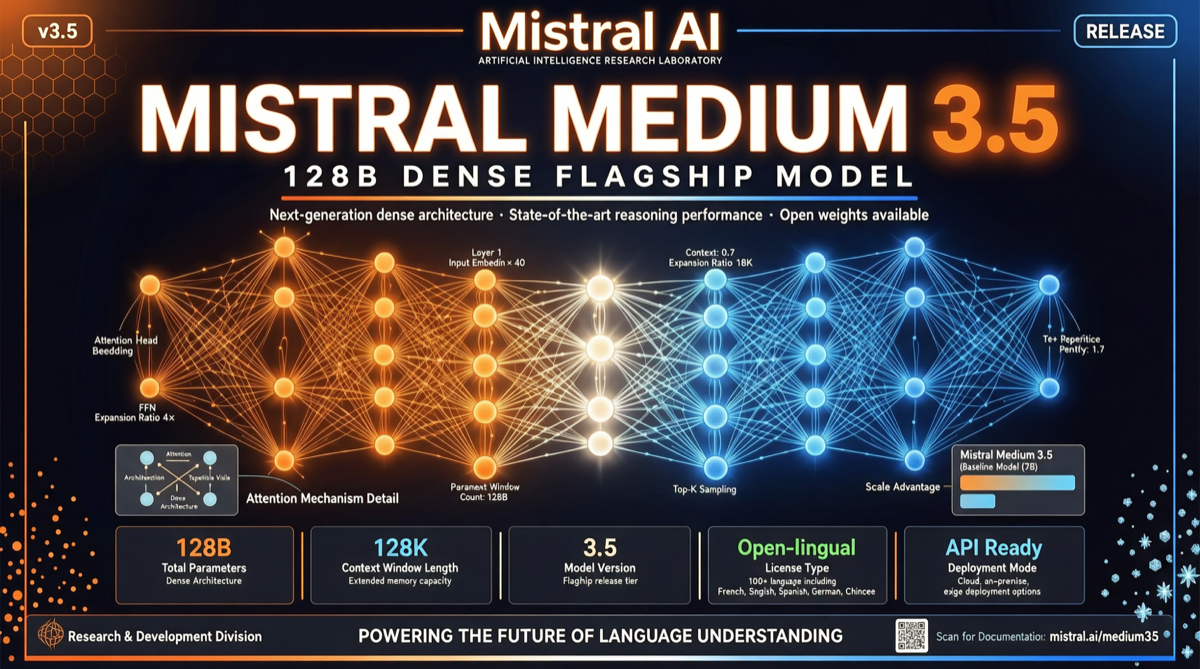

On April 29, 2026, Mistral released Mistral Medium 3.5, a 128B-parameter dense flagship model that combines text and vision understanding. It is Mistral’s latest move in the mid-sized dense model segment and a significant play by a European AI company in the 2026 model competition.

Core Specifications

Medium 3.5 key parameters:

| Metric | Value |
|---|---|
| Parameters | 128B (dense) |
| Context Window | 256K tokens |
| Modality | Text + Vision |
| Reasoning Mode | Configurable reasoning depth |
| SWE-bench Verified | 77.6% |
| τ³ Telecom | 91.4 |
| Local Run Requirement | ~64GB RAM |

Medium 3.5 scores 91.4 on the τ³ Telecom benchmark and 77.6% on SWE-bench Verified, results that place it in the same tier as models with about 6x its parameter count.

Configurable Reasoning: Balancing Efficiency and Precision

The most noteworthy design choice in Medium 3.5 is configurable reasoning depth. Users specify reasoning intensity at call time: simple tasks get fast responses, while complex tasks trigger deeper reasoning. A single model can therefore cover both low-latency and high-precision scenarios, which reduces the operational cost of deploying separate models for different tasks.
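As a rough illustration of choosing reasoning depth per call, the sketch below routes a task to a depth level and builds a chat-style request payload. The field name `reasoning_effort`, the level names, the model identifier `mistral-medium-3.5`, and the routing heuristic are all assumptions made for illustration; none of them is taken from Mistral's API documentation, so check the official reference before relying on any of them.

```python
from dataclasses import dataclass

# Hypothetical depth levels; the real API may use different names or values.
DEPTH_LEVELS = ("low", "medium", "high")


@dataclass(frozen=True)
class Task:
    prompt: str
    latency_sensitive: bool = False
    multi_step: bool = False


def choose_depth(task: Task) -> str:
    """Pick a reasoning depth using a simple, illustrative heuristic."""
    if task.multi_step:
        return "high"    # complex work gets deeper reasoning
    if task.latency_sensitive:
        return "low"     # simple, time-critical work gets a fast response
    return "medium"


def build_request(task: Task, model: str = "mistral-medium-3.5") -> dict:
    """Build a chat-style payload; `reasoning_effort` is an assumed field name."""
    return {
        "model": model,  # hypothetical model identifier
        "messages": [{"role": "user", "content": task.prompt}],
        "reasoning_effort": choose_depth(task),  # assumed parameter
    }
```

Keeping the routing decision in one small function means the same model endpoint can serve both kinds of traffic, and the heuristic can be tuned without touching the calling code.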

Competitive Landscape

In the mid-sized segment, Medium 3.5’s competitors include Qwen3.6-27B (smaller but highly efficient) and IBM Granite 4.1-30B (released the same day). According to community feedback, Medium 3.5 outperforms Granite 4.1 in coding and vision comprehension, but Qwen3.6-27B still leads on some vertical benchmarks.

A notable difference is the license: Mistral Medium 3.5 uses the Mistral Research License, while IBM Granite 4.1 uses Apache 2.0. For commercial deployment, Apache 2.0 has fewer restrictions.

Local Deployment Feasibility

Medium 3.5 can run locally in GGUF format on machines with ~64GB RAM, which makes it a viable option for teams with data privacy requirements. Combined with the NVIDIA NIM inference service, enterprises can deploy on their own hardware without relying on external APIs.
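To put the ~64GB figure in context, the following back-of-the-envelope sketch estimates the memory needed for the weights alone at several precisions. The bits-per-weight values are assumptions, since the quantization level behind the ~64GB figure is not stated here, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Assumed precisions for illustration: 16-bit, 8-bit, and 4-bit weights.
for bits in (16, 8, 4):
    print(f"{bits}-bit: ~{weight_memory_gb(128, bits):.0f} GB")
# 16-bit: ~256 GB
# 8-bit: ~128 GB
# 4-bit: ~64 GB
```

Under these assumptions, the ~64GB requirement is consistent with roughly 4 bits per weight, and long contexts would add further memory for the KV cache on top of the weights.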

Landscape Assessment

Medium 3.5’s release signals that European AI companies are shifting from “catching up” to “competing through differentiation”: they are focusing on the efficiency and deployability of mid-sized dense models rather than chasing the largest parameter counts. This is consistent with Mistral’s long-standing “efficient open source” approach.

Developers who need a locally runnable model with both vision understanding and code repair capabilities should find Medium 3.5 worth testing.
