What Happened

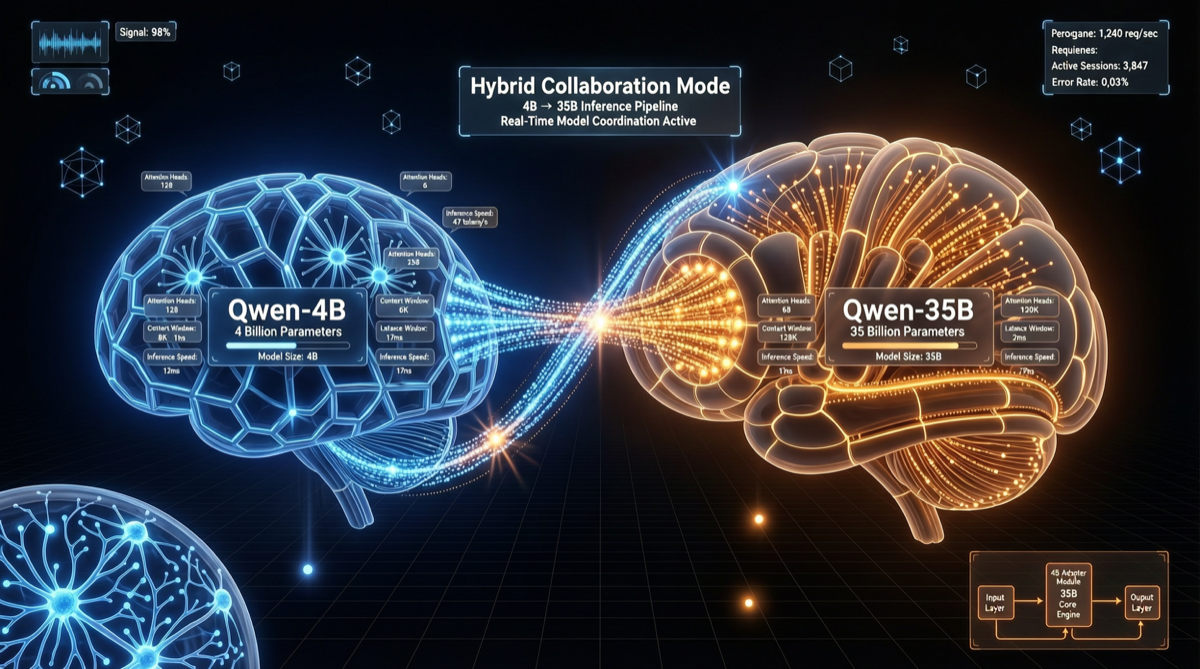

In early May 2026, the Qwen team released a hybrid inference architecture that couples a 4B-parameter small model with a 35B-parameter large model through a novel solver and auxiliary training.

This is not simple model distillation or knowledge transfer, but a dual-brain collaborative system: both models participate in the reasoning process simultaneously, each contributing different levels of understanding.

Architecture Breakdown

Why 4B + 35B?

| Role | Model | Responsibility | Parameters |
|---|---|---|---|
| Fast Thinker | Qwen-4B | Pattern recognition, common-sense reasoning, rapid filtering | 4B |
| Deep Analyzer | Qwen-35B | Complex logic, long-range reasoning, precise verification | 35B |

This division of labor mimics human “intuition → deliberation” dual-system thinking (Daniel Kahneman’s System 1 / System 2):

- System 1 (4B model): Rapidly provides an initial judgment and filters out obviously irrelevant paths

- System 2 (35B model): Deeply validates and refines System 1’s candidate solutions

The Role of the Novel Solver

Traditional hybrid methods (cascade, early-exit) are sequential: run the small model first, then fall back to the large model only if the small model's output is unsatisfactory.
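For contrast with what follows, a sequential cascade can be sketched in a few lines. This is a minimal illustration, not code from Qwen: the `Model` interface (a callable returning an answer and a confidence score), the `threshold` parameter, and the two toy models are all assumptions made for the example.

```python
from typing import Callable, Tuple

# Hypothetical model interface: prompt in, (answer, confidence in [0, 1]) out.
Model = Callable[[str], Tuple[str, float]]


def cascade(prompt: str, small: Model, large: Model,
            threshold: float = 0.8) -> Tuple[str, str]:
    """Sequential cascade: call the large model only if the small one is unsure.

    Returns the chosen answer and the name of the model that produced it.
    """
    answer, confidence = small(prompt)
    if confidence >= threshold:
        return answer, "small"
    # The small model was not confident enough, so pay for the large model.
    answer, _ = large(prompt)
    return answer, "large"


# Stand-in models for illustration only; real models would run inference here.
def toy_small(prompt: str) -> Tuple[str, float]:
    # Pretend that short prompts are easy and long prompts are hard.
    return "small-answer", 0.9 if len(prompt) < 20 else 0.3


def toy_large(prompt: str) -> Tuple[str, float]:
    return "large-answer", 0.95
```

The key property is that the large model never sees the input unless the small model gives up, so the two models never share intermediate state.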

Qwen’s new solver achieves true parallel collaboration:

- Both models process the same input simultaneously

- The solver exchanges information and fuses attention at intermediate layers

- Auxiliary training ensures the two models’ representation spaces are aligned
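The three points above can be pictured with a toy sketch. It is not Qwen's solver, whose internals are not described here: the `layer` function stands in for a transformer block, `fuse` stands in for the information exchange, and the names `gate` and `fuse_every` are hypothetical. It also assumes both streams already share one hidden width, which is the role the text assigns to auxiliary training.

```python
from typing import List, Tuple

Vector = List[float]


def layer(h: Vector, scale: float) -> Vector:
    """Stand-in for one model block: a fixed affine map on the hidden state."""
    return [scale * x + 1.0 for x in h]


def fuse(h_small: Vector, h_large: Vector, gate: float) -> Tuple[Vector, Vector]:
    """Each stream receives a gated share of the other stream's state."""
    new_small = [(1 - gate) * s + gate * l for s, l in zip(h_small, h_large)]
    new_large = [(1 - gate) * l + gate * s for s, l in zip(h_small, h_large)]
    return new_small, new_large


def run_parallel(x: Vector, n_layers: int = 4, fuse_every: int = 2,
                 gate: float = 0.25) -> Tuple[Vector, Vector]:
    """Run both streams on the same input, exchanging state every few layers."""
    h_small, h_large = list(x), list(x)
    for i in range(1, n_layers + 1):
        h_small = layer(h_small, 0.5)   # "small" stream
        h_large = layer(h_large, 0.9)   # "large" stream
        if i % fuse_every == 0:
            h_small, h_large = fuse(h_small, h_large, gate)
    return h_small, h_large
```

With `gate=0.0` the two streams never influence each other, which recovers two independent models; any positive gate lets each stream's intermediate state shape the other's, which is the difference from a cascade.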

Performance

Based on preliminary community testing:

| Benchmark | Qwen-35B Alone | Hybrid (4B+35B) | Change |
|---|---|---|---|
| MATH | 78.2% | 81.6% | +3.4 pp |
| GSM8K | 91.3% | 93.1% | +1.8 pp |
| Code Generation (HumanEval) | 76.8% | 79.2% | +2.4 pp |
| Inference Latency (P50) | 2.1 s | 2.4 s | +14% (relative) |

Key trade-off: The hybrid adds approximately 14% latency but gains roughly 2 to 3 percentage points on math and coding tasks that require deep reasoning. For scenarios that do not demand ultra-low latency, this can be a cost-effective trade-off.
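The arithmetic behind this trade-off can be checked directly from the table. The sketch below uses only the reported figures; the helper names are made up for the example, and the "error reduction" view (how much of the baseline's remaining error the hybrid removes) is an extra way of reading the same numbers, not a metric from the source.

```python
# Scores copied from the table above: (Qwen-35B alone, hybrid 4B+35B), in percent.
SCORES = {
    "MATH": (78.2, 81.6),
    "GSM8K": (91.3, 93.1),
    "HumanEval": (76.8, 79.2),
}
LATENCY_P50_S = (2.1, 2.4)  # seconds: (35B alone, hybrid)


def gain_pp(base: float, hybrid: float) -> float:
    """Absolute gain in percentage points."""
    return round(hybrid - base, 1)


def error_reduction_pct(base: float, hybrid: float) -> float:
    """Share of the baseline's remaining errors that the hybrid removes."""
    return round((hybrid - base) / (100.0 - base) * 100.0, 1)


def relative_change_pct(base: float, new: float) -> float:
    """Relative change of `new` against `base`, in percent."""
    return round((new - base) / base * 100.0, 1)


if __name__ == "__main__":
    for name, (base, hybrid) in SCORES.items():
        print(f"{name}: +{gain_pp(base, hybrid)} pp, "
              f"{error_reduction_pct(base, hybrid)}% fewer errors")
    print(f"P50 latency: +{relative_change_pct(*LATENCY_P50_S)}%")
```

On these figures the latency increase works out to about 14.3%, and the 3.4-point MATH gain corresponds to removing roughly 15.6% of the baseline's errors.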

Why This Matters

1. Breaking the “Bigger is Better” Intuition

The industry’s long-held reading of scaling laws has been “more parameters, more capability.” This architecture suggests a different path:

Smart architecture design can achieve stronger results with fewer parameters.

A 39B-parameter hybrid system (35B + 4B) already approaches the performance of 70B+ single models on reasoning tasks.

2. Architectural Innovation from the Open-Source Community

This is not just parameter stacking, but architectural-level innovation. For teams that cannot afford hundred-billion-parameter models, this hybrid approach provides a new optimization direction.

3. Completing the Qwen 3.6 Product Matrix

The Qwen 3.6 series now has three clear product lines:

| Product | Architecture | Positioning |
|---|---|---|
| Qwen 3.6 Max Preview | 1T MoE (closed API) | Flagship capability |
| Qwen 3.6-27B | Dense (open source) | Single-card deployment |
| Qwen 3.6 Hybrid (4B+35B) | Dual-brain collaboration (open source) | Reasoning enhancement |

Action Recommendations

- If your scenario focuses on math/logic reasoning: The hybrid architecture is worth trying, since a gain of 2 to 3 percentage points matters in competition and research settings

- If you prioritize low latency: The 27B dense version is more suitable

- If you are building Agent systems: Use the hybrid architecture as the planner layer and the 27B as the executor layer for a more powerful reasoning pipeline
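The planner/executor split in the last recommendation might look like the sketch below. It shows only the control flow: `Planner`, `Executor`, `run_agent`, and the toy functions are hypothetical names, and in a real system the planner callable would wrap the hybrid model and the executor callable would wrap the 27B model.

```python
from typing import Callable, List

# Hypothetical interfaces: any callables with these shapes will do.
Planner = Callable[[str], List[str]]   # task -> ordered list of steps
Executor = Callable[[str], str]        # one step -> result of that step


def run_agent(task: str, plan: Planner, execute: Executor) -> List[str]:
    """Ask the planner for steps once, then run each step through the executor."""
    steps = plan(task)
    return [execute(step) for step in steps]


# Toy stand-ins so the sketch runs without any model.
def toy_planner(task: str) -> List[str]:
    return [part.strip() for part in task.split(" then ") if part.strip()]


def toy_executor(step: str) -> str:
    return f"done: {step}"
```

Keeping the two roles behind plain callables means the slower reasoning model is invoked once per task, while the lower-latency model handles the per-step calls.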

Sources

- QwenLM official tweet (2026-05-02)

- Qwen Blog: qwenlm.github.io

- Community benchmark summary