An Overlooked Recommendation

If you’re running AI models locally, or considering doing so, here’s one piece of advice that matters even more than model selection: carefully choose your agentic harness.

This isn’t an academic opinion; it’s a conclusion drawn from extensive practical experience. Developers frequently report that their local models are “dumb,” “broken,” or “not as good as cloud models.” In most of these cases, though, the problem isn’t the model but the agentic framework they’re using.

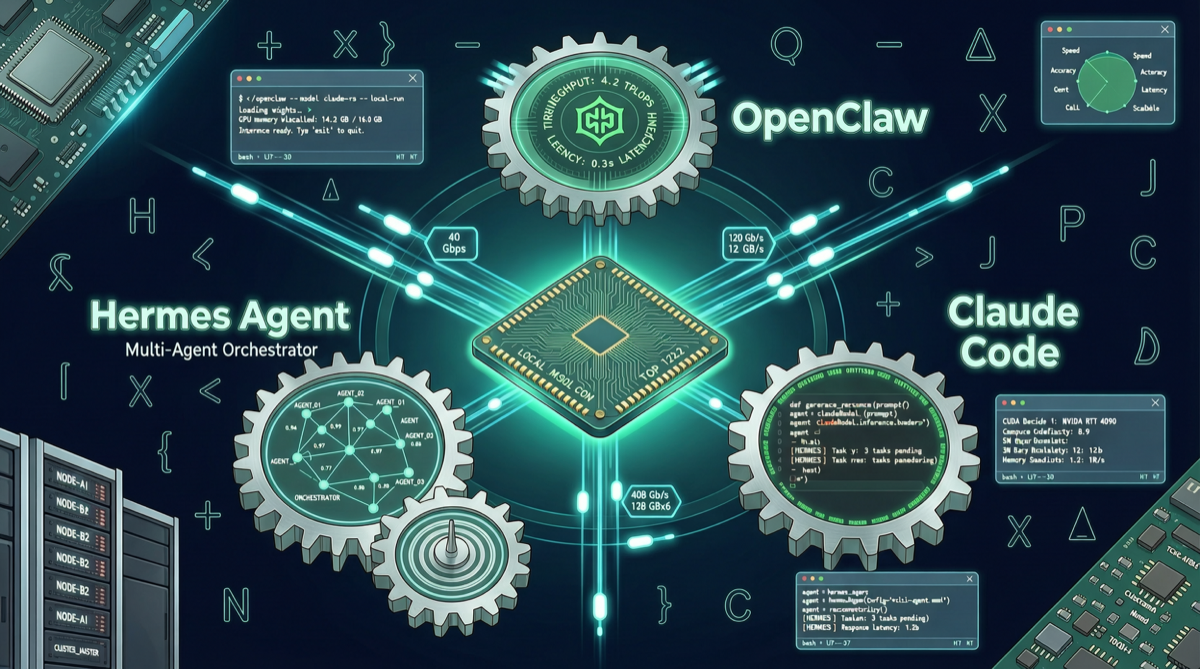

When someone switches their framework from OpenClaw to Claude Code (or vice versa), the same model’s performance can differ dramatically. This isn’t mysticism — it’s the systematic result of differences in framework design philosophy.

What Is an Agentic Framework?

Simply put, an agentic framework is the “operating system” between the model and the execution environment. It determines:

- Context management: How much history the model can see, and how memory is compressed and retrieved

- Tool call orchestration: How the system decides when to call which tool, and how to handle tool return results

- Task decomposition strategy: How to plan execution steps when facing complex tasks

- Error recovery mechanisms: How to back off and retry when tool calls fail

- Security boundaries: Which operations are allowed, and which require human confirmation
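The responsibilities listed above can be made concrete with a small sketch. This is a minimal illustration under stated assumptions, not how Claude Code, OpenClaw, or Hermes Agent actually work: the `Harness` class, its fields, and the `plan` argument (a stand-in for the steps a model would propose) are all hypothetical names invented for this example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical types and names, for illustration only.
Tool = Callable[[str], str]


@dataclass
class Harness:
    tools: dict[str, Tool]
    needs_confirmation: set[str] = field(default_factory=set)  # security boundary
    max_history: int = 6     # context management: entries the model gets to see
    max_retries: int = 2     # error recovery: extra attempts per tool call
    backoff_s: float = 0.01  # base delay for exponential backoff
    history: list[str] = field(default_factory=list)

    def remember(self, entry: str) -> None:
        """Append an entry, folding the oldest ones into a one-line summary."""
        self.history.append(entry)
        if len(self.history) > self.max_history:
            dropped = len(self.history) - self.max_history + 1
            # A real harness would summarize with a model; this is a placeholder.
            self.history = ["[summary of earlier turns]"] + self.history[dropped:]

    def call_tool(self, name: str, arg: str, confirm: Callable[[str], bool]) -> str:
        """Dispatch one tool call with a confirmation gate and retries."""
        if name not in self.tools:
            return f"error: unknown tool {name!r}"
        if name in self.needs_confirmation and not confirm(name):
            return f"denied: {name} requires human confirmation"
        for attempt in range(self.max_retries + 1):
            try:
                return self.tools[name](arg)
            except Exception as exc:
                if attempt == self.max_retries:
                    return f"error: {name} failed after {attempt + 1} attempts: {exc}"
                time.sleep(self.backoff_s * 2 ** attempt)  # back off, then retry
        return f"error: {name} was not attempted"

    def run(self, plan: list[tuple[str, str]],
            confirm: Callable[[str], bool] = lambda name: False) -> list[str]:
        """Execute planned (tool, argument) steps and record each result."""
        for name, arg in plan:
            result = self.call_tool(name, arg, confirm)
            self.remember(f"{name}({arg!r}) -> {result}")
        return self.history
```

Even in a toy like this, every default (how many entries to keep, how often to retry, which tools need approval) changes what the model sees and what it is allowed to do, which is why the same model can behave so differently from one harness to the next.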

The model provides “intelligence,” the framework provides “methodology.” A smart model paired with a poor framework may perform like a mediocre one; a mid-level model paired with an excellent framework may outperform flagship models.

Three Mainstream Frameworks Compared

1. Claude Code (Anthropic)

Positioning: Enterprise-grade coding agent, deeply integrated with the Claude model ecosystem

Advantages:

- Extremely refined context management, supporting layered memory strategies

- Tool call orchestration optimized through extensive real-world development scenarios

- Deepest adaptation for Claude Opus/Sonnet series models

- Mature security mechanisms, well-designed code execution sandbox

Disadvantages:

- Tightly bound to Claude models; using other models requires an additional adaptation layer

- High resource consumption, not suitable for low-spec machines

- Closed source, limited customization capability

Use cases: Professional development teams, enterprise coding workflows, environments with high security requirements

2. OpenClaw

Positioning: Open-source, multi-model universal agent framework

Advantages:

- Native multi-model routing support, flexible switching between different models

- Deep optimization for cost-effective models like DeepSeek

- Active open-source ecosystem, rich community-contributed tool and skill libraries

- Lightweight design, runnable on consumer-grade hardware

Disadvantages:

- Context management strategy not as refined as Claude Code

- Strategic consistency in ultra-long tasks (dozens of steps or more) needs improvement

- Some advanced features still under development

Use cases: Individual developers, multi-model comparison experiments, budget-conscious coding scenarios

3. Hermes Agent

Positioning: Open-source agent platform for Agent-native workflows

Advantages:

- Native support for multi-agent parallel tasks

- Kanban-style task orchestration suitable for complex project management

- Active plugin ecosystem (ComfyUI creative workflows, desktop virtual workspaces, etc.)

- Community-driven model adaptation, good support for domestic models

Disadvantages:

- Less specialized than Claude Code in pure coding scenarios

- Relatively steep learning curve

- Some advanced features require self-configuration

Use cases: Multi-agent collaboration scenarios, creative workflows, complex projects requiring custom orchestration

The Harsh Reality of Price vs Performance

A noteworthy practical case: a developer who switched their entire workflow to DeepSeek V4 Pro had an excellent experience. The more critical data point:

DeepSeek costs only 1/40 as much as Claude Code, while its performance shows little difference from that of the non-Claude alternatives.

This leads to two important insights:

First, the framework matters more than the model. When model costs are compressed to extremely low levels, the framework’s design quality becomes the decisive factor in the overall experience. Using the best framework with a cheap model offers far better value than using a cheap framework with an expensive model.

Second, different frameworks have different “activation efficiency” for different models. The same DeepSeek V4 Pro performs excellently under Claude Code’s harness, does well under OpenClaw, but may perform dramatically worse under certain other frameworks. This isn’t a model problem — it’s the framework failing to fully unlock the model’s capabilities.
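A rough way to reason about both insights is cost per solved task: the price of one attempt divided by the fraction of attempts that succeed. The sketch below is arithmetic only. The unit price and the success rates are illustrative placeholders, not measurements; the only number taken from the text is the 1/40 price ratio, and `cost_per_solved_task` is a hypothetical helper.

```python
def cost_per_solved_task(price_per_attempt: float, success_rate: float) -> float:
    """Expected spend per successful task, assuming independent attempts."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return price_per_attempt / success_rate


# Placeholder inputs: an arbitrary unit price and made-up success rates.
BASELINE_PRICE = 1.0               # one attempt on the expensive option
CHEAP_PRICE = BASELINE_PRICE / 40  # the 1/40 ratio quoted above

scenarios = {
    "cheap model, well-matched harness": (CHEAP_PRICE, 0.80),
    "cheap model, poorly matched harness": (CHEAP_PRICE, 0.20),
}

for label, (price, rate) in scenarios.items():
    print(f"{label}: {cost_per_solved_task(price, rate):.5f} per solved task")
```

With the model price held fixed, the success rate that the harness achieves is the only term left to vary, so under these assumed rates the poorly matched harness costs four times as much per solved task. Plug in rates measured on your own tasks before drawing conclusions.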

How to Choose Your Harness?

Decision Matrix

| Your Need | Recommended Framework |
|---|---|
| Enterprise coding, ample budget | Claude Code |
| Individual developer, pursuing value | OpenClaw + DeepSeek |
| Multi-agent collaboration | Hermes Agent |
| Creative workflows | Hermes Agent |
| Model experiments/comparison | OpenClaw |
| Low-spec hardware | OpenClaw or Hermes Agent |

Practical Recommendations

- Don’t just look at model benchmarks. A score of 90 on MMLU doesn’t mean a model will perform well in your workflow. Test different framework + model combinations with your actual tasks.

- Focus on the framework’s context strategy. For long-horizon tasks, the framework’s context compression and retrieval capability matters more than the model’s token window size.

- Tool call quality determines everything. Whether the framework can correctly select tools, parse tool outputs, and gracefully back off on failure matters more for the actual experience than the model’s “intelligence.”

- Leave room for switching costs. Don’t put all your eggs in one basket. Familiarize yourself with at least two frameworks, so that when an update to one of them makes things worse, you have a backup.
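The first recommendation, testing framework + model combinations on your own tasks, can be sketched as a small evaluation grid. Everything here is an assumption made for illustration: `run_task` is a hypothetical adapter you would write yourself to launch a given harness with a given model on one task and report whether the result passed your own check. No real harness API is being described.

```python
from itertools import product
from typing import Callable

# Hypothetical adapter signature: (harness, model, task) -> passed?
RunTask = Callable[[str, str, str], bool]


def evaluate(harnesses: list[str], models: list[str], tasks: list[str],
             run_task: RunTask) -> dict[tuple[str, str], float]:
    """Return the pass rate of every (harness, model) pair over your tasks."""
    if not tasks:
        raise ValueError("need at least one task")
    results = {}
    for harness, model in product(harnesses, models):
        passed = sum(run_task(harness, model, task) for task in tasks)
        results[(harness, model)] = passed / len(tasks)
    return results


def rank(results: dict[tuple[str, str], float]) -> list[tuple[tuple[str, str], float]]:
    """Sort combinations from best to worst pass rate."""
    return sorted(results.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Stub runner so the sketch executes; replace it with real invocations.
    def stub_run(harness: str, model: str, task: str) -> bool:
        return harness == "harness_a" or task == "fix-failing-test"

    scores = evaluate(["harness_a", "harness_b"], ["local-model"],
                      ["fix-failing-test", "refactor-module"], stub_run)
    for (harness, model), rate in rank(scores):
        print(f"{harness} + {model}: {rate:.0%}")
```

Keeping the task list and the pass check fixed while varying only the harness and model is what makes the comparison meaningful. It also gives you a ready regression test for the last recommendation: rerun the grid after a framework update to see whether your backup has become the better choice.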

Future Outlook

In 2026, agent frameworks are undergoing rapid differentiation. On one hand, specialized tools like Claude Code are becoming increasingly strong in the coding domain; on the other, open-source frameworks like OpenClaw and Hermes Agent hold advantages in multi-model support and flexibility.

A trend worth watching: the co-evolution of frameworks and models is accelerating. Excellent framework teams adjust orchestration strategies based on models’ output characteristics, while model teams reference framework usage patterns to optimize training objectives. This bidirectional feedback means choosing a framework is no longer a one-time decision, but an ongoing optimization process.

For local AI users, the good news is: whichever framework you choose, the open-source ecosystem is advancing rapidly. The key is finding the one that best matches your workflow.